Sotirios K. Goudos

Enhancing 3D Object Detection in Autonomous Vehicles Based on Synthetic Virtual Environment Analysis

Dec 10, 2024

Abstract:Autonomous Vehicles (AVs) use natural images and videos as input to understand the real world by overlaying and inferring digital elements, facilitating proactive detection in an effort to assure safety. A crucial aspect of this process is real-time, accurate object recognition through automatic scene analysis. While traditional methods primarily concentrate on 2D object detection, exploring 3D object detection, which involves projecting 3D bounding boxes into the three-dimensional environment, holds significance and can be notably enhanced using the AR ecosystem. This study examines an AI model's ability to deduce 3D bounding boxes in the context of real-time scene analysis while producing and evaluating the model's performance and processing time, in the virtual domain, which is then applied to AVs. This work also employs a synthetic dataset that includes artificially generated images mimicking various environmental, lighting, and spatiotemporal states. This evaluation is oriented in handling images featuring objects in diverse weather conditions, captured with varying camera settings. These variations pose more challenging detection and recognition scenarios, which the outcomes of this work can help achieve competitive results under most of the tested conditions.

Evaluating the Efficacy of AI Techniques in Textual Anonymization: A Comparative Study

May 09, 2024Abstract:In the digital era, with escalating privacy concerns, it's imperative to devise robust strategies that protect private data while maintaining the intrinsic value of textual information. This research embarks on a comprehensive examination of text anonymisation methods, focusing on Conditional Random Fields (CRF), Long Short-Term Memory (LSTM), Embeddings from Language Models (ELMo), and the transformative capabilities of the Transformers architecture. Each model presents unique strengths since LSTM is modeling long-term dependencies, CRF captures dependencies among word sequences, ELMo delivers contextual word representations using deep bidirectional language models and Transformers introduce self-attention mechanisms that provide enhanced scalability. Our study is positioned as a comparative analysis of these models, emphasising their synergistic potential in addressing text anonymisation challenges. Preliminary results indicate that CRF, LSTM, and ELMo individually outperform traditional methods. The inclusion of Transformers, when compared alongside with the other models, offers a broader perspective on achieving optimal text anonymisation in contemporary settings.

Benchmarking Advanced Text Anonymisation Methods: A Comparative Study on Novel and Traditional Approaches

Apr 22, 2024Abstract:In the realm of data privacy, the ability to effectively anonymise text is paramount. With the proliferation of deep learning and, in particular, transformer architectures, there is a burgeoning interest in leveraging these advanced models for text anonymisation tasks. This paper presents a comprehensive benchmarking study comparing the performance of transformer-based models and Large Language Models(LLM) against traditional architectures for text anonymisation. Utilising the CoNLL-2003 dataset, known for its robustness and diversity, we evaluate several models. Our results showcase the strengths and weaknesses of each approach, offering a clear perspective on the efficacy of modern versus traditional methods. Notably, while modern models exhibit advanced capabilities in capturing con textual nuances, certain traditional architectures still keep high performance. This work aims to guide researchers in selecting the most suitable model for their anonymisation needs, while also shedding light on potential paths for future advancements in the field.

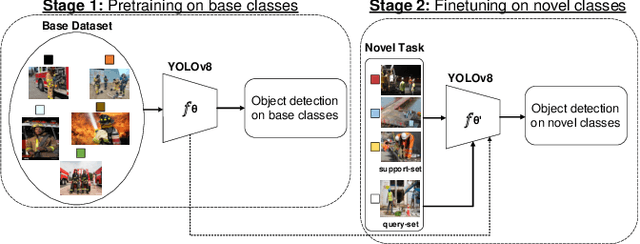

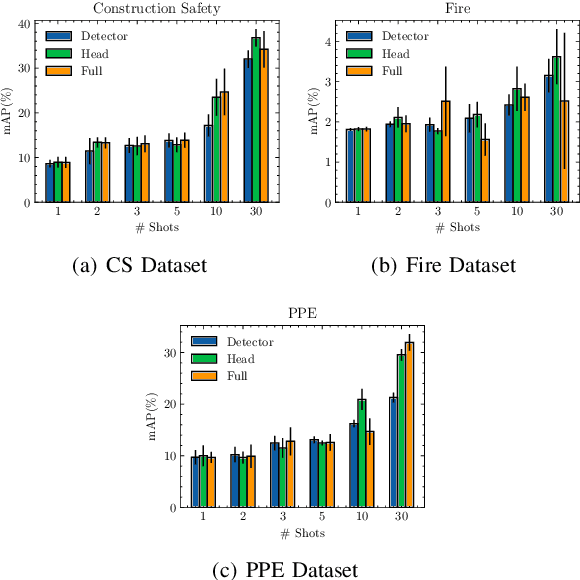

Evaluating the Energy Efficiency of Few-Shot Learning for Object Detection in Industrial Settings

Mar 11, 2024

Abstract:In the ever-evolving era of Artificial Intelligence (AI), model performance has constituted a key metric driving innovation, leading to an exponential growth in model size and complexity. However, sustainability and energy efficiency have been critical requirements during deployment in contemporary industrial settings, necessitating the use of data-efficient approaches such as few-shot learning. In this paper, to alleviate the burden of lengthy model training and minimize energy consumption, a finetuning approach to adapt standard object detection models to downstream tasks is examined. Subsequently, a thorough case study and evaluation of the energy demands of the developed models, applied in object detection benchmark datasets from volatile industrial environments is presented. Specifically, different finetuning strategies as well as utilization of ancillary evaluation data during training are examined, and the trade-off between performance and efficiency is highlighted in this low-data regime. Finally, this paper introduces a novel way to quantify this trade-off through a customized Efficiency Factor metric.

Toward Green and Human-Like Artificial Intelligence: A Complete Survey on Contemporary Few-Shot Learning Approaches

Feb 05, 2024

Abstract:Despite deep learning's widespread success, its data-hungry and computationally expensive nature makes it impractical for many data-constrained real-world applications. Few-Shot Learning (FSL) aims to address these limitations by enabling rapid adaptation to novel learning tasks, seeing significant growth in recent years. This survey provides a comprehensive overview of the field's latest advancements. Initially, FSL is formally defined, and its relationship with different learning fields is presented. A novel taxonomy is introduced, extending previously proposed ones, and real-world applications in classic and novel fields are described. Finally, recent trends shaping the field, outstanding challenges, and promising future research directions are discussed.

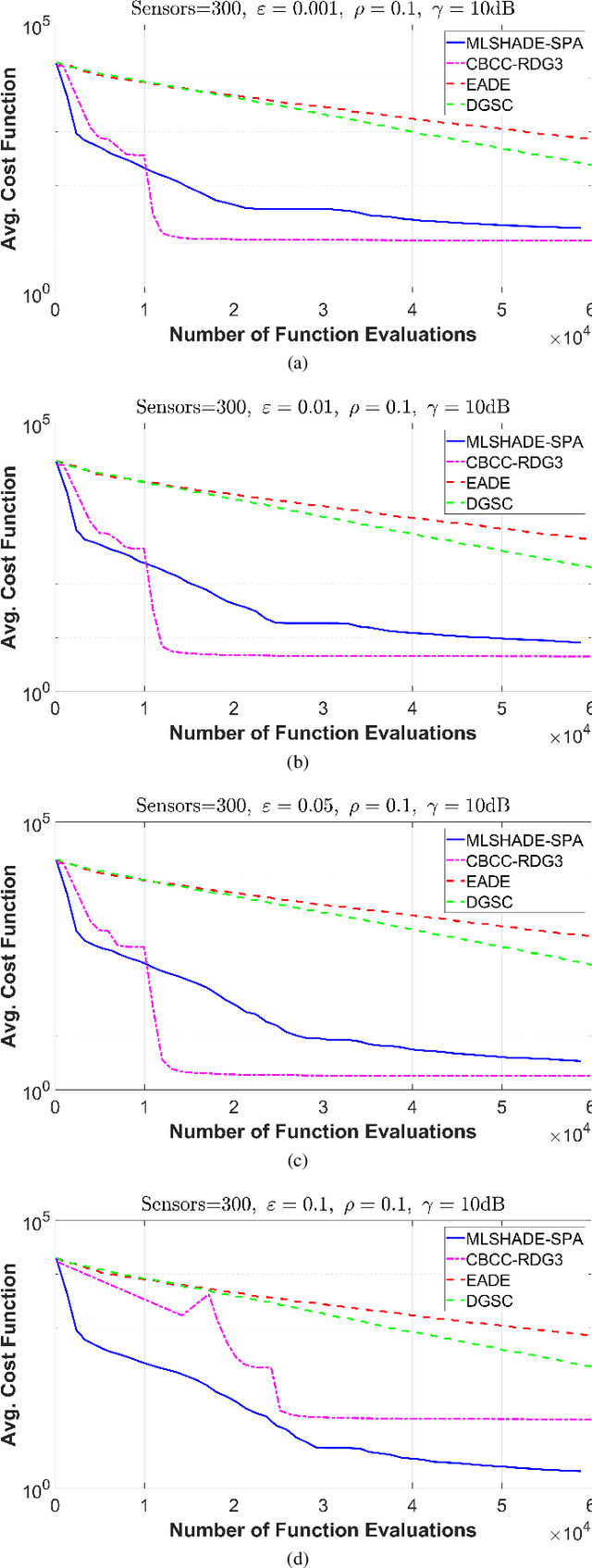

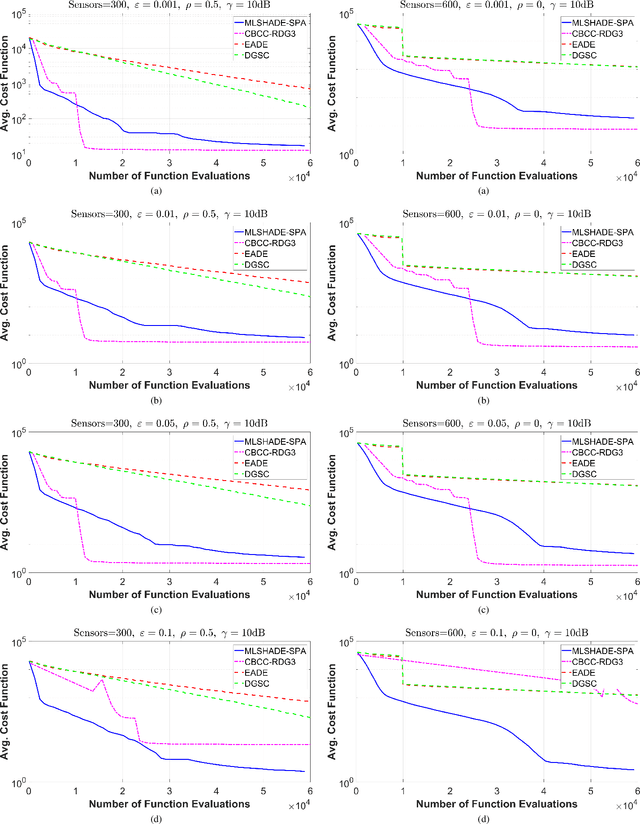

Large Scale Global Optimization Algorithms for IoT Networks: A Comparative Study

Feb 22, 2021

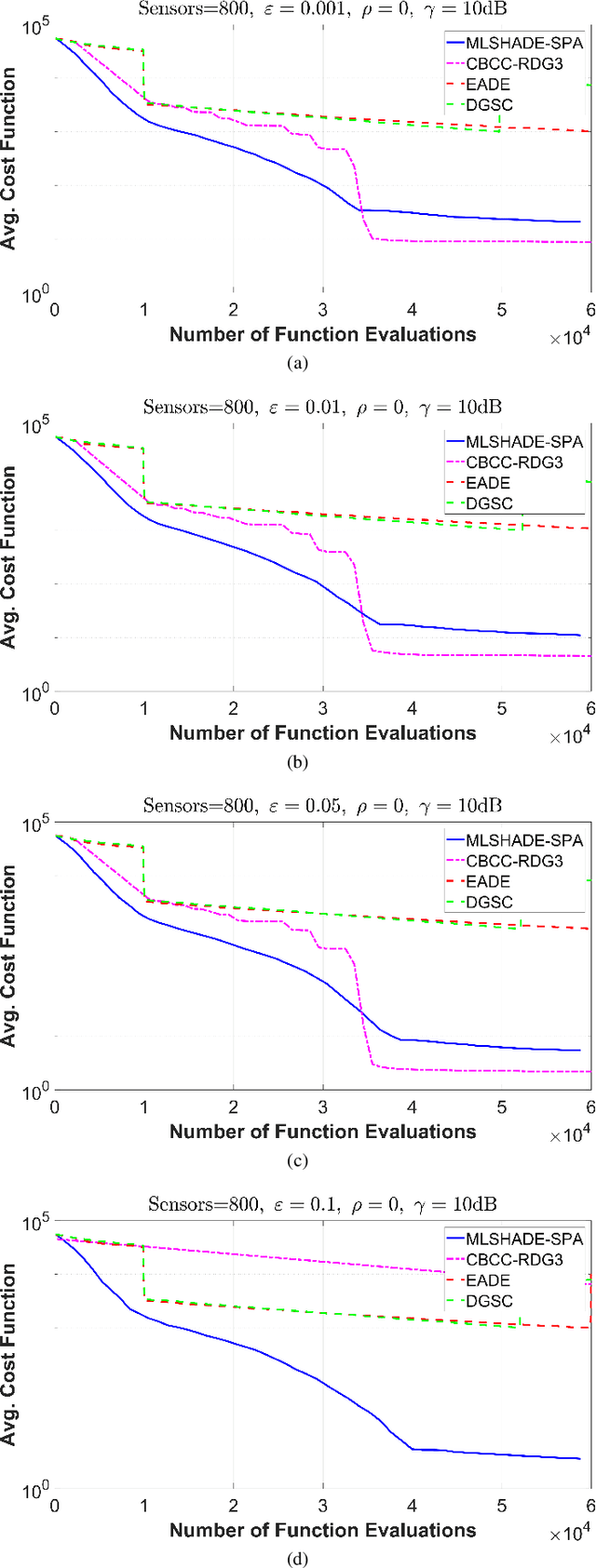

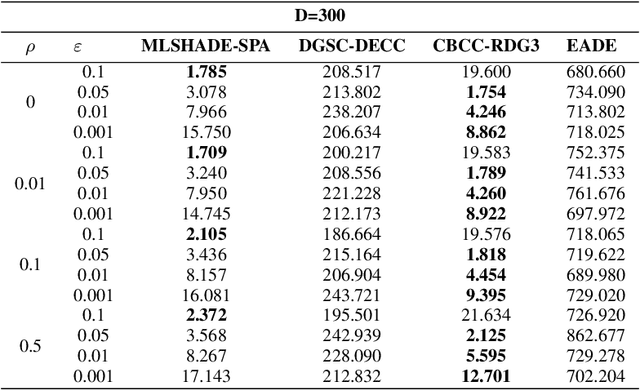

Abstract:The advent of Internet of Things (IoT) has bring a new era in communication technology by expanding the current inter-networking services and enabling the machine-to-machine communication. IoT massive deployments will create the problem of optimal power allocation. The objective of the optimization problem is to obtain a feasible solution that minimizes the total power consumption of the WSN, when the error probability at the fusion center meets certain criteria. This work studies the optimization of a wireless sensor network (WNS) at higher dimensions by focusing to the power allocation of decentralized detection. More specifically, we apply and compare four algorithms designed to tackle Large scale global optimization (LGSO) problems. These are the memetic linear population size reduction and semi-parameter adaptation (MLSHADE-SPA), the contribution-based cooperative coevolution recursive differential grouping (CBCC-RDG3), the differential grouping with spectral clustering-differential evolution cooperative coevolution (DGSC-DECC), and the enhanced adaptive differential evolution (EADE). To the best of the authors knowledge, this is the first time that LGSO algorithms are applied to the optimal power allocation problem in IoT networks. We evaluate the algorithms performance in several different cases by applying them in cases with 300, 600 and 800 dimensions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge