Shilpa Talwar

Graph Reinforcement Learning for QoS-Aware Load Balancing in Open Radio Access Networks

Apr 28, 2025

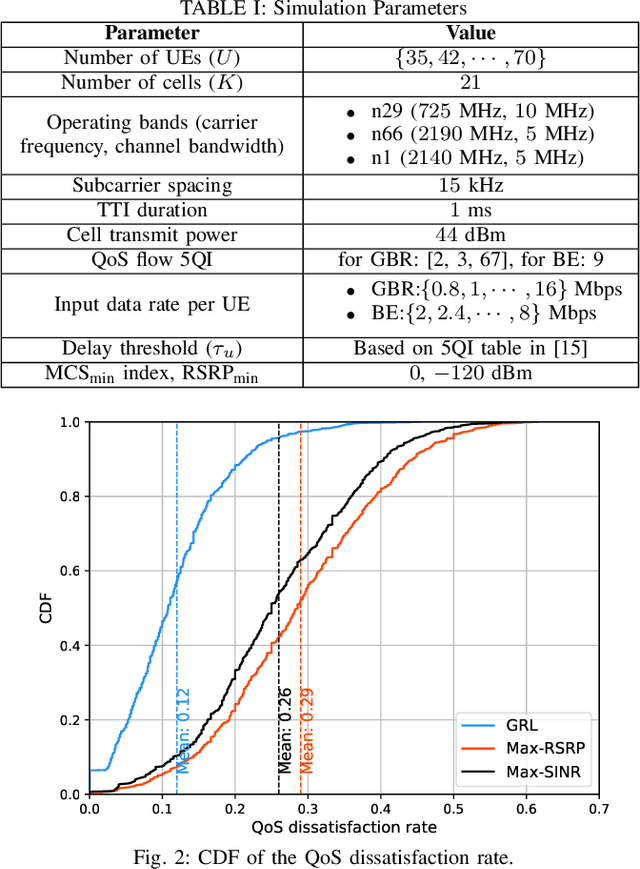

Abstract: Next-generation wireless cellular networks are expected to provide unparalleled Quality-of-Service (QoS) for emerging wireless applications, necessitating strict performance guarantees, e.g., in terms of link-level data rates. A critical challenge in meeting these QoS requirements is the prevention of cell congestion, which involves balancing the load to ensure sufficient radio resources are available for each cell to serve its designated User Equipments (UEs). In this work, a novel QoS-aware Load Balancing (LB) approach is developed to optimize the performance of Guaranteed Bit Rate (GBR) and Best Effort (BE) traffic in a multi-band Open Radio Access Network (O-RAN) under QoS and resource constraints. The proposed solution builds on Graph Reinforcement Learning (GRL), a powerful framework at the intersection of Graph Neural Networks (GNNs) and RL. The QoS-aware LB is modeled as a Markov Decision Process, with states represented as graphs. QoS considerations are integrated into both the state representations and the reward signal design. The LB agent is then trained using an off-policy dueling Deep Q Network (DQN) that leverages a GNN-based architecture. This design ensures the LB policy is invariant to the ordering of nodes (UE or cell), flexible in handling various network sizes, and capable of accounting for spatial node dependencies in LB decisions. The performance of the GRL-based solution is compared with two baseline methods. Results show substantial performance gains, including a $53\%$ reduction in QoS violations and a fourfold increase in the 5th percentile rate for BE traffic.
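Two ingredients named in this abstract can be illustrated with a minimal Python sketch: the dueling decomposition of Q-values and a node aggregation that does not depend on node ordering. The function names `dueling_q` and `mean_aggregate` are hypothetical. The mean-subtracted dueling form and the mean aggregator are common textbook choices assumed here, not necessarily the ones used in the paper.

```python
from statistics import fmean


def dueling_q(value, advantages):
    """Combine a state value V(s) and advantages A(s, a) into Q(s, a).

    Uses the common mean-subtracted form Q = V + A - mean(A) (an assumption).
    """
    mean_adv = fmean(advantages)
    return [value + a - mean_adv for a in advantages]


def mean_aggregate(neighbor_features):
    """Element-wise mean over neighbor feature vectors.

    The result is the same for any ordering of the neighbors, which is the
    property that makes a GNN layer invariant to node ordering.
    """
    n = len(neighbor_features)
    dim = len(neighbor_features[0])
    return [sum(f[i] for f in neighbor_features) / n for i in range(dim)]


# Hypothetical numbers: one state value and three candidate LB actions.
q_values = dueling_q(1.0, [0.5, -0.5, 0.0])

# Two orderings of the same neighbor set give the same aggregate.
agg_a = mean_aggregate([[1.0, 2.0], [3.0, 4.0]])
agg_b = mean_aggregate([[3.0, 4.0], [1.0, 2.0]])
```

Because the aggregate is a mean over a set, the same function also accepts any number of neighbors, which is one way a policy can handle networks of different sizes.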

Reliability-Optimized User Admission Control for URLLC Traffic: A Neural Contextual Bandit Approach

Jan 05, 2024

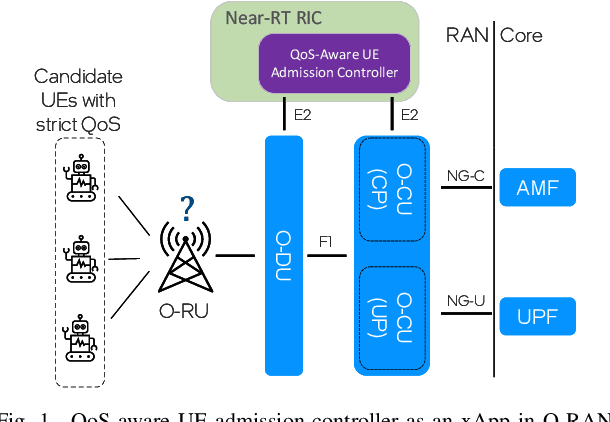

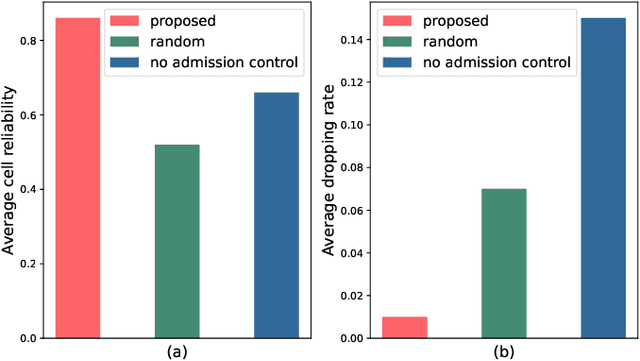

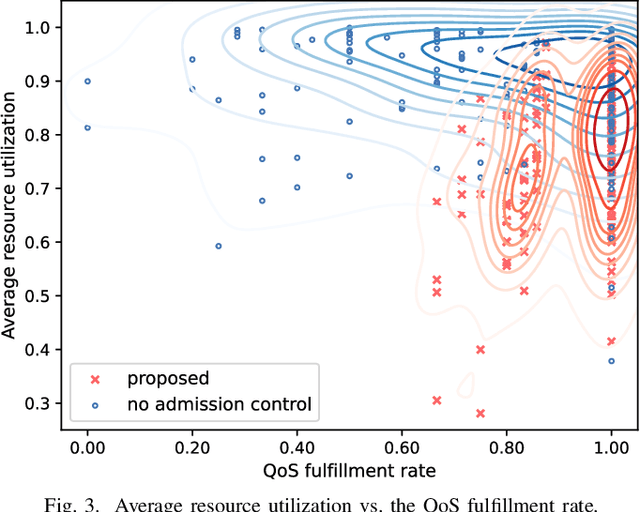

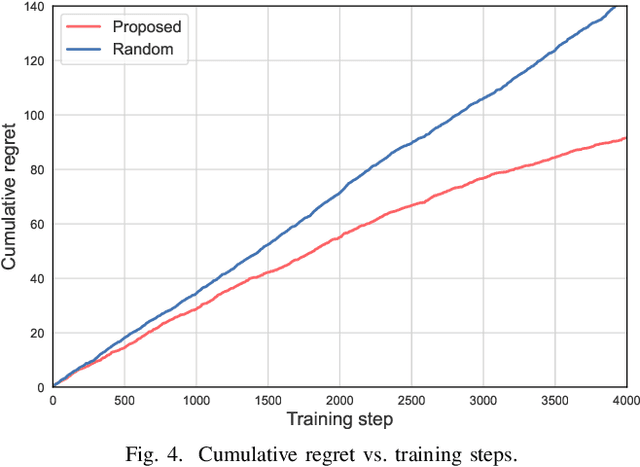

Abstract: Ultra-reliable low-latency communication (URLLC) is the cornerstone for a broad range of emerging services in next-generation wireless networks. URLLC fundamentally relies on the network's ability to proactively determine whether sufficient resources are available to support the URLLC traffic, and thus prevent so-called cell overloads. Nonetheless, achieving accurate quality-of-service (QoS) predictions for URLLC user equipment (UEs) and preventing cell overloads are very challenging tasks. This is due to the dependency of the QoS metrics (latency and reliability) on traffic and channel statistics, users' mobility, and interdependent performance across UEs. In this paper, a new QoS-aware UE admission control approach is developed to proactively estimate QoS for URLLC UEs, prior to associating them with a cell, and accordingly, admit only a subset of UEs that do not lead to a cell overload. To this end, an optimization problem is formulated to find an efficient UE admission control policy, cognizant of UEs' QoS requirements and cell-level load dynamics. To solve this problem, a new machine-learning-based method is proposed that builds on (deep) neural contextual bandits, a suitable framework for dealing with nonlinear bandit problems. Specifically, the UE admission controller is treated as a bandit agent that observes a set of network measurements (context) and makes admission control decisions based on context-dependent QoS (reward) predictions. The simulation results show that the proposed scheme can achieve near-optimal performance and yield substantial gains in terms of cell-level service reliability and efficient resource utilization.
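The observe-context, predict-reward, decide loop described in this abstract can be sketched as a toy contextual bandit in Python. This is a simplified illustration under stated assumptions, not the paper's method: a single logistic unit stands in for the deep network, exploration is epsilon-greedy, and the class name `AdmissionBandit`, the two-element context, and all parameter values are hypothetical.

```python
import math
import random


class AdmissionBandit:
    """Toy contextual bandit that predicts P(QoS met | context)."""

    def __init__(self, dim, threshold=0.5, epsilon=0.1, lr=0.5, seed=0):
        self.w = [0.0] * dim          # weights of the logistic reward predictor
        self.threshold = threshold    # admit when predicted reward >= threshold
        self.epsilon = epsilon        # probability of a random exploratory decision
        self.lr = lr
        self.rng = random.Random(seed)

    def predict(self, context):
        z = sum(wi * xi for wi, xi in zip(self.w, context))
        return 1.0 / (1.0 + math.exp(-z))

    def decide(self, context):
        """Return True to admit the UE, False to reject it."""
        if self.rng.random() < self.epsilon:
            return self.rng.random() < 0.5
        return self.predict(context) >= self.threshold

    def update(self, context, reward):
        """One gradient step on the log-loss, using the observed reward (0 or 1)."""
        err = reward - self.predict(context)
        self.w = [wi + self.lr * err * xi for wi, xi in zip(self.w, context)]


# Hypothetical context: [bias term, normalized cell load]; no exploration.
bandit = AdmissionBandit(dim=2, epsilon=0.0)
ctx = [1.0, 0.2]
first_decision = bandit.decide(ctx)    # untrained predictor sits at the threshold
bandit.update(ctx, reward=0.0)         # admitted UE violated its QoS target
second_decision = bandit.decide(ctx)   # prediction has dropped below the threshold
```

The reward is only observed for admitted UEs, which is what makes this a bandit problem and not ordinary supervised learning. A practical controller would need an exploration strategy suited to that partial feedback.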

Coded Computing for Low-Latency Federated Learning over Wireless Edge Networks

Nov 12, 2020

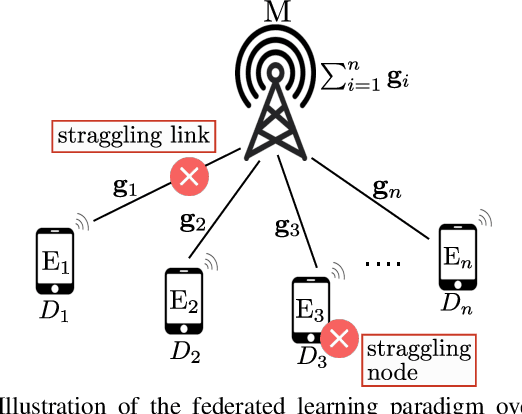

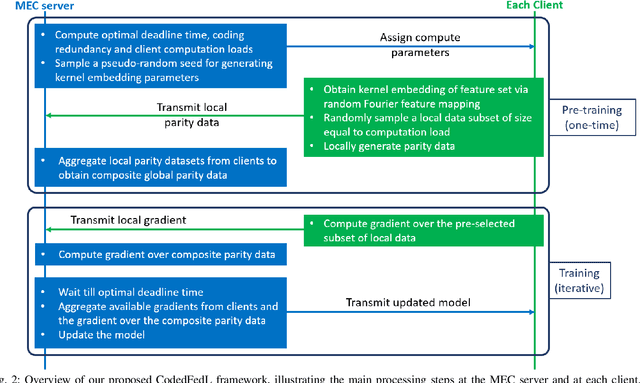

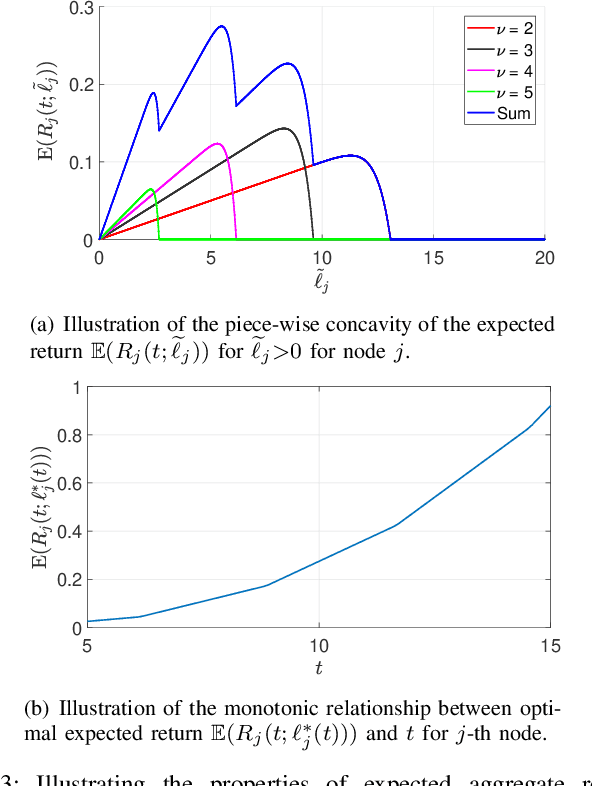

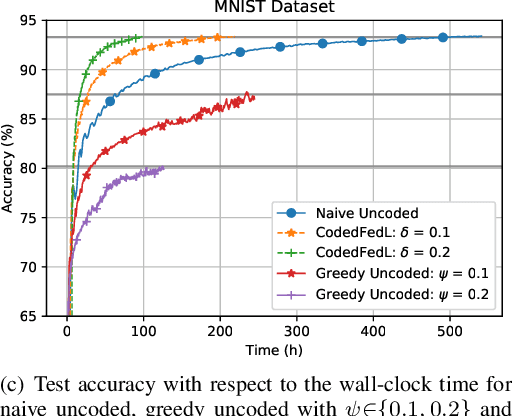

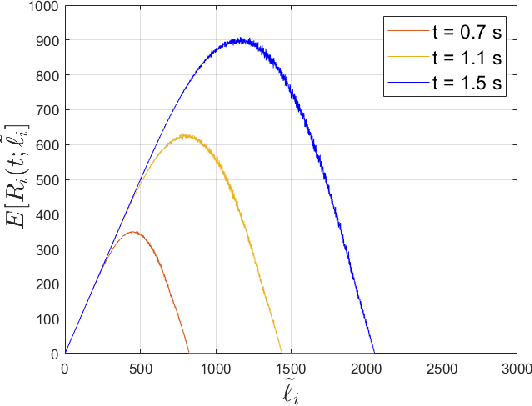

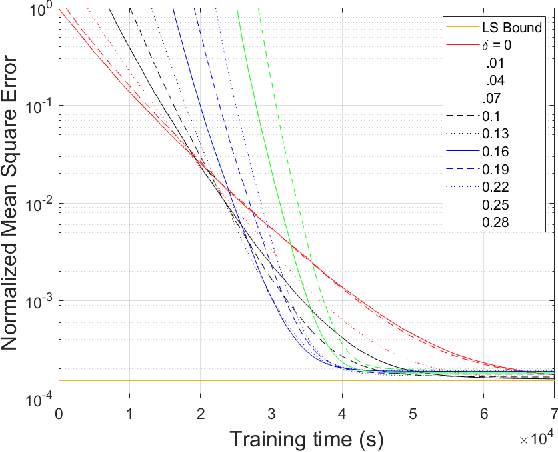

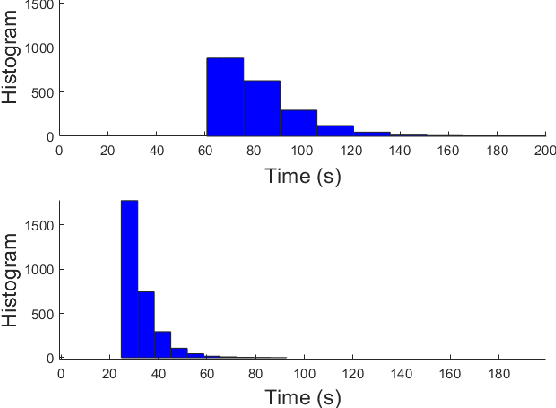

Abstract: Federated learning enables training a global model from data located at the client nodes, without sharing data or moving client data to a centralized server. The performance of federated learning in a multi-access edge computing (MEC) network suffers from slow convergence due to heterogeneity and stochastic fluctuations in compute power and communication link qualities across clients. We propose a novel coded computing framework, CodedFedL, that injects structured coding redundancy into federated learning for mitigating stragglers and speeding up the training procedure. CodedFedL enables coded computing for non-linear federated learning by efficiently exploiting distributed kernel embedding via random Fourier features, which transforms the training task into a computationally favourable distributed linear regression. Furthermore, clients generate local parity datasets by coding over their local datasets, while the server combines them to obtain the global parity dataset. The gradient computed from the global parity dataset compensates for straggling gradients during training, and thereby speeds up convergence. For minimizing the epoch deadline time at the MEC server, we provide a tractable approach for finding the amount of coding redundancy and the number of local data points that a client processes during training, by exploiting the statistical properties of compute as well as communication delays. We also characterize the leakage in data privacy when clients share their local parity datasets with the server. We analyze the convergence rate and iteration complexity of CodedFedL under simplifying assumptions, by treating CodedFedL as a stochastic gradient descent algorithm. Furthermore, we conduct numerical experiments using practical network parameters and benchmark datasets, where CodedFedL speeds up the overall training time by up to $15\times$ in comparison to the benchmark schemes.
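The kernel-embedding step mentioned in this abstract can be illustrated with a minimal Python sketch of random Fourier features. It assumes a Gaussian (RBF) kernel with bandwidth `sigma`; the abstract does not state the kernel, and the names `make_rff` and `rbf_kernel` are hypothetical. The point is that inner products of the embedded vectors approximate the kernel, so a linear model on the embedding can stand in for a non-linear kernel model.

```python
import math
import random


def make_rff(dim, num_features, sigma=1.0, seed=0):
    """Build a random Fourier feature map for the Gaussian kernel."""
    rng = random.Random(seed)
    # Frequencies are drawn from N(0, 1/sigma^2), phases from U[0, 2*pi].
    freqs = [[rng.gauss(0.0, 1.0 / sigma) for _ in range(dim)]
             for _ in range(num_features)]
    phases = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(num_features)]
    scale = math.sqrt(2.0 / num_features)

    def embed(x):
        return [scale * math.cos(sum(w * xi for w, xi in zip(f, x)) + b)
                for f, b in zip(freqs, phases)]

    return embed


def rbf_kernel(x, y, sigma=1.0):
    """Exact Gaussian kernel exp(-||x - y||^2 / (2 * sigma^2))."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


embed = make_rff(dim=3, num_features=2000)
x, y = [0.2, -0.1, 0.4], [0.0, 0.3, 0.1]

# The inner product in feature space approximates the kernel value.
approx = sum(a * b for a, b in zip(embed(x), embed(y)))
exact = rbf_kernel(x, y)
```

The approximation error shrinks roughly as one over the square root of `num_features`, so the feature count trades accuracy against the size of the resulting linear regression problem.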

Coded Federated Learning

Feb 21, 2020

Abstract: Federated learning is a method of training a global model from decentralized data distributed across client devices. Here, model parameters are computed locally by each client device and exchanged with a central server, which aggregates the local models for a global view, without requiring sharing of training data. The convergence performance of federated learning is severely impacted in heterogeneous computing platforms such as those at the wireless edge, where straggling computations and communication links can significantly limit timely model parameter updates. This paper develops a novel coded computing technique for federated learning to mitigate the impact of stragglers. In the proposed Coded Federated Learning (CFL) scheme, each client device privately generates parity training data and shares it with the central server only once at the start of the training phase. The central server can then preemptively perform redundant gradient computations on the composite parity data to compensate for the erased or delayed parameter updates. Our results show that CFL allows the global model to converge nearly four times faster when compared to an uncoded approach.
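Why a gradient computed on parity data can compensate for a missing client gradient is easiest to see for least-squares regression, as in the following Python sketch. It makes assumptions the abstract does not state: parity data is formed as linear combinations `G X` and `G y` of the raw data, and `G` is chosen orthogonal so the match is exact. The helper names are hypothetical, and the actual CFL encoding is not reproduced here.

```python
import math


def lsq_gradient(X, y, w):
    """Gradient of 0.5 * ||X w - y||^2 with respect to w."""
    residual = [sum(xij * wj for xij, wj in zip(row, w)) - yi
                for row, yi in zip(X, y)]
    return [sum(row[j] * r for row, r in zip(X, residual))
            for j in range(len(w))]


def encode(G, X, y):
    """Form parity data (G X, G y) as linear combinations of the raw rows."""
    X_par = [[sum(g * X[k][j] for k, g in enumerate(g_row))
              for j in range(len(X[0]))] for g_row in G]
    y_par = [sum(g * y[k] for k, g in enumerate(g_row)) for g_row in G]
    return X_par, y_par


# Hypothetical client data: two samples, two features.
X = [[1.0, 2.0], [3.0, -1.0]]
y = [0.5, 1.5]
w = [0.1, -0.2]

# Assumed encoding matrix: a rotation, so that G^T G = I holds exactly.
theta = 0.3
G = [[math.cos(theta), -math.sin(theta)],
     [math.sin(theta), math.cos(theta)]]

X_par, y_par = encode(G, X, y)
raw_grad = lsq_gradient(X, y, w)
parity_grad = lsq_gradient(X_par, y_par, w)
```

The parity gradient equals `X^T G^T G (X w - y)`, so it reproduces the raw gradient whenever `G^T G` is the identity. With a random `G` the same identity holds in expectation when `E[G^T G]` is the identity, and the individual raw rows are not sent to the server.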

When Multiple Agents Learn to Schedule: A Distributed Radio Resource Management Framework

Jun 20, 2019

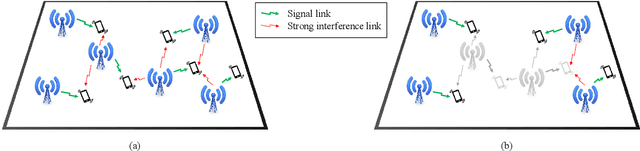

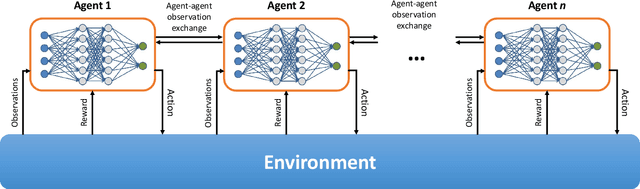

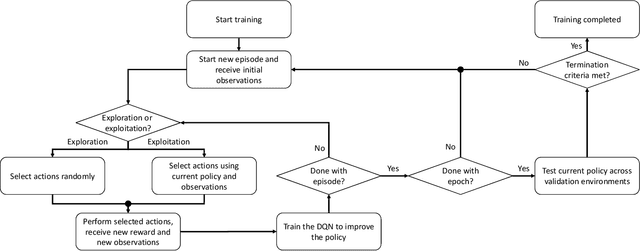

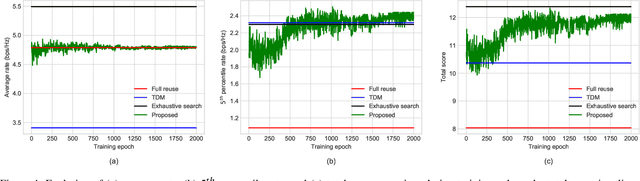

Abstract: Interference among concurrent transmissions in a wireless network is a key factor limiting the system performance. One way to alleviate this problem is to manage the radio resources in order to maximize either the average or the worst-case performance. However, joint consideration of both metrics is often neglected as they are competing in nature. In this article, a mechanism for radio resource management using multi-agent deep reinforcement learning (RL) is proposed, which strikes the right trade-off between maximizing the average and the $5^{th}$ percentile user throughput. Each transmitter in the network is equipped with a deep RL agent, receiving partial observations from the network (e.g., channel quality, interference level, etc.) and deciding whether to be active or inactive at each scheduling interval for given radio resources, a process referred to as link scheduling. Based on the actions of all agents, the network emits a reward to the agents, indicating how good their joint decisions were. The proposed framework enables the agents to make decisions in a distributed manner, and the reward is designed in such a way that the agents strive to guarantee a minimum performance, leading to a fair resource allocation among all users across the network. Simulation results demonstrate the superiority of our approach compared to decentralized baselines in terms of average and $5^{th}$ percentile user throughput, while achieving performance close to that of a centralized exhaustive search approach. Moreover, the proposed framework is robust to mismatches between training and testing scenarios. In particular, it is shown that an agent trained on a network with low transmitter density maintains its performance and outperforms the baselines when deployed in a network with a higher transmitter density.
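The two evaluation metrics named in this abstract, average and 5th percentile user throughput, can be computed as in the short Python sketch below. The nearest-rank percentile definition, the function name `percentile_nearest_rank`, and the throughput values are assumptions for illustration. The paper's reward function is not reproduced here.

```python
from statistics import fmean


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile for an integer pct in 1..100."""
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, kept to at least rank 1.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


# Hypothetical per-user throughputs (e.g., in Mbps) for 20 users.
throughputs = [float(v) for v in range(1, 21)]

avg_rate = fmean(throughputs)                           # sum-rate oriented metric
p5_rate = percentile_nearest_rank(throughputs, 5)       # cell-edge, fairness oriented metric
```

Scheduling more links at once tends to raise the average while interference lowers the 5th percentile, which is why the two metrics compete and need to be tracked together.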
