Shahryar Rahnamayan

Evolutionary Feature-wise Thresholding for Binary Representation of NLP Embeddings

Jul 22, 2025Abstract:Efficient text embedding is crucial for large-scale natural language processing (NLP) applications, where storage and computational efficiency are key concerns. In this paper, we explore how using binary representations (barcodes) instead of real-valued features can be used for NLP embeddings derived from machine learning models such as BERT. Thresholding is a common method for converting continuous embeddings into binary representations, often using a fixed threshold across all features. We propose a Coordinate Search-based optimization framework that instead identifies the optimal threshold for each feature, demonstrating that feature-specific thresholds lead to improved performance in binary encoding. This ensures that the binary representations are both accurate and efficient, enhancing performance across various features. Our optimal barcode representations have shown promising results in various NLP applications, demonstrating their potential to transform text representation. We conducted extensive experiments and statistical tests on different NLP tasks and datasets to evaluate our approach and compare it to other thresholding methods. Binary embeddings generated using using optimal thresholds found by our method outperform traditional binarization methods in accuracy. This technique for generating binary representations is versatile and can be applied to any features, not just limited to NLP embeddings, making it useful for a wide range of domains in machine learning applications.

A Novel Structure-Agnostic Multi-Objective Approach for Weight-Sharing Compression in Deep Neural Networks

Jan 06, 2025Abstract:Deep neural networks suffer from storing millions and billions of weights in memory post-training, making challenging memory-intensive models to deploy on embedded devices. The weight-sharing technique is one of the popular compression approaches that use fewer weight values and share across specific connections in the network. In this paper, we propose a multi-objective evolutionary algorithm (MOEA) based compression framework independent of neural network architecture, dimension, task, and dataset. We use uniformly sized bins to quantize network weights into a single codebook (lookup table) for efficient weight representation. Using MOEA, we search for Pareto optimal $k$ bins by optimizing two objectives. Then, we apply the iterative merge technique to non-dominated Pareto frontier solutions by combining neighboring bins without degrading performance to decrease the number of bins and increase the compression ratio. Our approach is model- and layer-independent, meaning the weights are mixed in the clusters from any layer, and the uniform quantization method used in this work has $O(N)$ complexity instead of non-uniform quantization methods such as k-means with $O(Nkt)$ complexity. In addition, we use the center of clusters as the shared weight values instead of retraining shared weights, which is computationally expensive. The advantage of using evolutionary multi-objective optimization is that it can obtain non-dominated Pareto frontier solutions with respect to performance and shared weights. The experimental results show that we can reduce the neural network memory by $13.72 \sim14.98 \times$ on CIFAR-10, $11.61 \sim 12.99\times$ on CIFAR-100, and $7.44 \sim 8.58\times$ on ImageNet showcasing the effectiveness of the proposed deep neural network compression framework.

A Novel Pareto-optimal Ranking Method for Comparing Multi-objective Optimization Algorithms

Nov 27, 2024

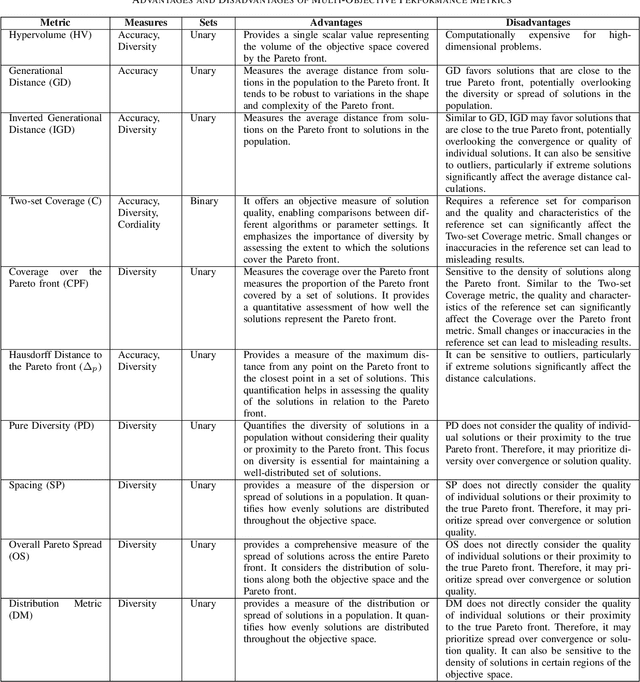

Abstract:As the interest in multi- and many-objective optimization algorithms grows, the performance comparison of these algorithms becomes increasingly important. A large number of performance indicators for multi-objective optimization algorithms have been introduced, each of which evaluates these algorithms based on a certain aspect. Therefore, assessing the quality of multi-objective results using multiple indicators is essential to guarantee that the evaluation considers all quality perspectives. This paper proposes a novel multi-metric comparison method to rank the performance of multi-/ many-objective optimization algorithms based on a set of performance indicators. We utilize the Pareto optimality concept (i.e., non-dominated sorting algorithm) to create the rank levels of algorithms by simultaneously considering multiple performance indicators as criteria/objectives. As a result, four different techniques are proposed to rank algorithms based on their contribution at each Pareto level. This method allows researchers to utilize a set of existing/newly developed performance metrics to adequately assess/rank multi-/many-objective algorithms. The proposed methods are scalable and can accommodate in its comprehensive scheme any newly introduced metric. The method was applied to rank 10 competing algorithms in the 2018 CEC competition solving 15 many-objective test problems. The Pareto-optimal ranking was conducted based on 10 well-known multi-objective performance indicators and the results were compared to the final ranks reported by the competition, which were based on the inverted generational distance (IGD) and hypervolume indicator (HV) measures. The techniques suggested in this paper have broad applications in science and engineering, particularly in areas where multiple metrics are used for comparisons. Examples include machine learning and data mining.

Massive Dimensions Reduction and Hybridization with Meta-heuristics in Deep Learning

Aug 13, 2024Abstract:Deep learning is mainly based on utilizing gradient-based optimization for training Deep Neural Network (DNN) models. Although robust and widely used, gradient-based optimization algorithms are prone to getting stuck in local minima. In this modern deep learning era, the state-of-the-art DNN models have millions and billions of parameters, including weights and biases, making them huge-scale optimization problems in terms of search space. Tuning a huge number of parameters is a challenging task that causes vanishing/exploding gradients and overfitting; likewise, utilized loss functions do not exactly represent our targeted performance metrics. A practical solution to exploring large and complex solution space is meta-heuristic algorithms. Since DNNs exceed thousands and millions of parameters, even robust meta-heuristic algorithms, such as Differential Evolution, struggle to efficiently explore and converge in such huge-dimensional search spaces, leading to very slow convergence and high memory demand. To tackle the mentioned curse of dimensionality, the concept of blocking was recently proposed as a technique that reduces the search space dimensions by grouping them into blocks. In this study, we aim to introduce Histogram-based Blocking Differential Evolution (HBDE), a novel approach that hybridizes gradient-based and gradient-free algorithms to optimize parameters. Experimental results demonstrated that the HBDE could reduce the parameters in the ResNet-18 model from 11M to 3K during the training/optimizing phase by metaheuristics, namely, the proposed HBDE, which outperforms baseline gradient-based and parent gradient-free DE algorithms evaluated on CIFAR-10 and CIFAR-100 datasets showcasing its effectiveness with reduced computational demands for the very first time.

Enhancing Diversity in Multi-objective Feature Selection

Jul 25, 2024Abstract:Feature selection plays a pivotal role in the data preprocessing and model-building pipeline, significantly enhancing model performance, interpretability, and resource efficiency across diverse domains. In population-based optimization methods, the generation of diverse individuals holds utmost importance for adequately exploring the problem landscape, particularly in highly multi-modal multi-objective optimization problems. Our study reveals that, in line with findings from several prior research papers, commonly employed crossover and mutation operations lack the capability to generate high-quality diverse individuals and tend to become confined to limited areas around various local optima. This paper introduces an augmentation to the diversity of the population in the well-established multi-objective scheme of the genetic algorithm, NSGA-II. This enhancement is achieved through two key components: the genuine initialization method and the substitution of the worst individuals with new randomly generated individuals as a re-initialization approach in each generation. The proposed multi-objective feature selection method undergoes testing on twelve real-world classification problems, with the number of features ranging from 2,400 to nearly 50,000. The results demonstrate that replacing the last front of the population with an equivalent number of new random individuals generated using the genuine initialization method and featuring a limited number of features substantially improves the population's quality and, consequently, enhances the performance of the multi-objective algorithm.

Training Artificial Neural Networks by Coordinate Search Algorithm

Feb 20, 2024

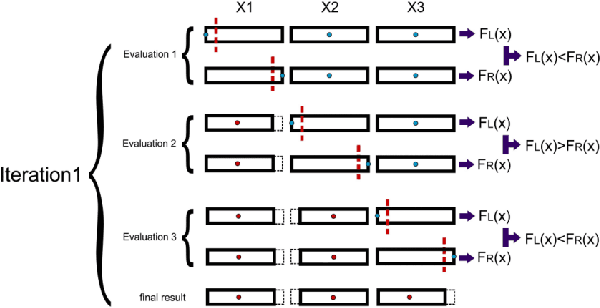

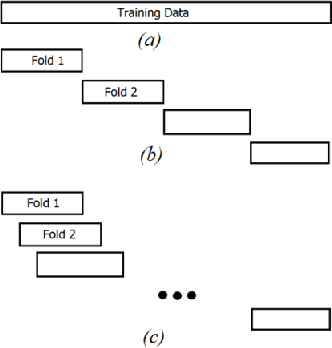

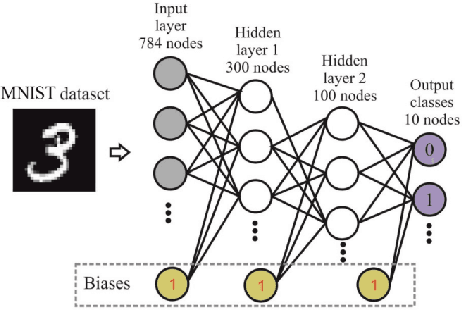

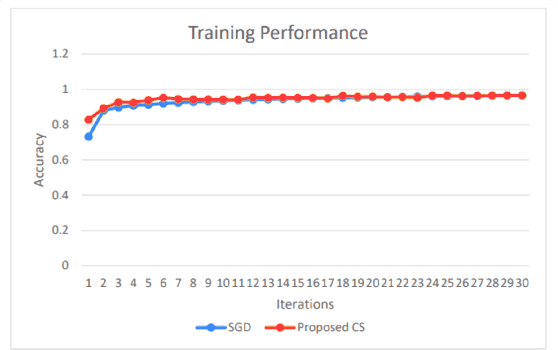

Abstract:Training Artificial Neural Networks poses a challenging and critical problem in machine learning. Despite the effectiveness of gradient-based learning methods, such as Stochastic Gradient Descent (SGD), in training neural networks, they do have several limitations. For instance, they require differentiable activation functions, and cannot optimize a model based on several independent non-differentiable loss functions simultaneously; for example, the F1-score, which is used during testing, can be used during training when a gradient-free optimization algorithm is utilized. Furthermore, the training in any DNN can be possible with a small size of the training dataset. To address these concerns, we propose an efficient version of the gradient-free Coordinate Search (CS) algorithm, an instance of General Pattern Search methods, for training neural networks. The proposed algorithm can be used with non-differentiable activation functions and tailored to multi-objective/multi-loss problems. Finding the optimal values for weights of ANNs is a large-scale optimization problem. Therefore instead of finding the optimal value for each variable, which is the common technique in classical CS, we accelerate optimization and convergence by bundling the weights. In fact, this strategy is a form of dimension reduction for optimization problems. Based on the experimental results, the proposed method, in some cases, outperforms the gradient-based approach, particularly, in situations with insufficient labeled training data. The performance plots demonstrate a high convergence rate, highlighting the capability of our suggested method to find a reasonable solution with fewer function calls. As of now, the only practical and efficient way of training ANNs with hundreds of thousands of weights is gradient-based algorithms such as SGD or Adam. In this paper we introduce an alternative method for training ANN.

* 7 pages, 9 figures

Multi-objective Binary Coordinate Search for Feature Selection

Feb 20, 2024

Abstract:A supervised feature selection method selects an appropriate but concise set of features to differentiate classes, which is highly expensive for large-scale datasets. Therefore, feature selection should aim at both minimizing the number of selected features and maximizing the accuracy of classification, or any other task. However, this crucial task is computationally highly demanding on many real-world datasets and requires a very efficient algorithm to reach a set of optimal features with a limited number of fitness evaluations. For this purpose, we have proposed the binary multi-objective coordinate search (MOCS) algorithm to solve large-scale feature selection problems. To the best of our knowledge, the proposed algorithm in this paper is the first multi-objective coordinate search algorithm. In this method, we generate new individuals by flipping a variable of the candidate solutions on the Pareto front. This enables us to investigate the effectiveness of each feature in the corresponding subset. In fact, this strategy can play the role of crossover and mutation operators to generate distinct subsets of features. The reported results indicate the significant superiority of our method over NSGA-II, on five real-world large-scale datasets, particularly when the computing budget is limited. Moreover, this simple hyper-parameter-free algorithm can solve feature selection much faster and more efficiently than NSGA-II.

* 8 pages, 1 figure

Compact NSGA-II for Multi-objective Feature Selection

Feb 20, 2024

Abstract:Feature selection is an expensive challenging task in machine learning and data mining aimed at removing irrelevant and redundant features. This contributes to an improvement in classification accuracy, as well as the budget and memory requirements for classification, or any other post-processing task conducted after feature selection. In this regard, we define feature selection as a multi-objective binary optimization task with the objectives of maximizing classification accuracy and minimizing the number of selected features. In order to select optimal features, we have proposed a binary Compact NSGA-II (CNSGA-II) algorithm. Compactness represents the population as a probability distribution to enhance evolutionary algorithms not only to be more memory-efficient but also to reduce the number of fitness evaluations. Instead of holding two populations during the optimization process, our proposed method uses several Probability Vectors (PVs) to generate new individuals. Each PV efficiently explores a region of the search space to find non-dominated solutions instead of generating candidate solutions from a small population as is the common approach in most evolutionary algorithms. To the best of our knowledge, this is the first compact multi-objective algorithm proposed for feature selection. The reported results for expensive optimization cases with a limited budget on five datasets show that the CNSGA-II performs more efficiently than the well-known NSGA-II method in terms of the hypervolume (HV) performance metric requiring less memory. The proposed method and experimental results are explained and analyzed in detail.

* 8 pages, 2 figures

ALFA -- Leveraging All Levels of Feature Abstraction for Enhancing the Generalization of Histopathology Image Classification Across Unseen Hospitals

Aug 09, 2023Abstract:We propose an exhaustive methodology that leverages all levels of feature abstraction, targeting an enhancement in the generalizability of image classification to unobserved hospitals. Our approach incorporates augmentation-based self-supervision with common distribution shifts in histopathology scenarios serving as the pretext task. This enables us to derive invariant features from training images without relying on training labels, thereby covering different abstraction levels. Moving onto the subsequent abstraction level, we employ a domain alignment module to facilitate further extraction of invariant features across varying training hospitals. To represent the highly specific features of participating hospitals, an encoder is trained to classify hospital labels, independent of their diagnostic labels. The features from each of these encoders are subsequently disentangled to minimize redundancy and segregate the features. This representation, which spans a broad spectrum of semantic information, enables the development of a model demonstrating increased robustness to unseen images from disparate distributions. Experimental results from the PACS dataset (a domain generalization benchmark), a synthetic dataset created by applying histopathology-specific jitters to the MHIST dataset (defining different domains with varied distribution shifts), and a Renal Cell Carcinoma dataset derived from four image repositories from TCGA, collectively indicate that our proposed model is adept at managing varying levels of image granularity. Thus, it shows improved generalizability when faced with new, out-of-distribution hospital images.

Ranking Loss and Sequestering Learning for Reducing Image Search Bias in Histopathology

Apr 15, 2023Abstract:Recently, deep learning has started to play an essential role in healthcare applications, including image search in digital pathology. Despite the recent progress in computer vision, significant issues remain for image searching in histopathology archives. A well-known problem is AI bias and lack of generalization. A more particular shortcoming of deep models is the ignorance toward search functionality. The former affects every model, the latter only search and matching. Due to the lack of ranking-based learning, researchers must train models based on the classification error and then use the resultant embedding for image search purposes. Moreover, deep models appear to be prone to internal bias even if using a large image repository of various hospitals. This paper proposes two novel ideas to improve image search performance. First, we use a ranking loss function to guide feature extraction toward the matching-oriented nature of the search. By forcing the model to learn the ranking of matched outputs, the representation learning is customized toward image search instead of learning a class label. Second, we introduce the concept of sequestering learning to enhance the generalization of feature extraction. By excluding the images of the input hospital from the matched outputs, i.e., sequestering the input domain, the institutional bias is reduced. The proposed ideas are implemented and validated through the largest public dataset of whole slide images. The experiments demonstrate superior results compare to the-state-of-art.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge