Ron Appel

Computational 3D topographic microscopy from terabytes of data per sample

Jun 05, 2023

Abstract: We present a large-scale computational 3D topographic microscope that enables 6-gigapixel profilometric 3D imaging at micron-scale resolution across $>$110 cm$^2$ areas over multi-millimeter axial ranges. Our computational microscope, termed STARCAM (Scanning Topographic All-in-focus Reconstruction with a Computational Array Microscope), features a parallelized, 54-camera architecture with 3-axis translation to capture, for each sample of interest, a multi-dimensional, 2.1-terabyte (TB) dataset, consisting of a total of 224,640 9.4-megapixel images. We developed a self-supervised neural network-based algorithm for 3D reconstruction and stitching that jointly estimates an all-in-focus photometric composite and 3D height map across the entire field of view, using multi-view stereo information and image sharpness as a focal metric. The memory-efficient, compressed differentiable representation offered by the neural network effectively enables joint participation of the entire multi-TB dataset during the reconstruction process. To demonstrate the broad utility of our new computational microscope, we applied STARCAM to a variety of decimeter-scale objects, with applications ranging from cultural heritage to industrial inspection.
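The dataset figures quoted in this abstract can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers stated above (54 cameras, 224,640 images, 9.4 megapixels per image); the storage depth of one byte per pixel is an assumption that the abstract does not state, and the function name `dataset_summary` is purely illustrative.

```python
# Figures quoted in the abstract.
NUM_CAMERAS = 54
NUM_IMAGES = 224_640
MEGAPIXELS_PER_IMAGE = 9.4

# Assumption (not stated in the abstract): raw pixels stored at one byte each.
BYTES_PER_PIXEL = 1


def dataset_summary(num_cameras=NUM_CAMERAS,
                    num_images=NUM_IMAGES,
                    megapixels_per_image=MEGAPIXELS_PER_IMAGE,
                    bytes_per_pixel=BYTES_PER_PIXEL):
    """Return per-camera image count and total dataset size."""
    total_pixels = num_images * megapixels_per_image * 1e6
    return {
        # Images each camera contributes if the load is split evenly.
        "images_per_camera": num_images // num_cameras,
        # Total pixel count in units of 10^12 pixels.
        "total_terapixels": total_pixels / 1e12,
        # Total size in decimal terabytes under the byte-depth assumption.
        "total_terabytes": total_pixels * bytes_per_pixel / 1e12,
    }


summary = dataset_summary()
print(summary)
```

Under the one-byte-per-pixel assumption, the total comes to roughly 2.1 TB, which is consistent with the size reported in the abstract, and the image count divides evenly into 4,160 images per camera.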

Multi-scale gigapixel microscopy using a multi-camera array microscope

Nov 30, 2022

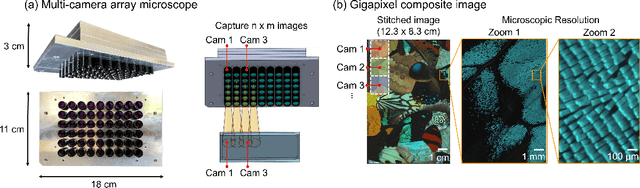

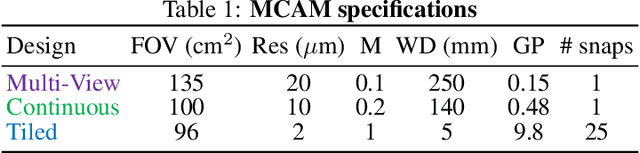

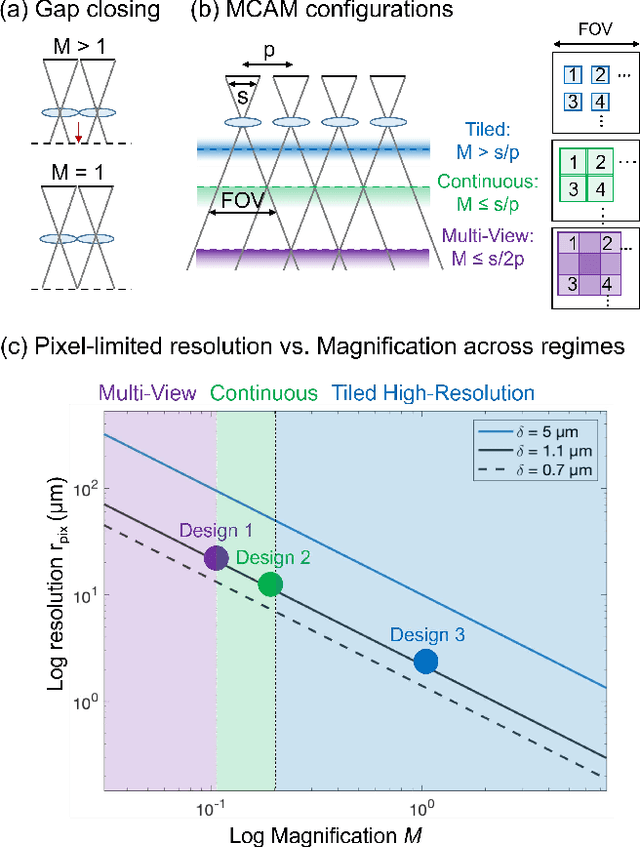

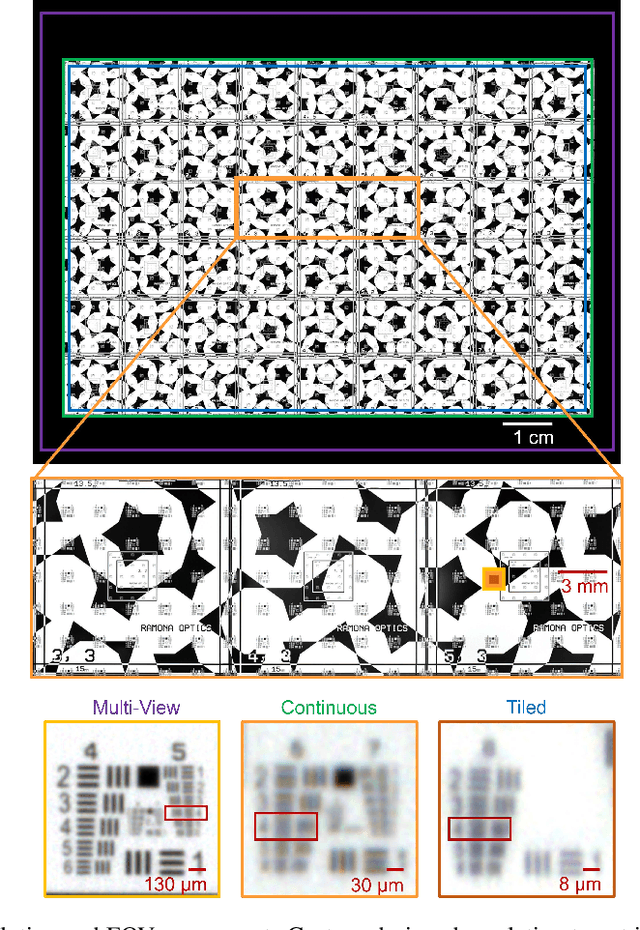

Abstract: This article experimentally examines different configurations of a novel multi-camera array microscope (MCAM) imaging technology. The MCAM is based upon a densely packed array of "micro-cameras" that jointly image across a large field-of-view at high resolution. Each micro-camera within the array images a unique area of a sample of interest, and the data acquired by all 54 micro-cameras are then digitally combined into composite frames, whose total pixel counts significantly exceed the pixel counts of standard microscope systems. We present results from three unique MCAM configurations for different use cases. First, we demonstrate a configuration that simultaneously images and estimates the 3D object depth across a 100 x 135 mm^2 field-of-view (FOV) at approximately 20 µm resolution, which results in 0.15 gigapixels (GP) per snapshot. Second, we demonstrate an MCAM configuration that records video across a continuous 83 x 123 mm^2 FOV with two-fold increased resolution (0.48 GP per frame). Finally, we report a third high-resolution configuration (2 µm resolution) that can rapidly produce 9.8 GP composites of large histopathology specimens.

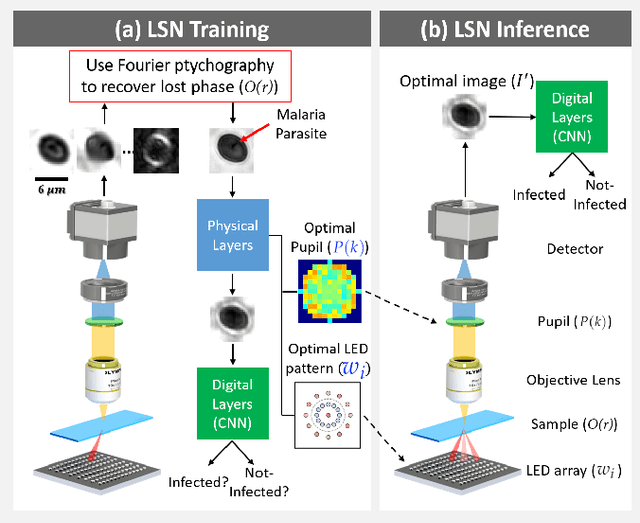

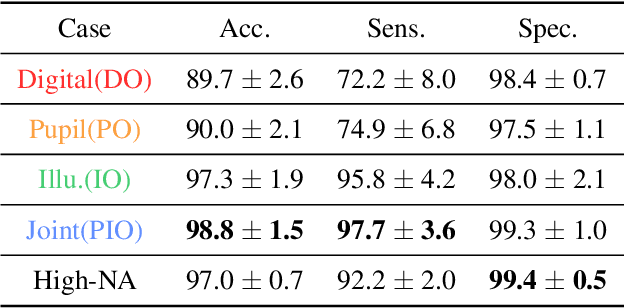

Multi-element microscope optimization by a learned sensing network with composite physical layers

Jun 27, 2020

Abstract: Standard microscopes offer a variety of settings to help improve the visibility of different specimens to the end microscope user. Increasingly, however, digital microscopes are used to capture images for automated interpretation by computer algorithms (e.g., for feature classification, detection or segmentation), often without any human involvement. In this work, we investigate an approach to jointly optimize multiple microscope settings, together with a classification network, for improved performance with such automated tasks. We explore the interplay between optimization of programmable illumination and pupil transmission, using experimentally imaged blood smears for automated malaria parasite detection, to show that multi-element "learned sensing" outperforms its single-element counterpart. While not necessarily ideal for human interpretation, the network's resulting low-resolution microscope images (20X-comparable) offer a machine learning network sufficient contrast to match the classification performance of corresponding high-resolution imagery (100X-comparable), pointing a path towards accurate automation over large fields-of-view.

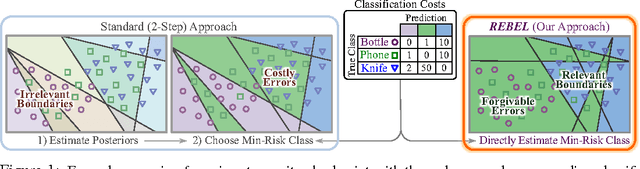

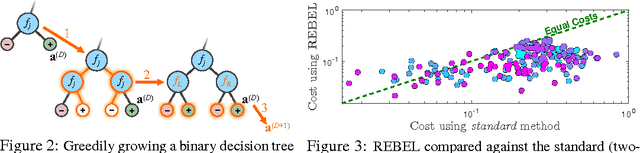

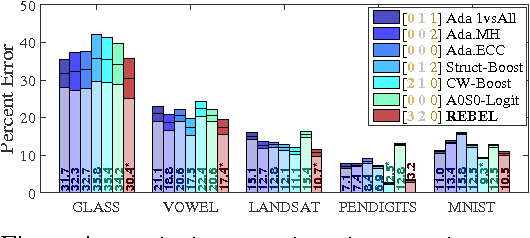

Improved Multi-Class Cost-Sensitive Boosting via Estimation of the Minimum-Risk Class

Nov 15, 2016

Abstract: We present a simple unified framework for multi-class cost-sensitive boosting. The minimum-risk class is estimated directly, rather than via an approximation of the posterior distribution. Our method jointly optimizes binary weak learners and their corresponding output vectors, requiring classes to share features at each iteration. By training in a cost-sensitive manner, weak learners are invested in separating classes whose discrimination is important, at the expense of less relevant classification boundaries. Additional contributions are a family of loss functions along with proof that our algorithm is Boostable in the theoretical sense, as well as an efficient procedure for growing decision trees for use as weak learners. We evaluate our method on a variety of datasets: a collection of synthetic planar data, common UCI datasets, MNIST digits, SUN scenes, and CUB-200 birds. Results show state-of-the-art performance across all datasets against several strong baselines, including non-boosting multi-class approaches.
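For readers unfamiliar with the term, the minimum-risk class is the prediction that minimizes expected misclassification cost. The sketch below shows only the textbook definition, computed from a known posterior and a cost matrix; it is not the paper's algorithm, which according to the abstract estimates this class directly without approximating the posterior. The function names, the cost-matrix convention, and the example numbers are all illustrative assumptions.

```python
def expected_risks(posterior, cost):
    """Expected cost of predicting each class.

    Assumed convention: cost[j][k] is the cost of predicting class k
    when the true class is j; posterior[j] is P(true class = j | x).
    """
    num_classes = len(posterior)
    return [
        sum(posterior[j] * cost[j][k] for j in range(num_classes))
        for k in range(num_classes)
    ]


def minimum_risk_class(posterior, cost):
    """Index of the class with the smallest expected cost."""
    risks = expected_risks(posterior, cost)
    return min(range(len(risks)), key=risks.__getitem__)


# Hypothetical three-class example: missing class 2 is ten times as
# costly as any other mistake.
posterior = [0.6, 0.3, 0.1]
cost = [
    [0.0, 1.0, 1.0],
    [1.0, 0.0, 1.0],
    [10.0, 10.0, 0.0],
]

most_probable = max(range(len(posterior)), key=posterior.__getitem__)
least_risky = minimum_risk_class(posterior, cost)
print(most_probable, least_risky)
```

In this example the most probable class and the minimum-risk class differ: class 0 has the highest posterior, but the heavy penalty for missing class 2 makes class 2 the lower-cost prediction. That gap is what motivates cost-sensitive training.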
