Reza Azadeh

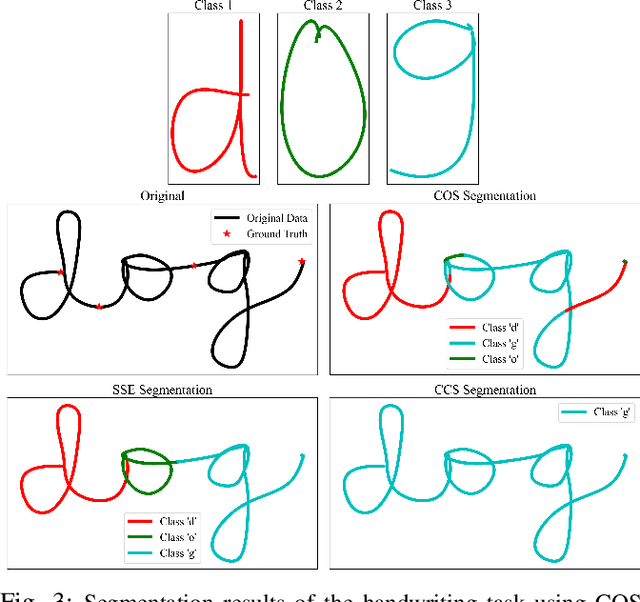

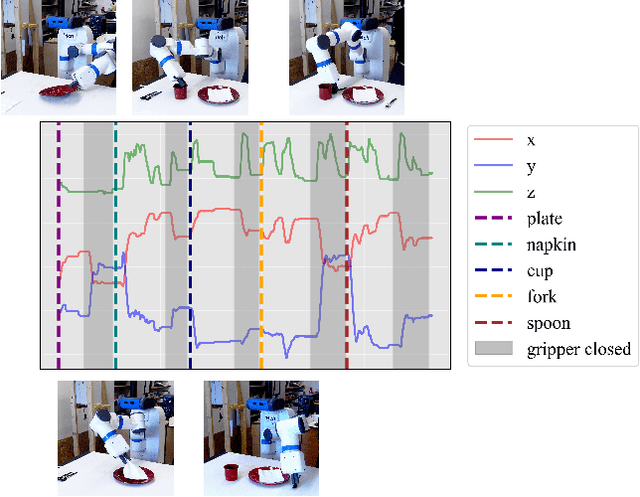

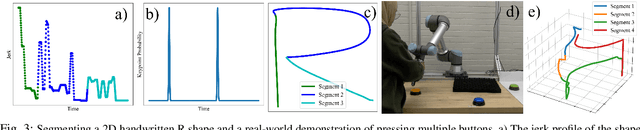

Parameter-Free Segmentation of Robot Movements with Cross-Correlation Using Different Similarity Metrics

May 09, 2025

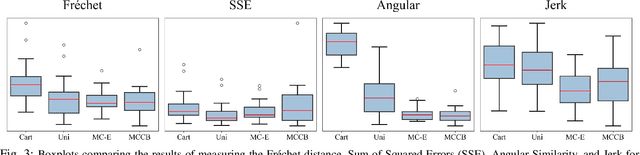

Abstract:Often, robots are asked to execute primitive movements, whether as a single action or in a series of actions representing a larger, more complex task. These movements can be learned in many ways, but a common one is from demonstrations presented to the robot by a teacher. However, these demonstrations are not always simple movements themselves, and complex demonstrations must be broken down, or segmented, into primitive movements. In this work, we present a parameter-free approach to segmentation using techniques inspired by autocorrelation and cross-correlation from signal processing. In cross-correlation, a representative signal is found in some larger, more complex signal by correlating the representative signal with the larger signal. This same idea can be applied to segmenting robot motion and demonstrations, provided with a representative motion primitive. This results in a fast and accurate segmentation, which does not take any parameters. One of the main contributions of this paper is the modification of the cross-correlation process by employing similarity metrics that can capture features specific to robot movements. To validate our framework, we conduct several experiments of complex tasks both in simulation and in real-world. We also evaluate the effectiveness of our segmentation framework by comparing various similarity metrics.

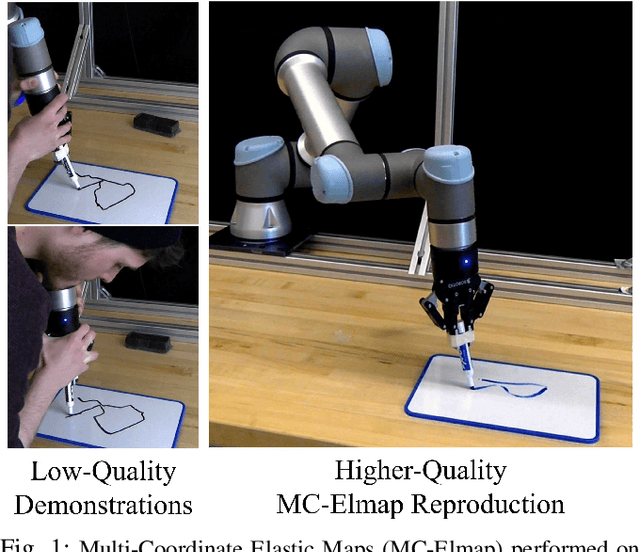

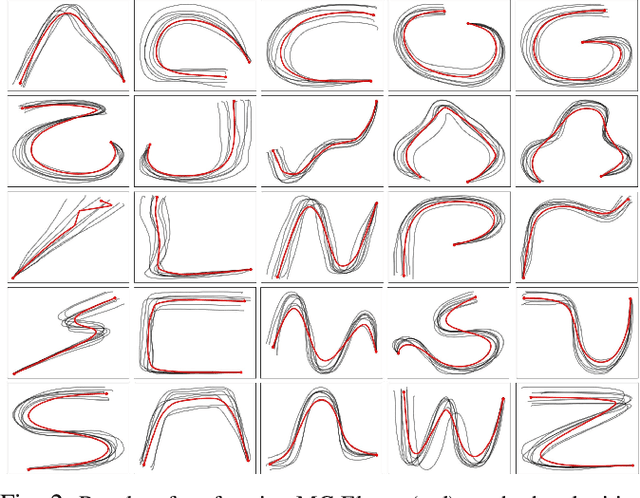

Robot Learning Using Multi-Coordinate Elastic Maps

May 09, 2025

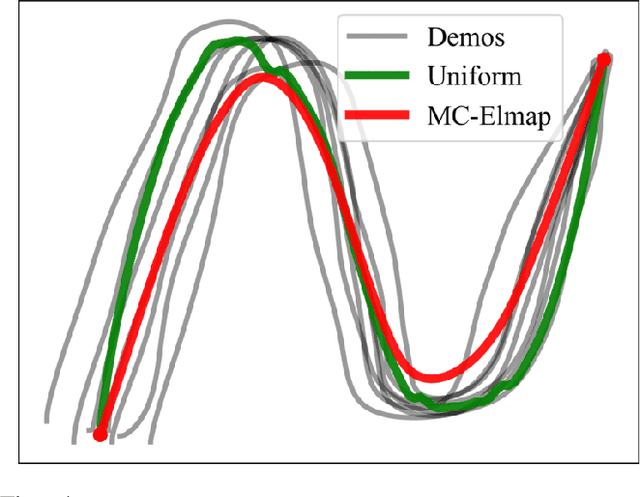

Abstract:To learn manipulation skills, robots need to understand the features of those skills. An easy way for robots to learn is through Learning from Demonstration (LfD), where the robot learns a skill from an expert demonstrator. While the main features of a skill might be captured in one differential coordinate (i.e., Cartesian), they could have meaning in other coordinates. For example, an important feature of a skill may be its shape or velocity profile, which are difficult to discover in Cartesian differential coordinate. In this work, we present a method which enables robots to learn skills from human demonstrations via encoding these skills into various differential coordinates, then determines the importance of each coordinate to reproduce the skill. We also introduce a modified form of Elastic Maps that includes multiple differential coordinates, combining statistical modeling of skills in these differential coordinate spaces. Elastic Maps, which are flexible and fast to compute, allow for the incorporation of several different types of constraints and the use of any number of demonstrations. Additionally, we propose methods for auto-tuning several parameters associated with the modified Elastic Map formulation. We validate our approach in several simulated experiments and a real-world writing task with a UR5e manipulator arm.

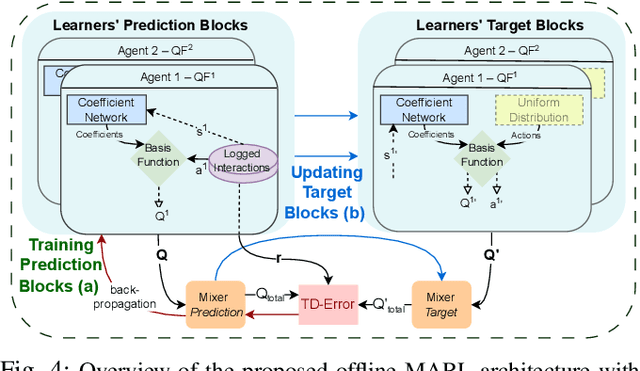

Investigating Adaptive Tuning of Assistive Exoskeletons Using Offline Reinforcement Learning: Challenges and Insights

Apr 30, 2025

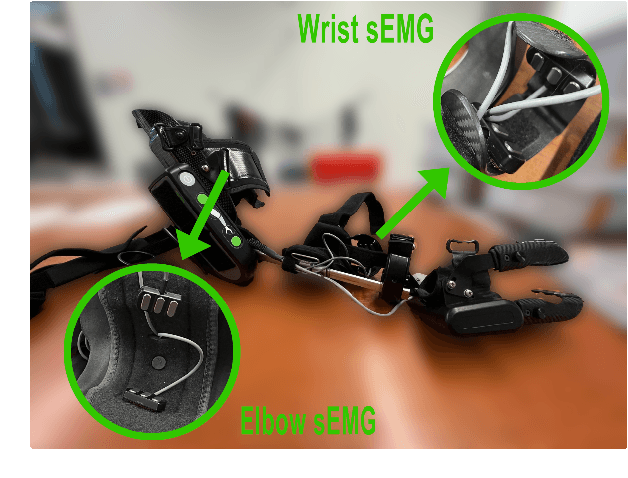

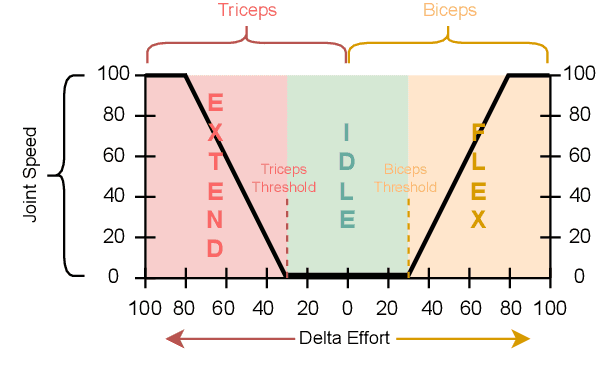

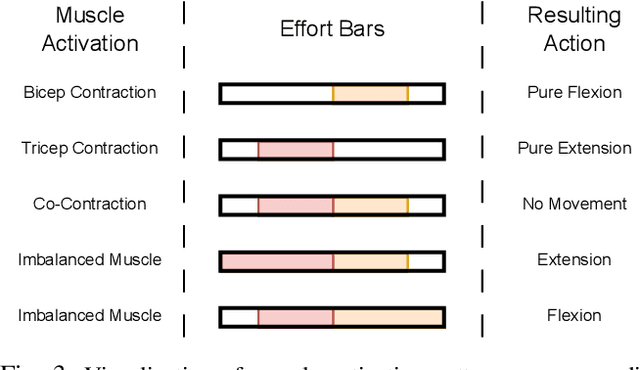

Abstract:Assistive exoskeletons have shown great potential in enhancing mobility for individuals with motor impairments, yet their effectiveness relies on precise parameter tuning for personalized assistance. In this study, we investigate the potential of offline reinforcement learning for optimizing effort thresholds in upper-limb assistive exoskeletons, aiming to reduce reliance on manual calibration. Specifically, we frame the problem as a multi-agent system where separate agents optimize biceps and triceps effort thresholds, enabling a more adaptive and data-driven approach to exoskeleton control. Mixed Q-Functionals (MQF) is employed to efficiently handle continuous action spaces while leveraging pre-collected data, thereby mitigating the risks associated with real-time exploration. Experiments were conducted using the MyoPro 2 exoskeleton across two distinct tasks involving horizontal and vertical arm movements. Our results indicate that the proposed approach can dynamically adjust threshold values based on learned patterns, potentially improving user interaction and control, though performance evaluation remains challenging due to dataset limitations.

Graph Attention Inference of Network Topology in Multi-Agent Systems

Aug 27, 2024

Abstract:Accurately identifying the underlying graph structures of multi-agent systems remains a difficult challenge. Our work introduces a novel machine learning-based solution that leverages the attention mechanism to predict future states of multi-agent systems by learning node representations. The graph structure is then inferred from the strength of the attention values. This approach is applied to both linear consensus dynamics and the non-linear dynamics of Kuramoto oscillators, resulting in implicit learning the graph by learning good agent representations. Our results demonstrate that the presented data-driven graph attention machine learning model can identify the network topology in multi-agent systems, even when the underlying dynamic model is not known, as evidenced by the F1 scores achieved in the link prediction.

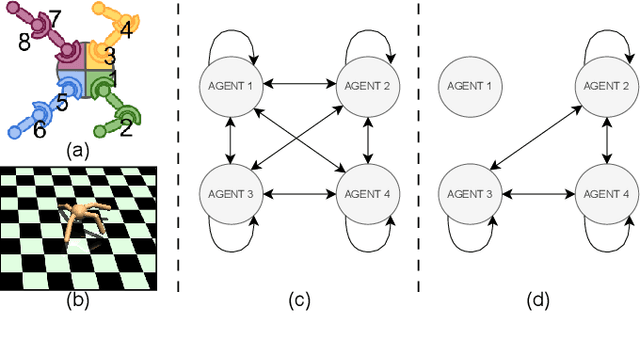

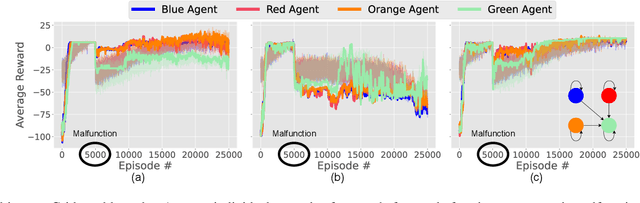

Collaborative Adaptation for Recovery from Unforeseen Malfunctions in Discrete and Continuous MARL Domains

Jul 27, 2024

Abstract:Cooperative multi-agent learning plays a crucial role for developing effective strategies to achieve individual or shared objectives in multi-agent teams. In real-world settings, agents may face unexpected failures, such as a robot's leg malfunctioning or a teammate's battery running out. These malfunctions decrease the team's ability to accomplish assigned task(s), especially if they occur after the learning algorithms have already converged onto a collaborative strategy. Current leading approaches in Multi-Agent Reinforcement Learning (MARL) often recover slowly -- if at all -- from such malfunctions. To overcome this limitation, we present the Collaborative Adaptation (CA) framework, highlighting its unique capability to operate in both continuous and discrete domains. Our framework enhances the adaptability of agents to unexpected failures by integrating inter-agent relationships into their learning processes, thereby accelerating the recovery from malfunctions. We evaluated our framework's performance through experiments in both discrete and continuous environments. Empirical results reveal that in scenarios involving unforeseen malfunction, although state-of-the-art algorithms often converge on sub-optimal solutions, the proposed CA framework mitigates and recovers more effectively.

Relational Q-Functionals: Multi-Agent Learning to Recover from Unforeseen Robot Malfunctions in Continuous Action Domains

Jul 27, 2024

Abstract:Cooperative multi-agent learning methods are essential in developing effective cooperation strategies in multi-agent domains. In robotics, these methods extend beyond multi-robot scenarios to single-robot systems, where they enable coordination among different robot modules (e.g., robot legs or joints). However, current methods often struggle to quickly adapt to unforeseen failures, such as a malfunctioning robot leg, especially after the algorithm has converged to a strategy. To overcome this, we introduce the Relational Q-Functionals (RQF) framework. RQF leverages a relational network, representing agents' relationships, to enhance adaptability, providing resilience against malfunction(s). Our algorithm also efficiently handles continuous state-action domains, making it adept for robotic learning tasks. Our empirical results show that RQF enables agents to use these relationships effectively to facilitate cooperation and recover from an unexpected malfunction in single-robot systems with multiple interacting modules. Thus, our approach offers promising applications in multi-agent systems, particularly in scenarios with unforeseen malfunctions.

An Adaptive Framework for Manipulator Skill Reproduction in Dynamic Environments

May 24, 2024

Abstract:Robot skill learning and execution in uncertain and dynamic environments is a challenging task. This paper proposes an adaptive framework that combines Learning from Demonstration (LfD), environment state prediction, and high-level decision making. Proactive adaptation prevents the need for reactive adaptation, which lags behind changes in the environment rather than anticipating them. We propose a novel LfD representation, Elastic-Laplacian Trajectory Editing (ELTE), which continuously adapts the trajectory shape to predictions of future states. Then, a high-level reactive system using an Unscented Kalman Filter (UKF) and Hidden Markov Model (HMM) prevents unsafe execution in the current state of the dynamic environment based on a discrete set of decisions. We first validate our LfD representation in simulation, then experimentally assess the entire framework using a legged mobile manipulator in 36 real-world scenarios. We show the effectiveness of the proposed framework under different dynamic changes in the environment. Our results show that the proposed framework produces robust and stable adaptive behaviors.

Investigating the Generalizability of Assistive Robots Models over Various Tasks

May 03, 2024

Abstract:In the domain of assistive robotics, the significance of effective modeling is well acknowledged. Prior research has primarily focused on enhancing model accuracy or involved the collection of extensive, often impractical amounts of data. While improving individual model accuracy is beneficial, it necessitates constant remodeling for each new task and user interaction. In this paper, we investigate the generalizability of different modeling methods. We focus on constructing the dynamic model of an assistive exoskeleton using six data-driven regression algorithms. Six tasks are considered in our experiments, including horizontal, vertical, diagonal from left leg to the right eye and the opposite, as well as eating and pushing. We constructed thirty-six unique models applying different regression methods to data gathered from each task. Each trained model's performance was evaluated in a cross-validation scenario, utilizing five folds for each dataset. These trained models are then tested on the other tasks that the model is not trained with. Finally the models in our study are assessed in terms of generalizability. Results show the superior generalizability of the task model performed along the horizontal plane, and decision tree based algorithms.

Design of Fuzzy Logic Parameter Tuners for Upper-Limb Assistive Robots

May 03, 2024Abstract:Assistive Exoskeleton Robots are helping restore functions to people suffering from underlying medical conditions. These robots require precise tuning of hyper-parameters to feel natural to the user. The device hyper-parameters often need to be re-tuned from task to task, which can be tedious and require expert knowledge. To address this issue, we develop a set of fuzzy logic controllers that can dynamically tune robot gain parameters to adapt its sensitivity to the user's intention determined from muscle activation. The designed fuzzy controllers benefit from a set of expert-defined rules and do not rely on extensive amounts of training data. We evaluate the designed controllers with three different tasks and compare our results against the manually tuned system. Our preliminary results show that our controllers reduce the amount of fighting between the device and the human, measured using a set of pressure sensors.

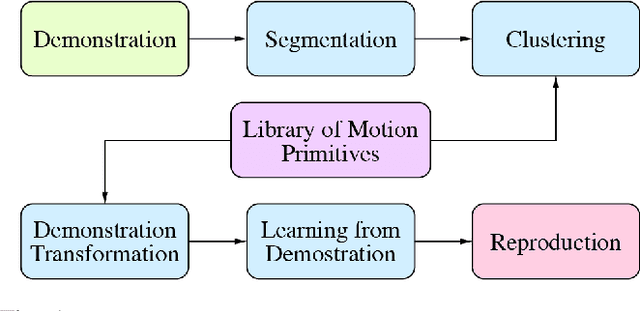

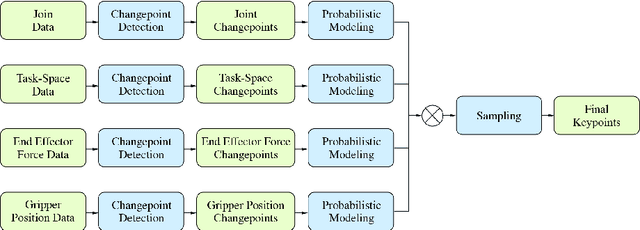

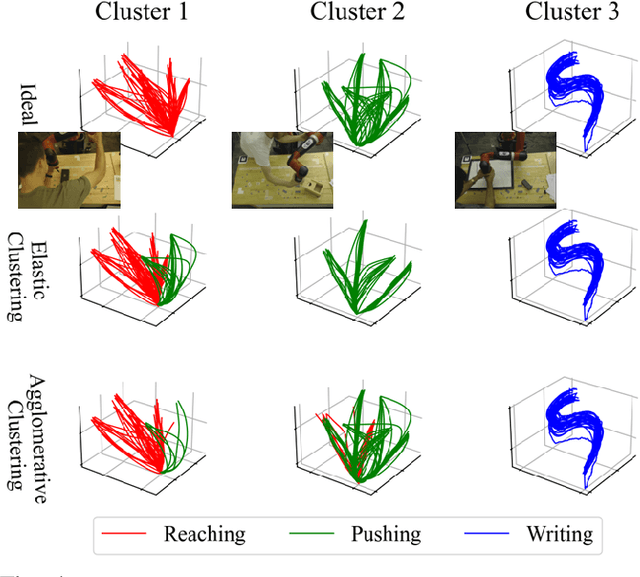

A Framework for Learning and Reusing Robotic Skills

Apr 29, 2024

Abstract:In this paper, we present our work in progress towards creating a library of motion primitives. This library facilitates easier and more intuitive learning and reusing of robotic skills. Users can teach robots complex skills through Learning from Demonstration, which is automatically segmented into primitives and stored in clusters of similar skills. We propose a novel multimodal segmentation method as well as a novel trajectory clustering method. Then, when needed for reuse, we transform primitives into new environments using trajectory editing. We present simulated results for our framework with demonstrations taken on real-world robots.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge