Ramji Venkataramanan

Error Propagation and Model Collapse in Diffusion Models: A Theoretical Study

Feb 18, 2026

Abstract:Machine learning models are increasingly trained or fine-tuned on synthetic data. Recursively training on such data has been observed to significantly degrade performance in a wide range of tasks, often characterized by a progressive drift away from the target distribution. In this work, we theoretically analyze this phenomenon in the setting of score-based diffusion models. For a realistic pipeline where each training round uses a combination of synthetic data and fresh samples from the target distribution, we obtain upper and lower bounds on the accumulated divergence between the generated and target distributions. This allows us to characterize different regimes of drift, depending on the score estimation error and the proportion of fresh data used in each generation. We also provide empirical results on synthetic data and images to illustrate the theory.

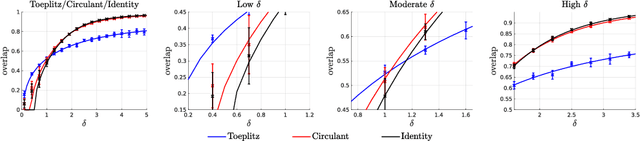

Optimal Estimation in Orthogonally Invariant Generalized Linear Models: Spectral Initialization and Approximate Message Passing

Feb 09, 2026

Abstract:We consider the problem of parameter estimation from a generalized linear model with a random design matrix that is orthogonally invariant in law. Such a model allows the design to have an arbitrary distribution of singular values and only assumes that its singular vectors are generic. It is a vast generalization of the i.i.d. Gaussian design typically considered in the theoretical literature, and is motivated by the fact that real data often have a complex correlation structure so that methods relying on i.i.d. assumptions can be highly suboptimal. Building on the paradigm of spectrally-initialized iterative optimization, this paper proposes optimal spectral estimators and combines them with an approximate message passing (AMP) algorithm, establishing rigorous performance guarantees for these two algorithmic steps. Both the spectral initialization and the subsequent AMP meet existing conjectures on the fundamental limits to estimation -- the former on the optimal sample complexity for efficient weak recovery, and the latter on the optimal errors. Numerical experiments suggest the effectiveness of our methods and accuracy of our theory beyond orthogonally invariant data.

Quantitative Group Testing and Pooled Data in the Linear Regime with Sublinear Tests

Aug 01, 2024

Abstract:In the pooled data problem, the goal is to identify the categories associated with a large collection of items via a sequence of pooled tests. Each pooled test reveals the number of items in the pool belonging to each category. A prominent special case is quantitative group testing (QGT), which is the case of pooled data with two categories. We consider these problems in the non-adaptive and linear regime, where the fraction of items in each category is of constant order. We propose a scheme with a spatially coupled Bernoulli test matrix and an efficient approximate message passing (AMP) algorithm for recovery. We rigorously characterize its asymptotic performance in both the noiseless and noisy settings, and prove that in the noiseless case, the AMP algorithm achieves almost-exact recovery with a number of tests sublinear in the number of items. For both QGT and pooled data, this is the first efficient scheme that provably achieves recovery in the linear regime with a sublinear number of tests, with performance degrading gracefully in the presence of noise. Numerical simulations illustrate the benefits of the spatially coupled scheme at finite dimensions, showing that it outperforms i.i.d. test designs as well as other recovery algorithms based on convex programming.
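The noiseless measurement model described in this abstract can be illustrated with a minimal sketch. It assumes a plain i.i.d. Bernoulli test design rather than the spatially coupled design the paper proposes, and it covers only the data-generating step, not the AMP recovery algorithm. The function name `pooled_tests` and its parameters are hypothetical and chosen for illustration.

```python
import random

def pooled_tests(categories, num_categories, num_tests, pool_prob=0.5, seed=0):
    """Simulate noiseless, non-adaptive pooled tests.

    categories[i] is the category (0 .. num_categories - 1) of item i.
    Assumption: each item joins each pool independently with probability
    pool_prob (an i.i.d. Bernoulli design, not a spatially coupled one).
    Returns (design, counts), where design[t][i] is 1 if item i is in
    pool t, and counts[t][c] is the number of pooled items of category c.
    """
    rng = random.Random(seed)
    n = len(categories)
    design = [[1 if rng.random() < pool_prob else 0 for _ in range(n)]
              for _ in range(num_tests)]
    counts = []
    for row in design:
        per_category = [0] * num_categories
        for i, included in enumerate(row):
            if included:
                per_category[categories[i]] += 1
        counts.append(per_category)
    return design, counts

# Quantitative group testing is the two-category case: category 1 marks
# a "defective" item, and each test reports how many defectives it pooled.
items = [0, 1, 1, 0, 1, 0, 0, 1]
design, counts = pooled_tests(items, num_categories=2, num_tests=4)
qgt_outcomes = [c[1] for c in counts]
```

In the two-category case each outcome is the inner product of a binary design row with the binary item vector, which is why QGT is an instance of estimation in a linear model.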

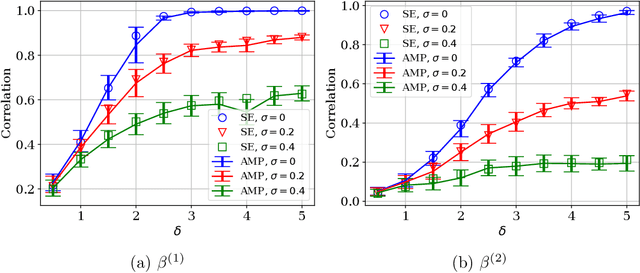

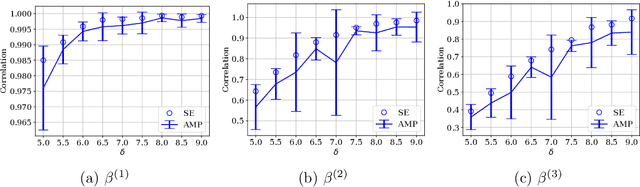

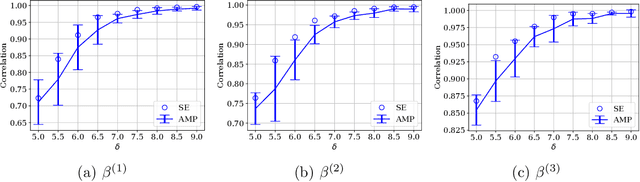

Inferring Change Points in High-Dimensional Linear Regression via Approximate Message Passing

Apr 11, 2024

Abstract:We consider the problem of localizing change points in high-dimensional linear regression. We propose an Approximate Message Passing (AMP) algorithm for estimating both the signals and the change point locations. Assuming Gaussian covariates, we give an exact asymptotic characterization of its estimation performance in the limit where the number of samples grows proportionally to the signal dimension. Our algorithm can be tailored to exploit any prior information on the signal, noise, and change points. It also enables uncertainty quantification in the form of an efficiently computable approximate posterior distribution, whose asymptotic form we characterize exactly. We validate our theory via numerical experiments, and demonstrate the favorable performance of our estimators on both synthetic data and images.

Coded Many-User Multiple Access via Approximate Message Passing

Feb 08, 2024

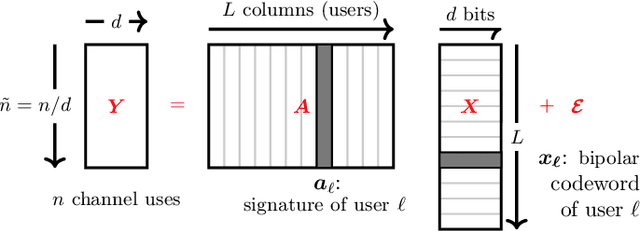

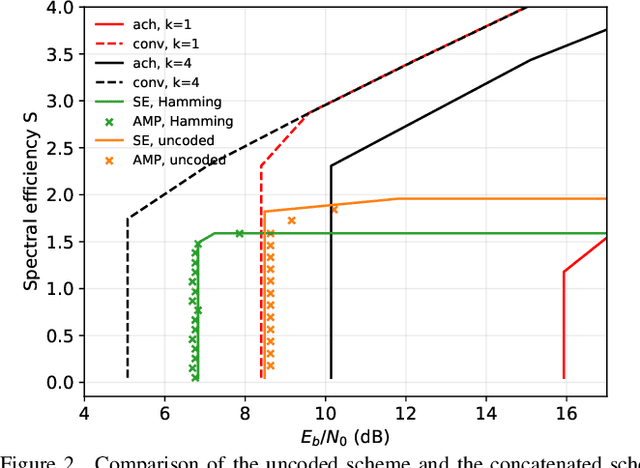

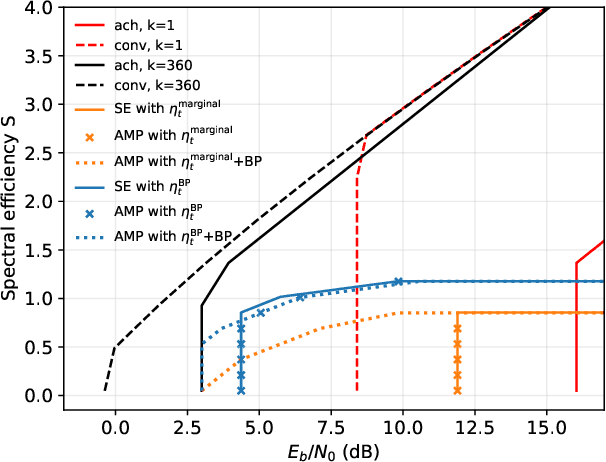

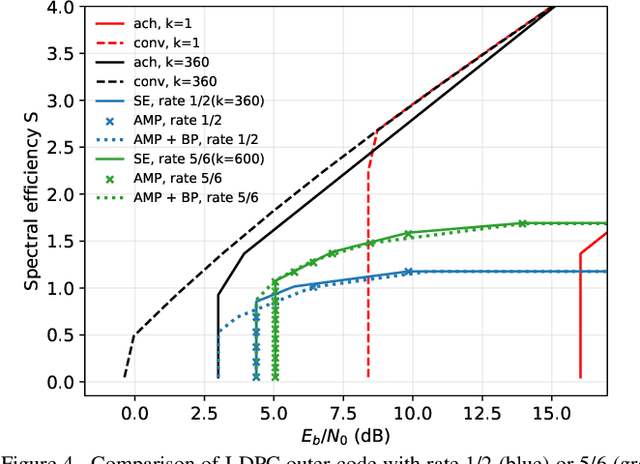

Abstract:We consider communication over the Gaussian multiple-access channel in the regime where the number of users grows linearly with the codelength. We investigate coded CDMA schemes where each user's information is encoded via a linear code before being modulated with a signature sequence. We propose an efficient approximate message passing (AMP) decoder that can be tailored to the structure of the linear code, and provide an exact asymptotic characterization of its performance. Based on this result, we consider a decoder that integrates AMP and belief propagation and characterize the tradeoff between spectral efficiency and signal-to-noise ratio, for a given target error rate. Simulation results are provided to demonstrate the benefits of the concatenated scheme at finite lengths.

Approximate Message Passing with Rigorous Guarantees for Pooled Data and Quantitative Group Testing

Sep 27, 2023

Abstract:In the pooled data problem, the goal is to identify the categories associated with a large collection of items via a sequence of pooled tests. Each pooled test reveals the number of items of each category within the pool. We study an approximate message passing (AMP) algorithm for estimating the categories and rigorously characterize its performance, in both the noiseless and noisy settings. For the noiseless setting, we show that the AMP algorithm is equivalent to one recently proposed by El Alaoui et al. Our results provide a rigorous version of their performance guarantees, previously obtained via non-rigorous techniques. For the case of pooled data with two categories, known as quantitative group testing (QGT), we use the AMP guarantees to compute precise limiting values of the false positive rate and the false negative rate. Though the pooled data problem and QGT are both instances of estimation in a linear model, existing AMP theory cannot be directly applied since the design matrices are binary valued. The key technical ingredient in our result is a rigorous analysis of AMP for generalized linear models defined via generalized white noise design matrices. This result, established using a recent universality result of Wang et al., is of independent interest. Our theoretical results are validated by numerical simulations. For comparison, we propose estimators based on convex relaxation and iterative thresholding, without providing theoretical guarantees. Our simulations indicate that AMP outperforms the convex programming estimator for a range of QGT scenarios, but the convex program performs better for pooled data with three categories.

Bayes-Optimal Estimation in Generalized Linear Models via Spatial Coupling

Sep 15, 2023

Abstract:We consider the problem of signal estimation in a generalized linear model (GLM). GLMs include many canonical problems in statistical estimation, such as linear regression, phase retrieval, and 1-bit compressed sensing. Recent work has precisely characterized the asymptotic minimum mean-squared error (MMSE) for GLMs with i.i.d. Gaussian sensing matrices. However, in many models there is a significant gap between the MMSE and the performance of the best known feasible estimators. In this work, we address this issue by considering GLMs defined via spatially coupled sensing matrices. We propose an efficient approximate message passing (AMP) algorithm for estimation and prove that with a simple choice of spatially coupled design, the MSE of a carefully tuned AMP estimator approaches the asymptotic MMSE in the high-dimensional limit. To prove the result, we first rigorously characterize the asymptotic performance of AMP for a GLM with a generic spatially coupled design. This characterization is in terms of a deterministic recursion ('state evolution') that depends on the parameters defining the spatial coupling. Then, using a simple spatially coupled design and judicious choice of functions defining the AMP, we analyze the fixed points of the resulting state evolution and show that it achieves the asymptotic MMSE. Numerical results for phase retrieval and rectified linear regression show that spatially coupled designs can yield substantially lower MSE than i.i.d. Gaussian designs at finite dimensions when used with AMP algorithms.

Spectral Estimators for Structured Generalized Linear Models via Approximate Message Passing

Aug 28, 2023

Abstract:We consider the problem of parameter estimation from observations given by a generalized linear model. Spectral methods are a simple yet effective approach for estimation: they estimate the parameter via the principal eigenvector of a matrix obtained by suitably preprocessing the observations. Despite their wide use, a rigorous performance characterization of spectral estimators, as well as a principled way to preprocess the data, is available only for unstructured (i.e., i.i.d. Gaussian and Haar) designs. In contrast, real-world design matrices are highly structured and exhibit non-trivial correlations. To address this problem, we consider correlated Gaussian designs which capture the anisotropic nature of the measurements via a feature covariance matrix $\Sigma$. Our main result is a precise asymptotic characterization of the performance of spectral estimators in this setting. This then allows us to identify the optimal preprocessing that minimizes the number of samples needed to meaningfully estimate the parameter. Remarkably, such an optimal spectral estimator depends on $\Sigma$ only through its normalized trace, which can be consistently estimated from the data. Numerical results demonstrate the advantage of our principled approach over previous heuristic methods. Existing analyses of spectral estimators crucially rely on the rotational invariance of the design matrix. This key assumption does not hold for correlated Gaussian designs. To circumvent this difficulty, we develop a novel strategy based on designing and analyzing an approximate message passing algorithm whose fixed point coincides with the desired spectral estimator. Our methodology is general, and opens the way to the precise characterization of spiked matrices and of the corresponding spectral methods in a variety of settings.

Mixed Regression via Approximate Message Passing

Apr 05, 2023

Abstract:We study the problem of regression in a generalized linear model (GLM) with multiple signals and latent variables. This model, which we call a matrix GLM, covers many widely studied problems in statistical learning, including mixed linear regression, max-affine regression, and mixture-of-experts. In mixed linear regression, each observation comes from one of $L$ signal vectors (regressors), but we do not know which one; in max-affine regression, each observation comes from the maximum of $L$ affine functions, each defined via a different signal vector. The goal in all these problems is to estimate the signals, and possibly some of the latent variables, from the observations. We propose a novel approximate message passing (AMP) algorithm for estimation in a matrix GLM and rigorously characterize its performance in the high-dimensional limit. This characterization is in terms of a state evolution recursion, which allows us to precisely compute performance measures such as the asymptotic mean-squared error. The state evolution characterization can be used to tailor the AMP algorithm to take advantage of any structural information known about the signals. Using state evolution, we derive an optimal choice of AMP 'denoising' functions that minimizes the estimation error in each iteration. The theoretical results are validated by numerical simulations for mixed linear regression, max-affine regression, and mixture-of-experts. For max-affine regression, we propose an algorithm that combines AMP with expectation-maximization to estimate intercepts of the model along with the signals. The numerical results show that AMP significantly outperforms other estimators for mixed linear regression and max-affine regression in most parameter regimes.
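The two observation models named in this abstract can be illustrated with a short data-generating sketch. It assumes standard Gaussian covariates, a uniformly random latent label, and additive Gaussian noise. These choices, along with the function names `mixed_linear_regression` and `max_affine_regression`, are assumptions made for illustration and are not taken from the paper. The sketch shows only how observations arise, not the AMP estimator.

```python
import random

def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))

def mixed_linear_regression(signals, num_samples, noise_std=0.1, seed=0):
    """Each observation uses one of the L signal vectors, chosen at random.

    Returns a list of (x, y, label); the label is the latent variable that
    an estimator would not get to see.
    """
    rng = random.Random(seed)
    dim = len(signals[0])
    rows = []
    for _ in range(num_samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        label = rng.randrange(len(signals))  # assumed uniform over signals
        y = dot(x, signals[label]) + rng.gauss(0.0, noise_std)
        rows.append((x, y, label))
    return rows

def max_affine_regression(signals, intercepts, num_samples, noise_std=0.1, seed=0):
    """Each observation is the maximum of L affine functions of x, plus noise."""
    rng = random.Random(seed)
    dim = len(signals[0])
    rows = []
    for _ in range(num_samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        y = max(dot(x, b) + c for b, c in zip(signals, intercepts))
        y += rng.gauss(0.0, noise_std)
        rows.append((x, y))
    return rows

# Two signal vectors (L = 2) in dimension 3.
signals = [[1.0, 0.0, -1.0], [0.5, 2.0, 0.0]]
mixed_data = mixed_linear_regression(signals, num_samples=5)
max_affine_data = max_affine_regression(signals, [0.0, 1.0], num_samples=5)
```

In both models the regressor that generated a given observation is unobserved, which is what separates these problems from ordinary linear regression.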

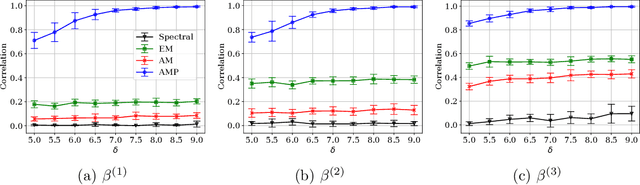

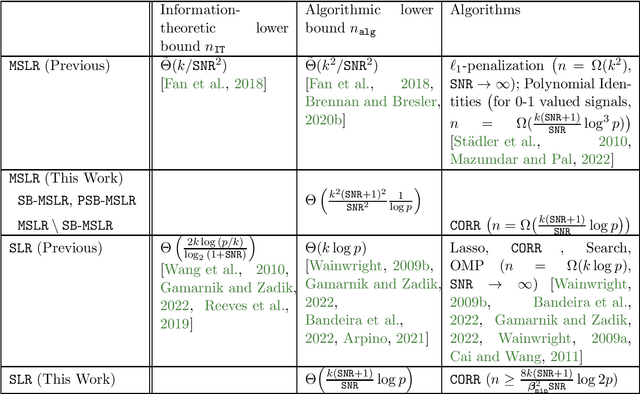

Statistical-Computational Tradeoffs in Mixed Sparse Linear Regression

Mar 03, 2023

Abstract:We consider the problem of mixed sparse linear regression with two components, where two real $k$-sparse signals $\beta_1, \beta_2$ are to be recovered from $n$ unlabelled noisy linear measurements. The sparsity is allowed to be sublinear in the dimension, and additive noise is assumed to be independent Gaussian with variance $\sigma^2$. Prior work has shown that the problem suffers from a $\frac{k}{SNR^2}$-to-$\frac{k^2}{SNR^2}$ statistical-to-computational gap, resembling other computationally challenging high-dimensional inference problems such as Sparse PCA and Robust Sparse Mean Estimation; here $SNR$ is the signal-to-noise ratio. We establish the existence of a more extensive computational barrier for this problem through the method of low-degree polynomials, but show that the problem is computationally hard only in a very narrow symmetric parameter regime. We identify a smooth information-computation tradeoff between the sample complexity $n$ and runtime for any randomized algorithm in this hard regime. Via a simple reduction, this provides novel rigorous evidence for the existence of a computational barrier to solving exact support recovery in sparse phase retrieval with sample complexity $n = \tilde{o}(k^2)$. Our second contribution is to analyze a simple thresholding algorithm which, outside of the narrow regime where the problem is hard, solves the associated mixed regression detection problem in $O(np)$ time with square-root the number of samples and matches the sample complexity required for (non-mixed) sparse linear regression; this allows the recovery problem to be subsequently solved by state-of-the-art techniques from the dense case. As a special case of our results, we show that this simple algorithm is order-optimal among a large family of algorithms in solving exact signed support recovery in sparse linear regression.
