Rafael C. Cardoso

University of Aberdeen

Proceedings of the Second Workshop on Agents and Robots for reliable Engineered Autonomy

Jul 19, 2022

Abstract: This volume contains the proceedings of the Second Workshop on Agents and Robots for reliable Engineered Autonomy (AREA 2022), co-located with the 31st International Joint Conference on Artificial Intelligence and the 25th European Conference on Artificial Intelligence (IJCAI-ECAI 2022). The AREA workshop brings together researchers from the autonomous agents, software engineering and robotics communities, as combining knowledge from these research areas may lead to innovative approaches that solve complex problems related to the verification and validation of autonomous robotic systems.

Towards Partial Monitoring: It is Always too Soon to Give Up

Oct 25, 2021

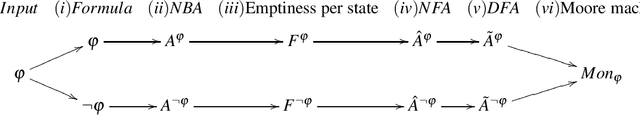

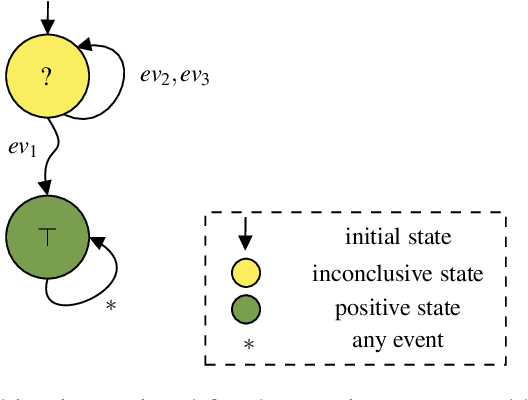

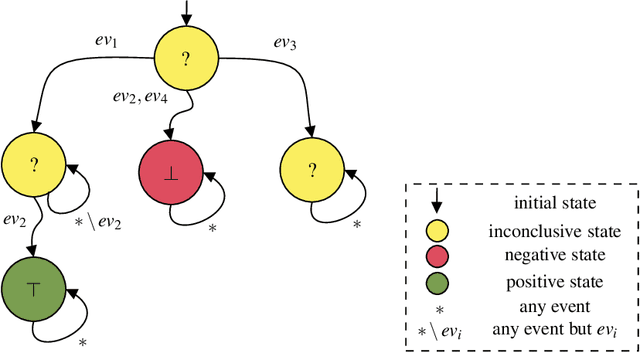

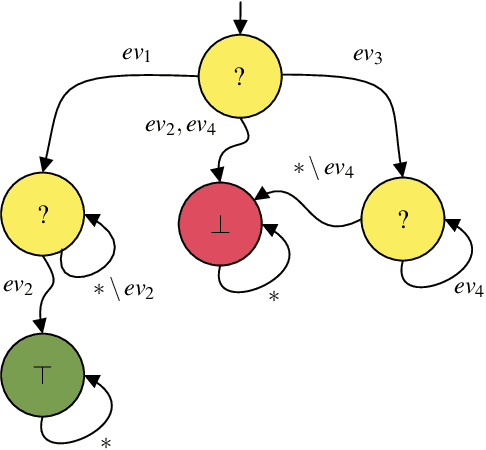

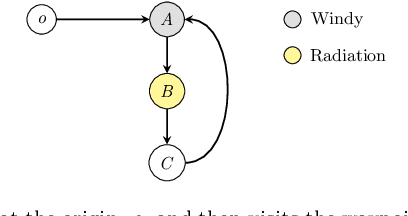

Abstract: Runtime Verification is a lightweight formal verification technique. It is used to verify at runtime whether the system under analysis behaves as expected. The expected behaviour is usually formally specified by means of properties, which are used to automatically synthesise monitors. A monitor is a device that, given a sequence of events representing a system execution, returns a verdict symbolising the satisfaction or violation of the formal property. Properties that can be verified at runtime by a monitor are called monitorable; those that cannot are called non-monitorable. In this paper, we revise the notion of monitorability from a practical perspective, showing how non-monitorable properties can still be used to generate partial monitors, which check the properties partially. Finally, we present the implications from both theoretical and practical perspectives.

* In Proceedings FMAS 2021, arXiv:2110.11527
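To make the monitorable/non-monitorable distinction concrete, here is a minimal Python sketch of three-valued monitors (true, false, inconclusive) in the style of LTL3 semantics. The property helpers (`never`, `eventually`, `infinitely_often`) and the event strings are illustrative assumptions; this is not the partial-monitor construction from the paper. It only shows why a property such as G F p ("p holds infinitely often") is non-monitorable: no finite trace can ever move its monitor out of the inconclusive verdict.

```python
from enum import Enum
from typing import Callable, Iterable

class Verdict(Enum):
    TRUE = "true"      # every continuation of the trace satisfies the property
    FALSE = "false"    # every continuation of the trace violates the property
    UNKNOWN = "?"      # the prefix seen so far does not settle the property

Monitor = Callable[[Iterable[str]], Verdict]

def never(bad: str) -> Monitor:
    """Safety property G !bad: a single 'bad' event is a definitive violation."""
    def monitor(trace: Iterable[str]) -> Verdict:
        for event in trace:
            if event == bad:
                return Verdict.FALSE
        return Verdict.UNKNOWN  # no violation yet, but a later 'bad' is still possible
    return monitor

def eventually(good: str) -> Monitor:
    """Co-safety property F good: a single 'good' event is a definitive satisfaction."""
    def monitor(trace: Iterable[str]) -> Verdict:
        for event in trace:
            if event == good:
                return Verdict.TRUE
        return Verdict.UNKNOWN  # 'good' may still occur later
    return monitor

def infinitely_often(p: str) -> Monitor:
    """G F p: every finite prefix can be extended to satisfy or violate it,
    so the only sound verdict on any finite trace is UNKNOWN (non-monitorable)."""
    def monitor(trace: Iterable[str]) -> Verdict:
        for _ in trace:
            pass  # reading more events never changes the verdict
        return Verdict.UNKNOWN
    return monitor

if __name__ == "__main__":
    trace = ["start", "move", "error", "move"]
    print(never("error")(trace))            # Verdict.FALSE
    print(eventually("done")(trace))        # Verdict.UNKNOWN
    print(infinitely_often("move")(trace))  # Verdict.UNKNOWN
```

The first two monitors can reach a final verdict on some finite trace, which is what makes their properties monitorable; the third never can.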

Towards Compositional Verification for Modular Robotic Systems

Dec 03, 2020

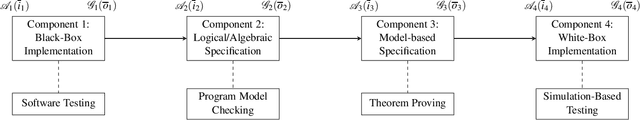

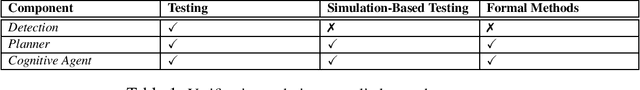

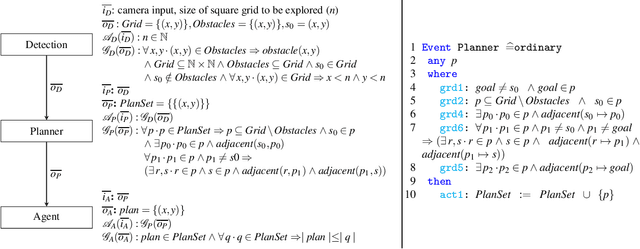

Abstract: Software engineering of modular robotic systems is a challenging task; moreover, verifying that the developed components all behave as they should, both individually and as a whole, presents its own unique set of challenges. In particular, distinct components in a modular robotic system often require different verification techniques to ensure that they behave as expected. Ensuring whole-system consistency when individual components are verified using a variety of techniques and formalisms is difficult. This paper discusses how to use compositional verification to integrate the various verification techniques applied to modular robotic software, using a First-Order Logic (FOL) contract that captures each component's assumptions and guarantees. These contracts can then be used to guide the verification of the individual components, whether by testing or by a formal method. We provide an illustrative example of an autonomous robot used in remote inspection. We also discuss a way of defining confidence in the verification associated with each component.

* In Proceedings FMAS 2020, arXiv:2012.01176
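The core compositional obligation in assume-guarantee reasoning is that an upstream component's guarantee must discharge the downstream component's assumption. The sketch below illustrates that check in Python under loudly stated assumptions: contracts are plain Python predicates rather than FOL formulas, the implication is checked by enumerating a small finite domain instead of using a theorem prover, and the components (`planner`, `navigation`, `cautious_navigation`) and state fields are hypothetical, not taken from the paper's inspection-robot example.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Dict, Iterable, List

State = Dict[str, object]
Predicate = Callable[[State], bool]

@dataclass
class Contract:
    name: str
    assume: Predicate     # what the component requires of its inputs
    guarantee: Predicate  # what the component promises about its outputs

def check_link(upstream: Contract, downstream: Contract,
               domain: Iterable[State]) -> List[State]:
    """Return the states where upstream.guarantee holds but downstream.assume fails.

    An empty list means 'guarantee implies assumption' holds on this finite
    domain; it is a bounded check, not a proof over all states."""
    return [s for s in domain if upstream.guarantee(s) and not downstream.assume(s)]

# Hypothetical finite domain: waypoint labels crossed with battery levels (percent).
DOMAIN: List[State] = [{"waypoint": w, "battery": b}
                       for w, b in product(["A", "B", "C", "dock"], [5, 15, 50])]

# Hypothetical planner: only sends known waypoints, and only with >= 15% battery
# unless it is sending the robot to the dock.
planner = Contract(
    "planner",
    assume=lambda s: True,
    guarantee=lambda s: s["waypoint"] in {"A", "B", "dock"}
    and (s["waypoint"] == "dock" or s["battery"] >= 15),
)

# Hypothetical navigation nodes with different battery assumptions.
navigation = Contract(
    "navigation",
    assume=lambda s: s["waypoint"] in {"A", "B", "dock"}
    and (s["waypoint"] == "dock" or s["battery"] >= 10),
    guarantee=lambda s: True,
)
cautious_navigation = Contract(
    "cautious_navigation",
    assume=lambda s: s["waypoint"] in {"A", "B", "dock"}
    and (s["waypoint"] == "dock" or s["battery"] >= 30),
    guarantee=lambda s: True,
)

if __name__ == "__main__":
    print(check_link(planner, navigation, DOMAIN))           # [] : compatible
    print(check_link(planner, cautious_navigation, DOMAIN))  # counterexamples at 15%
```

A non-empty result pinpoints exactly which states break the composition, which is the kind of information that can then guide whether a link is strengthened, tested further, or verified formally.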

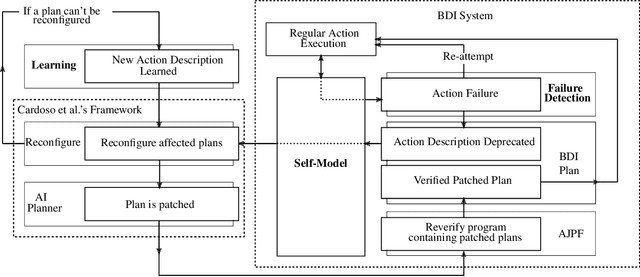

Adaptable and Verifiable BDI Reasoning

Jul 23, 2020

Abstract: Long-term autonomy requires autonomous systems to adapt when their capabilities no longer perform as expected. To achieve this, a system must first be capable of detecting such changes. In this position paper, we describe a system architecture for BDI autonomous agents capable of adapting to changes in a dynamic environment, and outline the required research. Specifically, we describe an agent-maintained self-model with accompanying theories of durative actions and of learning new action descriptions in BDI systems.

* In Proceedings AREA 2020, arXiv:2007.11260
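The abstract notes that adaptation starts with detecting that a capability no longer performs as expected. As a rough illustration only, the Python sketch below keeps a self-model of expected durations for durative actions and flags an execution that overruns its expectation by more than a tolerance. The class names, the duration-only model, and the 25% tolerance are all hypothetical assumptions, not the architecture described in the paper; what the agent does after detection (replanning, learning a new action description) is outside this sketch.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class ActionModel:
    expected_duration: float  # seconds the agent believes the action takes (hypothetical)
    tolerance: float = 0.25   # fractional slack before a deviation is flagged (hypothetical)

@dataclass
class SelfModel:
    actions: Dict[str, ActionModel]
    anomalies: List[str] = field(default_factory=list)

    def observe(self, action: str, duration: float) -> bool:
        """Compare one observed execution with the self-model.

        Returns True (and records the action) when the observed duration
        exceeds the expected duration plus the tolerance."""
        model = self.actions[action]
        limit = model.expected_duration * (1 + model.tolerance)
        if duration > limit:
            self.anomalies.append(action)
            return True
        return False

if __name__ == "__main__":
    me = SelfModel({"move_to_room": ActionModel(expected_duration=10.0)})
    print(me.observe("move_to_room", 11.0))  # False: within the 12.5 s limit
    print(me.observe("move_to_room", 20.0))  # True: the capability has degraded
    print(me.anomalies)                      # ['move_to_room']
```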

Proceedings of the First Workshop on Agents and Robots for reliable Engineered Autonomy

Jul 22, 2020

Abstract: This volume contains the proceedings of the First Workshop on Agents and Robots for reliable Engineered Autonomy (AREA 2020), co-located with the 24th European Conference on Artificial Intelligence (ECAI 2020). AREA brings together researchers from the autonomous agents, software engineering and robotics communities, as combining knowledge from these research areas may lead to innovative approaches that solve complex problems related to the verification and validation of autonomous robotic systems.

Heterogeneous Verification of an Autonomous Curiosity Rover

Jul 20, 2020

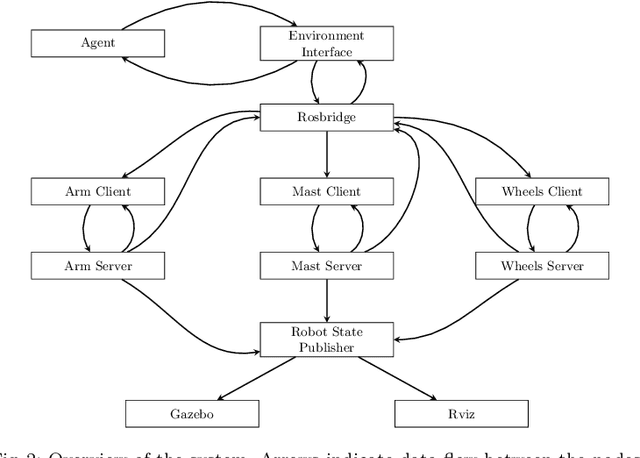

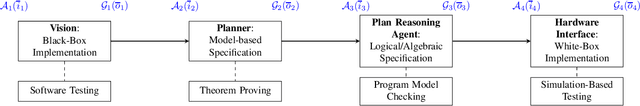

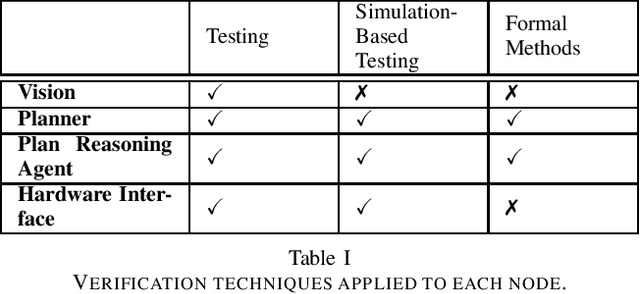

Abstract: The Curiosity rover is one of the most complex systems successfully deployed in a planetary exploration mission to date. It was sent by NASA to explore the surface of Mars and to identify potential signs of life. Although it has limited on-board autonomy, most of its decisions are made by the ground control team. This slows the Curiosity's reaction to its environment because of the communication delays between Earth and Mars: depending on the orbital positions of both planets, a message can take 4 to 24 minutes to travel between them. If the Curiosity were controlled autonomously, it could perform its activities much faster and more flexibly. However, one of the major barriers to wider use of autonomy in such scenarios is the lack of assurance that the autonomous behaviour will work as expected. In this paper, we use a Robot Operating System (ROS) model of the Curiosity, simulated in Gazebo, and add an autonomous agent responsible for high-level decision-making. We then use a mixture of formal and non-formal techniques to verify the distinct system components (ROS nodes). Using heterogeneous verification techniques is essential to provide guarantees about the nodes at different levels of abstraction, and allows us to bring together relevant verification evidence to provide overall assurance.
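The Earth-Mars delay mentioned above is the light travel time over the distance between the two planets at a given moment, and a command-and-response loop pays it twice. The short Python sketch below computes both; the distance passed in is an input you supply (225 million km is used purely as an illustrative value), and the sketch does not attempt to reproduce the range quoted in the abstract.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact by definition of the metre

def one_way_delay_minutes(distance_km: float) -> float:
    """Minutes for a radio signal to cover distance_km in a vacuum."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60

def round_trip_delay_minutes(distance_km: float) -> float:
    """Minutes from sending a command to receiving the rover's reply
    (ignores any processing time on either end)."""
    return 2 * one_way_delay_minutes(distance_km)

if __name__ == "__main__":
    d = 225e6  # illustrative Earth-Mars distance in km
    print(f"one way:    {one_way_delay_minutes(d):.1f} min")     # one way:    12.5 min
    print(f"round trip: {round_trip_delay_minutes(d):.1f} min")  # round trip: 25.0 min
```

A round trip of tens of minutes per decision is what makes on-board autonomy attractive for reactive behaviour.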

Modular Verification of Autonomous Space Robotics

Aug 28, 2019

Abstract: Ensuring that autonomous space robot control software behaves as it should is crucial, particularly as software failure in space often equates to mission failure and could potentially endanger nearby astronauts and costly equipment. To minimise mission failure caused by software errors, we can utilise a variety of tools and techniques to verify that the software behaves as intended. In particular, distinct nodes in a robotic system often require different verification techniques to ensure that they behave as expected. This paper introduces a method for integrating the various verification techniques that are applied to robotic software, via a First-Order Logic (FOL) specification that captures each node's assumptions and guarantees. These FOL specifications are then used to guide the verification of the individual nodes, be it by testing or the use of a formal method. We also outline a way of measuring our confidence in the verification of the entire system in terms of the verification techniques used.
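One way to picture "measuring confidence in terms of the verification techniques used" is sketched below in Python. Everything here is a hypothetical assumption, not the measure defined in the paper: the per-technique weights are arbitrary placeholders, a node is credited with its strongest technique, and the system score uses weakest-link aggregation (the least-verified node bounds the whole).

```python
from typing import Dict, List

# Hypothetical, arbitrarily chosen weights in [0, 1]; not values from the paper.
TECHNIQUE_CONFIDENCE: Dict[str, float] = {
    "model_checking": 0.9,
    "runtime_verification": 0.8,
    "simulation_testing": 0.6,
    "unit_testing": 0.5,
}

def node_confidence(techniques: List[str]) -> float:
    """Confidence in one node: its strongest applied technique (0.0 if unverified)."""
    return max((TECHNIQUE_CONFIDENCE[t] for t in techniques), default=0.0)

def system_confidence(nodes: Dict[str, List[str]]) -> float:
    """Weakest-link aggregation over all nodes; expects at least one node."""
    return min(node_confidence(ts) for ts in nodes.values())

if __name__ == "__main__":
    # Hypothetical node names and technique assignments.
    nodes = {
        "agent": ["model_checking"],
        "arm_controller": ["unit_testing", "simulation_testing"],
        "vision": ["runtime_verification"],
    }
    print(system_confidence(nodes))  # 0.6, limited by arm_controller
```

Other aggregations (for example, weighting nodes by criticality) are equally plausible; the point is only that the score is computed from which technique verified which node.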
