Qing Rao

ADNet: Attention-guided Deformable Convolutional Network for High Dynamic Range Imaging

May 22, 2021

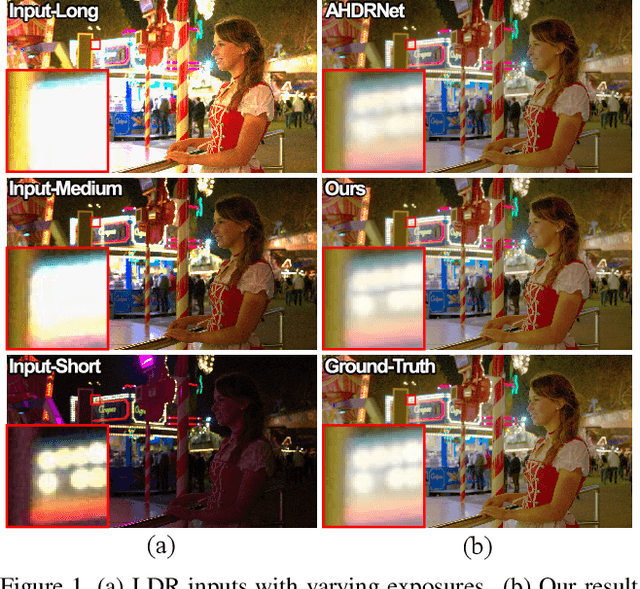

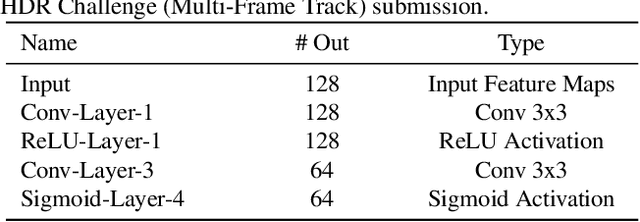

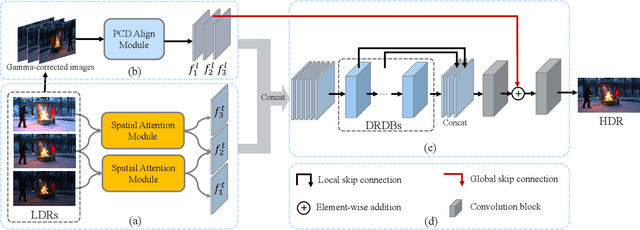

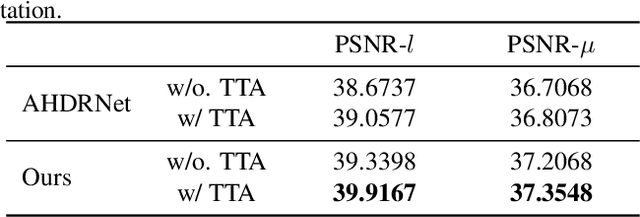

Abstract: In this paper, we present ADNet, an attention-guided deformable convolutional network for hand-held multi-frame high dynamic range (HDR) imaging. This problem poses two difficult challenges: handling saturation and noise properly, and tackling misalignments caused by object motion or camera jitter. To address the former, we adopt a spatial attention module that adaptively selects the most appropriate regions of the differently exposed low dynamic range (LDR) images for fusion. For the latter, we propose to align the gamma-corrected images at the feature level with a Pyramid, Cascading and Deformable (PCD) alignment module. The proposed ADNet achieves state-of-the-art performance compared with previous methods, with a PSNR-$l$ of 39.4471 and a PSNR-$\mu$ of 37.6359 in the NTIRE 2021 Multi-Frame HDR Challenge.
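The abstract mentions gamma-corrected inputs and two metrics, PSNR-$l$ (computed on linear HDR values) and PSNR-$\mu$ (computed after $\mu$-law tonemapping). The sketch below illustrates both ideas under stated assumptions; it is not the authors' code. It assumes the mapping $H = L^{\gamma}/t$ with $\gamma = 2.2$ that is common in multi-exposure HDR pipelines, HDR values normalized to $[0, 1]$, and $\mu = 5000$; the challenge's exact normalization step is not reproduced here. All function names are hypothetical.

```python
import math
from typing import List, Sequence

MU = 5000.0  # assumed mu-law compression parameter (common choice in HDR work)


def ldr_to_linear(ldr: Sequence[float], exposure_time: float, gamma: float = 2.2) -> List[float]:
    """Assumed gamma correction: map LDR values in [0, 1] to the linear domain as x**gamma / t."""
    return [(x ** gamma) / exposure_time for x in ldr]


def mu_law(hdr: Sequence[float], mu: float = MU) -> List[float]:
    """mu-law tonemapping T(H) = log(1 + mu*H) / log(1 + mu), assuming H is normalized to [0, 1]."""
    return [math.log1p(mu * h) / math.log1p(mu) for h in hdr]


def psnr(pred: Sequence[float], target: Sequence[float], peak: float = 1.0) -> float:
    """Standard PSNR in dB; returns +inf for identical inputs."""
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0:
        return float("inf")
    return 10.0 * math.log10(peak ** 2 / mse)


def psnr_l(pred: Sequence[float], target: Sequence[float]) -> float:
    """PSNR on linear HDR values."""
    return psnr(pred, target)


def psnr_mu(pred: Sequence[float], target: Sequence[float]) -> float:
    """PSNR after mu-law tonemapping both images."""
    return psnr(mu_law(pred), mu_law(target))
```

Because $\mu$-law compression stretches dark values, the same absolute error in a dark region costs more under PSNR-$\mu$ than under PSNR-$l$; for example, `psnr_mu([0.01], [0.02])` is lower than `psnr_l([0.01], [0.02])`.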

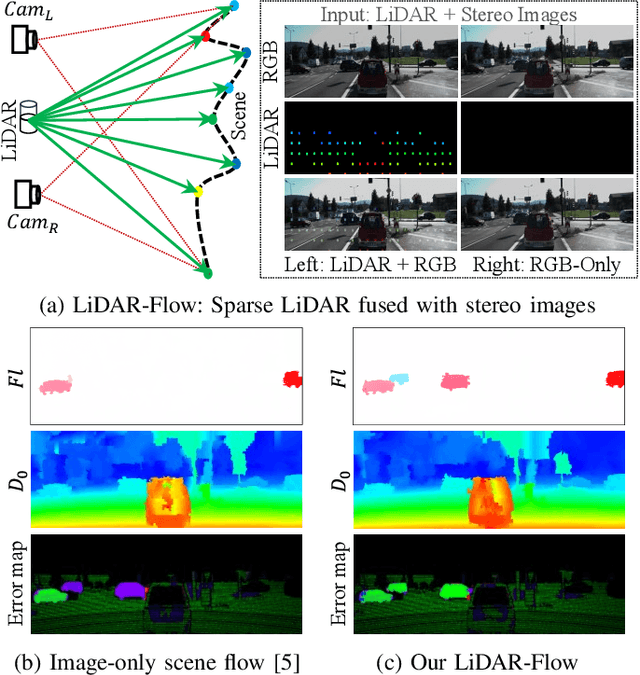

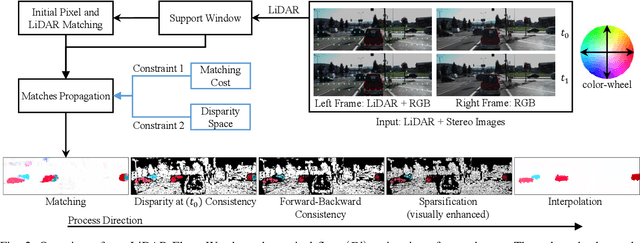

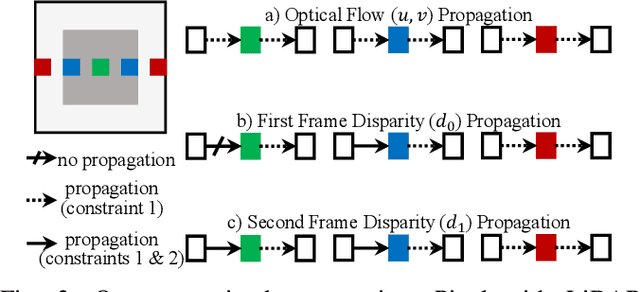

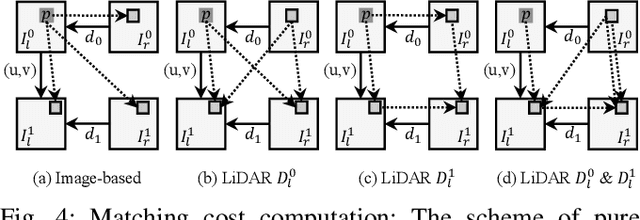

LiDAR-Flow: Dense Scene Flow Estimation from Sparse LiDAR and Stereo Images

Oct 31, 2019

Abstract: We propose a new approach, called LiDAR-Flow, to robustly estimate dense scene flow by fusing sparse LiDAR with stereo images. We take advantage of the high accuracy of LiDAR to compensate for the lack of information in some regions of the stereo images caused by textureless objects, shadows, poor lighting conditions and other factors. Additionally, this fusion can overcome the difficulty of matching unstructured 3D points between LiDAR-only scans. Our LiDAR-Flow approach consists of three main steps, each of which exploits LiDAR measurements. First, we build strong seeds from LiDAR to enhance the robustness of matches between the stereo images; the image-based part then searches for motion matches and increases the density of the scene flow estimate. Then, a consistency check uses the LiDAR seeds to remove possible mismatches. Finally, LiDAR measurements constrain an edge-preserving interpolation method that fills the remaining gaps. In our evaluation, we investigate the individual processing steps of LiDAR-Flow and demonstrate its superior performance compared to an image-only approach.
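The first two steps (building seeds from LiDAR and checking image matches against them) can be illustrated with a simplified sketch. This is not the paper's procedure: it covers only the stereo-disparity part of scene flow, assumes rectified stereo, LiDAR points already transformed into the left-camera frame with $z > 0$, and the pinhole relations $u = f_x x / z + c_x$ and $d = f_x B / z$. The names `Match`, `lidar_point_to_seed` and `lidar_consistency_check`, and the thresholds `radius` and `max_disp_error`, are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Match:
    u: float          # pixel column in the left image
    v: float          # pixel row in the left image
    disparity: float  # stereo disparity in pixels


def lidar_point_to_seed(x: float, y: float, z: float, fx: float, fy: float,
                        cx: float, cy: float, baseline: float) -> Match:
    """Project a LiDAR point (left-camera frame, z > 0) to a disparity seed."""
    return Match(u=fx * x / z + cx, v=fy * y / z + cy, disparity=fx * baseline / z)


def lidar_consistency_check(matches: Sequence[Match], seeds: Sequence[Match],
                            radius: float = 3.0, max_disp_error: float = 1.0) -> List[Match]:
    """Keep image matches that agree with the nearest LiDAR seed within `radius` pixels.

    Matches with no seed nearby are kept, since sparse LiDAR gives no evidence there.
    """
    kept = []
    for m in matches:
        nearest, best = None, radius
        for s in seeds:
            dist = math.hypot(s.u - m.u, s.v - m.v)
            if dist <= best:
                nearest, best = s, dist
        if nearest is None or abs(nearest.disparity - m.disparity) <= max_disp_error:
            kept.append(m)
    return kept
```

For example, a LiDAR point at depth 10 m on the optical axis with $f_x = 500$ and $B = 0.5$ m yields a seed with disparity 25 px; an image match one pixel away with disparity 30 px would then be rejected as a mismatch, while matches far from any seed pass through unchanged.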
