Pere Barlet-Ros

Barcelona Neural Networking Center, Universitat Politècnica de Catalunya, Spain

From Simulation to Deep Learning: Survey on Network Performance Modeling Approaches

Mar 30, 2026Abstract:Network performance modeling is a field that predates early computer networks and the beginning of the Internet. It aims to predict the traffic performance of packet flows in a given network. Its applications range from network planning and troubleshooting to feeding information to network controllers for configuration optimization. Traditional network performance modeling has relied heavily on Discrete Event Simulation (DES) and analytical methods grounded in mathematical theories such as Queuing Theory and Network Calculus. However, as of late, we have observed a paradigm shift, with attempts to obtain efficient Parallel DES, the surge of Machine Learning models, and their integration with other methodologies in hybrid approaches. This has resulted in a great variety of modeling approaches, each with its strengths and often tailored to specific scenarios or requirements. In this paper, we comprehensively survey the relevant network performance modeling approaches for wired networks over the last decades. With this understanding, we also define a taxonomy of approaches, summarizing our understanding of the state-of-the-art and how both technology and the concerns of the research community evolve over time. Finally, we also consider how these models are evaluated, how their different nature results in different evaluation requirements and goals, and how this may complicate their comparison.

Ordered Topological Deep Learning: a Network Modeling Case Study

Mar 20, 2025

Abstract:Computer networks are the foundation of modern digital infrastructure, facilitating global communication and data exchange. As demand for reliable high-bandwidth connectivity grows, advanced network modeling techniques become increasingly essential to optimize performance and predict network behavior. Traditional modeling methods, such as packet-level simulators and queueing theory, have notable limitations --either being computationally expensive or relying on restrictive assumptions that reduce accuracy. In this context, the deep learning-based RouteNet family of models has recently redefined network modeling by showing an unprecedented cost-performance trade-off. In this work, we revisit RouteNet's sophisticated design and uncover its hidden connection to Topological Deep Learning (TDL), an emerging field that models higher-order interactions beyond standard graph-based methods. We demonstrate that, although originally formulated as a heterogeneous Graph Neural Network, RouteNet serves as the first instantiation of a new form of TDL. More specifically, this paper presents OrdGCCN, a novel TDL framework that introduces the notion of ordered neighbors in arbitrary discrete topological spaces, and shows that RouteNet's architecture can be naturally described as an ordered topological neural network. To the best of our knowledge, this marks the first successful real-world application of state-of-the-art TDL principles --which we confirm through extensive testbed experiments--, laying the foundation for the next generation of ordered TDL-driven applications.

RouteNet-Gauss: Hardware-Enhanced Network Modeling with Machine Learning

Jan 15, 2025

Abstract:Network simulation is pivotal in network modeling, assisting with tasks ranging from capacity planning to performance estimation. Traditional approaches such as Discrete Event Simulation (DES) face limitations in terms of computational cost and accuracy. This paper introduces RouteNet-Gauss, a novel integration of a testbed network with a Machine Learning (ML) model to address these challenges. By using the testbed as a hardware accelerator, RouteNet-Gauss generates training datasets rapidly and simulates network scenarios with high fidelity to real-world conditions. Experimental results show that RouteNet-Gauss significantly reduces prediction errors by up to 95% and achieves a 488x speedup in inference time compared to state-of-the-art DES-based methods. RouteNet-Gauss's modular architecture is dynamically constructed based on the specific characteristics of the network scenario, such as topology and routing. This enables it to understand and generalize to different network configurations beyond those seen during training, including networks up to 10x larger. Additionally, it supports Temporal Aggregated Performance Estimation (TAPE), providing configurable temporal granularity and maintaining high accuracy in flow performance metrics. This approach shows promise in improving both simulation efficiency and accuracy, offering a valuable tool for network operators.

Towards a graph-based foundation model for network traffic analysis

Sep 12, 2024

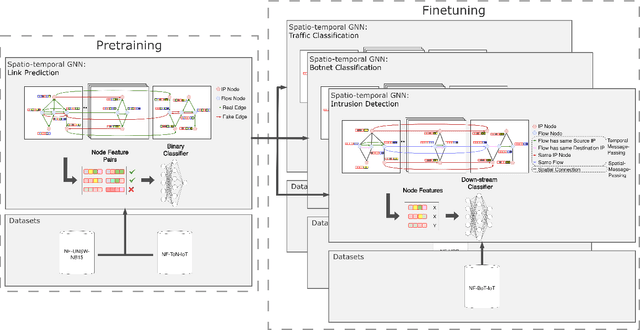

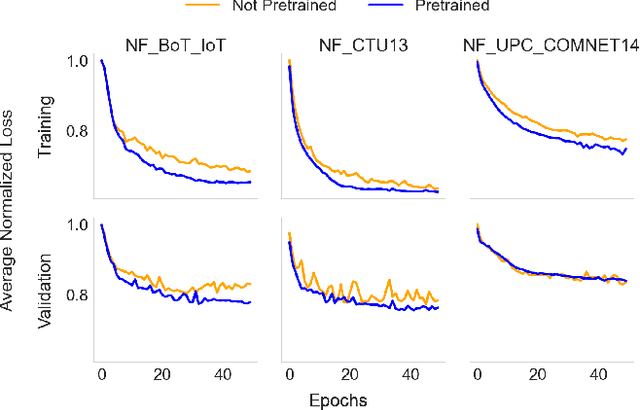

Abstract:Foundation models have shown great promise in various fields of study. A potential application of such models is in computer network traffic analysis, where these models can grasp the complexities of network traffic dynamics and adapt to any specific task or network environment with minimal fine-tuning. Previous approaches have used tokenized hex-level packet data and the model architecture of large language transformer models. We propose a new, efficient graph-based alternative at the flow-level. Our approach represents network traffic as a dynamic spatio-temporal graph, employing a self-supervised link prediction pretraining task to capture the spatial and temporal dynamics in this network graph framework. To evaluate the effectiveness of our approach, we conduct a few-shot learning experiment for three distinct downstream network tasks: intrusion detection, traffic classification, and botnet classification. Models finetuned from our pretrained base achieve an average performance increase of 6.87\% over training from scratch, demonstrating their ability to effectively learn general network traffic dynamics during pretraining. This success suggests the potential for a large-scale version to serve as an operational foundational model.

PPT-GNN: A Practical Pre-Trained Spatio-Temporal Graph Neural Network for Network Security

Jun 19, 2024

Abstract:Recent works have demonstrated the potential of Graph Neural Networks (GNN) for network intrusion detection. Despite their advantages, a significant gap persists between real-world scenarios, where detection speed is critical, and existing proposals, which operate on large graphs representing several hours of traffic. This gap results in unrealistic operational conditions and impractical detection delays. Moreover, existing models do not generalize well across different networks, hampering their deployment in production environments. To address these issues, we introduce PPTGNN, a practical spatio-temporal GNN for intrusion detection. PPTGNN enables near real-time predictions, while better capturing the spatio-temporal dynamics of network attacks. PPTGNN employs self-supervised pre-training for improved performance and reduced dependency on labeled data. We evaluate PPTGNN on three public datasets and show that it significantly outperforms state-of-the-art models, such as E-ResGAT and E-GraphSAGE, with an average accuracy improvement of 10.38%. Finally, we show that a pre-trained PPTGNN can easily be fine-tuned to unseen networks with minimal labeled examples. This highlights the potential of PPTGNN as a general, large-scale pre-trained model that can effectively operate in diverse network environments.

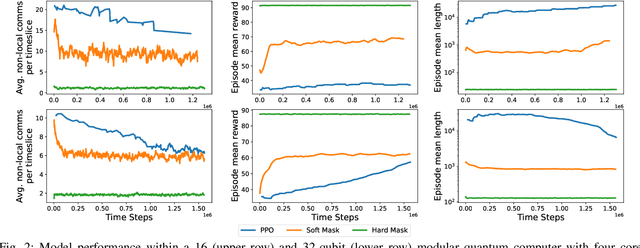

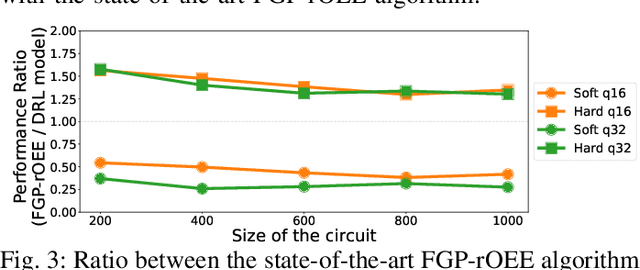

Circuit Partitioning for Multi-Core Quantum Architectures with Deep Reinforcement Learning

Jan 31, 2024

Abstract:Quantum computing holds immense potential for solving classically intractable problems by leveraging the unique properties of quantum mechanics. The scalability of quantum architectures remains a significant challenge. Multi-core quantum architectures are proposed to solve the scalability problem, arising a new set of challenges in hardware, communications and compilation, among others. One of these challenges is to adapt a quantum algorithm to fit within the different cores of the quantum computer. This paper presents a novel approach for circuit partitioning using Deep Reinforcement Learning, contributing to the advancement of both quantum computing and graph partitioning. This work is the first step in integrating Deep Reinforcement Learning techniques into Quantum Circuit Mapping, opening the door to a new paradigm of solutions to such problems.

Detecting Contextual Network Anomalies with Graph Neural Networks

Dec 11, 2023

Abstract:Detecting anomalies on network traffic is a complex task due to the massive amount of traffic flows in today's networks, as well as the highly-dynamic nature of traffic over time. In this paper, we propose the use of Graph Neural Networks (GNN) for network traffic anomaly detection. We formulate the problem as contextual anomaly detection on network traffic measurements, and propose a custom GNN-based solution that detects traffic anomalies on origin-destination flows. In our evaluation, we use real-world data from Abilene (6 months), and make a comparison with other widely used methods for the same task (PCA, EWMA, RNN). The results show that the anomalies detected by our solution are quite complementary to those captured by the baselines (with a max. of 36.33% overlapping anomalies for PCA). Moreover, we manually inspect the anomalies detected by our method, and find that a large portion of them can be visually validated by a network expert (64% with high confidence, 18% with mid confidence, 18% normal traffic). Lastly, we analyze the characteristics of the anomalies through two paradigmatic cases that are quite representative of the bulk of anomalies.

Building a Graph-based Deep Learning network model from captured traffic traces

Oct 18, 2023

Abstract:Currently the state of the art network models are based or depend on Discrete Event Simulation (DES). While DES is highly accurate, it is also computationally costly and cumbersome to parallelize, making it unpractical to simulate high performance networks. Additionally, simulated scenarios fail to capture all of the complexities present in real network scenarios. While there exists network models based on Machine Learning (ML) techniques to minimize these issues, these models are also trained with simulated data and hence vulnerable to the same pitfalls. Consequently, the Graph Neural Networking Challenge 2023 introduces a dataset of captured traffic traces that can be used to build a ML-based network model without these limitations. In this paper we propose a Graph Neural Network (GNN)-based solution specifically designed to better capture the complexities of real network scenarios. This is done through a novel encoding method to capture information from the sequence of captured packets, and an improved message passing algorithm to better represent the dependencies present in physical networks. We show that the proposed solution it is able to learn and generalize to unseen captured network scenarios.

Topological Graph Signal Compression

Aug 21, 2023Abstract:Recently emerged Topological Deep Learning (TDL) methods aim to extend current Graph Neural Networks (GNN) by naturally processing higher-order interactions, going beyond the pairwise relations and local neighborhoods defined by graph representations. In this paper we propose a novel TDL-based method for compressing signals over graphs, consisting in two main steps: first, disjoint sets of higher-order structures are inferred based on the original signal --by clustering $N$ datapoints into $K\ll N$ collections; then, a topological-inspired message passing gets a compressed representation of the signal within those multi-element sets. Our results show that our framework improves both standard GNN and feed-forward architectures in compressing temporal link-based signals from two real-word Internet Service Provider Networks' datasets --from $30\%$ up to $90\%$ better reconstruction errors across all evaluation scenarios--, suggesting that it better captures and exploits spatial and temporal correlations over the whole graph-based network structure.

GraphCC: A Practical Graph Learning-based Approach to Congestion Control in Datacenters

Aug 09, 2023

Abstract:Congestion Control (CC) plays a fundamental role in optimizing traffic in Data Center Networks (DCN). Currently, DCNs mainly implement two main CC protocols: DCTCP and DCQCN. Both protocols -- and their main variants -- are based on Explicit Congestion Notification (ECN), where intermediate switches mark packets when they detect congestion. The ECN configuration is thus a crucial aspect on the performance of CC protocols. Nowadays, network experts set static ECN parameters carefully selected to optimize the average network performance. However, today's high-speed DCNs experience quick and abrupt changes that severely change the network state (e.g., dynamic traffic workloads, incast events, failures). This leads to under-utilization and sub-optimal performance. This paper presents GraphCC, a novel Machine Learning-based framework for in-network CC optimization. Our distributed solution relies on a novel combination of Multi-agent Reinforcement Learning (MARL) and Graph Neural Networks (GNN), and it is compatible with widely deployed ECN-based CC protocols. GraphCC deploys distributed agents on switches that communicate with their neighbors to cooperate and optimize the global ECN configuration. In our evaluation, we test the performance of GraphCC under a wide variety of scenarios, focusing on the capability of this solution to adapt to new scenarios unseen during training (e.g., new traffic workloads, failures, upgrades). We compare GraphCC with a state-of-the-art MARL-based solution for ECN tuning -- ACC -- and observe that our proposed solution outperforms the state-of-the-art baseline in all of the evaluation scenarios, showing improvements up to $20\%$ in Flow Completion Time as well as significant reductions in buffer occupancy ($38.0-85.7\%$).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge