Ismael Castell-Uroz

Towards a graph-based foundation model for network traffic analysis

Sep 12, 2024

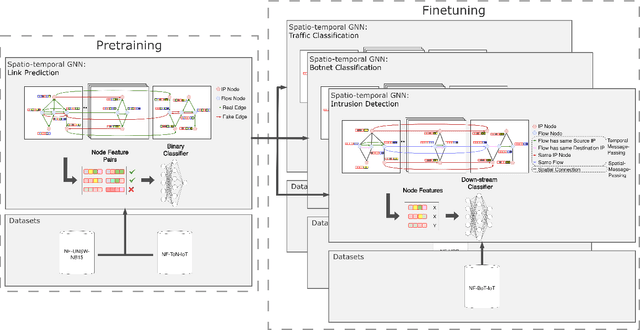

Abstract:Foundation models have shown great promise in various fields of study. A potential application of such models is in computer network traffic analysis, where these models can grasp the complexities of network traffic dynamics and adapt to any specific task or network environment with minimal fine-tuning. Previous approaches have used tokenized hex-level packet data and the model architecture of large language transformer models. We propose a new, efficient graph-based alternative at the flow-level. Our approach represents network traffic as a dynamic spatio-temporal graph, employing a self-supervised link prediction pretraining task to capture the spatial and temporal dynamics in this network graph framework. To evaluate the effectiveness of our approach, we conduct a few-shot learning experiment for three distinct downstream network tasks: intrusion detection, traffic classification, and botnet classification. Models finetuned from our pretrained base achieve an average performance increase of 6.87\% over training from scratch, demonstrating their ability to effectively learn general network traffic dynamics during pretraining. This success suggests the potential for a large-scale version to serve as an operational foundational model.

PPT-GNN: A Practical Pre-Trained Spatio-Temporal Graph Neural Network for Network Security

Jun 19, 2024

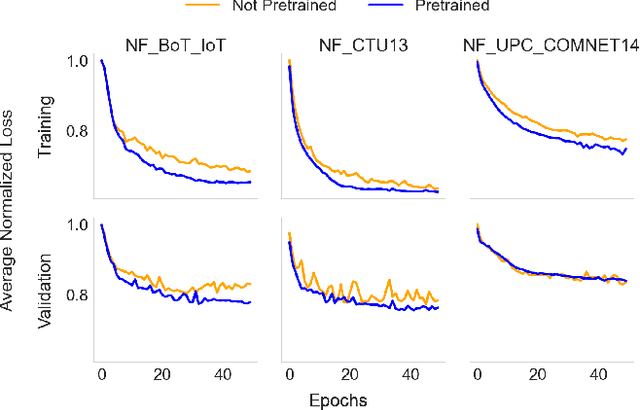

Abstract:Recent works have demonstrated the potential of Graph Neural Networks (GNN) for network intrusion detection. Despite their advantages, a significant gap persists between real-world scenarios, where detection speed is critical, and existing proposals, which operate on large graphs representing several hours of traffic. This gap results in unrealistic operational conditions and impractical detection delays. Moreover, existing models do not generalize well across different networks, hampering their deployment in production environments. To address these issues, we introduce PPTGNN, a practical spatio-temporal GNN for intrusion detection. PPTGNN enables near real-time predictions, while better capturing the spatio-temporal dynamics of network attacks. PPTGNN employs self-supervised pre-training for improved performance and reduced dependency on labeled data. We evaluate PPTGNN on three public datasets and show that it significantly outperforms state-of-the-art models, such as E-ResGAT and E-GraphSAGE, with an average accuracy improvement of 10.38%. Finally, we show that a pre-trained PPTGNN can easily be fine-tuned to unseen networks with minimal labeled examples. This highlights the potential of PPTGNN as a general, large-scale pre-trained model that can effectively operate in diverse network environments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge