Pengcheng Pi

E^2VTS: Energy-Efficient Video Text Spotting from Unmanned Aerial Vehicles

Jun 05, 2022

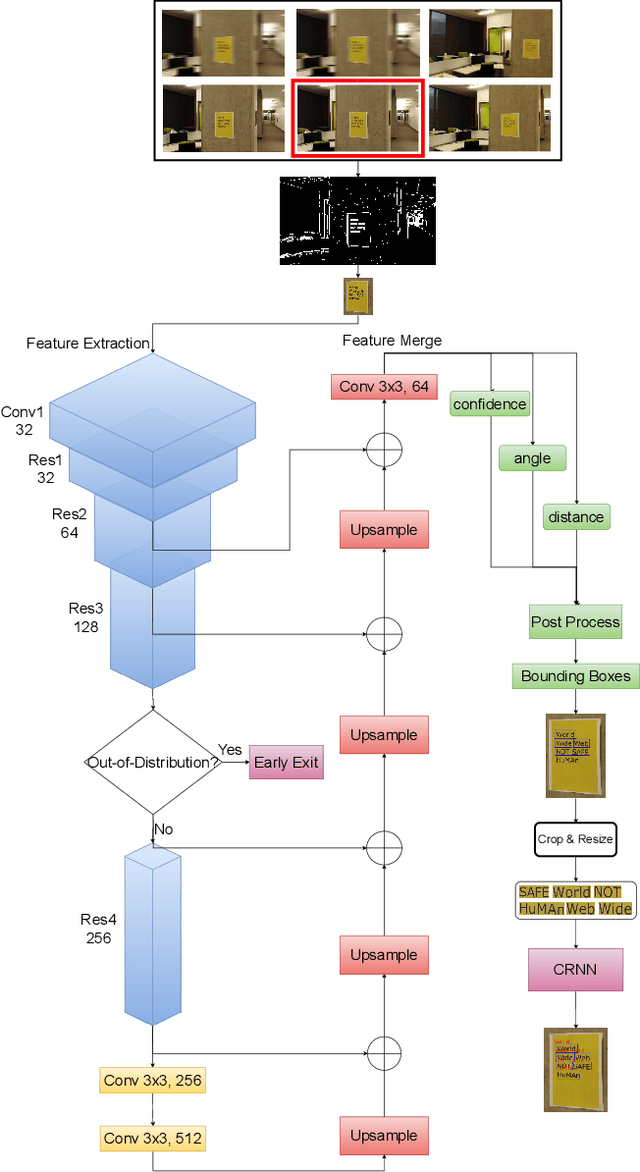

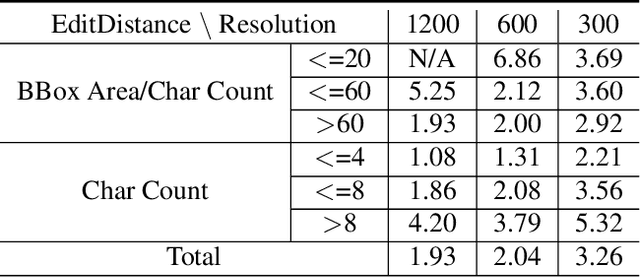

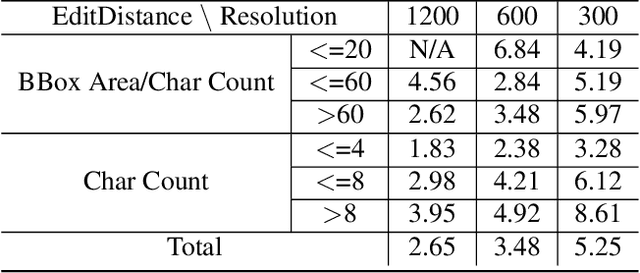

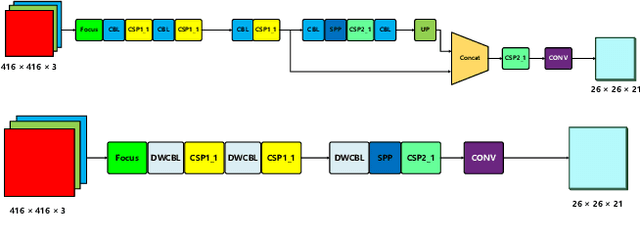

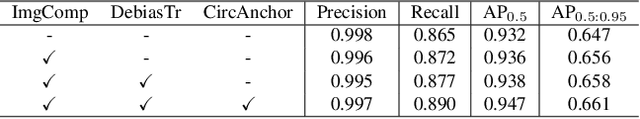

Abstract: Video text spotting based on Unmanned Aerial Vehicles (UAVs) has been extensively used in civil and military domains. The limited battery capacity of UAVs motivates us to develop an energy-efficient video text spotting solution. In this paper, we first revisit RCNN's crop & resize training strategy and empirically find that it outperforms aligned RoI sampling on a real-world video text dataset captured by UAV. To reduce energy consumption, we further propose a multi-stage image processor that takes the redundancy, continuity, and mixed degradation of videos into account. Lastly, the model is pruned and quantized before being deployed on a Raspberry Pi. Our proposed energy-efficient video text spotting solution, dubbed E^2VTS, outperforms all previous methods by achieving a competitive tradeoff between energy efficiency and performance. All our code and pre-trained models are available at https://github.com/wuzhenyusjtu/LPCVC20-VideoTextSpotting.
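To make the crop & resize idea concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the function name `crop_and_resize`, the nested-list image representation, and the nearest-neighbor resampling are all assumptions chosen for illustration. The point is only that each region is cut out of the image and warped to a fixed size, rather than being sampled from a shared feature map.

```python
def crop_and_resize(image, box, out_h, out_w):
    """Crop box=(x0, y0, x1, y1) from a 2D image and resize it.

    Hypothetical helper for illustration only. `image` is a list of rows;
    resizing uses nearest-neighbor sampling to stay dependency-free.
    """
    x0, y0, x1, y1 = box
    # Cut the region of interest out of the full image.
    crop = [row[x0:x1] for row in image[y0:y1]]
    in_h, in_w = len(crop), len(crop[0])
    # Map every output pixel back to its nearest source pixel in the crop.
    return [
        [crop[(r * in_h) // out_h][(c * in_w) // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]


# Usage: a 4x4 image whose pixel values encode their own position.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
patch = crop_and_resize(image, (1, 1, 3, 3), 4, 4)
```

Every region ends up with the same output shape regardless of its original size, which is what lets a fixed-input recognizer consume it.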

E^2TAD: An Energy-Efficient Tracking-based Action Detector

Apr 09, 2022

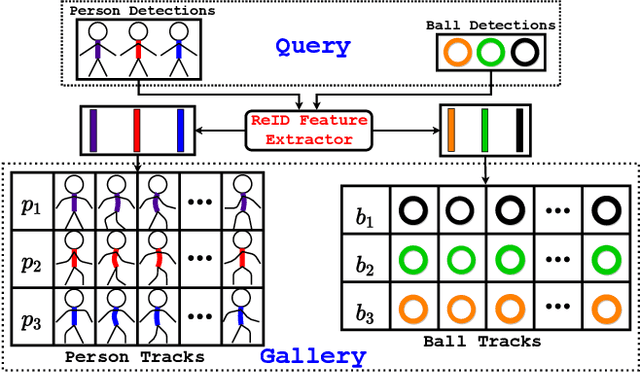

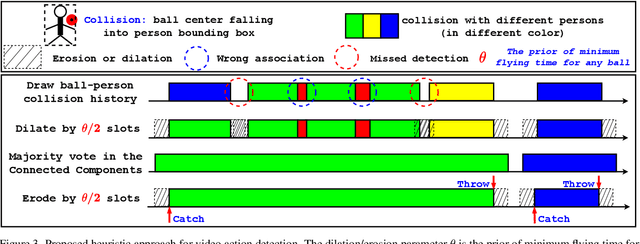

Abstract: Video action detection (spatio-temporal action localization) is usually the starting point for human-centric intelligent analysis of videos nowadays. It has high practical impact for many applications across robotics, security, healthcare, etc. The two-stage paradigm of Faster R-CNN in object detection inspires a standard paradigm of video action detection, i.e., first generating person proposals and then classifying their actions. However, none of the existing solutions can provide fine-grained action detection at the "who-when-where-what" level. This paper presents a tracking-based solution to accurately and efficiently localize predefined key actions spatially (by predicting the associated target IDs and locations) and temporally (by predicting the time in exact frame indices). This solution won first place in the UAV-Video Track of the 2021 Low-Power Computer Vision Challenge (LPCVC).
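As a rough illustration of temporal localization "in exact frame indices" per target ID, the sketch below merges per-frame action labels into segments. It is an assumption-laden toy, not the authors' method: the function name `action_segments`, the input layout (one dict per frame mapping a track ID to an action label), and the example labels are all hypothetical.

```python
def action_segments(frames):
    """Merge per-frame labels into (track_id, action, start, end) segments.

    Hypothetical helper for illustration only. `frames[i]` maps each
    tracked target ID to its action label in frame i; a missing ID or a
    None label means no key action for that target in that frame.
    """
    segments = []
    open_segs = {}  # track_id -> (action, start_frame)
    for idx, labels in enumerate(frames):
        for tid in set(open_segs) | set(labels):
            action = labels.get(tid)
            current = open_segs.get(tid)
            # Close the running segment when the label changes or vanishes.
            if current and current[0] != action:
                segments.append((tid, current[0], current[1], idx - 1))
                del open_segs[tid]
                current = None
            # Open a new segment when an action starts for this target.
            if action is not None and current is None:
                open_segs[tid] = (action, idx)
    # Segments still open at the end of the video run to the last frame.
    for tid, (action, start) in open_segs.items():
        segments.append((tid, action, start, len(frames) - 1))
    return sorted(segments)


# Usage: target 1 acts in frames 0-1, target 2 in frames 1-2.
frames = [{1: "catch"}, {1: "catch", 2: "throw"}, {2: "throw"}, {}]
result = action_segments(frames)
```

Each output tuple answers who (track ID), what (action), and when (start and end frame); the "where" would come from the tracker's boxes, which this sketch leaves out.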
