Peipei Xu

A Survey of Safety and Trustworthiness of Large Language Models through the Lens of Verification and Validation

May 19, 2023

Abstract:Large Language Models (LLMs) have exploded a new heatwave of AI, for their ability to engage end-users in human-level conversations with detailed and articulate answers across many knowledge domains. In response to their fast adoption in many industrial applications, this survey concerns their safety and trustworthiness. First, we review known vulnerabilities of the LLMs, categorising them into inherent issues, intended attacks, and unintended bugs. Then, we consider if and how the Verification and Validation (V&V) techniques, which have been widely developed for traditional software and deep learning models such as convolutional neural networks, can be integrated and further extended throughout the lifecycle of the LLMs to provide rigorous analysis to the safety and trustworthiness of LLMs and their applications. Specifically, we consider four complementary techniques: falsification and evaluation, verification, runtime monitoring, and ethical use. Considering the fast development of LLMs, this survey does not intend to be complete (although it includes 300 references), especially when it comes to the applications of LLMs in various domains, but rather a collection of organised literature reviews and discussions to support the quick understanding of the safety and trustworthiness issues from the perspective of V&V.

Model-Agnostic Reachability Analysis on Deep Neural Networks

Apr 03, 2023Abstract:Verification plays an essential role in the formal analysis of safety-critical systems. Most current verification methods have specific requirements when working on Deep Neural Networks (DNNs). They either target one particular network category, e.g., Feedforward Neural Networks (FNNs), or networks with specific activation functions, e.g., RdLU. In this paper, we develop a model-agnostic verification framework, called DeepAgn, and show that it can be applied to FNNs, Recurrent Neural Networks (RNNs), or a mixture of both. Under the assumption of Lipschitz continuity, DeepAgn analyses the reachability of DNNs based on a novel optimisation scheme with a global convergence guarantee. It does not require access to the network's internal structures, such as layers and parameters. Through reachability analysis, DeepAgn can tackle several well-known robustness problems, including computing the maximum safe radius for a given input, and generating the ground-truth adversarial examples. We also empirically demonstrate DeepAgn's superior capability and efficiency in handling a broader class of deep neural networks, including both FNNs, and RNNs with very deep layers and millions of neurons, than other state-of-the-art verification approaches.

Towards Verifying the Geometric Robustness of Large-scale Neural Networks

Jan 29, 2023Abstract:Deep neural networks (DNNs) are known to be vulnerable to adversarial geometric transformation. This paper aims to verify the robustness of large-scale DNNs against the combination of multiple geometric transformations with a provable guarantee. Given a set of transformations (e.g., rotation, scaling, etc.), we develop GeoRobust, a black-box robustness analyser built upon a novel global optimisation strategy, for locating the worst-case combination of transformations that affect and even alter a network's output. GeoRobust can provide provable guarantees on finding the worst-case combination based on recent advances in Lipschitzian theory. Due to its black-box nature, GeoRobust can be deployed on large-scale DNNs regardless of their architectures, activation functions, and the number of neurons. In practice, GeoRobust can locate the worst-case geometric transformation with high precision for the ResNet50 model on ImageNet in a few seconds on average. We examined 18 ImageNet classifiers, including the ResNet family and vision transformers, and found a positive correlation between the geometric robustness of the networks and the parameter numbers. We also observe that increasing the depth of DNN is more beneficial than increasing its width in terms of improving its geometric robustness. Our tool GeoRobust is available at https://github.com/TrustAI/GeoRobust.

Reachability Analysis of Neural Network Control Systems

Jan 28, 2023

Abstract:Neural network controllers (NNCs) have shown great promise in autonomous and cyber-physical systems. Despite the various verification approaches for neural networks, the safety analysis of NNCs remains an open problem. Existing verification approaches for neural network control systems (NNCSs) either can only work on a limited type of activation functions, or result in non-trivial over-approximation errors with time evolving. This paper proposes a verification framework for NNCS based on Lipschitzian optimisation, called DeepNNC. We first prove the Lipschitz continuity of closed-loop NNCSs by unrolling and eliminating the loops. We then reveal the working principles of applying Lipschitzian optimisation on NNCS verification and illustrate it by verifying an adaptive cruise control model. Compared to state-of-the-art verification approaches, DeepNNC shows superior performance in terms of efficiency and accuracy over a wide range of NNCs. We also provide a case study to demonstrate the capability of DeepNNC to handle a real-world, practical, and complex system. Our tool \textbf{DeepNNC} is available at \url{https://github.com/TrustAI/DeepNNC}.

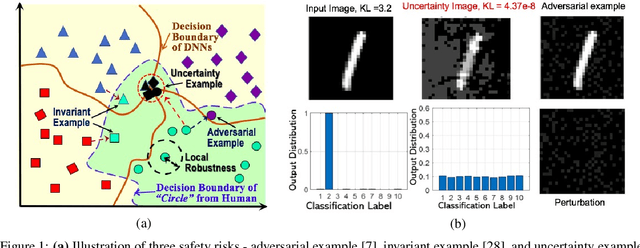

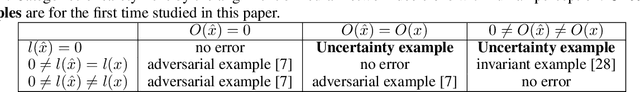

Towards the Quantification of Safety Risks in Deep Neural Networks

Sep 13, 2020

Abstract:Safety concerns on the deep neural networks (DNNs) have been raised when they are applied to critical sectors. In this paper, we define safety risks by requesting the alignment of the network's decision with human perception. To enable a general methodology for quantifying safety risks, we define a generic safety property and instantiate it to express various safety risks. For the quantification of risks, we take the maximum radius of safe norm balls, in which no safety risk exists. The computation of the maximum safe radius is reduced to the computation of their respective Lipschitz metrics - the quantities to be computed. In addition to the known adversarial example, reachability example, and invariant example, in this paper we identify a new class of risk - uncertainty example - on which humans can tell easily but the network is unsure. We develop an algorithm, inspired by derivative-free optimization techniques and accelerated by tensor-based parallelization on GPUs, to support efficient computation of the metrics. We perform evaluations on several benchmark neural networks, including ACSC-Xu, MNIST, CIFAR-10, and ImageNet networks. The experiments show that, our method can achieve competitive performance on safety quantification in terms of the tightness and the efficiency of computation. Importantly, as a generic approach, our method can work with a broad class of safety risks and without restrictions on the structure of neural networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge