Nicolas Rojas

Malleable Robots

Feb 06, 2025Abstract:This chapter is about the fundamentals of fabrication, control, and human-robot interaction of a new type of collaborative robotic manipulators, called malleable robots, which are based on adjustable architectures of varying stiffness for achieving high dexterity with lower mobility arms. Collaborative robots, or cobots, commonly integrate six or more degrees of freedom (DOF) in a serial arm in order to allow positioning in constrained spaces and adaptability across tasks. Increasing the dexterity of robotic arms has been indeed traditionally accomplished by increasing the number of degrees of freedom of the system; however, once a robotic task has been established (e.g., a pick-and-place operation), the motion of the end-effector can be normally achieved using less than 6-DOF (i.e., lower mobility). The aim of malleable robots is to close the technological gap that separates current cobots from achieving flexible, accessible manufacturing automation with a reduced number of actuators.

* 37 pages, 22 figures, chapter 7 of "Handbook on Soft Robotics"

A Backbone for Long-Horizon Robot Task Understanding

Aug 07, 2024

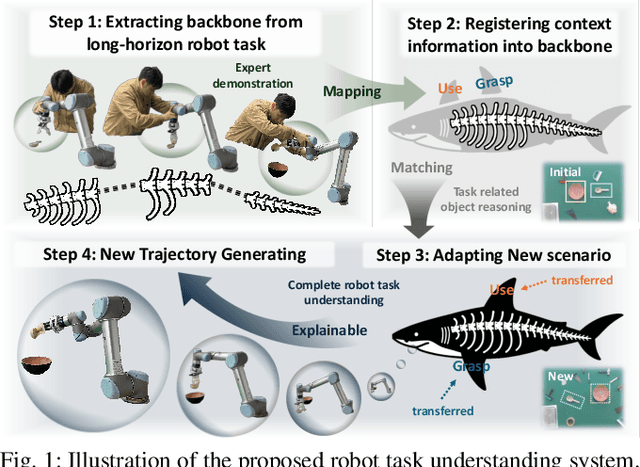

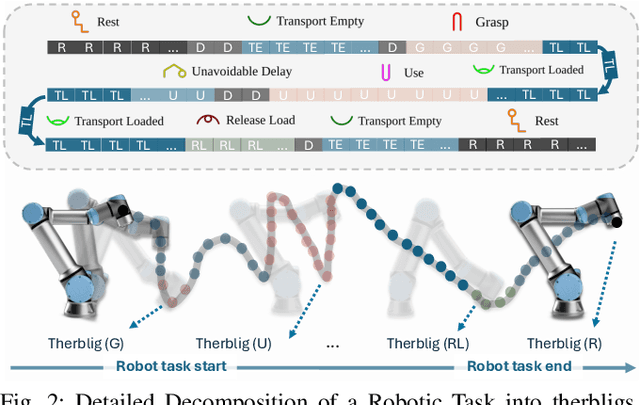

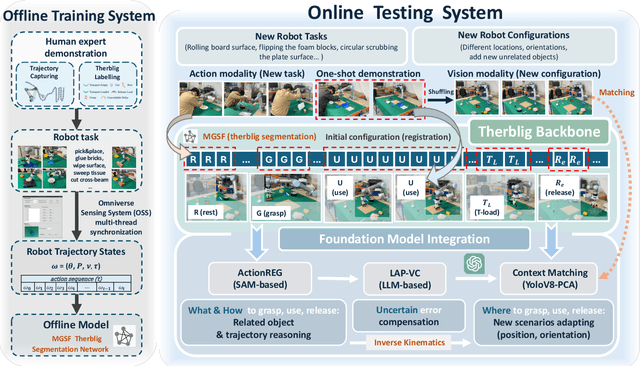

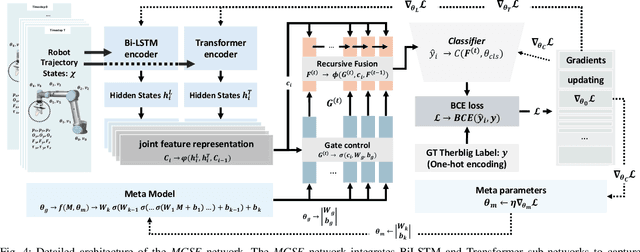

Abstract:End-to-end robot learning, particularly for long-horizon tasks, often results in unpredictable outcomes and poor generalization. To address these challenges, we propose a novel Therblig-based Backbone Framework (TBBF) to enhance robot task understanding and transferability. This framework uses therbligs (basic action elements) as the backbone to decompose high-level robot tasks into elemental robot configurations, which are then integrated with current foundation models to improve task understanding. The approach consists of two stages: offline training and online testing. During the offline training stage, we developed the Meta-RGate SynerFusion (MGSF) network for accurate therblig segmentation across various tasks. In the online testing stage, after a one-shot demonstration of a new task is collected, our MGSF network extracts high-level knowledge, which is then encoded into the image using Action Registration (ActionREG). Additionally, the Large Language Model (LLM)-Alignment Policy for Visual Correction (LAP-VC) is employed to ensure precise action execution, facilitating trajectory transfer in novel robot scenarios. Experimental results validate these methods, achieving 94.37% recall in therblig segmentation and success rates of 94.4% and 80% in real-world online robot testing for simple and complex scenarios, respectively. Supplementary material is available at: https://sites.google.com/view/therbligsbasedbackbone/home

An Origami-Inspired Variable Friction Surface for Increasing the Dexterity of Robotic Grippers

Apr 15, 2024Abstract:While the grasping capability of robotic grippers has shown significant development, the ability to manipulate objects within the hand is still limited. One explanation for this limitation is the lack of controlled contact variation between the grasped object and the gripper. For instance, human hands have the ability to firmly grip object surfaces, as well as slide over object faces, an aspect that aids the enhanced manipulation of objects within the hand without losing contact. In this letter, we present a parametric, origami-inspired thin surface capable of transitioning between a high friction and a low friction state, suitable for implementation as an epidermis in robotic fingers. A numerical analysis of the proposed surface based on its design parameters, force analysis, and performance in in-hand manipulation tasks is presented. Through the development of a simple two-fingered two-degree-of-freedom gripper utilizing the proposed variable-friction surfaces with different parameters, we experimentally demonstrate the improved manipulation capabilities of the hand when compared to the same gripper without changeable friction. Results show that the pattern density and valley gap are the main parameters that effect the in-hand manipulation performance. The origami-inspired thin surface with a higher pattern density generated a smaller valley gap and smaller height change, producing a more stable improvement of the manipulation capabilities of the hand.

* 8 pages, 11 figures

Stiffness-Tuneable Limb Segment with Flexible Spine for Malleable Robots

Apr 15, 2024Abstract:Robotic arms built from stiffness-adjustable, continuously bending segments serially connected with revolute joints have the ability to change their mechanical architecture and workspace, thus allowing high flexibility and adaptation to different tasks with less than six degrees of freedom, a concept that we call malleable robots. Known stiffening mechanisms may be used to implement suitable links for these novel robotic manipulators; however, these solutions usually show a reduced performance when bending due to structural deformation. By including an inner support structure this deformation can be minimised, resulting in an increased stiffening performance. This paper presents a new multi-material spine-inspired flexible structure for providing support in stiffness-controllable layer-jamming-based robotic links of large diameter. The proposed spine mechanism is highly movable with type and range of motions that match those of a robotic link using solely layer jamming, whilst maintaining a hollow and light structure. The mechanics and design of the flexible spine are explored, and a prototype of a link utilising it is developed and compared with limb segments based on granular jamming and layer jamming without support structure. Results of experiments verify the advantages of the proposed design, demonstrating that it maintains a constant central diameter across bending angles and presents an improvement of more than 203% of resisting force at 180 degrees.

* 7 pages, 11 figures

Cosserat Rod Modeling and Validation for a Soft Continuum Robot with Self-Controllable Variable Curvature

Feb 19, 2024Abstract:This paper introduces a Cosserat rod based mathematical model for modeling a self-controllable variable curvature soft continuum robot. This soft continuum robot has a hollow inner channel and was developed with the ability to perform variable curvature utilizing a growing spine. The growing spine is able to grow and retract while modifies its stiffness through milli-size particle (glass bubble) granular jamming. This soft continuum robot can then perform continuous curvature variation, unlike previous approaches whose curvature variation is discrete and depends on the number of locking mechanisms or manual configurations. The robot poses an emergent modeling problem due to the variable stiffness growing spine which is addressed in this paper. We investigate the property of growing spine stiffness and incorporate it into the Cosserat rod model by implementing a combined stiffness approach. We conduct experiments with the soft continuum robot in various configurations and compared the results with our developed mathematical model. The results show that the mathematical model based on the adapted Cosserat rod matches the experimental results with only a 3.3\% error with respect to the length of the soft continuum robot.

TraKDis: A Transformer-based Knowledge Distillation Approach for Visual Reinforcement Learning with Application to Cloth Manipulation

Jan 24, 2024

Abstract:Approaching robotic cloth manipulation using reinforcement learning based on visual feedback is appealing as robot perception and control can be learned simultaneously. However, major challenges result due to the intricate dynamics of cloth and the high dimensionality of the corresponding states, what shadows the practicality of the idea. To tackle these issues, we propose TraKDis, a novel Transformer-based Knowledge Distillation approach that decomposes the visual reinforcement learning problem into two distinct stages. In the first stage, a privileged agent is trained, which possesses complete knowledge of the cloth state information. This privileged agent acts as a teacher, providing valuable guidance and training signals for subsequent stages. The second stage involves a knowledge distillation procedure, where the knowledge acquired by the privileged agent is transferred to a vision-based agent by leveraging pre-trained state estimation and weight initialization. TraKDis demonstrates better performance when compared to state-of-the-art RL techniques, showing a higher performance of 21.9%, 13.8%, and 8.3% in cloth folding tasks in simulation. Furthermore, to validate robustness, we evaluate the agent in a noisy environment; the results indicate its ability to handle and adapt to environmental uncertainties effectively. Real robot experiments are also conducted to showcase the efficiency of our method in real-world scenarios.

Synthetic data enables faster annotation and robust segmentation for multi-object grasping in clutter

Jan 24, 2024Abstract:Object recognition and object pose estimation in robotic grasping continue to be significant challenges, since building a labelled dataset can be time consuming and financially costly in terms of data collection and annotation. In this work, we propose a synthetic data generation method that minimizes human intervention and makes downstream image segmentation algorithms more robust by combining a generated synthetic dataset with a smaller real-world dataset (hybrid dataset). Annotation experiments show that the proposed synthetic scene generation can diminish labelling time dramatically. RGB image segmentation is trained with hybrid dataset and combined with depth information to produce pixel-to-point correspondence of individual segmented objects. The object to grasp is then determined by the confidence score of the segmentation algorithm. Pick-and-place experiments demonstrate that segmentation trained on our hybrid dataset (98.9%, 70%) outperforms the real dataset and a publicly available dataset by (6.7%, 18.8%) and (2.8%, 10%) in terms of labelling and grasping success rate, respectively. Supplementary material is available at https://sites.google.com/view/synthetic-dataset-generation.

G.O.G: A Versatile Gripper-On-Gripper Design for Bimanual Cloth Manipulation with a Single Robotic Arm

Jan 19, 2024Abstract:The manipulation of garments poses research challenges due to their deformable nature and the extensive variability in shapes and sizes. Despite numerous attempts by researchers to address these via approaches involving robot perception and control, there has been a relatively limited interest in resolving it through the co-development of robot hardware. Consequently, the majority of studies employ off-the-shelf grippers in conjunction with dual robot arms to enable bimanual manipulation and high dexterity. However, this dual-arm system increases the overall cost of the robotic system as well as its control complexity in order to tackle robot collisions and other robot coordination issues. As an alternative approach, we propose to enable bimanual cloth manipulation using a single robot arm via novel end effector design -- sharing dexterity skills between manipulator and gripper rather than relying entirely on robot arm coordination. To this end, we introduce a new gripper, called G.O.G., based on a gripper-on-gripper structure where the first gripper independently regulates the span, up to 500mm, between its fingers which are in turn also grippers. These finger grippers consist of a variable friction module that enables two grasping modes: firm and sliding grasps. Household item and cloth object benchmarks are employed to evaluate the performance of the proposed design, encompassing both experiments on the gripper design itself and on cloth manipulation. Experimental results demonstrate the potential of the introduced ideas to undertake a range of bimanual cloth manipulation tasks with a single robot arm. Supplementary material is available at https://sites.google.com/view/gripperongripper.

* Accepted for IEEE Robotics and Automation Letters in January 2024. Dongmyoung Lee and Wei Chen contributed equally to this research

A Soft Continuum Robot with Self-Controllable Variable Curvature

Jan 03, 2024Abstract:This paper introduces a new type of soft continuum robot, called SCoReS, which is capable of self-controlling continuously its curvature at the segment level; in contrast to previous designs which either require external forces or machine elements, or whose variable curvature capabilities are discrete -- depending on the number of locking mechanisms and segments. The ability to have a variable curvature, whose control is continuous and independent from external factors, makes a soft continuum robot more adaptive in constrained environments, similar to what is observed in nature in the elephant's trunk or ostrich's neck for instance which exhibit multiple curvatures. To this end, our soft continuum robot enables reconfigurable variable curvatures utilizing a variable stiffness growing spine based on micro-particle granular jamming for the first time. We detail the design of the proposed robot, presenting its modeling through beam theory and FEA simulation -- which is validated through experiments. The robot's versatile bending profiles are then explored in experiments and an application to grasp fruits at different configurations is demonstrated.

The Hydra Hand: A Mode-Switching Underactuated Gripper with Precision and Power Grasping Modes

Sep 26, 2023Abstract:Human hands are able to grasp a wide range of object sizes, shapes, and weights, achieved via reshaping and altering their apparent grasping stiffness between compliant power and rigid precision. Achieving similar versatility in robotic hands remains a challenge, which has often been addressed by adding extra controllable degrees of freedom, tactile sensors, or specialised extra grasping hardware, at the cost of control complexity and robustness. We introduce a novel reconfigurable four-fingered two-actuator underactuated gripper -- the Hydra Hand -- that switches between compliant power and rigid precision grasps using a single motor, while generating grasps via a single hydraulic actuator -- exhibiting adaptive grasping between finger pairs, enabling the power grasping of two objects simultaneously. The mode switching mechanism and the hand's kinematics are presented and analysed, and performance is tested on two grasping benchmarks: one focused on rigid objects, and the other on items of clothing. The Hydra Hand is shown to excel at grasping large and irregular objects, and small objects with its respective compliant power and rigid precision configurations. The hand's versatility is then showcased by executing the challenging manipulation task of safely grasping and placing a bunch of grapes, and then plucking a single grape from the bunch.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge