Nicola Bellotto

Causality-enhanced Decision-Making for Autonomous Mobile Robots in Dynamic Environments

Apr 16, 2025

Abstract:The growing integration of robots in shared environments -- such as warehouses, shopping centres, and hospitals -- demands a deep understanding of the underlying dynamics and human behaviours, including how, when, and where individuals engage in various activities and interactions. This knowledge goes beyond simple correlation studies and requires a more comprehensive causal analysis. By leveraging causal inference to model cause-and-effect relationships, we can better anticipate critical environmental factors and enable autonomous robots to plan and execute tasks more effectively. To this end, we propose a novel causality-based decision-making framework that reasons over a learned causal model to predict battery usage and human obstructions, understanding how these factors could influence robot task execution. Such reasoning framework assists the robot in deciding when and how to complete a given task. To achieve this, we developed also PeopleFlow, a new Gazebo-based simulator designed to model context-sensitive human-robot spatial interactions in shared workspaces. PeopleFlow features realistic human and robot trajectories influenced by contextual factors such as time, environment layout, and robot state, and can simulate a large number of agents. While the simulator is general-purpose, in this paper we focus on a warehouse-like environment as a case study, where we conduct an extensive evaluation benchmarking our causal approach against a non-causal baseline. Our findings demonstrate the efficacy of the proposed solutions, highlighting how causal reasoning enables autonomous robots to operate more efficiently and safely in dynamic environments shared with humans.

CAnDOIT: Causal Discovery with Observational and Interventional Data from Time-Series

Oct 07, 2024Abstract:The study of cause-and-effect is of the utmost importance in many branches of science, but also for many practical applications of intelligent systems. In particular, identifying causal relationships in situations that include hidden factors is a major challenge for methods that rely solely on observational data for building causal models. This paper proposes CAnDOIT, a causal discovery method to reconstruct causal models using both observational and interventional time-series data. The use of interventional data in the causal analysis is crucial for real-world applications, such as robotics, where the scenario is highly complex and observational data alone are often insufficient to uncover the correct causal structure. Validation of the method is performed initially on randomly generated synthetic models and subsequently on a well-known benchmark for causal structure learning in a robotic manipulation environment. The experiments demonstrate that the approach can effectively handle data from interventions and exploit them to enhance the accuracy of the causal analysis. A Python implementation of CAnDOIT has also been developed and is publicly available on GitHub: https://github.com/lcastri/causalflow.

MicroFlow: An Efficient Rust-Based Inference Engine for TinyML

Sep 28, 2024

Abstract:MicroFlow is an open-source TinyML framework for the deployment of Neural Networks (NNs) on embedded systems using the Rust programming language, specifically designed for efficiency and robustness, which is suitable for applications in critical environments. To achieve these objectives, MicroFlow employs a compiler-based inference engine approach, coupled with Rust's memory safety and features. The proposed solution enables the successful deployment of NNs on highly resource-constrained devices, including bare-metal 8-bit microcontrollers with only 2kB of RAM. Furthermore, MicroFlow is able to use less Flash and RAM memory than other state-of-the-art solutions for deploying NN reference models (i.e. wake-word and person detection). It can also achieve faster inference compared to existing engines on medium-size NNs, and similar performance on bigger ones. The experimental results prove the efficiency and suitability of MicroFlow for the deployment of TinyML models in critical environments where resources are particularly limited.

Tiny Robotics Dataset and Benchmark for Continual Object Detection

Sep 24, 2024

Abstract:Detecting objects in mobile robotics is crucial for numerous applications, from autonomous navigation to inspection. However, robots are often required to perform tasks in different domains with respect to the training one and need to adapt to these changes. Tiny mobile robots, subject to size, power, and computational constraints, encounter even more difficulties in running and adapting these algorithms. Such adaptability, though, is crucial for real-world deployment, where robots must operate effectively in dynamic and unpredictable settings. In this work, we introduce a novel benchmark to evaluate the continual learning capabilities of object detection systems in tiny robotic platforms. Our contributions include: (i) Tiny Robotics Object Detection (TiROD), a comprehensive dataset collected using a small mobile robot, designed to test the adaptability of object detectors across various domains and classes; (ii) an evaluation of state-of-the-art real-time object detectors combined with different continual learning strategies on this dataset, providing detailed insights into their performance and limitations; and (iii) we publish the data and the code to replicate the results to foster continuous advancements in this field. Our benchmark results indicate key challenges that must be addressed to advance the development of robust and efficient object detection systems for tiny robotics.

Replay Consolidation with Label Propagation for Continual Object Detection

Sep 09, 2024

Abstract:Object Detection is a highly relevant computer vision problem with many applications such as robotics and autonomous driving. Continual Learning~(CL) considers a setting where a model incrementally learns new information while retaining previously acquired knowledge. This is particularly challenging since Deep Learning models tend to catastrophically forget old knowledge while training on new data. In particular, Continual Learning for Object Detection~(CLOD) poses additional difficulties compared to CL for Classification. In CLOD, images from previous tasks may contain unknown classes that could reappear labeled in future tasks. These missing annotations cause task interference issues for replay-based approaches. As a result, most works in the literature have focused on distillation-based approaches. However, these approaches are effective only when there is a strong overlap of classes across tasks. To address the issues of current methodologies, we propose a novel technique to solve CLOD called Replay Consolidation with Label Propagation for Object Detection (RCLPOD). Based on the replay method, our solution avoids task interference issues by enhancing the buffer memory samples. Our method is evaluated against existing techniques in CLOD literature, demonstrating its superior performance on established benchmarks like VOC and COCO.

Latent Distillation for Continual Object Detection at the Edge

Sep 03, 2024

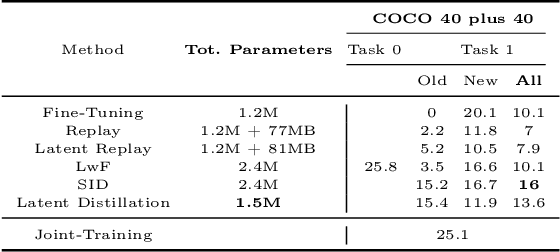

Abstract:While numerous methods achieving remarkable performance exist in the Object Detection literature, addressing data distribution shifts remains challenging. Continual Learning (CL) offers solutions to this issue, enabling models to adapt to new data while maintaining performance on previous data. This is particularly pertinent for edge devices, common in dynamic environments like automotive and robotics. In this work, we address the memory and computation constraints of edge devices in the Continual Learning for Object Detection (CLOD) scenario. Specifically, (i) we investigate the suitability of an open-source, lightweight, and fast detector, namely NanoDet, for CLOD on edge devices, improving upon larger architectures used in the literature. Moreover, (ii) we propose a novel CL method, called Latent Distillation~(LD), that reduces the number of operations and the memory required by state-of-the-art CL approaches without significantly compromising detection performance. Our approach is validated using the well-known VOC and COCO benchmarks, reducing the distillation parameter overhead by 74\% and the Floating Points Operations~(FLOPs) by 56\% per model update compared to other distillation methods.

neuROSym: Deployment and Evaluation of a ROS-based Neuro-Symbolic Model for Human Motion Prediction

Jun 24, 2024Abstract:Autonomous mobile robots can rely on several human motion detection and prediction systems for safe and efficient navigation in human environments, but the underline model architectures can have different impacts on the trustworthiness of the robot in the real world. Among existing solutions for context-aware human motion prediction, some approaches have shown the benefit of integrating symbolic knowledge with state-of-the-art neural networks. In particular, a recent neuro-symbolic architecture (NeuroSyM) has successfully embedded context with a Qualitative Trajectory Calculus (QTC) for spatial interactions representation. This work achieved better performance than neural-only baseline architectures on offline datasets. In this paper, we extend the original architecture to provide neuROSym, a ROS package for robot deployment in real-world scenarios, which can run, visualise, and evaluate previous neural-only and neuro-symbolic models for motion prediction online. We evaluated these models, NeuroSyM and a baseline SGAN, on a TIAGo robot in two scenarios with different human motion patterns. We assessed accuracy and runtime performance of the prediction models, showing a general improvement in case our neuro-symbolic architecture is used. We make the neuROSym package1 publicly available to the robotics community.

Experimental Evaluation of ROS-Causal in Real-World Human-Robot Spatial Interaction Scenarios

Jun 07, 2024

Abstract:Deploying robots in human-shared environments requires a deep understanding of how nearby agents and objects interact. Employing causal inference to model cause-and-effect relationships facilitates the prediction of human behaviours and enables the anticipation of robot interventions. However, a significant challenge arises due to the absence of implementation of existing causal discovery methods within the ROS ecosystem, the standard de-facto framework in robotics, hindering effective utilisation on real robots. To bridge this gap, in our previous work we proposed ROS-Causal, a ROS-based framework designed for onboard data collection and causal discovery in human-robot spatial interactions. In this work, we present an experimental evaluation of ROS-Causal both in simulation and on a new dataset of human-robot spatial interactions in a lab scenario, to assess its performance and effectiveness. Our analysis demonstrates the efficacy of this approach, showcasing how causal models can be extracted directly onboard by robots during data collection. The online causal models generated from the simulation are consistent with those from lab experiments. These findings can help researchers to enhance the performance of robotic systems in shared environments, firstly by studying the causal relations between variables in simulation without real people, and then facilitating the actual robot deployment in real human environments. ROS-Causal: https://lcastri.github.io/roscausal

ROS-Causal: A ROS-based Causal Analysis Framework for Human-Robot Interaction Applications

Feb 29, 2024

Abstract:Deploying robots in human-shared spaces requires understanding interactions among nearby agents and objects. Modelling cause-and-effect relations through causal inference aids in predicting human behaviours and anticipating robot interventions. However, a critical challenge arises as existing causal discovery methods currently lack an implementation inside the ROS ecosystem, the standard de facto in robotics, hindering effective utilisation in robotics. To address this gap, this paper introduces ROS-Causal, a ROS-based framework for onboard data collection and causal discovery in human-robot spatial interactions. An ad-hoc simulator, integrated with ROS, illustrates the approach's effectiveness, showcasing the robot onboard generation of causal models during data collection. ROS-Causal is available on GitHub: https://github.com/lcastri/roscausal.git.

Efficient Causal Discovery for Robotics Applications

Oct 24, 2023Abstract:Using robots for automating tasks in environments shared with humans, such as warehouses, shopping centres, or hospitals, requires these robots to comprehend the fundamental physical interactions among nearby agents and objects. Specifically, creating models to represent cause-and-effect relationships among these elements can aid in predicting unforeseen human behaviours and anticipate the outcome of particular robot actions. To be suitable for robots, causal analysis must be both fast and accurate, meeting real-time demands and the limited computational resources typical in most robotics applications. In this paper, we present a practical demonstration of our approach for fast and accurate causal analysis, known as Filtered PCMCI (F-PCMCI), along with a real-world robotics application. The provided application illustrates how our F-PCMCI can accurately and promptly reconstruct the causal model of a human-robot interaction scenario, which can then be leveraged to enhance the quality of the interaction.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge