Nevrez Imamoglu

A Tutorial on ALOS2 SAR Utilization: Dataset Preparation, Self-Supervised Pretraining, and Semantic Segmentation

Mar 16, 2026

Abstract: Masked auto-encoders (MAE) and related approaches have shown promise for satellite imagery, but their application to synthetic aperture radar (SAR) remains limited due to challenges in semantic labeling and high noise levels. Building on our prior work with SAR-W-MixMAE, which adds a SAR-specific intensity-weighted loss to standard MixMAE for pretraining, we introduce SAR-W-SimMIM, a weighted variant of SimMIM applied to ALOS-2 single-channel SAR imagery. This method aims to reduce the impact of speckle and extreme intensity values during self-supervised pretraining. We evaluate its effect on semantic segmentation against our previous trial with SAR-W-MixMAE and against random initialization, observing notable improvements. In addition, pretraining and fine-tuning models on satellite imagery pose unique challenges, particularly when developing region-specific models. Imbalanced land cover distributions, such as dominant water, forest, or desert areas, can introduce bias, affecting both pretraining and downstream tasks like land cover segmentation. To address this, we constructed a SAR dataset using ALOS-2 single-channel (HH polarization) imagery focused on the Japan region, marking the initial phase toward a national-scale foundation model. This dataset was used to pretrain a vision transformer-based autoencoder, with the resulting encoder fine-tuned for semantic segmentation using a task-specific decoder. Initial results demonstrate significant performance improvements compared to training from scratch with random initialization. In summary, this work provides a guide to processing and preparing ALOS-2 observations into a dataset that can be used for self-supervised pretraining of models and for fine-tuning on downstream tasks such as semantic segmentation.

Enhanced LULC Segmentation via Lightweight Model Refinements on ALOS-2 SAR Data

Jan 22, 2026

Abstract: This work focuses on national-scale land-use/land-cover (LULC) semantic segmentation using ALOS-2 single-polarization (HH) SAR data over Japan, together with a companion binary water detection task. Building on SAR-W-MixMAE self-supervised pretraining [1], we address common SAR dense-prediction failure modes (boundary over-smoothing, missed thin/slender structures, and rare-class degradation under long-tailed labels) without increasing pipeline complexity. We introduce three lightweight refinements: (i) injecting high-resolution features into multi-scale decoding, (ii) a progressive refine-up head that alternates convolutional refinement and stepwise upsampling, and (iii) an $\alpha$-scale factor that tempers class reweighting within a focal+dice objective. The resulting model yields consistent improvements on the Japan-wide ALOS-2 LULC benchmark, particularly for under-represented classes, and improves water detection across standard evaluation metrics.
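
The abstract does not define the $\alpha$-scale factor in refinement (iii). As a minimal sketch, assuming it acts as an exponent on inverse-frequency class weights (a common way to temper reweighting, and an assumption here rather than the paper's definition), the effect can be illustrated as follows. The function name `tempered_class_weights` and the pixel counts are hypothetical.

```python
def tempered_class_weights(pixel_counts, alpha):
    """Inverse-frequency class weights raised to the power alpha.

    alpha = 0 gives uniform weights; alpha = 1 gives full inverse-frequency
    weighting. This exponent form is an assumption for illustration only.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha is expected to lie in [0, 1]")
    if not pixel_counts or any(c <= 0 for c in pixel_counts):
        raise ValueError("every class needs a positive pixel count")
    total = sum(pixel_counts)
    freqs = [c / total for c in pixel_counts]
    raw = [(1.0 / f) ** alpha for f in freqs]
    # Normalise so the weights average to 1, keeping the loss scale stable.
    mean_raw = sum(raw) / len(raw)
    return [w / mean_raw for w in raw]


# Hypothetical long-tailed label distribution: dominant, medium, rare class.
counts = [900_000, 90_000, 10_000]
uniform = tempered_class_weights(counts, alpha=0.0)
tempered = tempered_class_weights(counts, alpha=0.5)
full = tempered_class_weights(counts, alpha=1.0)
```

In this sketch, intermediate values of `alpha` still favour the rare class but by a smaller ratio than full inverse-frequency weighting. The resulting weights could then multiply the per-class terms of a focal or dice loss.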

Exploring Object-Aware Attention Guided Frame Association for RGB-D SLAM

Oct 30, 2025

Abstract: Attention models have recently emerged as a powerful approach, demonstrating significant progress in various fields. Visualization techniques, such as class activation mapping, provide visual insights into the reasoning of convolutional neural networks (CNNs). Using network gradients, it is possible to identify regions where the network pays attention during image recognition tasks. Furthermore, these gradients can be combined with CNN features to localize more generalizable, task-specific attentive (salient) regions within scenes. However, explicit use of this gradient-based attention information integrated directly into CNN representations for semantic object understanding remains limited. Such integration is particularly beneficial for visual tasks like simultaneous localization and mapping (SLAM), where CNN representations enriched with spatially attentive object locations can enhance performance. In this work, we propose utilizing task-specific network attention for RGB-D indoor SLAM. Specifically, we integrate layer-wise attention information derived from network gradients with CNN feature representations to improve frame association performance. Experimental results indicate improved performance compared to baseline methods, particularly for large environments.
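
To make the idea of gradient-derived attention concrete, here is a minimal sketch in the style of Grad-CAM: each channel is weighted by the spatial mean of its gradient, the weighted channels are summed, and negative values are clipped. The abstract does not describe how the paper combines attention with features, so the element-wise scaling in `apply_attention` is only one possible choice. Both function names and the toy inputs are hypothetical.

```python
def grad_cam_map(activations, gradients):
    """Grad-CAM style attention map for one layer.

    activations, gradients: nested lists shaped [channels][height][width].
    Each channel weight is the spatial mean of its gradient; the map is the
    ReLU of the weighted channel sum, rescaled to [0, 1].
    """
    height, width = len(activations[0]), len(activations[0][0])
    channel_weights = [
        sum(sum(row) for row in grad) / (height * width) for grad in gradients
    ]
    cam = [
        [
            max(0.0, sum(w * act[i][j] for w, act in zip(channel_weights, activations)))
            for j in range(width)
        ]
        for i in range(height)
    ]
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam


def apply_attention(activations, cam):
    """Scale every channel by the attention map (an assumed integration)."""
    return [
        [[act[i][j] * cam[i][j] for j in range(len(cam[0]))] for i in range(len(cam))]
        for act in activations
    ]


# Toy layer with two 2x2 channels; the second channel has negative gradients.
activations = [[[1.0, 0.0], [0.0, 2.0]], [[0.0, 3.0], [1.0, 0.0]]]
gradients = [[[1.0, 1.0], [1.0, 1.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
cam = grad_cam_map(activations, gradients)
attended = apply_attention(activations, cam)
```

In the toy example, locations dominated by the negatively weighted channel receive zero attention, so their features are suppressed before any frame-association descriptor would be computed.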

SAR-W-MixMAE: SAR Foundation Model Training Using Backscatter Power Weighting

Mar 04, 2025

Abstract: Foundation model approaches such as masked auto-encoders (MAE) and their variants are now being successfully applied to satellite imagery. Most of the ongoing technical validation of foundation models has been carried out on optical images such as RGB or multispectral images. Because semantic labeling for dataset creation is difficult and noise content is higher than in optical images, Synthetic Aperture Radar (SAR) data has received comparatively little attention in foundation model research. Therefore, in this work we explore a masked auto-encoder, specifically MixMAE, as a pre-training approach on Sentinel-1 SAR images and examine its impact on SAR image classification tasks. Moreover, we propose using the physical characteristics of SAR data to apply a weighting parameter to the auto-encoder training loss (MSE), reducing the effect of speckle noise and very high values in the SAR images. The proposed SAR intensity-based weighting of the reconstruction loss demonstrates promising results on both SAR pre-training and downstream tasks, specifically flood detection, compared with the baseline model.
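
The abstract does not give the form of the intensity-based weighting. As a rough sketch of the general idea only, the example below down-weights the squared error at pixels with high target intensity. The linear weight `max(floor, 1 - target)`, the `floor` parameter, and the function name `weighted_mse` are illustrative assumptions, not the paper's formulation.

```python
def weighted_mse(target, recon, floor=0.1):
    """Squared error with per-pixel weights that shrink as intensity grows.

    target, recon: flat lists of intensities scaled to [0, 1].
    floor: smallest weight, so bright pixels are damped rather than ignored.
    The weight w = max(floor, 1 - target) is an illustrative assumption.
    The result is normalised by the sum of the weights.
    """
    if len(target) != len(recon) or not target:
        raise ValueError("target and recon must be non-empty and equal length")
    weights = [max(floor, 1.0 - t) for t in target]
    squared_errors = [(t - r) ** 2 for t, r in zip(target, recon)]
    return sum(w * e for w, e in zip(weights, squared_errors)) / sum(weights)


# One dark pixel (0.1) and one very bright pixel (0.95), e.g. a strong scatterer.
target = [0.1, 0.95]
loss_error_on_dark = weighted_mse(target, [0.3, 0.95])    # 0.2 error on the dark pixel
loss_error_on_bright = weighted_mse(target, [0.1, 0.75])  # 0.2 error on the bright pixel
```

An unweighted MSE would score both reconstructions identically. Under this sketch, the error on the bright pixel contributes less, which is the kind of damping of very high values that the abstract describes.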

A Vision-Language Framework for Multispectral Scene Representation Using Language-Grounded Features

Jan 17, 2025

Abstract: Scene understanding in remote sensing often faces challenges in generating accurate representations for complex environments such as various land use areas or coastal regions, which may also include snow, clouds, or haze. To address this, we present a vision-language framework named Spectral LLaVA, which integrates multispectral data with vision-language alignment techniques to enhance scene representation and description. Using the BigEarthNet v2 dataset from Sentinel-2, we establish a baseline with RGB-based scene descriptions and further demonstrate substantial improvements through the incorporation of multispectral information. Our framework optimizes a lightweight linear projection layer for alignment while keeping the vision backbone of SpectralGPT frozen. Our experiments encompass scene classification using linear probing and language modeling for jointly performing scene classification and description generation. Our results highlight Spectral LLaVA's ability to produce detailed and accurate descriptions, particularly for scenarios where RGB data alone proves inadequate, while also enhancing classification performance by refining SpectralGPT features into semantically meaningful representations.

Hyperspectral Image Denoising via Self-Modulating Convolutional Neural Networks

Sep 15, 2023

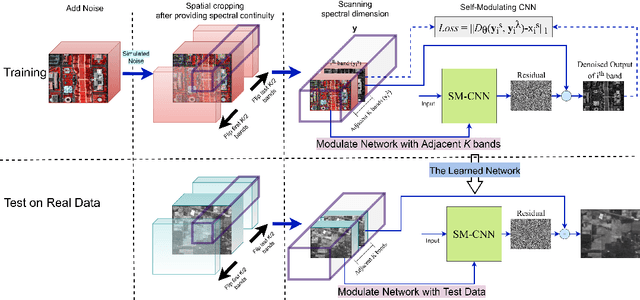

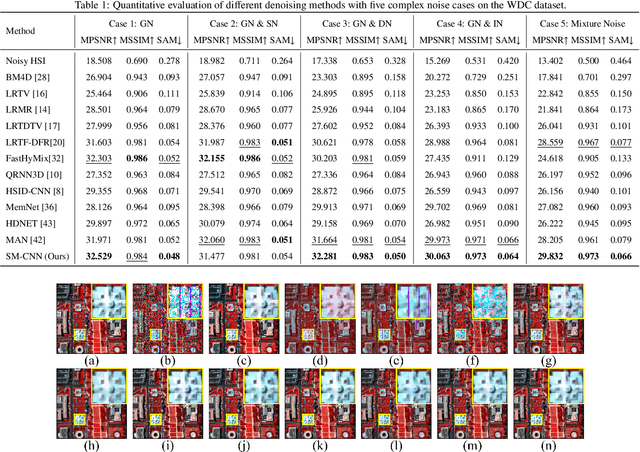

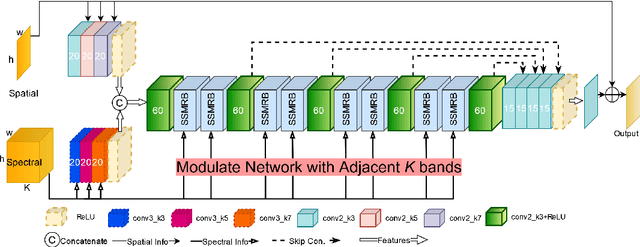

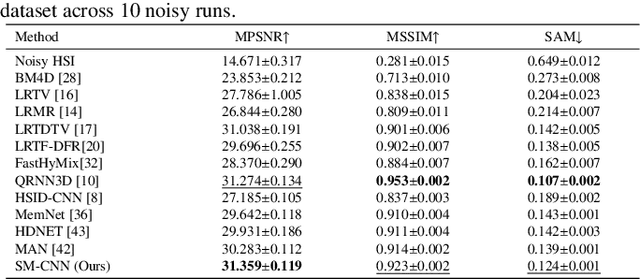

Abstract: Compared to natural images, hyperspectral images (HSIs) consist of a large number of bands, with each band capturing different spectral information from a certain wavelength, including some beyond the visible spectrum. These characteristics make HSIs highly effective for remote sensing applications. However, existing hyperspectral imaging devices introduce severe degradation in HSIs. Hence, hyperspectral image denoising has recently attracted considerable attention from the community. While recent deep HSI denoising methods have provided effective solutions, their performance under real-life complex noise remains suboptimal, as they lack adaptability to new data. To overcome these limitations, we introduce a self-modulating convolutional neural network, referred to as SM-CNN, that utilizes correlated spectral and spatial information. At the core of the model lies a novel block, which we call the spectral self-modulating residual block (SSMRB), that allows the network to transform features adaptively based on the adjacent spectral data, enhancing the network's ability to handle complex noise. In particular, the introduction of SSMRB turns our denoising network into a dynamic network that adapts its predicted features to the spatio-spectral characteristics of each input HSI. Experimental analysis on both synthetic and real data shows that the proposed SM-CNN outperforms other state-of-the-art HSI denoising methods both quantitatively and qualitatively on public benchmark datasets.

Attention-Guided Lidar Segmentation and Odometry Using Image-to-Point Cloud Saliency Transfer

Aug 28, 2023

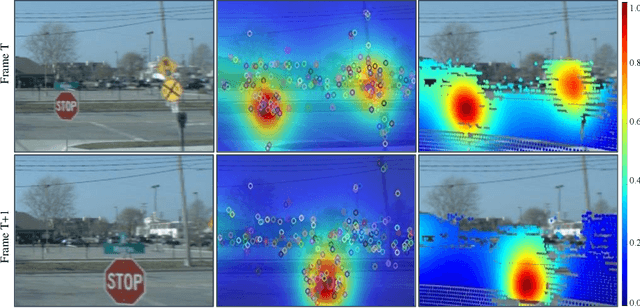

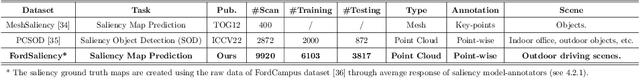

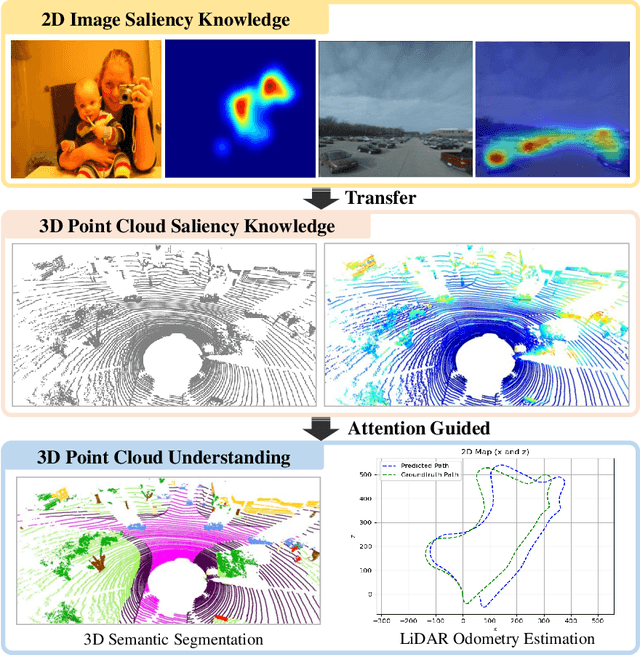

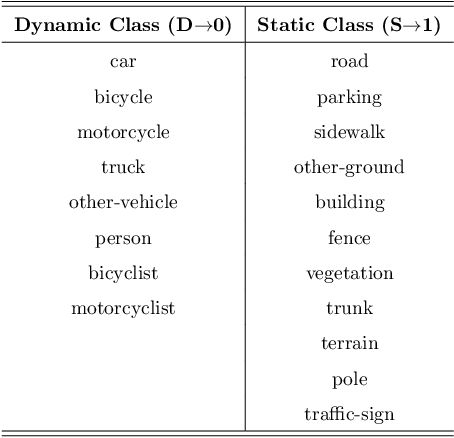

Abstract: LiDAR odometry estimation and 3D semantic segmentation are crucial for autonomous driving, and both have seen remarkable advances recently. However, these tasks remain challenging: 3D semantic segmentation suffers from the imbalance of points across semantic categories, and LiDAR odometry estimation is affected by dynamic objects. Both issues increase the importance of using representative/salient landmarks as reference points for robust feature learning. To address these challenges, we propose a saliency-guided approach that leverages attention information to improve the performance of LiDAR odometry estimation and semantic segmentation models. Unlike in the image domain, only a few studies have addressed point cloud saliency information due to the lack of annotated training data. To alleviate this, we first present a universal framework to transfer saliency distribution knowledge from color images to point clouds, and use this to construct a pseudo-saliency dataset (i.e., FordSaliency) for point clouds. Then, we adopt point cloud-based backbones to learn the saliency distribution from pseudo-saliency labels, which is followed by our proposed SalLiDAR module. SalLiDAR is a saliency-guided 3D semantic segmentation model that integrates saliency information to improve segmentation performance. Finally, we introduce SalLONet, a self-supervised saliency-guided LiDAR odometry network that uses the semantic and saliency predictions of SalLiDAR to achieve better odometry estimation. Our extensive experiments on benchmark datasets demonstrate that the proposed SalLiDAR and SalLONet models achieve state-of-the-art performance against existing methods, highlighting the effectiveness of image-to-LiDAR saliency knowledge transfer. Source code will be available at https://github.com/nevrez/SalLONet.

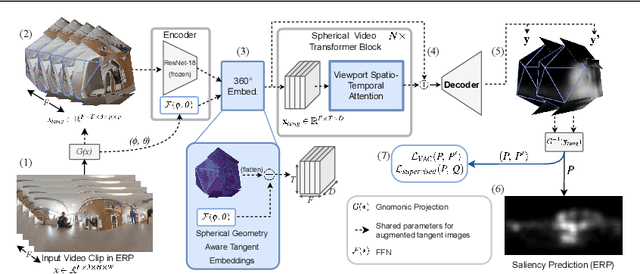

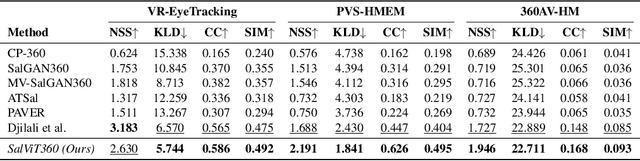

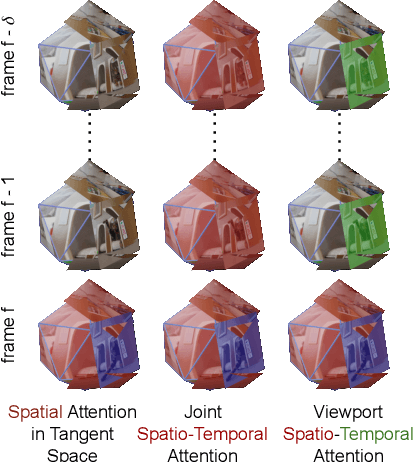

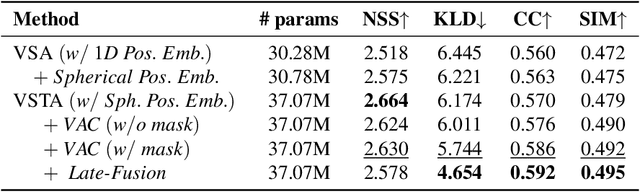

Spherical Vision Transformer for 360-degree Video Saliency Prediction

Aug 24, 2023

Abstract: The growing interest in omnidirectional videos (ODVs), which capture the full field-of-view (FOV), has made 360-degree saliency prediction increasingly important in computer vision. However, predicting where humans look in 360-degree scenes presents unique challenges, including spherical distortion, high resolution, and limited labelled data. We propose a novel vision-transformer-based model for omnidirectional videos named SalViT360 that leverages tangent image representations. We introduce a spherical geometry-aware spatiotemporal self-attention mechanism that is capable of effective omnidirectional video understanding. Furthermore, we present a consistency-based unsupervised regularization term for projection-based 360-degree dense-prediction models to reduce artefacts in the predictions that occur after inverse projection. Our approach is the first to employ tangent images for omnidirectional saliency prediction, and our experimental results on three ODV saliency datasets demonstrate its effectiveness compared to the state-of-the-art.

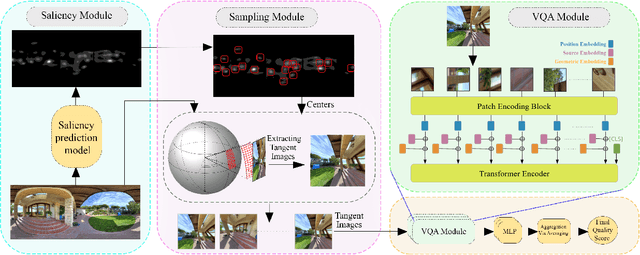

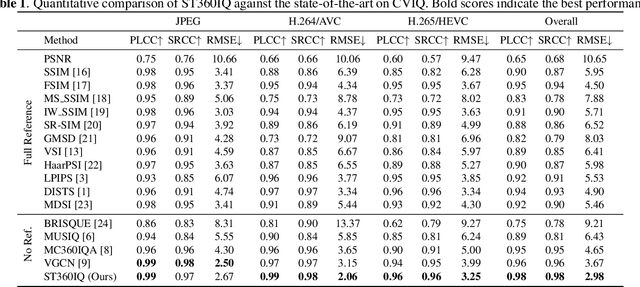

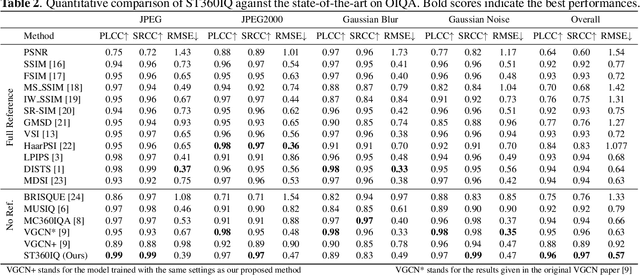

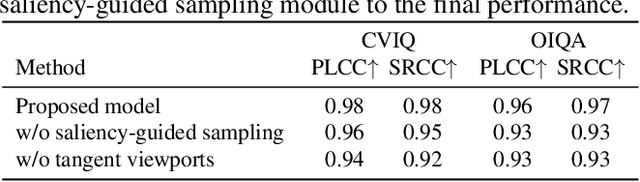

ST360IQ: No-Reference Omnidirectional Image Quality Assessment with Spherical Vision Transformers

Mar 13, 2023

Abstract: Omnidirectional images, also known as 360 images, can deliver immersive and interactive visual experiences. As their popularity has increased dramatically in recent years, evaluating the quality of 360 images has become a problem of interest, since it provides insights for capturing, transmitting, and consuming this new media. However, directly adapting quality assessment methods proposed for standard natural images to omnidirectional data poses certain challenges. These models need to deal with very high-resolution data and implicit distortions due to the spherical form of the images. In this study, we present a method for no-reference 360 image quality assessment. Our proposed ST360IQ model extracts tangent viewports from the salient parts of the input omnidirectional image and employs a vision-transformer-based module that processes saliency-selective patches/tokens and estimates a quality score from each viewport. Then, it aggregates these scores to give a final quality score. Our experiments on two benchmark datasets, namely OIQA and CVIQ, demonstrate that, compared to the state of the art, our approach predicts quality scores for omnidirectional images that correlate with human-perceived image quality. The code is available at https://github.com/Nafiseh-Tofighi/ST360IQ

RGB-D SLAM Using Attention Guided Frame Association

Jan 28, 2022

Abstract: Deep learning models have shown great progress in various fields. In particular, visualization tools such as class activation mapping methods provide visual explanations of the reasoning of convolutional neural networks (CNNs). By using the gradients of the network layers, it is possible to show where the networks pay attention during a specific image recognition task. Moreover, these gradients can be integrated with CNN features to localize more generalized, task-dependent attentive (salient) objects in scenes. Despite this progress, there has been little explicit use of this gradient (network attention) information in combination with CNN representations for object semantics. Such use can be very helpful for visual tasks such as simultaneous localization and mapping (SLAM), where CNN representations of spatially attentive object locations may lead to improved performance. Therefore, in this work, we propose the use of task-specific network attention for RGB-D indoor SLAM. To do so, we integrate layer-wise object attention information (layer gradients) with CNN layer representations to improve frame association performance in a state-of-the-art RGB-D indoor SLAM method. Experiments show promising initial results with improved performance.
