Menghui Jiang

High-Resolution Global Land Surface Temperature Retrieval via a Coupled Mechanism-Machine Learning Framework

Sep 05, 2025

Abstract:Land surface temperature (LST) is vital for land-atmosphere interactions and climate processes. Accurate LST retrieval remains challenging under heterogeneous land cover and extreme atmospheric conditions. Traditional split-window (SW) algorithms show biases in humid environments; pure machine learning (ML) methods lack interpretability and generalize poorly with limited data. We propose a coupled mechanism model-ML (MM-ML) framework integrating physical constraints with data-driven learning for robust LST retrieval. Our approach fuses radiative transfer modeling with data-driven components, uses MODTRAN simulations with global atmospheric profiles, and employs physics-constrained optimization. Validation against 4,450 observations from 29 global sites shows that MM-ML achieves MAE = 1.84 K, RMSE = 2.55 K, and R-squared = 0.966, outperforming conventional methods. Under extreme conditions, MM-ML reduces errors by over 50%. Sensitivity analysis indicates that LST estimates are most sensitive to sensor radiance, then to water vapor, and least to emissivity, with MM-ML showing superior stability. These results demonstrate the effectiveness of our coupled modeling strategy for retrieving geophysical parameters. The MM-ML framework combines physical interpretability with nonlinear modeling capacity, enabling reliable LST retrieval in complex environments and supporting climate monitoring and ecosystem studies.
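
For readers unfamiliar with the three validation scores quoted in the abstract (MAE, RMSE, R-squared), the following is a minimal sketch of how they are commonly computed. The sample temperatures are hypothetical and are not taken from the paper, and R-squared is assumed here to be the coefficient of determination; the paper may instead use the squared correlation coefficient.

```python
import math

def mae(y_true, y_pred):
    """Mean absolute error, in the same unit as the inputs (here K)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root-mean-square error, in the same unit as the inputs (here K)."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot (assumed definition)."""
    mean_t = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Hypothetical in-situ and retrieved LST values in kelvin (illustration only).
observed = [290.0, 300.0, 310.0, 320.0]
retrieved = [291.0, 298.0, 311.0, 322.0]

print(mae(observed, retrieved))        # 1.5
print(rmse(observed, retrieved))       # sqrt(2.5), about 1.581
print(r_squared(observed, retrieved))  # about 0.98
```

RMSE penalizes large individual errors more heavily than MAE, which is why the two are usually reported together.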

A Mechanism-Learning Deeply Coupled Model for Remote Sensing Retrieval of Global Land Surface Temperature

Apr 10, 2025

Abstract:Land surface temperature (LST) retrieval from remote sensing data is pivotal for analyzing climate processes and surface energy budgets. However, LST retrieval is an ill-posed inverse problem, which becomes particularly severe when only a single band is available. In this paper, we propose a deeply coupled framework integrating mechanistic modeling and machine learning to enhance the accuracy and generalizability of single-channel LST retrieval. Training samples are generated using a physically based radiative transfer model and a global collection of 5810 atmospheric profiles. A physics-informed machine learning framework is proposed to systematically incorporate the first principles from classical physical inversion models into the learning workflow, with optimization constrained by radiative transfer equations. Global validation demonstrated a 30% reduction in root-mean-square error versus standalone methods. Under extreme humidity, the mean absolute error decreased from 4.87 K to 2.29 K (53% improvement). Continental-scale tests across five continents confirmed the superior generalizability of this model.
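
As an illustration of what "optimization constrained by radiative transfer equations" could look like for a single thermal channel, here is a minimal sketch. It assumes the standard single-channel radiative transfer equation, L_sensor = tau * [eps * B(T_s) + (1 - eps) * L_down] + L_up, and a simple penalty-style loss. The function names, the loss form, the weight, and all numeric inputs are hypothetical; the abstract does not specify the authors' actual formulation.

```python
import math

# Radiation constants for spectral radiance in W m^-2 sr^-1 um^-1.
C1 = 1.191042e8    # 2*h*c^2, in W um^4 m^-2 sr^-1
C2 = 1.4387752e4   # h*c/k, in um K

def planck(wavelength_um, temp_k):
    """Blackbody spectral radiance B(T) at one wavelength."""
    return C1 / (wavelength_um ** 5 * (math.exp(C2 / (wavelength_um * temp_k)) - 1.0))

def inverse_planck(wavelength_um, radiance):
    """Temperature whose blackbody radiance equals the given radiance."""
    return C2 / (wavelength_um * math.log(C1 / (wavelength_um ** 5 * radiance) + 1.0))

def forward_rte(temp_k, emis, tau, l_up, l_down, wavelength_um):
    """At-sensor radiance from the single-channel radiative transfer equation."""
    surface_leaving = emis * planck(wavelength_um, temp_k) + (1.0 - emis) * l_down
    return tau * surface_leaving + l_up

def invert_rte(l_sensor, emis, tau, l_up, l_down, wavelength_um):
    """Classical physical inversion: solve the same equation for T_s."""
    b_surface = (l_sensor - l_up - tau * (1.0 - emis) * l_down) / (tau * emis)
    return inverse_planck(wavelength_um, b_surface)

def physics_constrained_loss(t_pred, t_true, l_sensor, emis, tau, l_up, l_down,
                             wavelength_um, weight=0.1):
    """Hypothetical loss: data misfit plus a radiative-transfer residual penalty."""
    data_term = (t_pred - t_true) ** 2
    residual = forward_rte(t_pred, emis, tau, l_up, l_down, wavelength_um) - l_sensor
    return data_term + weight * residual ** 2

# Hypothetical atmosphere and surface (illustration only).
params = dict(emis=0.97, tau=0.8, l_up=1.5, l_down=2.5, wavelength_um=10.9)
l_sensor = forward_rte(300.0, **params)
t_back = invert_rte(l_sensor, **params)
loss_exact = physics_constrained_loss(300.0, 300.0, l_sensor, **params)
loss_biased = physics_constrained_loss(305.0, 300.0, l_sensor, **params)
```

In this sketch the penalty term is zero only when the predicted temperature reproduces the observed radiance through the forward model, so a learner minimizing it is pushed toward physically consistent outputs even where training labels are sparse.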

A physics-constrained machine learning method for mapping gapless land surface temperature

Jul 03, 2023

Abstract:More accurate, spatio-temporally and physically consistent LST estimation has been a main interest in Earth system research. Physics-driven mechanism models and data-driven machine learning (ML) models are the two major paradigms for gapless LST estimation, each with its own advantages and disadvantages. In this paper, a physics-constrained ML model, which combines the strengths of the mechanism model and the ML model, is proposed to generate gapless LST with physical meaning and high accuracy. The hybrid model employs ML as the primary architecture, into which physical constraints on the input variables are incorporated to enhance the interpretability and extrapolation ability of the model. Specifically, the light gradient-boosting machine (LGBM) model, which uses only remote sensing data as input, serves as the pure ML model. Physical constraints (PCs) are coupled by further incorporating key Community Land Model (CLM) forcing data (cause) and CLM simulation data (effect) as inputs into the LGBM model. This integration forms the PC-LGBM model, which incorporates surface energy balance (SEB) constraints underlying the data in CLM-LST modeling within a biophysical framework. Compared with a pure physical method and pure ML methods, the PC-LGBM model improves the prediction accuracy and physical interpretability of LST. It also demonstrates good extrapolation ability in its responses to extreme weather cases, suggesting that the PC-LGBM model enables not only empirical learning from data but also rational derivation from theory. The proposed method represents an innovative way to map accurate and physically interpretable gapless LST, and could provide insights to accelerate knowledge discovery in land surface processes and data mining in geographical parameter estimation.

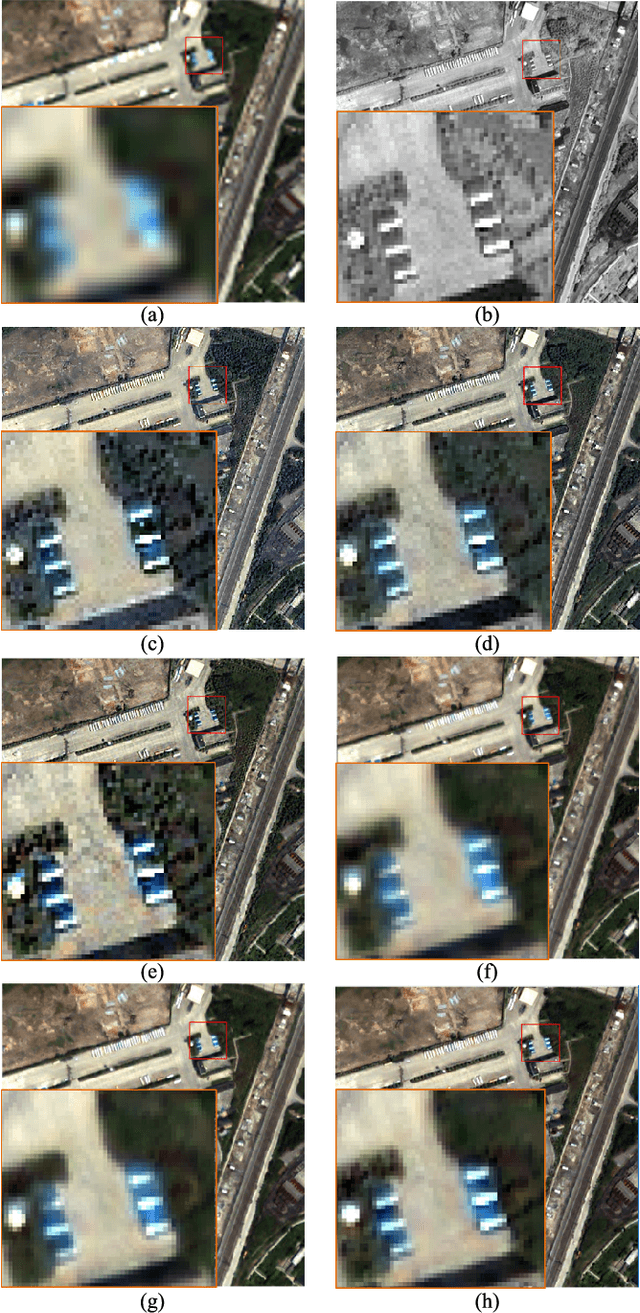

An Integrated Framework for the Heterogeneous Spatio-Spectral-Temporal Fusion of Remote Sensing Images

Sep 01, 2021

Abstract:Image fusion technology is widely used to fuse the complementary information between multi-source remote sensing images. Inspired by the frontier of deep learning, this paper first proposes a heterogeneous-integrated framework based on a novel deep residual cycle GAN. The proposed network consists of a forward fusion part and a backward degeneration feedback part. The forward part generates the desired fusion result from the various observations; the backward degeneration feedback part considers the imaging degradation process and regenerates the observations inversely from the fusion result. The proposed network can effectively fuse not only the homogeneous but also the heterogeneous information. In addition, for the first time, a heterogeneous-integrated fusion framework is proposed to simultaneously merge the complementary heterogeneous spatial, spectral and temporal information of multi-source heterogeneous observations. The proposed heterogeneous-integrated framework also provides a uniform mode that can complete various fusion tasks, including heterogeneous spatio-spectral fusion, spatio-temporal fusion, and heterogeneous spatio-spectral-temporal fusion. Experiments are conducted for two challenging scenarios of land cover changes and thick cloud coverage. Images from many remote sensing satellites, including MODIS, Landsat-8, Sentinel-1, and Sentinel-2, are utilized in the experiments. Both qualitative and quantitative evaluations confirm the effectiveness of the proposed method.

Coupling Model-Driven and Data-Driven Methods for Remote Sensing Image Restoration and Fusion

Aug 13, 2021

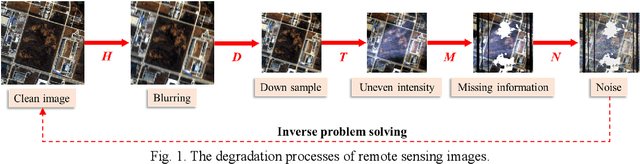

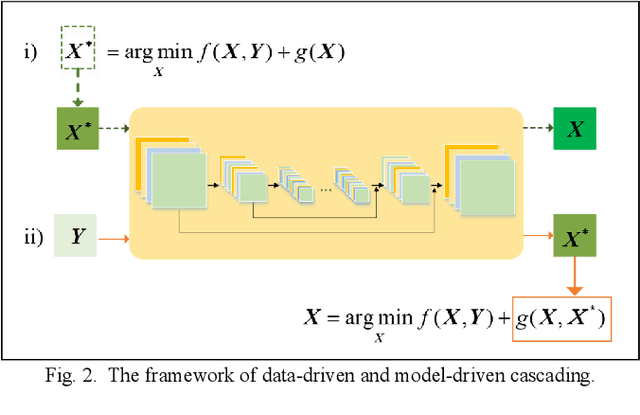

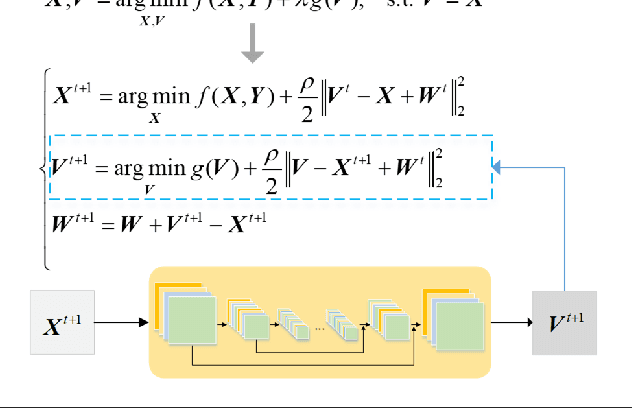

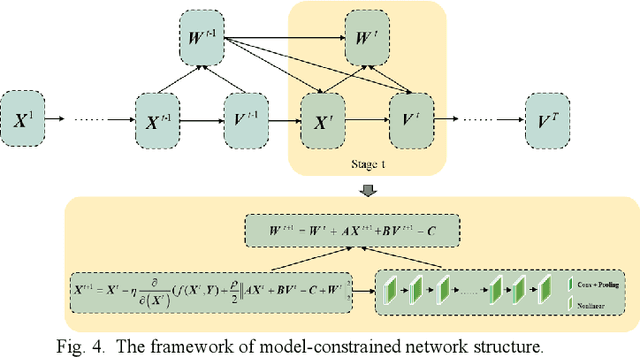

Abstract:In the fields of image restoration and image fusion, model-driven methods and data-driven methods are the two representative frameworks. However, both approaches have their respective advantages and disadvantages. The model-driven methods consider the imaging mechanism, which is deterministic and theoretically reasonable; however, they cannot easily model complicated nonlinear problems. The data-driven methods have a stronger prior knowledge learning capability for huge data, especially for nonlinear statistical features; however, the interpretability of the networks is poor, and they are over-dependent on training data. In this paper, we systematically investigate the coupling of model-driven and data-driven methods, which has rarely been considered in the remote sensing image restoration and fusion communities. We are the first to summarize the coupling approaches into the following three categories: 1) data-driven and model-driven cascading methods; 2) variational models with embedded learning; and 3) model-constrained network learning methods. The typical existing and potential coupling methods for remote sensing image restoration and fusion are introduced with application examples. This paper also gives some new insights into the potential future directions, in terms of both methods and applications.

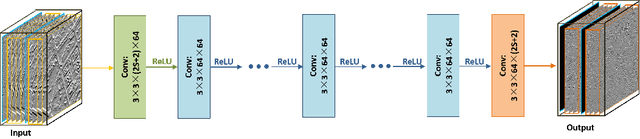

Spatial-Spectral Fusion by Combining Deep Learning and Variation Model

Sep 04, 2018

Abstract:In the field of spatial-spectral fusion, model-based methods and deep learning (DL)-based methods are the state of the art. This paper presents a fusion method that incorporates a deep neural network into the model-based method for the most common case in spatial-spectral fusion: PAN/multispectral (MS) fusion. Specifically, we first map the gradient of the high spatial resolution panchromatic image (HR-PAN) and the low spatial resolution multispectral image (LR-MS) to the gradient of the high spatial resolution multispectral image (HR-MS) via a deep residual convolutional neural network (CNN). Then we construct a fusion model that relates the LR-MS image, the gradient prior learned by the gradient network, and the ideal fused image. Finally, an iterative optimization algorithm is used to solve the fusion model. Both quantitative and visual assessments on high-quality images from various sources demonstrate that the proposed fusion method is superior to all the mainstream algorithms included in the comparison in terms of overall fusion accuracy.
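
The idea of combining a data-fidelity term with a learned gradient prior and solving it iteratively can be sketched in one dimension. The example below is only an illustration under strong assumptions: the energy E(x) = ||Dx - y||^2 + lam * ||grad(x) - g||^2, the block-average downsampling operator D, and plain gradient descent are all choices made here, not the paper's model or solver. The gradient prior g, which the paper obtains from a CNN, is replaced by the true gradient of a hypothetical signal.

```python
def downsample(x, s):
    """Block-average downsampling by factor s (assumed degradation operator D)."""
    return [sum(x[i:i + s]) / s for i in range(0, len(x), s)]

def downsample_adjoint(r, s):
    """Adjoint of block averaging: spread each value over s samples, scaled by 1/s."""
    return [ri / s for ri in r for _ in range(s)]

def grad(x):
    """Forward differences; output has len(x) - 1 entries."""
    return [x[i + 1] - x[i] for i in range(len(x) - 1)]

def grad_adjoint(d, n):
    """Adjoint of the forward-difference operator, returning n entries."""
    out = [0.0] * n
    for i, di in enumerate(d):
        out[i] -= di
        out[i + 1] += di
    return out

def fuse(lr, grad_prior, s, lam=1.0, step=0.1, iters=2000):
    """Gradient descent on ||Dx - lr||^2 + lam * ||grad(x) - grad_prior||^2."""
    x = [v for v in lr for _ in range(s)]  # nearest-neighbour upsampling as the start
    n = len(x)
    for _ in range(iters):
        r = [a - b for a, b in zip(downsample(x, s), lr)]   # data residual
        d = [a - b for a, b in zip(grad(x), grad_prior)]    # prior residual
        g_data = downsample_adjoint(r, s)
        g_prior = grad_adjoint(d, n)
        x = [xi - step * 2.0 * (gd + lam * gp)
             for xi, gd, gp in zip(x, g_data, g_prior)]
    return x

# Hypothetical high-resolution signal (illustration only).
hr_true = [1.0, 2.0, 4.0, 4.0, 3.0, 1.0, 0.0, 2.0]
lr_obs = downsample(hr_true, 2)
prior = grad(hr_true)   # stand-in for the CNN-predicted gradient
fused = fuse(lr_obs, prior, 2)
max_err = max(abs(a - b) for a, b in zip(fused, hr_true))
```

With a perfect gradient prior the low-resolution observation fixes the local means and the prior fixes the detail, so the iteration recovers the original signal; with an imperfect prior, `lam` trades off the two terms.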
