Medb Corcoran

Generating Interpretable Counterfactual Explanations By Implicit Minimisation of Epistemic and Aleatoric Uncertainties

Mar 16, 2021

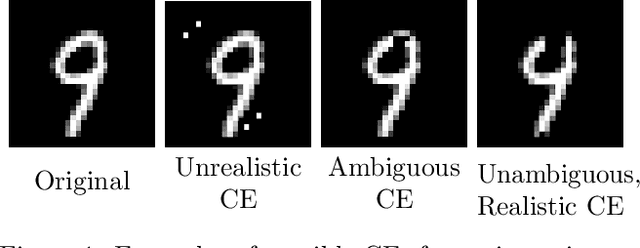

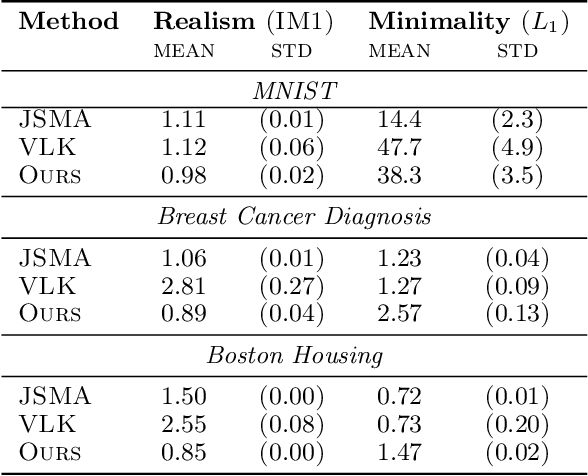

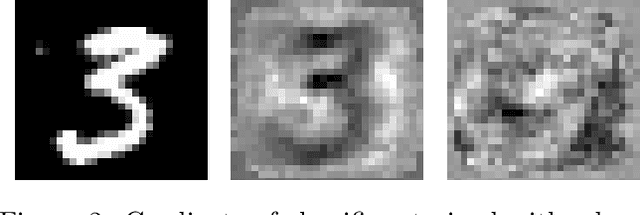

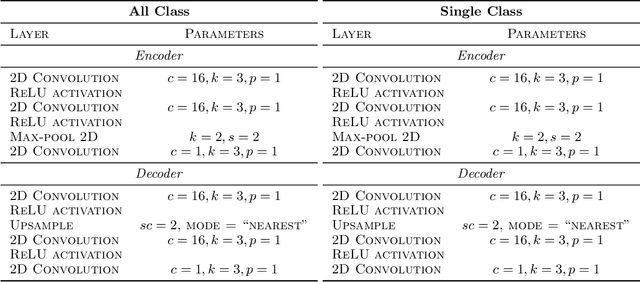

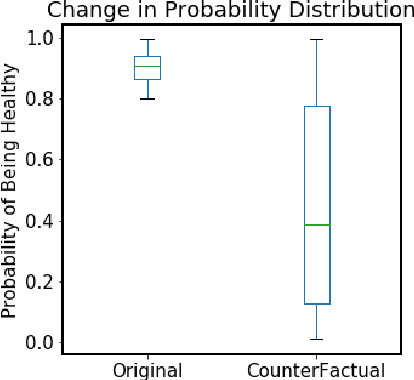

Abstract: Counterfactual explanations (CEs) are a practical tool for demonstrating why machine learning classifiers make particular decisions. For CEs to be useful, it is important that they are easy for users to interpret. Existing methods for generating interpretable CEs rely on auxiliary generative models, which may not be suitable for complex datasets and which incur engineering overhead. We introduce a simple and fast method for generating interpretable CEs in a white-box setting without an auxiliary model, by using the predictive uncertainty of the classifier. Our experiments show that our proposed algorithm generates more interpretable CEs, according to IM1 scores, than existing methods. Additionally, our approach allows us to estimate the uncertainty of a CE, which may be important in safety-critical applications, such as those in the medical domain.

* 21 pages, 13 figures

Predicting Illness for a Sustainable Dairy Agriculture: Predicting and Explaining the Onset of Mastitis in Dairy Cows

Jan 07, 2021

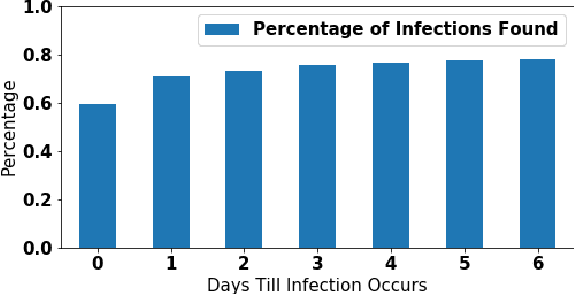

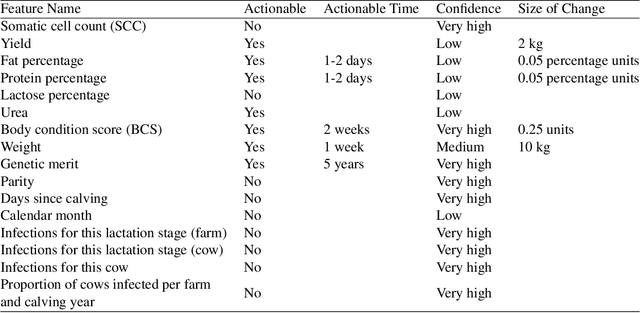

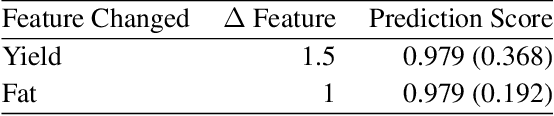

Abstract: Mastitis is a billion-dollar health problem for the modern dairy industry, with implications for antibiotic resistance. The use of AI techniques to identify the early onset of this disease thus has significant implications for the sustainability of this agricultural sector. Current approaches to treating mastitis involve antibiotics, and this practice is coming under ever-increasing scrutiny. Using machine learning models to identify cows at risk of developing mastitis, and applying targeted treatment regimes to only those animals, promotes a more sustainable approach. Incorrect predictions from such models, however, can lead to monetary losses, unnecessary use of antibiotics, and even the premature death of animals, so it is important to generate compelling explanations for predictions to build trust with users and to better support their decision-making. In this paper we demonstrate a system developed to predict mastitis infections in cows and provide explanations of these predictions using counterfactuals. We demonstrate the system and describe the engagement with farmers undertaken to build it.
