Md Hasibul Amin

University Of Houston

Multilingual Financial Fraud Detection Using Machine Learning and Transformer Models: A Bangla-English Study

Mar 11, 2026Abstract:Financial fraud detection has emerged as a critical research challenge amid the rapid expansion of digital financial platforms. Although machine learning approaches have demonstrated strong performance in identifying fraudulent activities, most existing research focuses exclusively on English-language data, limiting applicability to multilingual contexts. Bangla (Bengali), despite being spoken by over 250 million people, remains largely unexplored in this domain. In this work, we investigate financial fraud detection in a multilingual Bangla-English setting using a dataset comprising legitimate and fraudulent financial messages. We evaluate classical machine learning models (Logistic Regression, Linear SVM, and Ensemble classifiers) using TF-IDF features alongside transformer-based architectures. Experimental results using 5-fold stratified cross-validation demonstrate that Linear SVM achieves the best performance with 91.59 percent accuracy and 91.30 percent F1 score, outperforming the transformer model (89.49 percent accuracy, 88.88 percent F1) by approximately 2 percentage points. The transformer exhibits higher fraud recall (94.19 percent) but suffers from elevated false positive rates. Exploratory analysis reveals distinctive patterns: scam messages are longer, contain urgency-inducing terms, and frequently include URLs (32 percent) and phone numbers (97 percent), while legitimate messages feature transactional confirmations and specific currency references. Our findings highlight that classical machine learning with well-crafted features remains competitive for multilingual fraud detection, while also underscoring the challenges posed by linguistic diversity, code-mixing, and low-resource language constraints.

CrossNAS: A Cross-Layer Neural Architecture Search Framework for PIM Systems

May 28, 2025Abstract:In this paper, we propose the CrossNAS framework, an automated approach for exploring a vast, multidimensional search space that spans various design abstraction layers-circuits, architecture, and systems-to optimize the deployment of machine learning workloads on analog processing-in-memory (PIM) systems. CrossNAS leverages the single-path one-shot weight-sharing strategy combined with the evolutionary search for the first time in the context of PIM system mapping and optimization. CrossNAS sets a new benchmark for PIM neural architecture search (NAS), outperforming previous methods in both accuracy and energy efficiency while maintaining comparable or shorter search times.

PIM-LLM: A High-Throughput Hybrid PIM Architecture for 1-bit LLMs

Mar 31, 2025

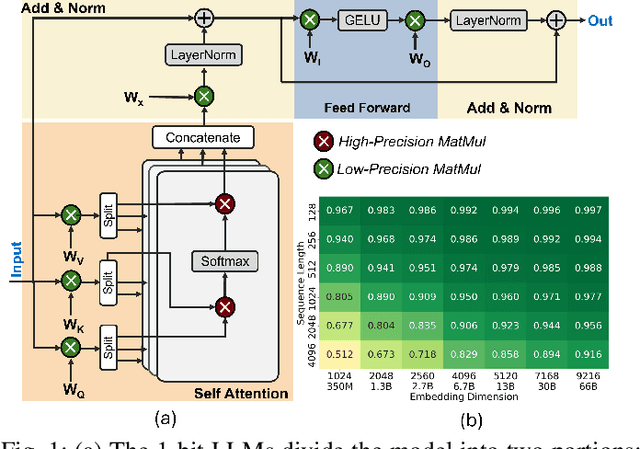

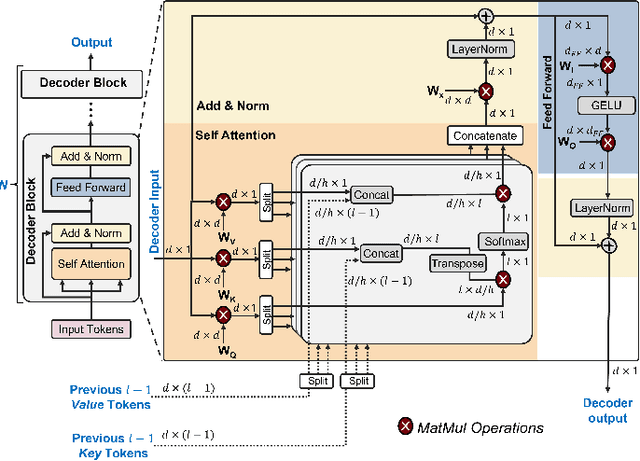

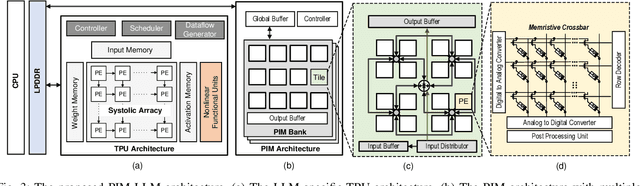

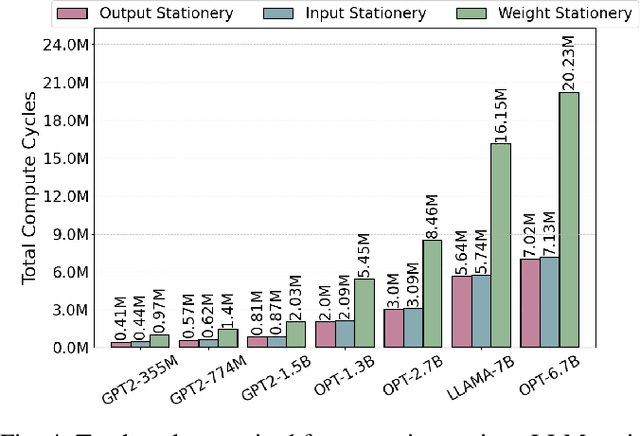

Abstract:In this paper, we propose PIM-LLM, a hybrid architecture developed to accelerate 1-bit large language models (LLMs). PIM-LLM leverages analog processing-in-memory (PIM) architectures and digital systolic arrays to accelerate low-precision matrix multiplication (MatMul) operations in projection layers and high-precision MatMul operations in attention heads of 1-bit LLMs, respectively. Our design achieves up to roughly 80x improvement in tokens per second and a 70% increase in tokens per joule compared to conventional hardware accelerators. Additionally, PIM-LLM outperforms previous PIM-based LLM accelerators, setting a new benchmark with at least 2x and 5x improvement in GOPS and GOPS/W, respectively.

IMAC-Sim: A Circuit-level Simulator For In-Memory Analog Computing Architectures

Apr 18, 2023

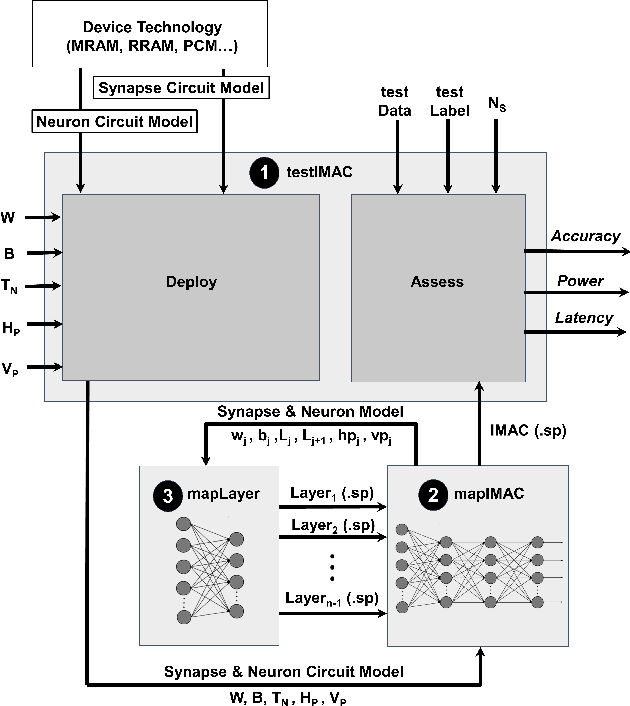

Abstract:With the increased attention to memristive-based in-memory analog computing (IMAC) architectures as an alternative for energy-hungry computer systems for machine learning applications, a tool that enables exploring their device- and circuit-level design space can significantly boost the research and development in this area. Thus, in this paper, we develop IMAC-Sim, a circuit-level simulator for the design space exploration of IMAC architectures. IMAC-Sim is a Python-based simulation framework, which creates the SPICE netlist of the IMAC circuit based on various device- and circuit-level hyperparameters selected by the user, and automatically evaluates the accuracy, power consumption, and latency of the developed circuit using a user-specified dataset. Moreover, IMAC-Sim simulates the interconnect parasitic resistance and capacitance in the IMAC architectures and is also equipped with horizontal and vertical partitioning techniques to surmount these reliability challenges. IMAC-Sim is a flexible tool that supports a broad range of device- and circuit-level hyperparameters. In this paper, we perform controlled experiments to exhibit some of the important capabilities of the IMAC-Sim, while the entirety of its features is available for researchers via an open-source tool.

Heterogeneous Integration of In-Memory Analog Computing Architectures with Tensor Processing Units

Apr 18, 2023

Abstract:Tensor processing units (TPUs), specialized hardware accelerators for machine learning tasks, have shown significant performance improvements when executing convolutional layers in convolutional neural networks (CNNs). However, they struggle to maintain the same efficiency in fully connected (FC) layers, leading to suboptimal hardware utilization. In-memory analog computing (IMAC) architectures, on the other hand, have demonstrated notable speedup in executing FC layers. This paper introduces a novel, heterogeneous, mixed-signal, and mixed-precision architecture that integrates an IMAC unit with an edge TPU to enhance mobile CNN performance. To leverage the strengths of TPUs for convolutional layers and IMAC circuits for dense layers, we propose a unified learning algorithm that incorporates mixed-precision training techniques to mitigate potential accuracy drops when deploying models on the TPU-IMAC architecture. The simulations demonstrate that the TPU-IMAC configuration achieves up to $2.59\times$ performance improvements, and $88\%$ memory reductions compared to conventional TPU architectures for various CNN models while maintaining comparable accuracy. The TPU-IMAC architecture shows potential for various applications where energy efficiency and high performance are essential, such as edge computing and real-time processing in mobile devices. The unified training algorithm and the integration of IMAC and TPU architectures contribute to the potential impact of this research on the broader machine learning landscape.

Detecting Conspiracy Theory Against COVID-19 Vaccines

Nov 20, 2022

Abstract:Since the beginning of the vaccination trial, social media has been flooded with anti-vaccination comments and conspiracy beliefs. As the day passes, the number of COVID- 19 cases increases, and online platforms and a few news portals entertain sharing different conspiracy theories. The most popular conspiracy belief was the link between the 5G network spreading COVID-19 and the Chinese government spreading the virus as a bioweapon, which initially created racial hatred. Although some disbelief has less impact on society, others create massive destruction. For example, the 5G conspiracy led to the burn of the 5G Tower, and belief in the Chinese bioweapon story promoted an attack on the Asian-Americans. Another popular conspiracy belief was that Bill Gates spread this Coronavirus disease (COVID-19) by launching a mass vaccination program to track everyone. This Conspiracy belief creates distrust issues among laypeople and creates vaccine hesitancy. This study aims to discover the conspiracy theory against the vaccine on social platforms. We performed a sentiment analysis on the 598 unique sample comments related to COVID-19 vaccines. We used two different models, BERT and Perspective API, to find out the sentiment and toxicity of the sentence toward the COVID-19 vaccine.

MRAM-based Analog Sigmoid Function for In-memory Computing

Apr 21, 2022

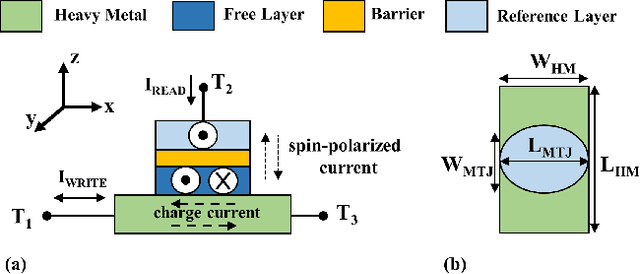

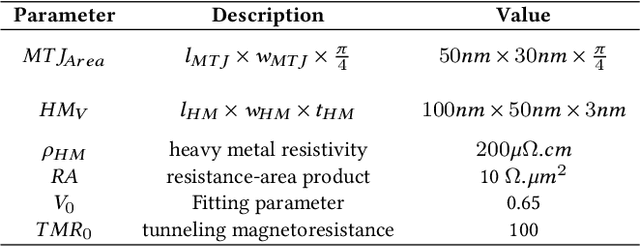

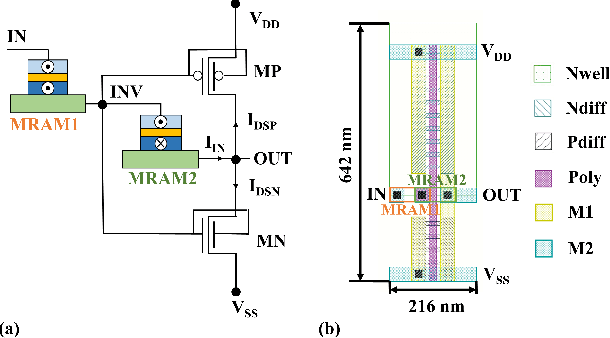

Abstract:We propose an analog implementation of the transcendental activation function leveraging two spin-orbit torque magnetoresistive random-access memory (SOT-MRAM) devices and a CMOS inverter. The proposed analog neuron circuit consumes 1.8-27x less power, and occupies 2.5-4931x smaller area, compared to the state-of-the-art analog and digital implementations. Moreover, the developed neuron can be readily integrated with memristive crossbars without requiring any intermediate signal conversion units. The architecture-level analyses show that a fully-analog in-memory computing (IMC) circuit that use our SOT-MRAM neuron along with an SOT-MRAM based crossbar can achieve more than 1.1x, 12x, and 13.3x reduction in power, latency, and energy, respectively, compared to a mixed-signal implementation with analog memristive crossbars and digital neurons. Finally, through cross-layer analyses, we provide a guide on how varying the device-level parameters in our neuron can affect the accuracy of multilayer perceptron (MLP) for MNIST classification.

Interconnect Parasitics and Partitioning in Fully-Analog In-Memory Computing Architectures

Jan 29, 2022

Abstract:Fully-analog in-memory computing (IMC) architectures that implement both matrix-vector multiplication and non-linear vector operations within the same memory array have shown promising performance benefits over conventional IMC systems due to the removal of energy-hungry signal conversion units. However, maintaining the computation in the analog domain for the entire deep neural network (DNN) comes with potential sensitivity to interconnect parasitics. Thus, in this paper, we investigate the effect of wire parasitic resistance and capacitance on the accuracy of DNN models deployed on fully-analog IMC architectures. Moreover, we propose a partitioning mechanism to alleviate the impact of the parasitic while keeping the computation in the analog domain through dividing large arrays into multiple partitions. The SPICE circuit simulation results for a 400 X 120 X 84 X 10 DNN model deployed on a fully-analog IMC circuit show that a 94.84% accuracy could be achieved for MNIST classification application with 16, 8, and 8 horizontal partitions, as well as 8, 8, and 1 vertical partitions for first, second, and third layers of the DNN, respectively, which is comparable to the ~97% accuracy realized by digital implementation on CPU. It is shown that accuracy benefits are achieved at the cost of higher power consumption due to the extra circuitry required for handling partitioning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge