Maria Dronova

AirNeRF: 3D Reconstruction of Human with Drone and NeRF for Future Communication Systems

Jul 15, 2024

Abstract: In the rapidly evolving landscape of digital content creation, the demand for fast, convenient, and autonomous methods of crafting detailed 3D reconstructions of humans has grown significantly. To address this need, our AirNeRF system presents an innovative pathway to the creation of a realistic 3D human avatar. Our approach combines Neural Radiance Fields (NeRF) with an automated drone-based video capture method. The acquired data passes through several stages of our system to produce high-quality human body reconstructions quickly and precisely. The rigged mesh derived from our system is a strong foundation for free-view synthesis of dynamic humans and is particularly well suited to immersive experiences in gaming and virtual reality.

FlyNeRF: NeRF-Based Aerial Mapping for High-Quality 3D Scene Reconstruction

Apr 19, 2024

Abstract: Current methods for 3D reconstruction and environmental mapping frequently face challenges in achieving high precision, highlighting the need for practical and effective solutions. In response to this issue, our study introduces FlyNeRF, a system integrating Neural Radiance Fields (NeRF) with drone-based data acquisition for high-quality 3D reconstruction. An unmanned aerial vehicle (UAV) captures images and their corresponding spatial coordinates, and the obtained data is then used for the initial NeRF-based 3D reconstruction of the environment. The render quality of this reconstruction is then evaluated by an image evaluation neural network developed within the scope of our system. According to the results of the image evaluation module, an autonomous algorithm determines the position for additional image capture, thereby improving the reconstruction quality. The neural network introduced for render quality assessment demonstrates an accuracy of 97%. Furthermore, our adaptive methodology enhances the overall reconstruction quality, resulting in an average improvement of 2.5 dB in Peak Signal-to-Noise Ratio (PSNR) for the 10% quantile. FlyNeRF demonstrates promising results, offering advancements in fields such as environmental monitoring, surveillance, and digital twins, where high-fidelity 3D reconstructions are crucial.
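For readers unfamiliar with the metric, the following is a minimal sketch of how PSNR and a low-quantile summary over rendered views might be computed. It is not the authors' evaluation code. The function names `psnr` and `low_quantile_psnr` are hypothetical, and reading "the 10% quantile" as the 10th percentile of per-view PSNR values is an assumption, since the abstract does not define it further.

```python
import numpy as np


def psnr(reference, rendered, max_value=1.0):
    """Peak Signal-to-Noise Ratio in dB between two images of equal shape.

    max_value is the largest possible pixel value (1.0 for float images
    in [0, 1], 255 for 8-bit images).
    """
    reference = np.asarray(reference, dtype=np.float64)
    rendered = np.asarray(rendered, dtype=np.float64)
    mse = np.mean((reference - rendered) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))


def low_quantile_psnr(per_view_psnr, q=0.10):
    """Assumed reading of 'PSNR for the 10% quantile': the q-quantile of
    per-view PSNR values, which summarizes the worst-rendered views."""
    values = np.asarray(per_view_psnr, dtype=np.float64)
    return float(np.quantile(values, q))
```

Tracking a low quantile rather than the mean emphasizes the poorest renders, which are the views an adaptive capture strategy would be expected to improve.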

LLM-MARS: Large Language Model for Behavior Tree Generation and NLP-enhanced Dialogue in Multi-Agent Robot Systems

Dec 14, 2023

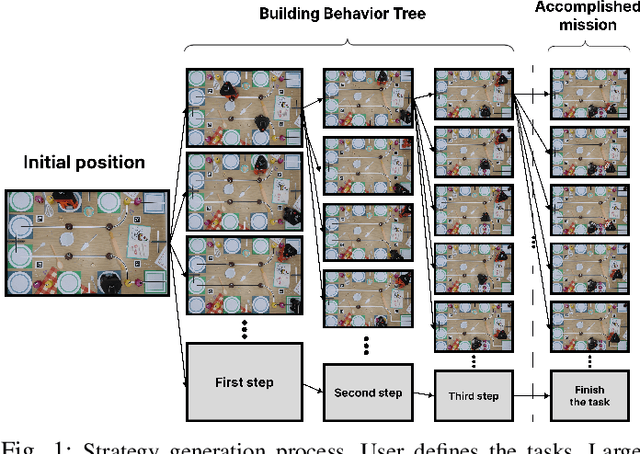

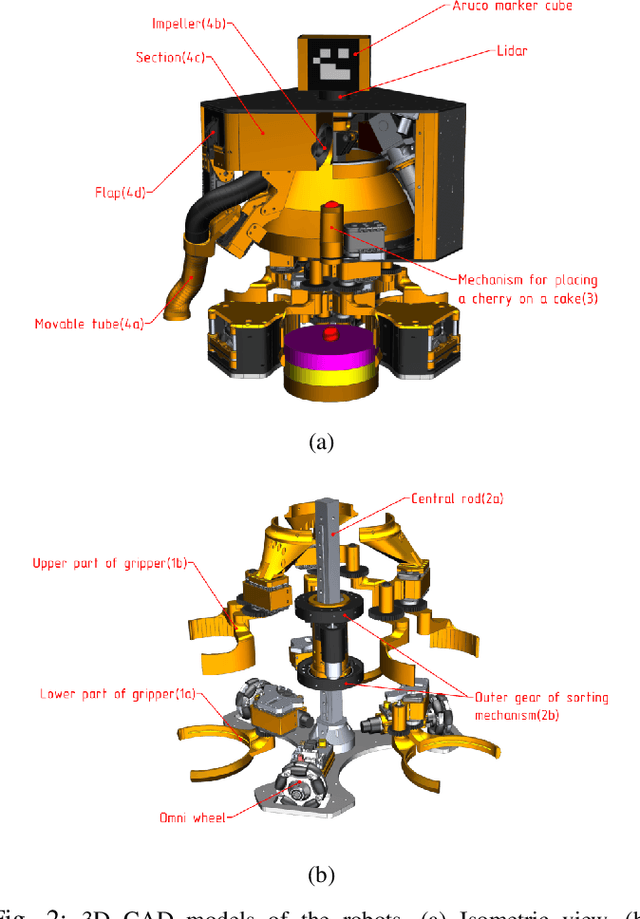

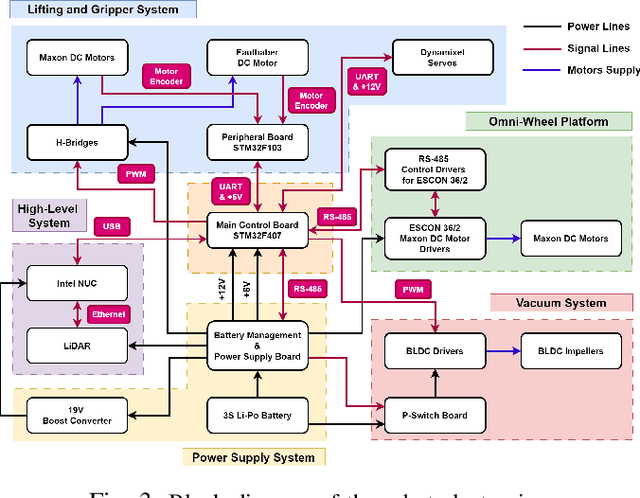

Abstract: This paper introduces LLM-MARS, the first technology that utilizes a Large Language Model based Artificial Intelligence for Multi-Agent Robot Systems. LLM-MARS enables dynamic dialogues between humans and robots, allowing the latter to generate behavior based on operator commands and provide informative answers to questions about their actions. LLM-MARS is built on a transformer-based Large Language Model, fine-tuned from the Falcon 7B model. We employ a multimodal approach using LoRA adapters for different tasks. The first LoRA adapter was developed by fine-tuning the base model on examples of Behavior Trees and their corresponding commands. The second LoRA adapter was developed by fine-tuning on question-answering examples. Practical trials on a multi-agent system of two robots within the Eurobot 2023 game rules demonstrate promising results. The robots achieve an average task execution accuracy of 79.28% on compound commands. For commands containing up to two tasks, accuracy exceeded 90%. Evaluation confirms that the system's answers to operators' questions exhibit high accuracy, relevance, and informativeness. LLM-MARS and similar multi-agent robotic systems hold significant potential to revolutionize logistics, enabling autonomous exploration missions and advancing Industry 5.0.
