Marco Maggini

From Arabic Text to Puzzles: LLM-Driven Development of Arabic Educational Crosswords

Jan 19, 2025Abstract:We present an Arabic crossword puzzle generator from a given text that utilizes advanced language models such as GPT-4-Turbo, GPT-3.5-Turbo and Llama3-8B-Instruct, specifically developed for educational purposes, this innovative generator leverages a meticulously compiled dataset named Arabic-Clue-Instruct with over 50,000 entries encompassing text, answers, clues, and categories. This dataset is intricately designed to aid in the generation of pertinent clues linked to specific texts and keywords within defined categories. This project addresses the scarcity of advanced educational tools tailored for the Arabic language, promoting enhanced language learning and cognitive development. By providing a culturally and linguistically relevant tool, our objective is to make learning more engaging and effective through gamification and interactivity. Integrating state-of-the-art artificial intelligence with contemporary learning methodologies, this tool can generate crossword puzzles from any given educational text, thereby facilitating an interactive and enjoyable learning experience. This tool not only advances educational paradigms but also sets a new standard in interactive and cognitive learning technologies. The model and dataset are publicly available.

Advancing Student Writing Through Automated Syntax Feedback

Jan 13, 2025

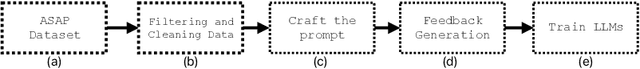

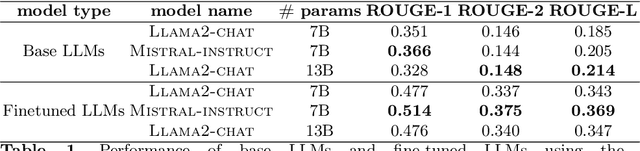

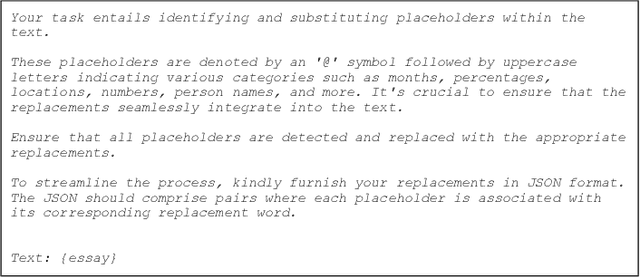

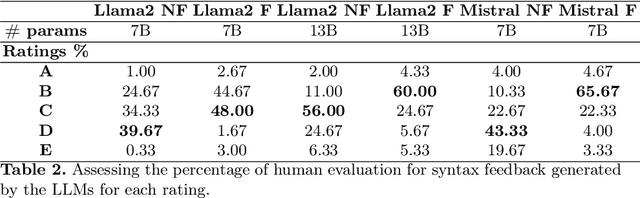

Abstract:This study underscores the pivotal role of syntax feedback in augmenting the syntactic proficiency of students. Recognizing the challenges faced by learners in mastering syntactic nuances, we introduce a specialized dataset named Essay-Syntax-Instruct designed to enhance the understanding and application of English syntax among these students. Leveraging the capabilities of Large Language Models (LLMs) such as GPT3.5-Turbo, Llama-2-7b-chat-hf, Llama-2-13b-chat-hf, and Mistral-7B-Instruct-v0.2, this work embarks on a comprehensive fine-tuning process tailored to the syntax improvement task. Through meticulous evaluation, we demonstrate that the fine-tuned LLMs exhibit a marked improvement in addressing syntax-related challenges, thereby serving as a potent tool for students to identify and rectify their syntactic errors. The findings not only highlight the effectiveness of the proposed dataset in elevating the performance of LLMs for syntax enhancement but also illuminate a promising path for utilizing advanced language models to support language acquisition efforts. This research contributes to the broader field of language learning technology by showcasing the potential of LLMs in facilitating the linguistic development of Students.

Pirates of the RAG: Adaptively Attacking LLMs to Leak Knowledge Bases

Dec 24, 2024

Abstract:The growing ubiquity of Retrieval-Augmented Generation (RAG) systems in several real-world services triggers severe concerns about their security. A RAG system improves the generative capabilities of a Large Language Models (LLM) by a retrieval mechanism which operates on a private knowledge base, whose unintended exposure could lead to severe consequences, including breaches of private and sensitive information. This paper presents a black-box attack to force a RAG system to leak its private knowledge base which, differently from existing approaches, is adaptive and automatic. A relevance-based mechanism and an attacker-side open-source LLM favor the generation of effective queries to leak most of the (hidden) knowledge base. Extensive experimentation proves the quality of the proposed algorithm in different RAG pipelines and domains, comparing to very recent related approaches, which turn out to be either not fully black-box, not adaptive, or not based on open-source models. The findings from our study remark the urgent need for more robust privacy safeguards in the design and deployment of RAG systems.

Harnessing LLMs for Educational Content-Driven Italian Crossword Generation

Nov 25, 2024Abstract:In this work, we unveil a novel tool for generating Italian crossword puzzles from text, utilizing advanced language models such as GPT-4o, Mistral-7B-Instruct-v0.3, and Llama3-8b-Instruct. Crafted specifically for educational applications, this cutting-edge generator makes use of the comprehensive Italian-Clue-Instruct dataset, which comprises over 30,000 entries including diverse text, solutions, and types of clues. This carefully assembled dataset is designed to facilitate the creation of contextually relevant clues in various styles associated with specific texts and keywords. The study delves into four distinctive styles of crossword clues: those without format constraints, those formed as definite determiner phrases, copular sentences, and bare noun phrases. Each style introduces unique linguistic structures to diversify clue presentation. Given the lack of sophisticated educational tools tailored to the Italian language, this project seeks to enhance learning experiences and cognitive development through an engaging, interactive platform. By meshing state-of-the-art AI with contemporary educational strategies, our tool can dynamically generate crossword puzzles from Italian educational materials, thereby providing an enjoyable and interactive learning environment. This technological advancement not only redefines educational paradigms but also sets a new benchmark for interactive and cognitive language learning solutions.

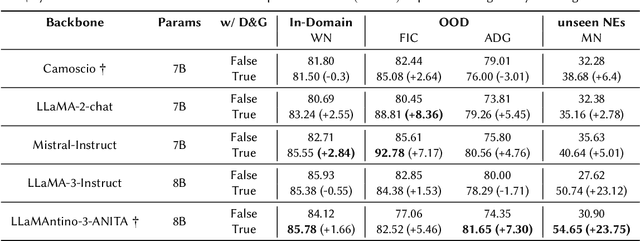

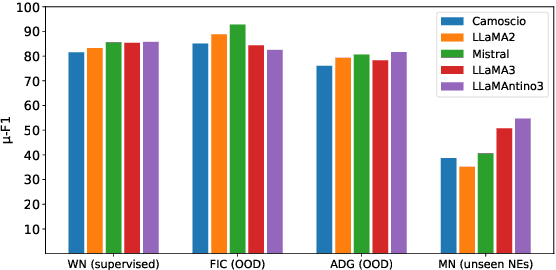

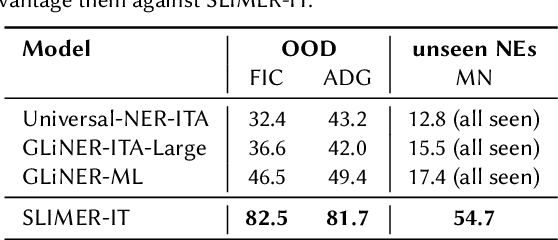

SLIMER-IT: Zero-Shot NER on Italian Language

Sep 24, 2024

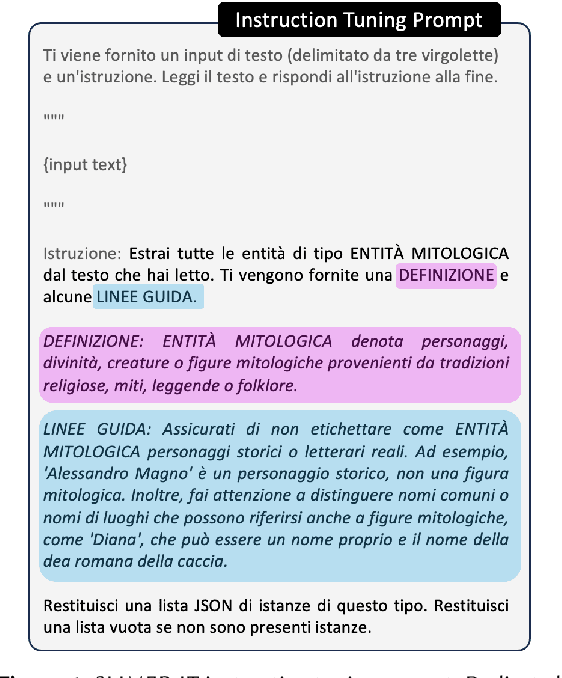

Abstract:Traditional approaches to Named Entity Recognition (NER) frame the task into a BIO sequence labeling problem. Although these systems often excel in the downstream task at hand, they require extensive annotated data and struggle to generalize to out-of-distribution input domains and unseen entity types. On the contrary, Large Language Models (LLMs) have demonstrated strong zero-shot capabilities. While several works address Zero-Shot NER in English, little has been done in other languages. In this paper, we define an evaluation framework for Zero-Shot NER, applying it to the Italian language. Furthermore, we introduce SLIMER-IT, the Italian version of SLIMER, an instruction-tuning approach for zero-shot NER leveraging prompts enriched with definition and guidelines. Comparisons with other state-of-the-art models, demonstrate the superiority of SLIMER-IT on never-seen-before entity tags.

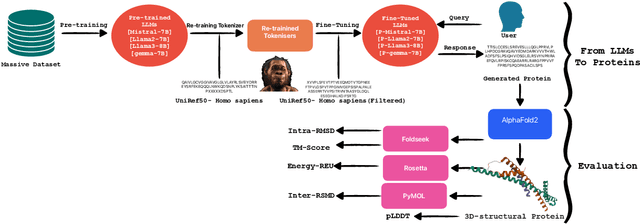

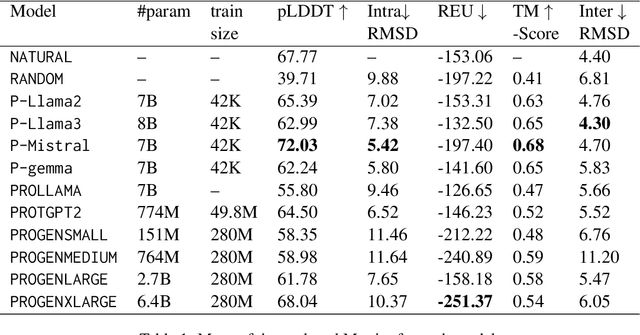

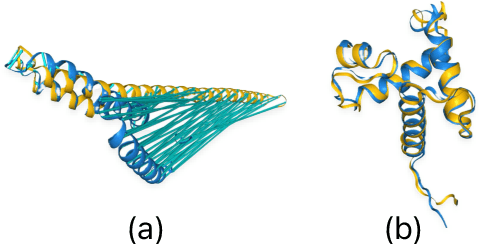

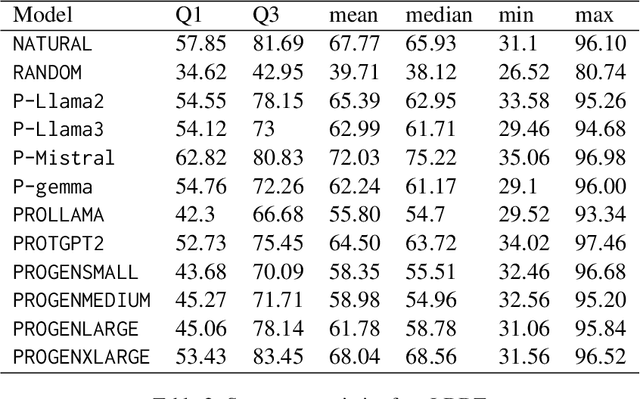

Design Proteins Using Large Language Models: Enhancements and Comparative Analyses

Aug 12, 2024

Abstract:Pre-trained LLMs have demonstrated substantial capabilities across a range of conventional natural language processing (NLP) tasks, such as summarization and entity recognition. In this paper, we explore the application of LLMs in the generation of high-quality protein sequences. Specifically, we adopt a suite of pre-trained LLMs, including Mistral-7B1, Llama-2-7B2, Llama-3-8B3, and gemma-7B4, to produce valid protein sequences. All of these models are publicly available.5 Unlike previous work in this field, our approach utilizes a relatively small dataset comprising 42,000 distinct human protein sequences. We retrain these models to process protein-related data, ensuring the generation of biologically feasible protein structures. Our findings demonstrate that even with limited data, the adapted models exhibit efficiency comparable to established protein-focused models such as ProGen varieties, ProtGPT2, and ProLLaMA, which were trained on millions of protein sequences. To validate and quantify the performance of our models, we conduct comparative analyses employing standard metrics such as pLDDT, RMSD, TM-score, and REU. Furthermore, we commit to making the trained versions of all four models publicly available, fostering greater transparency and collaboration in the field of computational biology.

Show Less, Instruct More: Enriching Prompts with Definitions and Guidelines for Zero-Shot NER

Jul 02, 2024Abstract:Recently, several specialized instruction-tuned Large Language Models (LLMs) for Named Entity Recognition (NER) have emerged. Compared to traditional NER approaches, these models have strong generalization capabilities. Existing LLMs mainly focus on zero-shot NER in out-of-domain distributions, being fine-tuned on an extensive number of entity classes that often highly or completely overlap with test sets. In this work instead, we propose SLIMER, an approach designed to tackle never-seen-before named entity tags by instructing the model on fewer examples, and by leveraging a prompt enriched with definition and guidelines. Experiments demonstrate that definition and guidelines yield better performance, faster and more robust learning, particularly when labelling unseen Named Entities. Furthermore, SLIMER performs comparably to state-of-the-art approaches in out-of-domain zero-shot NER, while being trained on a reduced tag set.

Automating Turkish Educational Quiz Generation Using Large Language Models

Jun 05, 2024Abstract:Crafting quizzes from educational content is a pivotal activity that benefits both teachers and students by reinforcing learning and evaluating understanding. In this study, we introduce a novel approach to generate quizzes from Turkish educational texts, marking a pioneering endeavor in educational technology specifically tailored to the Turkish educational context. We present a specialized dataset, named the Turkish-Quiz-Instruct, comprising an extensive collection of Turkish educational texts accompanied by multiple-choice and short-answer quizzes. This research leverages the capabilities of Large Language Models (LLMs), including GPT-4-Turbo, GPT-3.5-Turbo, Llama-2-7b-chat-hf, and Llama-2-13b-chat-hf, to automatically generate quiz questions and answers from the Turkish educational content. Our work delineates the methodology for employing these LLMs in the context of Turkish educational material, thereby opening new avenues for automated Turkish quiz generation. The study not only demonstrates the efficacy of using such models for generating coherent and relevant quiz content but also sets a precedent for future research in the domain of automated educational content creation for languages other than English. The Turkish-Quiz-Instruct dataset is introduced as a valuable resource for researchers and practitioners aiming to explore the boundaries of educational technology and language-specific applications of LLMs in Turkish. By addressing the challenges of quiz generation in a non-English context specifically Turkish, this study contributes significantly to the field of Turkish educational technology, providing insights into the potential of leveraging LLMs for educational purposes across diverse linguistic landscapes.

A Turkish Educational Crossword Puzzle Generator

May 15, 2024

Abstract:This paper introduces the first Turkish crossword puzzle generator designed to leverage the capabilities of large language models (LLMs) for educational purposes. In this work, we introduced two specially created datasets: one with over 180,000 unique answer-clue pairs for generating relevant clues from the given answer, and another with over 35,000 samples containing text, answer, category, and clue data, aimed at producing clues for specific texts and keywords within certain categories. Beyond entertainment, this generator emerges as an interactive educational tool that enhances memory, vocabulary, and problem-solving skills. It's a notable step in AI-enhanced education, merging game-like engagement with learning for Turkish and setting new standards for interactive, intelligent learning tools in Turkish.

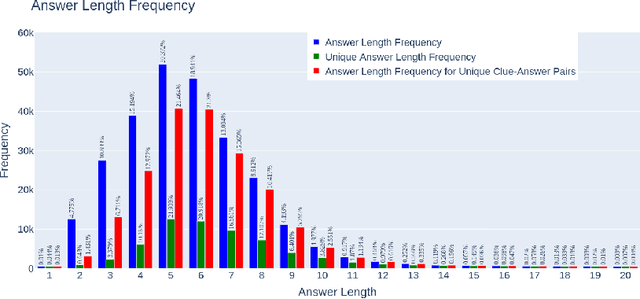

Clue-Instruct: Text-Based Clue Generation for Educational Crossword Puzzles

Apr 09, 2024Abstract:Crossword puzzles are popular linguistic games often used as tools to engage students in learning. Educational crosswords are characterized by less cryptic and more factual clues that distinguish them from traditional crossword puzzles. Despite there exist several publicly available clue-answer pair databases for traditional crosswords, educational clue-answer pairs datasets are missing. In this article, we propose a methodology to build educational clue generation datasets that can be used to instruct Large Language Models (LLMs). By gathering from Wikipedia pages informative content associated with relevant keywords, we use Large Language Models to automatically generate pedagogical clues related to the given input keyword and its context. With such an approach, we created clue-instruct, a dataset containing 44,075 unique examples with text-keyword pairs associated with three distinct crossword clues. We used clue-instruct to instruct different LLMs to generate educational clues from a given input content and keyword. Both human and automatic evaluations confirmed the quality of the generated clues, thus validating the effectiveness of our approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge