Lucas C. Ribas

VORTEX: Challenging CNNs at Texture Recognition by using Vision Transformers with Orderless and Randomized Token Encodings

Mar 09, 2025

Abstract:Texture recognition has recently been dominated by ImageNet-pre-trained deep Convolutional Neural Networks (CNNs), with specialized modifications and feature engineering required to achieve state-of-the-art (SOTA) performance. However, although Vision Transformers (ViTs) were introduced a few years ago, little is known about their texture recognition ability. Therefore, in this work, we introduce VORTEX (ViTs with Orderless and Randomized Token Encodings for Texture Recognition), a novel method that enables the effective use of ViTs for texture analysis. VORTEX extracts multi-depth token embeddings from pre-trained ViT backbones and employs a lightweight module to aggregate hierarchical features and perform orderless encoding, obtaining a better image representation for texture recognition tasks. This approach allows seamless integration with any ViT with the common transformer architecture. Moreover, no fine-tuning of the backbone is performed, since they are used only as frozen feature extractors, and the features are fed to a linear SVM. We evaluate VORTEX on nine diverse texture datasets, demonstrating its ability to achieve or surpass SOTA performance in a variety of texture analysis scenarios. By bridging the gap between texture recognition with CNNs and transformer-based architectures, VORTEX paves the way for adopting emerging transformer foundation models. Furthermore, VORTEX demonstrates robust computational efficiency when coupled with ViT backbones compared to CNNs with similar costs. The method implementation and experimental scripts are publicly available in our online repository.

A Comparative Survey of Vision Transformers for Feature Extraction in Texture Analysis

Jun 10, 2024

Abstract: Texture, a significant visual attribute in images, has been extensively investigated across various image recognition applications. Convolutional Neural Networks (CNNs), which have been successful in many computer vision tasks, are currently among the best texture analysis approaches. On the other hand, Vision Transformers (ViTs) have been surpassing the performance of CNNs on tasks such as object recognition, causing a paradigm shift in the field. However, ViTs have so far not been scrutinized for texture recognition, hindering a proper appreciation of their potential in this specific setting. For this reason, this work explores various pre-trained ViT architectures when transferred to tasks that rely on textures. We review 21 different ViT variants and perform an extensive evaluation and comparison with CNNs and hand-engineered models on several tasks, such as assessing robustness to changes in texture rotation, scale, and illumination, and distinguishing color textures, material textures, and texture attributes. The goal is to understand the potential and differences among these models when directly applied to texture recognition, using pre-trained ViTs primarily for feature extraction and employing linear classifiers for evaluation. We also evaluate their efficiency, which is one of their main drawbacks in contrast to other methods. Our results show that ViTs generally outperform both CNNs and hand-engineered models, especially when using stronger pre-training and on tasks involving in-the-wild textures (images from the internet). We highlight the following promising models: ViT-B with DINO pre-training, BeiTv2, and the Swin architecture, as well as the EfficientFormer as a low-cost alternative. In terms of efficiency, despite having a higher number of GFLOPs and parameters, ViT-B and BeiT(v2) can achieve a lower feature extraction time on GPUs compared to ResNet50.

Advanced wood species identification based on multiple anatomical sections and using deep feature transfer and fusion

Apr 12, 2024

Abstract: In recent years, we have seen many advancements in wood species identification. Methods like DNA analysis, Near Infrared (NIR) spectroscopy, and Direct Analysis in Real Time (DART) mass spectrometry complement the long-established wood anatomical assessment of cell and tissue morphology. However, most of these methods have some limitations such as high costs, the need for skilled experts for data interpretation, and the lack of good datasets for professional reference. Therefore, most of these methods, and certainly the wood anatomical assessment, may benefit from tools based on Artificial Intelligence. In this paper, we apply two transfer learning techniques with Convolutional Neural Networks (CNNs) to a multi-view Congolese wood species dataset including sections from different orientations and viewed at different microscopic magnifications. We explore two feature extraction methods in detail, namely Global Average Pooling (GAP) and Random Encoding of Aggregated Deep Activation Maps (RADAM), for efficient and accurate wood species identification. Our results indicate superior accuracy on diverse datasets and anatomical sections, surpassing the results of other methods. Our proposal represents a significant advancement in wood species identification, offering a robust tool to support the conservation of forest ecosystems and promote sustainable forestry practices.

RADAM: Texture Recognition through Randomized Aggregated Encoding of Deep Activation Maps

Mar 08, 2023

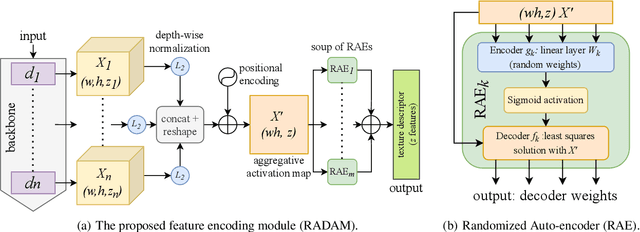

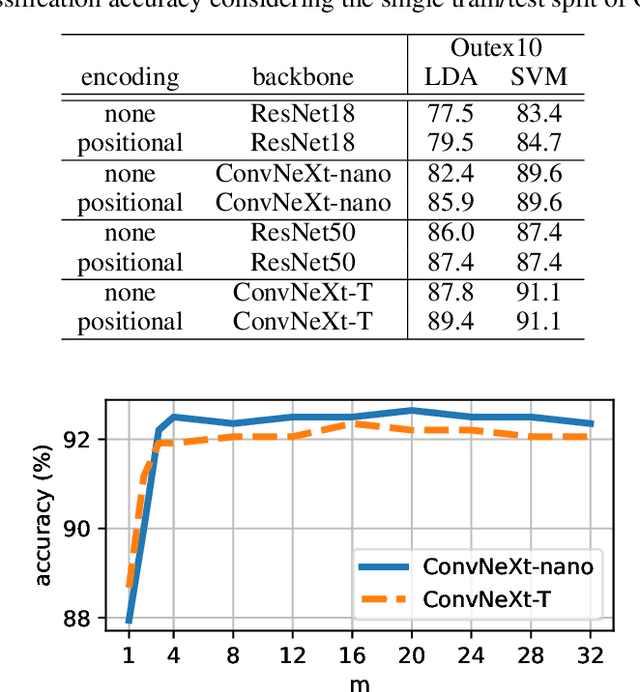

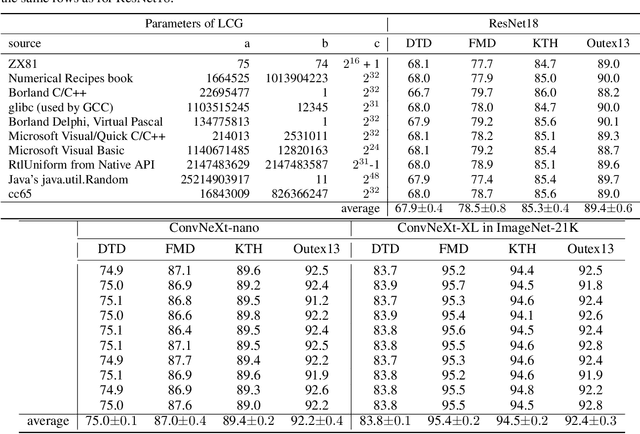

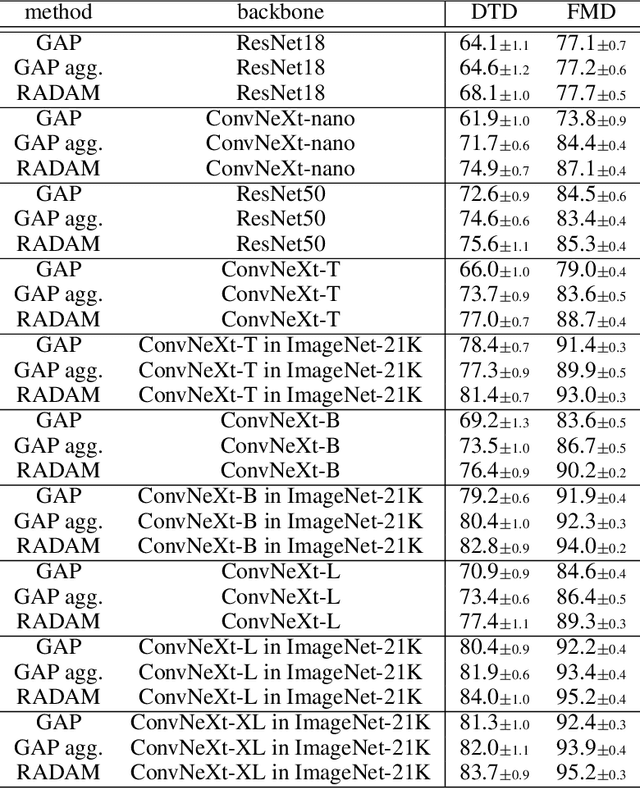

Abstract: Texture analysis is a classical yet challenging task in computer vision for which deep neural networks are actively being applied. Most approaches are based on building feature aggregation modules around a pre-trained backbone and then fine-tuning the new architecture on specific texture recognition tasks. Here we propose a new method named Random encoding of Aggregated Deep Activation Maps (RADAM) which extracts rich texture representations without ever changing the backbone. The technique consists of encoding the output at different depths of a pre-trained deep convolutional network using a Randomized Autoencoder (RAE). The RAE is trained locally to each image using a closed-form solution, and its decoder weights are used to compose a 1-dimensional texture representation that is fed into a linear SVM. This means that no fine-tuning or backpropagation is needed. We explore RADAM on several texture benchmarks and achieve state-of-the-art results with different computational budgets. Our results suggest that pre-trained backbones may not require additional fine-tuning for texture recognition if their learned representations are better encoded.
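
The closed-form training step mentioned in the RADAM abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `rae_decoder_weights`, the sigmoid activation, the Gaussian random encoder, the ridge term, and the final flattening are all assumptions chosen to show how a randomized autoencoder can be fitted per image without backpropagation.

```python
import numpy as np

def rae_decoder_weights(features, hidden_units=4, ridge=1e-3, seed=0):
    """Fit a randomized autoencoder to ONE image and return its decoder weights.

    features: array of shape (n_positions, n_channels), e.g. the activation
    vectors of a frozen backbone at every spatial position (assumed layout).
    """
    rng = np.random.default_rng(seed)
    n_channels = features.shape[1]

    # The encoder is random and stays fixed; it is never trained.
    encoder = rng.standard_normal((n_channels, hidden_units))
    hidden = 1.0 / (1.0 + np.exp(-(features @ encoder)))  # (n_positions, hidden_units)

    # Closed-form ridge regression so that hidden @ decoder approximates features.
    gram = hidden.T @ hidden + ridge * np.eye(hidden_units)
    decoder = np.linalg.solve(gram, hidden.T @ features)  # (hidden_units, n_channels)

    # Flatten the decoder into a 1-dimensional descriptor (assumed composition).
    return decoder.ravel()

# Toy usage: 49 spatial positions with 8 channels each.
toy_features = np.random.default_rng(1).standard_normal((49, 8))
descriptor = rae_decoder_weights(toy_features)
```

The resulting vector could then be passed to a linear classifier; how the published method combines encodings from several depths is not covered by this sketch.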

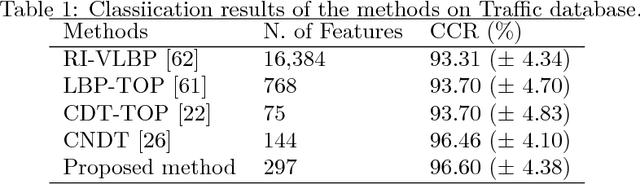

A Network Classification Method based on Density Time Evolution Patterns Extracted from Network Automata

Nov 18, 2022

Abstract: Network modeling has proven to be an efficient tool for many interdisciplinary areas, including social, biological, transport, and many other real-world complex systems. In addition, cellular automata (CA) are a formalism that has been studied in recent decades as a model for exploring patterns in the dynamic spatio-temporal behavior of these systems based on local rules. Some studies explore the use of cellular automata to analyze the dynamic behavior of networks, calling them network automata (NA). Recently, NA proved to be efficient for network classification, since they use a time-evolution pattern (TEP) for feature extraction. However, the TEPs explored by previous studies are composed of binary values, which do not represent detailed information about the analyzed network. Therefore, in this paper, we propose alternative sources of information to use as descriptors for the classification task, which we call the density time-evolution pattern (D-TEP) and the state density time-evolution pattern (SD-TEP). We explore the density of alive neighbors of each node, which is a continuous value, and compute feature vectors based on histograms of the TEPs. Our results show a significant improvement compared to previous studies on five synthetic network databases and seven real-world databases. Our proposed method is not only a good approach for pattern recognition in networks, but also shows great potential for other kinds of data, such as images.
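
To make the idea of a density-based time-evolution pattern concrete, here is a small sketch under stated assumptions. The abstract does not give the update rule, so the life-like `birth` and `survive` density intervals, the function names `density_tep` and `histogram_features`, and the bin count are hypothetical; only the use of the density of alive neighbors and of histograms comes from the text.

```python
import numpy as np

def density_tep(adjacency, initial_states, steps,
                birth=(0.25, 0.5), survive=(0.25, 0.75)):
    """Record, at each time step, the fraction of alive neighbors of every node."""
    adjacency = np.asarray(adjacency, dtype=float)
    states = np.asarray(initial_states, dtype=int)
    degrees = adjacency.sum(axis=1)
    degrees[degrees == 0] = 1.0  # isolated nodes simply get density 0

    tep = np.empty((steps, states.size))
    for t in range(steps):
        density = adjacency @ states / degrees  # continuous value in [0, 1]
        tep[t] = density
        # Hypothetical life-like rule: a node is born or survives when its
        # neighbor density falls inside the corresponding interval.
        born = (states == 0) & (density >= birth[0]) & (density <= birth[1])
        kept = (states == 1) & (density >= survive[0]) & (density <= survive[1])
        states = (born | kept).astype(int)
    return tep

def histogram_features(tep, bins=10):
    """Normalized histogram of all density values in the pattern."""
    counts, _ = np.histogram(tep, bins=bins, range=(0.0, 1.0))
    return counts / counts.sum()

# Toy usage: a triangle network with a single alive node.
triangle = [[0, 1, 1], [1, 0, 1], [1, 1, 0]]
pattern = density_tep(triangle, [1, 0, 0], steps=3)
features = histogram_features(pattern)
```

Unlike a binary TEP, each entry of `pattern` is a real number, which is what allows a histogram to summarize it.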

Learning Local Complex Features using Randomized Neural Networks for Texture Analysis

Jul 10, 2020

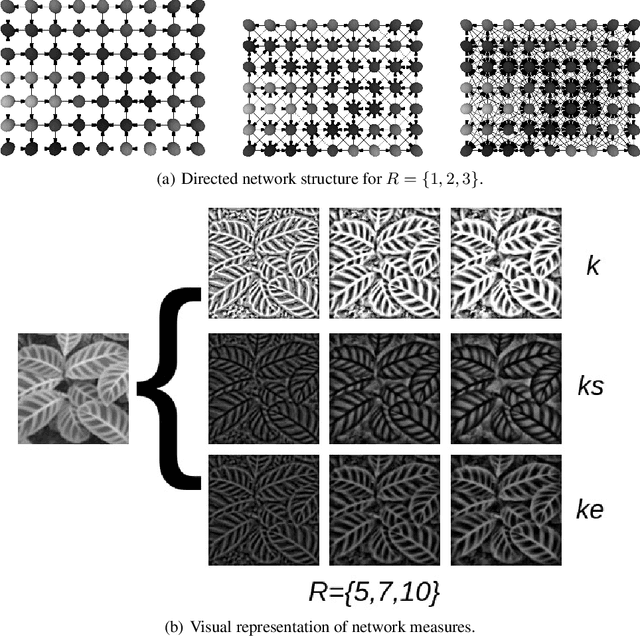

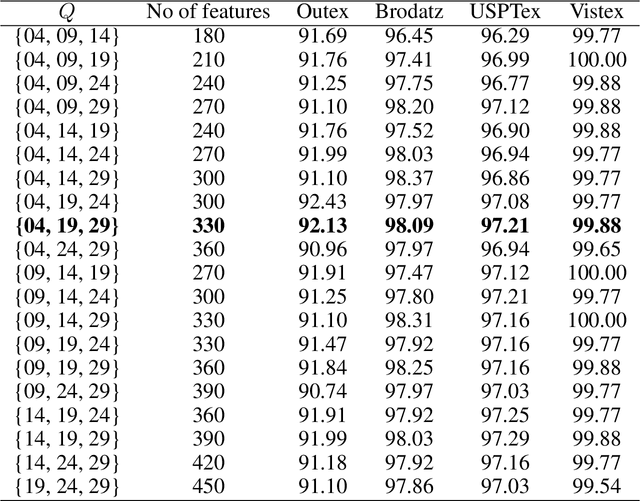

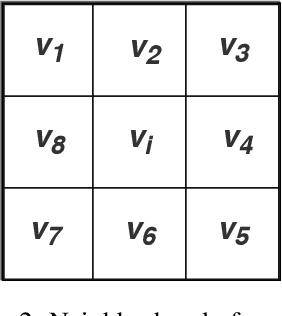

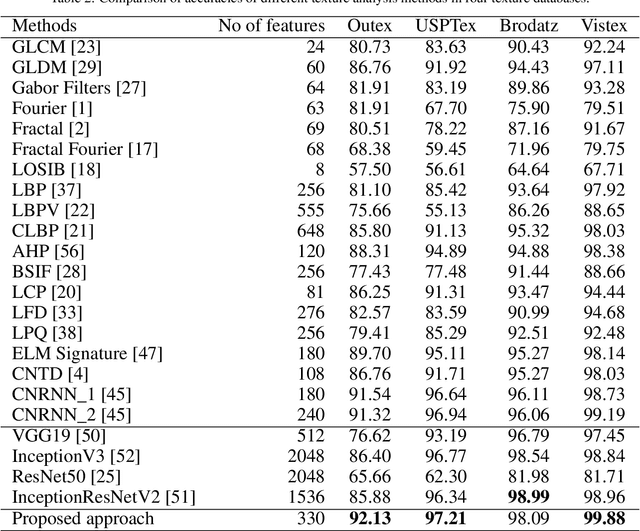

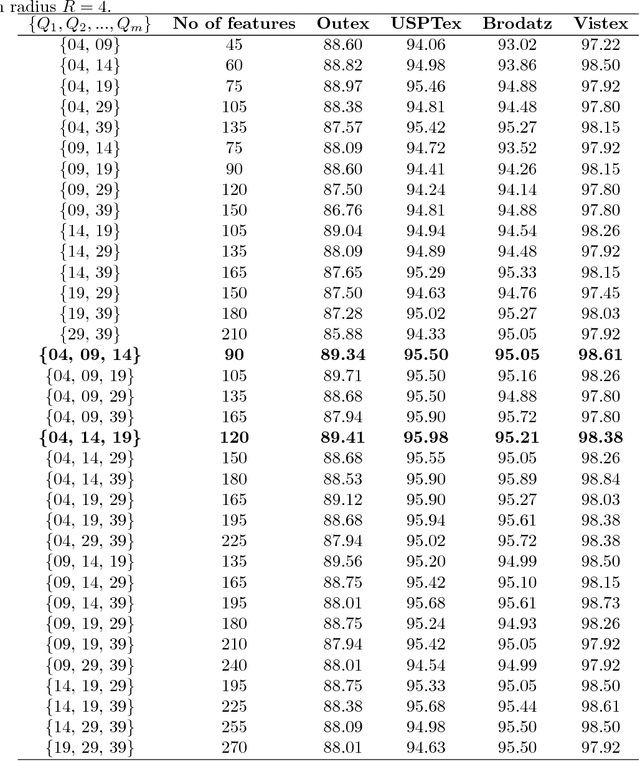

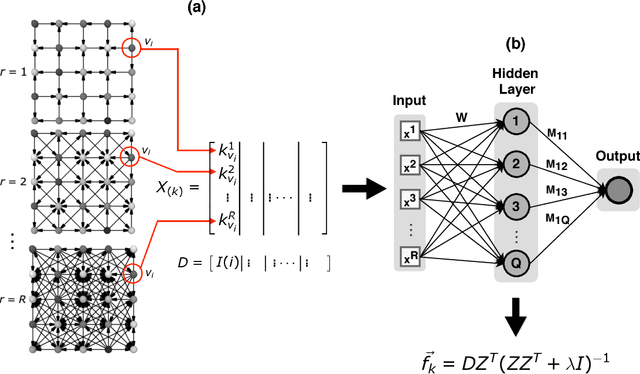

Abstract: Texture is a visual attribute widely used in many image analysis problems. Currently, many methods that use learning techniques have been proposed for texture discrimination, achieving improved performance over previous handcrafted methods. In this paper, we present a new approach that combines a learning technique and Complex Network (CN) theory for texture analysis. This method takes advantage of the representation capacity of CNs to model a texture image as a directed network and uses the topological information of vertices to train a randomized neural network. This neural network has a single hidden layer and uses a fast learning algorithm, which is able to learn local CN patterns for texture characterization. We then use the weights of the trained neural network to compose a feature vector. These feature vectors are evaluated in a classification experiment on four widely used image databases. Experimental results show a high classification performance of the proposed method when compared to other methods, indicating that our approach can be used in many image analysis problems.

Spatio-spectral networks for color-texture analysis

Sep 13, 2019

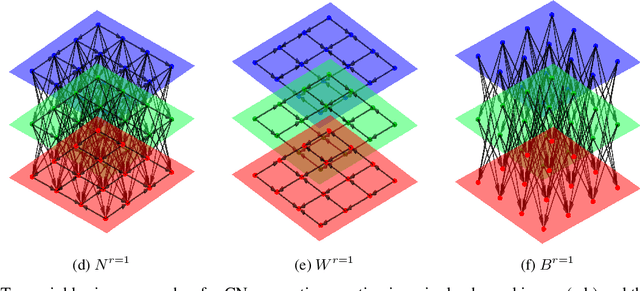

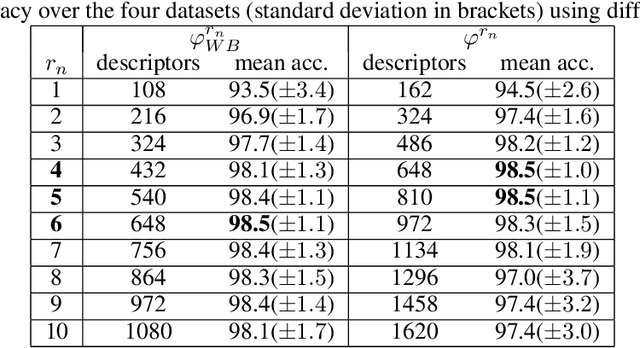

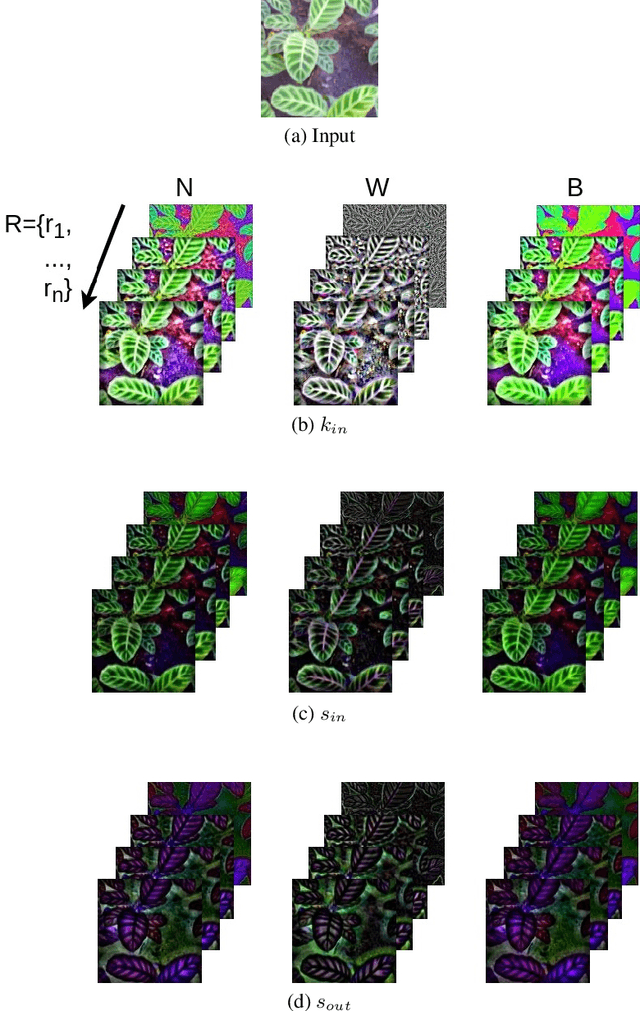

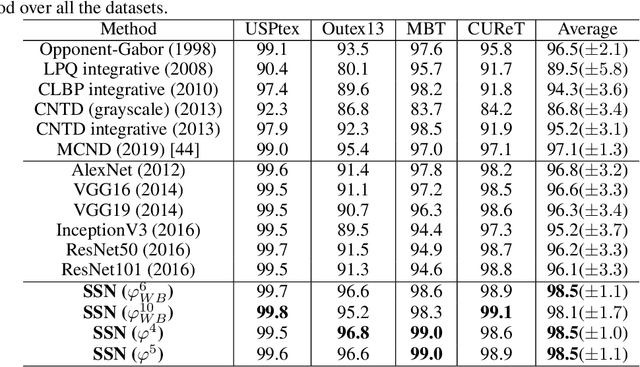

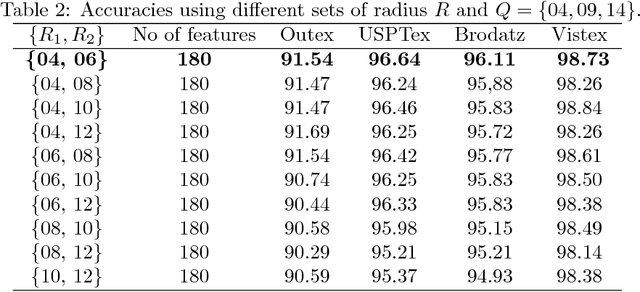

Abstract: Texture has been one of the most-studied visual attributes for image characterization since the 1960s. However, most hand-crafted descriptors are monochromatic, focusing on gray-scale images and discarding the color information. In this context, this work focuses on a new method for color texture analysis that considers all color channels in a more intrinsic approach. Our proposal consists of modeling color images as directed complex networks that we named Spatio-Spectral Networks (SSN). The network topology includes within-channel edges that cover spatial patterns throughout individual image color channels, while between-channel edges tackle spectral properties of channel pairs in an opponent fashion. Image descriptors are obtained through a concise topological characterization of the modeled network in a multiscale approach with radially symmetric neighborhoods. Experiments with four datasets cover several aspects of color-texture analysis, and results demonstrate that SSN outperforms all the compared methods from the literature, including known deep convolutional networks, and also has the most stable performance across datasets, achieving an average accuracy of $98.5(\pm1.1)$ against $97.1(\pm1.3)$ for MCND and $96.8(\pm3.2)$ for AlexNet. Additionally, an experiment verifies the performance of the methods under different color spaces, where results show that SSN also has higher performance and robustness.

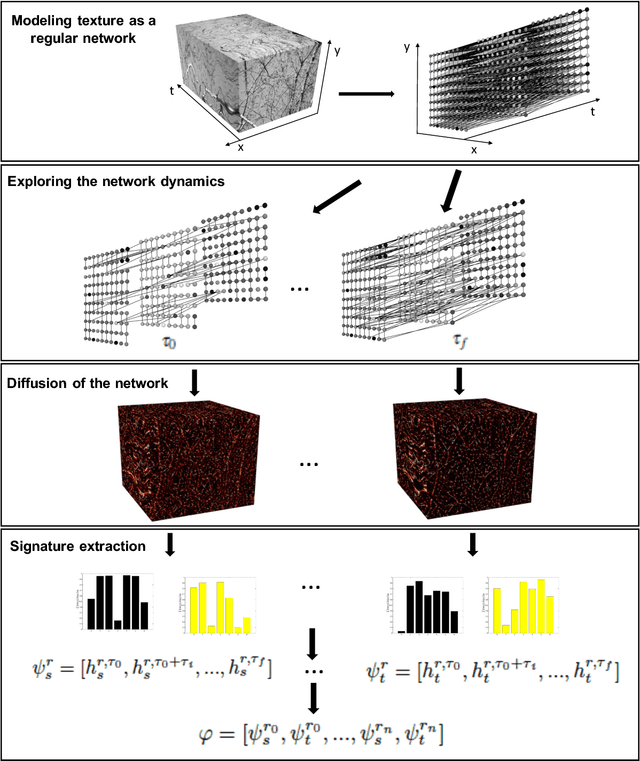

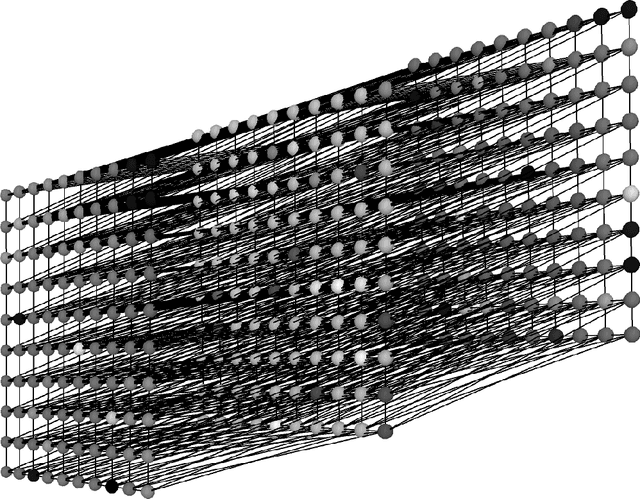

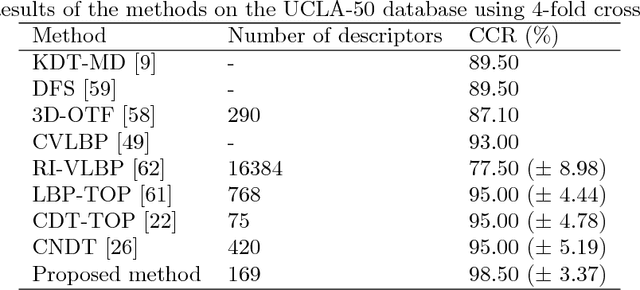

Dynamic texture analysis with diffusion in networks

Jun 27, 2018

Abstract: Dynamic texture is a field of research that has gained considerable interest from the computer vision community due to the explosive growth of multimedia databases. In addition, dynamic texture is present in a wide range of videos, which makes it very important in video-based expert systems such as medical systems, traffic monitoring systems, and forest fire detection systems, among others. In this paper, a new method for dynamic texture characterization based on diffusion in directed networks is proposed. The dynamic texture is modeled as a directed network. The method consists of analyzing the dynamics of this network after a series of graph cut transformations based on the edge weights. For each network transformation, the activity of each vertex is estimated. The activity is the relative frequency with which a vertex is visited by random walks at equilibrium. Then, a texture descriptor is constructed by concatenating the activity histograms. The main contributions of this paper are the use of directed network modeling and diffusion in networks for dynamic texture characterization. These tend to provide better performance in dynamic texture classification. Experiments with rotation and interference of the motion pattern were conducted in order to demonstrate the robustness of the method. The proposed approach is compared to other dynamic texture methods on two well-known dynamic texture databases and on traffic condition classification, and outperforms them in most cases.
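
The vertex activity described above, the long-run visiting frequency of a random walk, can be approximated by power iteration on the transition matrix. The sketch below is an illustration, not the paper's procedure: the function name `vertex_activity` is hypothetical, and it assumes every vertex has at least one outgoing edge and that the walk converges to a unique equilibrium. The graph cut transformations and the histogram step are not included.

```python
import numpy as np

def vertex_activity(weights, iterations=1000, tol=1e-12):
    """Estimate how often each vertex is visited by a random walk at equilibrium.

    weights[i, j] is the weight of the directed edge i -> j (assumed layout).
    """
    weights = np.asarray(weights, dtype=float)

    # Row-normalize edge weights into transition probabilities.
    # Assumes every vertex has at least one outgoing edge.
    transition = weights / weights.sum(axis=1, keepdims=True)

    # Start from a uniform distribution and diffuse until it stops changing.
    activity = np.full(weights.shape[0], 1.0 / weights.shape[0])
    for _ in range(iterations):
        updated = activity @ transition
        converged = np.abs(updated - activity).sum() < tol
        activity = updated
        if converged:
            break
    return activity

# Toy usage: a two-vertex directed network with self-loops.
toy_activity = vertex_activity([[0.9, 0.1], [0.5, 0.5]])
```

For this toy network the walk spends most of its time on the first vertex, since the second vertex sends half of its transitions back to it.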

Fusion of complex networks and randomized neural networks for texture analysis

Jun 24, 2018

Abstract: This paper presents a highly discriminative texture analysis method based on the fusion of complex networks and randomized neural networks. In this approach, the input image is modeled as a complex network, and its topological properties as well as the image pixels are used to train randomized neural networks in order to create a signature that represents the deep characteristics of the texture. The results obtained surpassed the accuracies of many methods available in the literature. This performance demonstrates that our proposed approach opens a promising line of research, which consists of exploring the synergy of neural networks and complex networks in the texture analysis field.
