Liane Lewin-Eytan

The Cross-Lingual Cost: Retrieval Biases in RAG over Arabic-English Corpora

Jul 10, 2025

Abstract: Cross-lingual retrieval-augmented generation (RAG) is a critical capability for retrieving and generating answers across languages. Prior work in this context has mostly focused on generation and relied on benchmarks derived from open-domain sources, most notably Wikipedia. In such settings, retrieval challenges often remain hidden due to language imbalances, overlap with pretraining data, and memorized content. To address this gap, we study Arabic-English RAG in a domain-specific setting using benchmarks derived from real-world corporate datasets. Our benchmarks include all combinations of languages for the user query and the supporting document, drawn independently and uniformly at random. This enables a systematic study of multilingual retrieval behavior. Our findings reveal that retrieval is a critical bottleneck in cross-lingual domain-specific scenarios, with significant performance drops occurring when the user query and supporting document languages differ. A key insight is that these failures stem primarily from the retriever's difficulty in ranking documents across languages. Finally, we propose a simple retrieval strategy that addresses this source of failure by enforcing equal retrieval from both languages, resulting in substantial improvements in cross-lingual and overall performance. These results highlight meaningful opportunities for improving multilingual retrieval, particularly in practical, real-world RAG applications.
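The abstract names the fix ("enforcing equal retrieval from both languages") without giving details. A minimal sketch of one way such a strategy could work is shown below, under stated assumptions: each candidate document already carries a retriever similarity score and a language tag, the tags are `"ar"` and `"en"`, and the function name `balanced_retrieve` is hypothetical. Each language pool is ranked on its own and contributes `k // 2` documents, so Arabic and English scores are never compared against each other. The authors' actual implementation may differ.

```python
from itertools import zip_longest
from typing import List, Tuple

# Hypothetical candidate record: (doc_id, language tag, retriever similarity score).
Scored = Tuple[str, str, float]


def balanced_retrieve(scored_docs: List[Scored], k: int,
                      languages: Tuple[str, ...] = ("ar", "en")) -> List[str]:
    """Return up to k doc ids with an equal quota per language.

    Ranking happens only within each language pool, which sidesteps the
    cross-language score comparison the abstract identifies as the failure
    source (an assumption about how the strategy is realized). If k is not
    divisible by the number of languages, the remainder is dropped.
    """
    per_lang = k // len(languages)
    ranked_lists: List[List[str]] = []
    for lang in languages:
        # Sort this language's candidates by score, highest first.
        pool = sorted((d for d in scored_docs if d[1] == lang),
                      key=lambda d: d[2], reverse=True)
        ranked_lists.append([doc_id for doc_id, _, _ in pool[:per_lang]])
    # Interleave the per-language lists instead of re-sorting by raw score.
    merged: List[str] = []
    for group in zip_longest(*ranked_lists):
        merged.extend(doc_id for doc_id in group if doc_id is not None)
    return merged


if __name__ == "__main__":
    docs = [("en1", "en", 0.90), ("en2", "en", 0.85), ("en3", "en", 0.80),
            ("ar1", "ar", 0.60), ("ar2", "ar", 0.55)]
    # A global top-4 by score would be en1, en2, en3, ar1 (English-dominated).
    print(balanced_retrieve(docs, k=4))  # ['ar1', 'en1', 'ar2', 'en2']
```

Interleaving, rather than re-sorting the merged list by score, is a design choice of this sketch: it keeps the final order independent of how the retriever's scores compare across languages.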

Identifying Shopping Intent in Product QA for Proactive Recommendations

Apr 09, 2024

Abstract: Voice assistants have become ubiquitous in smart devices, allowing users to instantly access information via voice questions. While extensive research has been conducted on question answering for voice search, little attention has been paid to enabling proactive recommendations from a voice assistant to its users. This is a highly challenging problem that often leads to user friction, mainly because recommendations are provided to users at the wrong time. We focus on the domain of e-commerce, namely on identifying Shopping Product Questions (SPQs), where the user asking a product-related question may have an underlying shopping need. Identifying a user's shopping need allows voice assistants to enhance the shopping experience by determining when to provide recommendations, such as product or deal recommendations, or proactive shopping action recommendations. Identifying SPQs is a challenging problem that cannot be solved from the question text alone; it requires inferring latent user behavior patterns from the user's past shopping history. We propose features that capture the user's latent shopping behavior from their purchase history and combine them using a novel Mixture-of-Experts (MoE) model. Our evaluation shows that the proposed approach identifies SPQs with a high F1 score of 0.91. Furthermore, in an online evaluation with real voice assistant users, we identify SPQs in real time and recommend a shopping action to users: adding the queried product to their shopping list. We demonstrate that we are able to accurately identify SPQs, as indicated by a significantly higher rate of products added to users' shopping lists when users are prompted after SPQs than after random product questions (PQs).
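The abstract does not describe the architecture of its "novel" MoE model, so the following is only a generic illustration of how a mixture of experts can combine groups of features into one SPQ probability, not the authors' model. Assumptions: features arrive pre-split into groups (for example, hypothetical purchase-history feature groups), each expert is a logistic regressor over one group, and a softmax gate over all features weights the experts. The function `moe_spq_probability` and all weights are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(xs: Sequence[float]) -> List[float]:
    # Subtract the max logit for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def dot(w: Sequence[float], x: Sequence[float]) -> float:
    return sum(a * b for a, b in zip(w, x))


def moe_spq_probability(features: Sequence[Sequence[float]],
                        expert_weights: Sequence[Sequence[float]],
                        gate_weights: Sequence[Sequence[float]]) -> float:
    """Generic softmax-gated mixture of logistic experts (illustrative only).

    features[i]       -- feature vector for hypothetical feature group i
    expert_weights[i] -- weights of expert i, applied to features[i]
    gate_weights[i]   -- gate weights for expert i, applied to all features
    """
    flat = [v for group in features for v in group]
    # The gate decides how much to trust each expert for this user/question.
    gates = softmax([dot(g, flat) for g in gate_weights])
    # Each expert outputs its own probability that the question is an SPQ.
    expert_probs = [sigmoid(dot(w, f)) for w, f in zip(expert_weights, features)]
    return sum(g * p for g, p in zip(gates, expert_probs))
```

In practice the expert and gate weights would be learned jointly from labeled questions; the sketch only shows the forward pass that turns grouped features into a gated probability.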

"Alexa, what do you do for fun?" Characterizing playful requests with virtual assistants

May 12, 2021

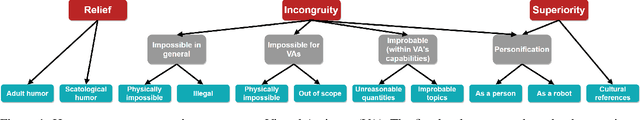

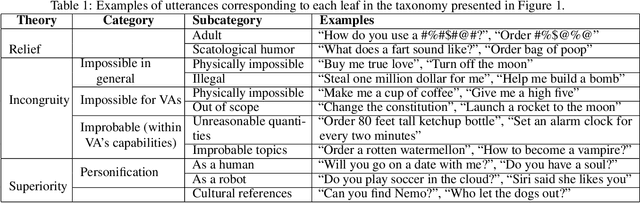

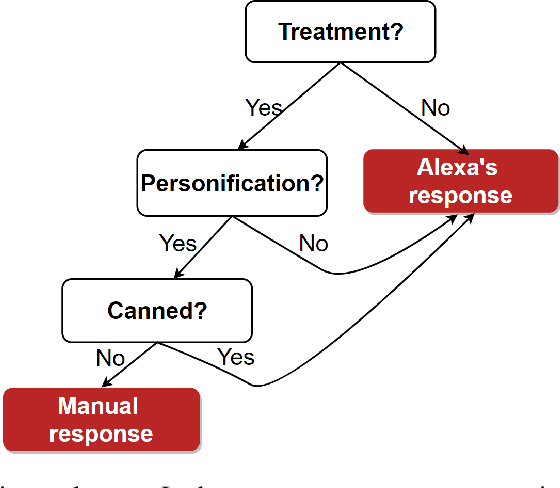

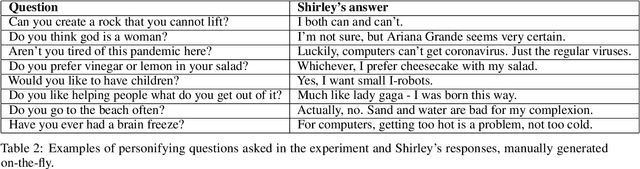

Abstract: Virtual assistants such as Amazon's Alexa, Apple's Siri, Google Home, and Microsoft's Cortana are becoming ubiquitous in our daily lives and successfully help users with various daily tasks, such as making phone calls or playing music. Yet, they still struggle with playful utterances, which are not meant to be interpreted literally. Examples include jokes and absurd requests or questions such as "Are you afraid of the dark?", "Who let the dogs out?", or "Order a zillion gummy bears". Today, virtual assistants often return irrelevant answers to such utterances, except for hard-coded ones handled by canned replies. To address the challenge of automatically detecting playful utterances, we first characterize the different types of playful human-virtual assistant interaction. We introduce a taxonomy of playful requests rooted in theories of humor and refined by analyzing real-world traffic from Alexa. We then focus on one node of the taxonomy, personification, where users refer to the virtual assistant as a person ("What do you do for fun?"). Our conjecture is that understanding such utterances will improve user experience with virtual assistants. We conducted a Wizard-of-Oz user study and showed that endowing virtual assistants with the ability to identify humorous opportunities indeed has the potential to increase user satisfaction. We hope this work will contribute to the understanding of the landscape of the problem and inspire novel ideas and techniques toward the vision of giving virtual assistants a sense of humor.