Kshitij P. Fadnis

Quantized-Dialog Language Model for Goal-Oriented Conversational Systems

Dec 26, 2018

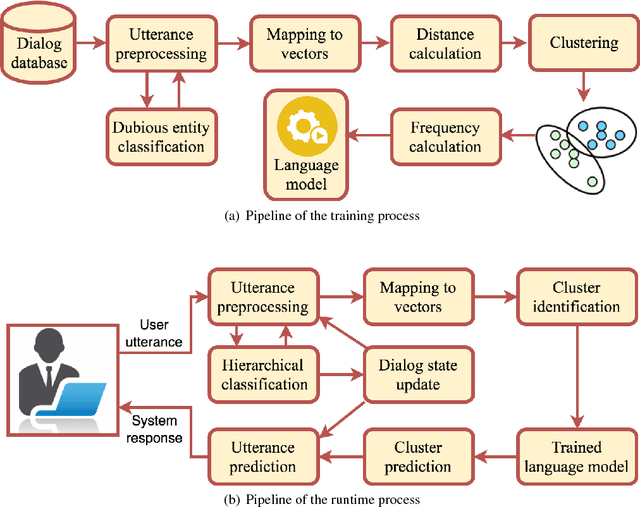

Abstract:We propose a novel methodology to address dialog learning in the context of goal-oriented conversational systems. The key idea is to quantize the dialog space into clusters and create a language model across the clusters, thus allowing for an accurate choice of the next utterance in the conversation. The language model relies on n-grams associated with clusters of utterances. This quantized-dialog language model methodology has been applied to the end-to-end goal-oriented track of the latest Dialog System Technology Challenges (DSTC6). The objective is to find the correct system utterance from a pool of candidates in order to complete a dialog between a user and an automated restaurant-reservation system. Our results show that the technique proposed in this paper achieves high accuracy regarding selection of the correct candidate utterance, and outperforms other state-of-the-art approaches based on neural networks.

A Unified Implicit Dialog Framework for Conversational Search

Feb 12, 2018

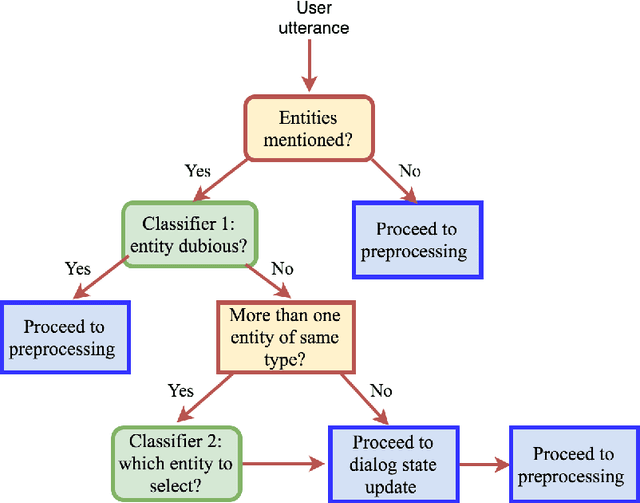

Abstract:We propose a unified Implicit Dialog framework for goal-oriented, information seeking tasks of Conversational Search applications. It aims to enable dialog interactions with domain data without replying on explicitly encoded the rules but utilizing the underlying data representation to build the components required for dialog interaction, which we refer as Implicit Dialog in this work. The proposed framework consists of a pipeline of End-to-End trainable modules. A centralized knowledge representation is used to semantically ground multiple dialog modules. An associated set of tools are integrated with the framework to gather end users' input for continuous improvement of the system. The goal is to facilitate development of conversational systems by identifying the components and the data that can be adapted and reused across many end-user applications. We demonstrate our approach by creating conversational agents for several independent domains.

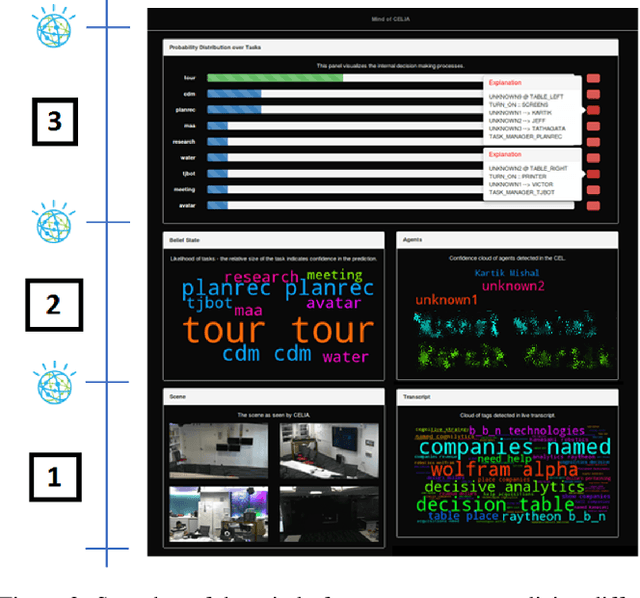

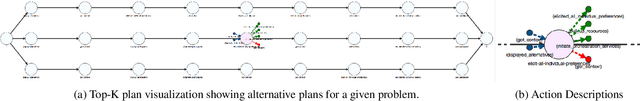

Visualizations for an Explainable Planning Agent

Feb 08, 2018

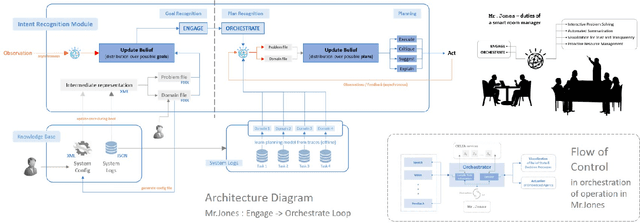

Abstract:In this paper, we report on the visualization capabilities of an Explainable AI Planning (XAIP) agent that can support human in the loop decision making. Imposing transparency and explainability requirements on such agents is especially important in order to establish trust and common ground with the end-to-end automated planning system. Visualizing the agent's internal decision-making processes is a crucial step towards achieving this. This may include externalizing the "brain" of the agent -- starting from its sensory inputs, to progressively higher order decisions made by it in order to drive its planning components. We also show how the planner can bootstrap on the latest techniques in explainable planning to cast plan visualization as a plan explanation problem, and thus provide concise model-based visualization of its plans. We demonstrate these functionalities in the context of the automated planning components of a smart assistant in an instrumented meeting space.

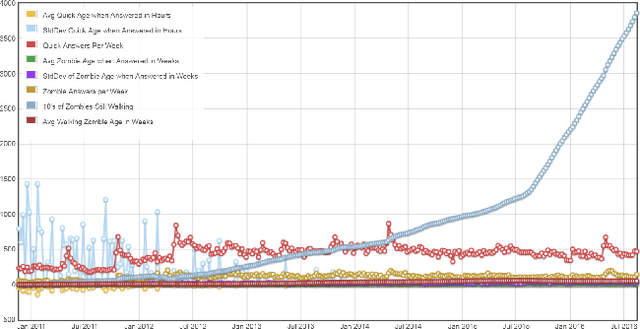

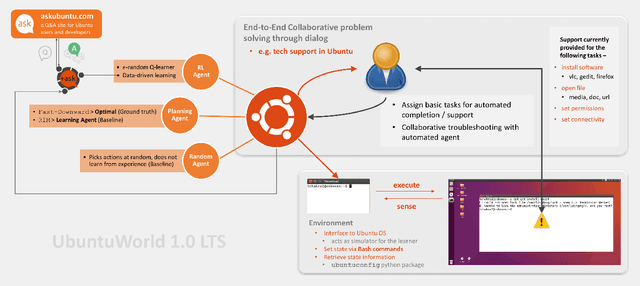

UbuntuWorld 1.0 LTS - A Platform for Automated Problem Solving & Troubleshooting in the Ubuntu OS

Aug 12, 2017

Abstract:In this paper, we present UbuntuWorld 1.0 LTS - a platform for developing automated technical support agents in the Ubuntu operating system. Specifically, we propose to use the Bash terminal as a simulator of the Ubuntu environment for a learning-based agent and demonstrate the usefulness of adopting reinforcement learning (RL) techniques for basic problem solving and troubleshooting in this environment. We provide a plug-and-play interface to the simulator as a python package where different types of agents can be plugged in and evaluated, and provide pathways for integrating data from online support forums like AskUbuntu into an automated agent's learning process. Finally, we show that the use of this data significantly improves the agent's learning efficiency. We believe that this platform can be adopted as a real-world test bed for research on automated technical support.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge