Kexuan Zhang

Probing the Limits of Compressive Memory: A Study of Infini-Attention in Small-Scale Pretraining

Dec 29, 2025Abstract:This study investigates small-scale pretraining for Small Language Models (SLMs) to enable efficient use of limited data and compute, improve accessibility in low-resource settings and reduce costs. To enhance long-context extrapolation in compact models, we focus on Infini-attention, which builds a compressed memory from past segments while preserving local attention. In our work, we conduct an empirical study using 300M-parameter LLaMA models pretrained with Infini-attention. The model demonstrates training stability and outperforms the baseline in long-context retrieval. We identify the balance factor as a key part of the model performance, and we found that retrieval accuracy drops with repeated memory compressions over long sequences. Even so, Infini-attention still effectively compensates for the SLM's limited parameters. Particularly, despite performance degradation at a 16,384-token context, the Infini-attention model achieves up to 31% higher accuracy than the baseline. Our findings suggest that achieving robust long-context capability in SLMs benefits from architectural memory like Infini-attention.

SAS-Bench: A Fine-Grained Benchmark for Evaluating Short Answer Scoring with Large Language Models

May 15, 2025Abstract:Subjective Answer Grading (SAG) plays a crucial role in education, standardized testing, and automated assessment systems, particularly for evaluating short-form responses in Short Answer Scoring (SAS). However, existing approaches often produce coarse-grained scores and lack detailed reasoning. Although large language models (LLMs) have demonstrated potential as zero-shot evaluators, they remain susceptible to bias, inconsistencies with human judgment, and limited transparency in scoring decisions. To overcome these limitations, we introduce SAS-Bench, a benchmark specifically designed for LLM-based SAS tasks. SAS-Bench provides fine-grained, step-wise scoring, expert-annotated error categories, and a diverse range of question types derived from real-world subject-specific exams. This benchmark facilitates detailed evaluation of model reasoning processes and explainability. We also release an open-source dataset containing 1,030 questions and 4,109 student responses, each annotated by domain experts. Furthermore, we conduct comprehensive experiments with various LLMs, identifying major challenges in scoring science-related questions and highlighting the effectiveness of few-shot prompting in improving scoring accuracy. Our work offers valuable insights into the development of more robust, fair, and educationally meaningful LLM-based evaluation systems.

DRAN: A Distribution and Relation Adaptive Network for Spatio-temporal Forecasting

Apr 02, 2025

Abstract:Accurate predictions of spatio-temporal systems' states are crucial for tasks such as system management, control, and crisis prevention. However, the inherent time variance of spatio-temporal systems poses challenges to achieving accurate predictions whenever stationarity is not granted. To address non-stationarity frameworks, we propose a Distribution and Relation Adaptive Network (DRAN) capable of dynamically adapting to relation and distribution changes over time. While temporal normalization and de-normalization are frequently used techniques to adapt to distribution shifts, this operation is not suitable for the spatio-temporal context as temporal normalization scales the time series of nodes and possibly disrupts the spatial relations among nodes. In order to address this problem, we develop a Spatial Factor Learner (SFL) module that enables the normalization and de-normalization process in spatio-temporal systems. To adapt to dynamic changes in spatial relationships among sensors, we propose a Dynamic-Static Fusion Learner (DSFL) module that effectively integrates features learned from both dynamic and static relations through an adaptive fusion ratio mechanism. Furthermore, we introduce a Stochastic Learner to capture the noisy components of spatio-temporal representations. Our approach outperforms state of the art methods in weather prediction and traffic flows forecasting tasks. Experimental results show that our SFL efficiently preserves spatial relationships across various temporal normalization operations. Visualizations of the learned dynamic and static relations demonstrate that DSFL can capture both local and distant relationships between nodes. Moreover, ablation studies confirm the effectiveness of each component.

Causal Learning for Heterogeneous Subgroups Based on Nonlinear Causal Kernel Clustering

Jan 20, 2025Abstract:Due to the challenge posed by multi-source and heterogeneous data collected from diverse environments, causal relationships among features can exhibit variations influenced by different time spans, regions, or strategies. This diversity makes a single causal model inadequate for accurately representing complex causal relationships in all observational data, a crucial consideration in causal learning. To address this challenge, we introduce the nonlinear Causal Kernel Clustering method designed for heterogeneous subgroup causal learning, illuminating variations in causal relationships across diverse subgroups. It comprises two primary components. First, the construction of a sample mapping function forms the basis of the subsequent nonlinear causal kernel. This function assesses the differences in potential nonlinear causal relationships in various samples, supported by our causal identifiability theory. Second, a nonlinear causal kernel is proposed for clustering heterogeneous subgroups. Experimental results showcase the exceptional performance of our method in accurately identifying heterogeneous subgroups and effectively enhancing causal learning, leading to a great reduction in prediction error.

Caformer: Rethinking Time Series Analysis from Causal Perspective

Mar 13, 2024

Abstract:Time series analysis is a vital task with broad applications in various domains. However, effectively capturing cross-dimension and cross-time dependencies in non-stationary time series poses significant challenges, particularly in the context of environmental factors. The spurious correlation induced by the environment confounds the causal relationships between cross-dimension and cross-time dependencies. In this paper, we introduce a novel framework called Caformer (\underline{\textbf{Ca}}usal Trans\underline{\textbf{former}}) for time series analysis from a causal perspective. Specifically, our framework comprises three components: Dynamic Learner, Environment Learner, and Dependency Learner. The Dynamic Learner unveils dynamic interactions among dimensions, the Environment Learner mitigates spurious correlations caused by environment with a back-door adjustment, and the Dependency Learner aims to infer robust interactions across both time and dimensions. Our Caformer demonstrates consistent state-of-the-art performance across five mainstream time series analysis tasks, including long- and short-term forecasting, imputation, classification, and anomaly detection, with proper interpretability.

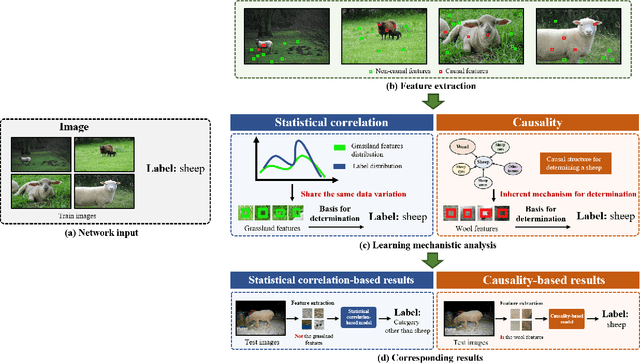

Causal reasoning in typical computer vision tasks

Jul 31, 2023

Abstract:Deep learning has revolutionized the field of artificial intelligence. Based on the statistical correlations uncovered by deep learning-based methods, computer vision has contributed to tremendous growth in areas like autonomous driving and robotics. Despite being the basis of deep learning, such correlation is not stable and is susceptible to uncontrolled factors. In the absence of the guidance of prior knowledge, statistical correlations can easily turn into spurious correlations and cause confounders. As a result, researchers are now trying to enhance deep learning methods with causal theory. Causal theory models the intrinsic causal structure unaffected by data bias and is effective in avoiding spurious correlations. This paper aims to comprehensively review the existing causal methods in typical vision and vision-language tasks such as semantic segmentation, object detection, and image captioning. The advantages of causality and the approaches for building causal paradigms will be summarized. Future roadmaps are also proposed, including facilitating the development of causal theory and its application in other complex scenes and systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge