Kevin Yager

VISION: A Modular AI Assistant for Natural Human-Instrument Interaction at Scientific User Facilities

Dec 24, 2024Abstract:Scientific user facilities, such as synchrotron beamlines, are equipped with a wide array of hardware and software tools that require a codebase for human-computer-interaction. This often necessitates developers to be involved to establish connection between users/researchers and the complex instrumentation. The advent of generative AI presents an opportunity to bridge this knowledge gap, enabling seamless communication and efficient experimental workflows. Here we present a modular architecture for the Virtual Scientific Companion (VISION) by assembling multiple AI-enabled cognitive blocks that each scaffolds large language models (LLMs) for a specialized task. With VISION, we performed LLM-based operation on the beamline workstation with low latency and demonstrated the first voice-controlled experiment at an X-ray scattering beamline. The modular and scalable architecture allows for easy adaptation to new instrument and capabilities. Development on natural language-based scientific experimentation is a building block for an impending future where a science exocortex -- a synthetic extension to the cognition of scientists -- may radically transform scientific practice and discovery.

Virtual Scientific Companion for Synchrotron Beamlines: A Prototype

Dec 28, 2023Abstract:The extraordinarily high X-ray flux and specialized instrumentation at synchrotron beamlines have enabled versatile in-situ and high throughput studies that are impossible elsewhere. Dexterous and efficient control of experiments are thus crucial for efficient beamline operation. Artificial intelligence and machine learning methods are constantly being developed to enhance facility performance, but the full potential of these developments can only be reached with efficient human-computer-interaction. Natural language is the most intuitive and efficient way for humans to communicate. However, the low credibility and reproducibility of existing large language models and tools demand extensive development to be made for robust and reliable performance for scientific purposes. In this work, we introduce the prototype of virtual scientific companion (VISION) and demonstrate that it is possible to control basic beamline operations through natural language with open-source language model and the limited computational resources at beamline. The human-AI nature of VISION leverages existing automation systems and data framework at synchrotron beamlines.

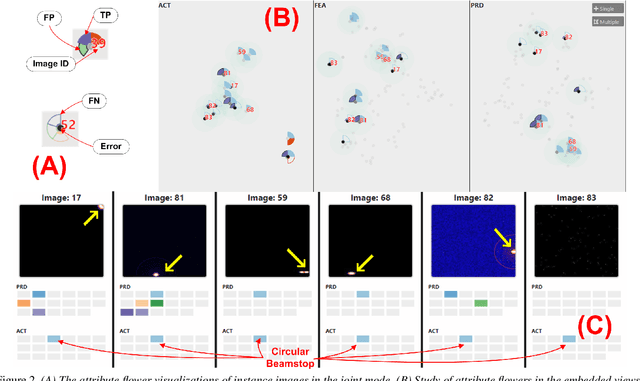

Interactive Visual Study of Multiple Attributes Learning Model of X-Ray Scattering Images

Sep 03, 2020

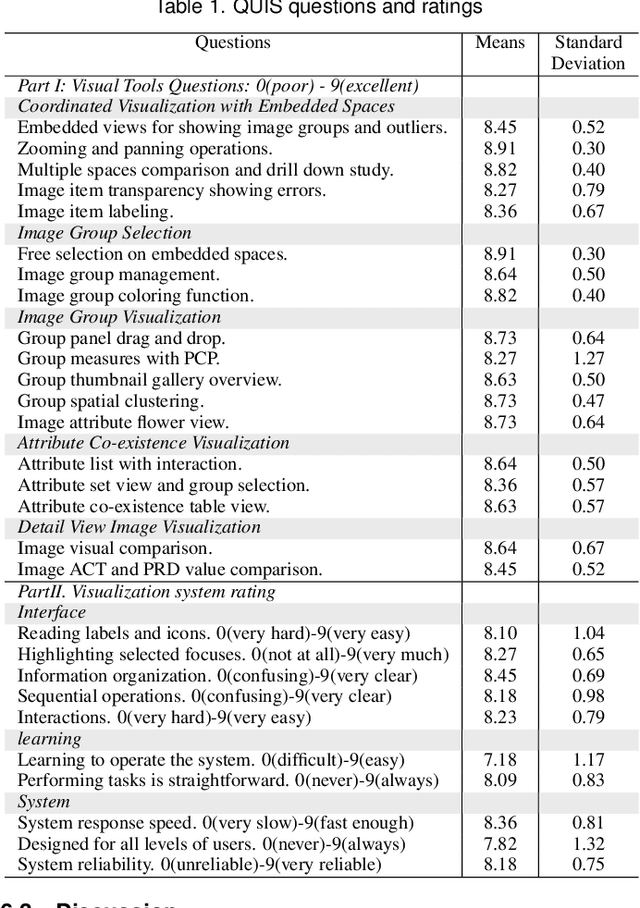

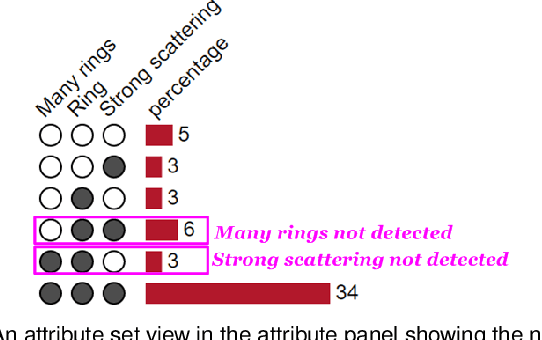

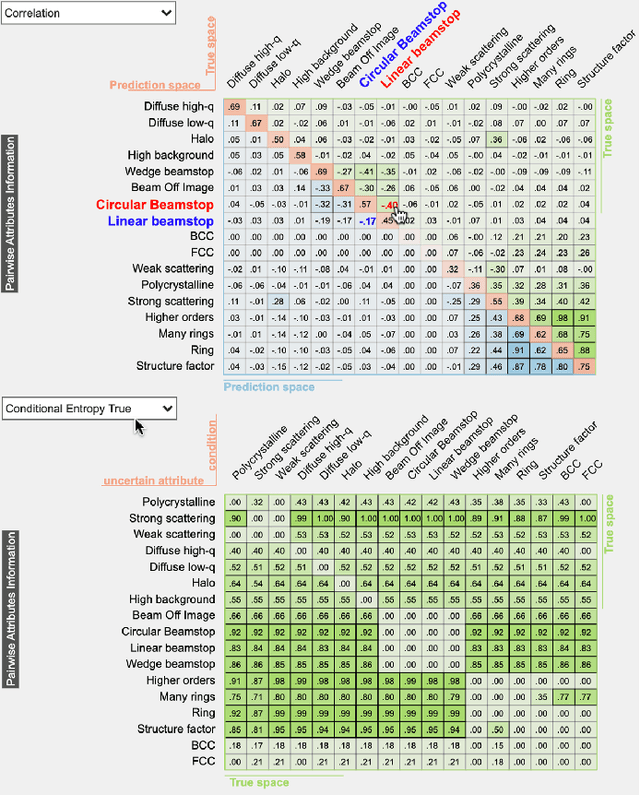

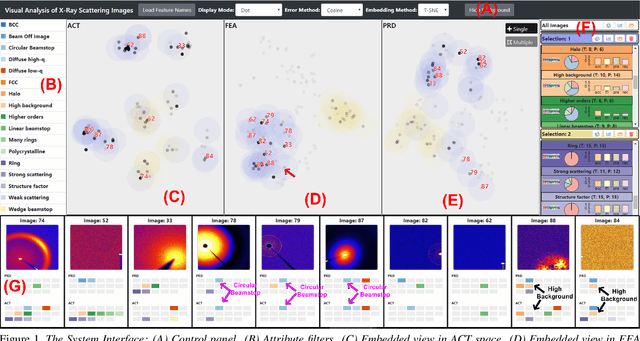

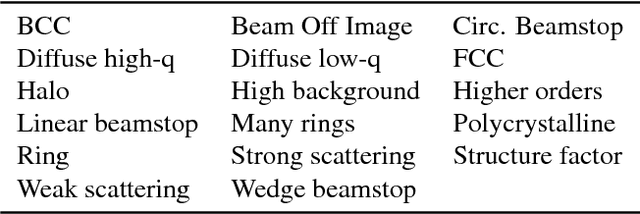

Abstract:Existing interactive visualization tools for deep learning are mostly applied to the training, debugging, and refinement of neural network models working on natural images. However, visual analytics tools are lacking for the specific application of x-ray image classification with multiple structural attributes. In this paper, we present an interactive system for domain scientists to visually study the multiple attributes learning models applied to x-ray scattering images. It allows domain scientists to interactively explore this important type of scientific images in embedded spaces that are defined on the model prediction output, the actual labels, and the discovered feature space of neural networks. Users are allowed to flexibly select instance images, their clusters, and compare them regarding the specified visual representation of attributes. The exploration is guided by the manifestation of model performance related to mutual relationships among attributes, which often affect the learning accuracy and effectiveness. The system thus supports domain scientists to improve the training dataset and model, find questionable attributes labels, and identify outlier images or spurious data clusters. Case studies and scientists feedback demonstrate its functionalities and usefulness.

* IEEE SciVis Conference 2020

Visual Understanding of Multiple Attributes Learning Model of X-Ray Scattering Images

Oct 10, 2019

Abstract:This extended abstract presents a visualization system, which is designed for domain scientists to visually understand their deep learning model of extracting multiple attributes in x-ray scattering images. The system focuses on studying the model behaviors related to multiple structural attributes. It allows users to explore the images in the feature space, the classification output of different attributes, with respect to the actual attributes labelled by domain scientists. Abundant interactions allow users to flexibly select instance images, their clusters, and compare them visually in details. Two preliminary case studies demonstrate its functionalities and usefulness.

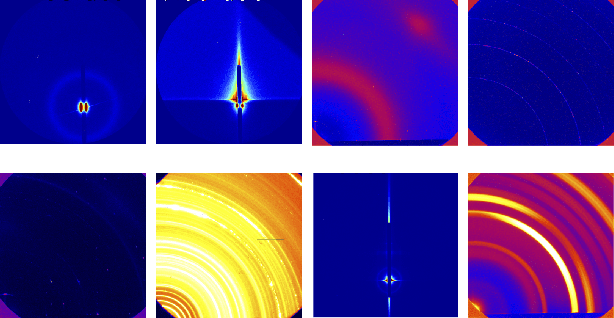

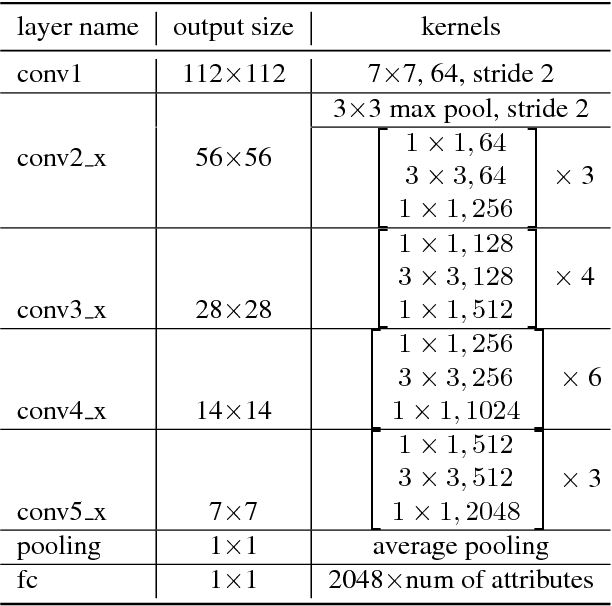

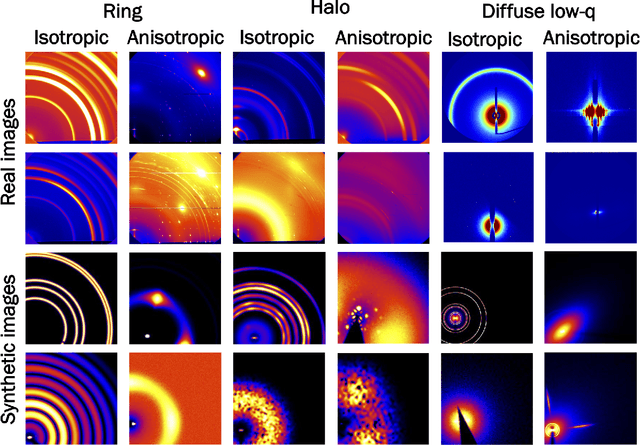

X-ray Scattering Image Classification Using Deep Learning

Nov 10, 2016

Abstract:Visual inspection of x-ray scattering images is a powerful technique for probing the physical structure of materials at the molecular scale. In this paper, we explore the use of deep learning to develop methods for automatically analyzing x-ray scattering images. In particular, we apply Convolutional Neural Networks and Convolutional Autoencoders for x-ray scattering image classification. To acquire enough training data for deep learning, we use simulation software to generate synthetic x-ray scattering images. Experiments show that deep learning methods outperform previously published methods by 10\% on synthetic and real datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge