Jishun Guo

Progressive Transfer Learning for Person Re-identification

Aug 08, 2019

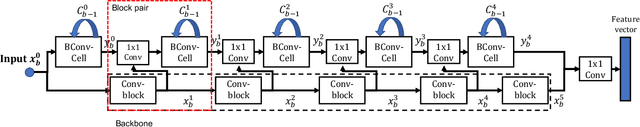

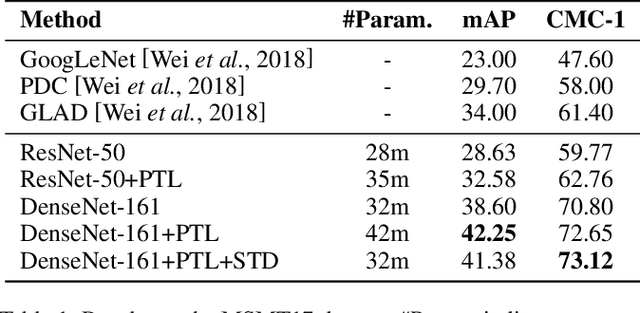

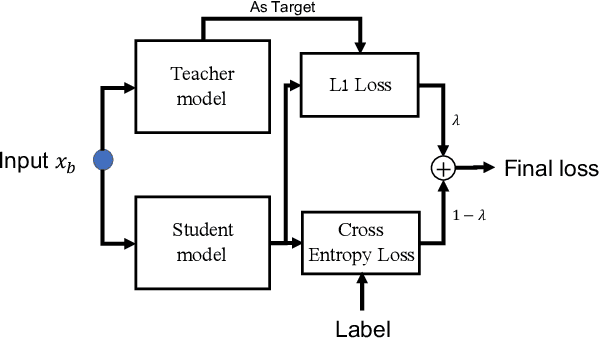

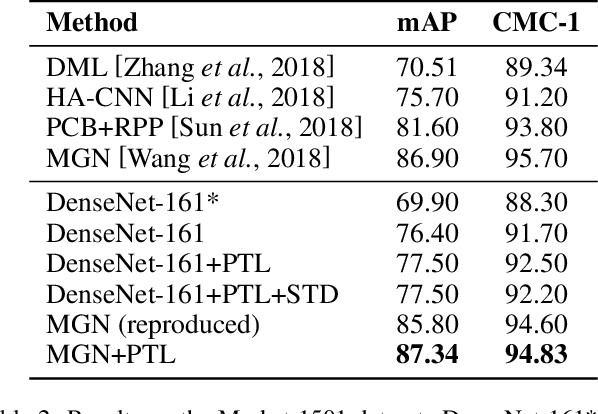

Abstract:Model fine-tuning is a widely used transfer learning approach in person Re-identification (ReID) applications, which fine-tuning a pre-trained feature extraction model into the target scenario instead of training a model from scratch. It is challenging due to the significant variations inside the target scenario, e.g., different camera viewpoint, illumination changes, and occlusion. These variations result in a gap between the distribution of each mini-batch and the distribution of the whole dataset when using mini-batch training. In this paper, we study model fine-tuning from the perspective of the aggregation and utilization of the global information of the dataset when using mini-batch training. Specifically, we introduce a novel network structure called Batch-related Convolutional Cell (BConv-Cell), which progressively collects the global information of the dataset into a latent state and uses this latent state to rectify the extracted feature. Based on BConv-Cells, we further proposed the Progressive Transfer Learning (PTL) method to facilitate the model fine-tuning process by joint training the BConv-Cells and the pre-trained ReID model. Empirical experiments show that our proposal can improve the performance of the ReID model greatly on MSMT17, Market-1501, CUHK03 and DukeMTMC-reID datasets. The code will be released later on at \url{https://github.com/ZJULearning/PTL}

COP: Customized Deep Model Compression via Regularized Correlation-Based Filter-Level Pruning

Jun 25, 2019

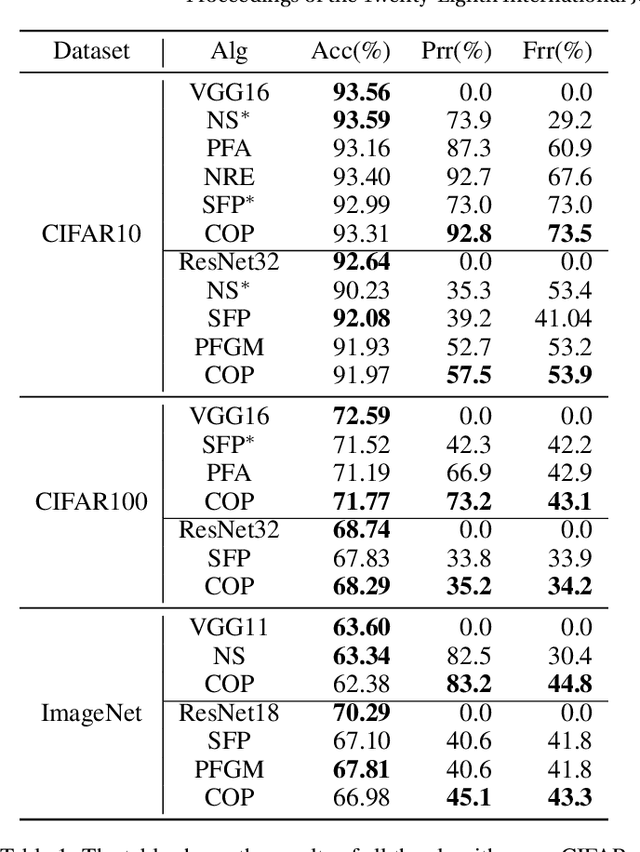

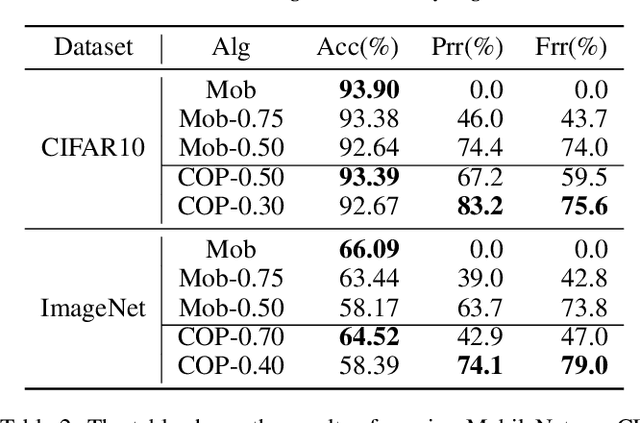

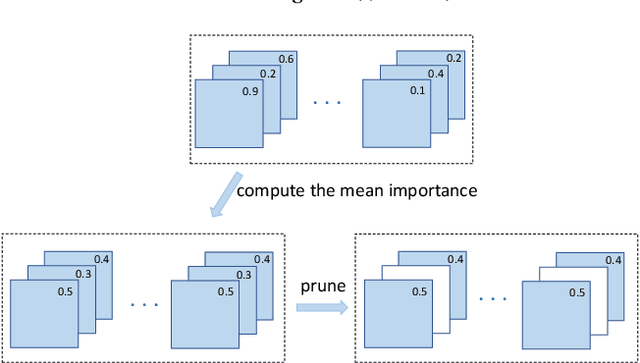

Abstract:Neural network compression empowers the effective yet unwieldy deep convolutional neural networks (CNN) to be deployed in resource-constrained scenarios. Most state-of-the-art approaches prune the model in filter-level according to the "importance" of filters. Despite their success, we notice they suffer from at least two of the following problems: 1) The redundancy among filters is not considered because the importance is evaluated independently. 2) Cross-layer filter comparison is unachievable since the importance is defined locally within each layer. Consequently, we must manually specify layer-wise pruning ratios. 3) They are prone to generate sub-optimal solutions because they neglect the inequality between reducing parameters and reducing computational cost. Reducing the same number of parameters in different positions in the network may reduce different computational cost. To address the above problems, we develop a novel algorithm named as COP (correlation-based pruning), which can detect the redundant filters efficiently. We enable the cross-layer filter comparison through global normalization. We add parameter-quantity and computational-cost regularization terms to the importance, which enables the users to customize the compression according to their preference (smaller or faster). Extensive experiments have shown COP outperforms the others significantly. The code is released at https://github.com/ZJULearning/COP.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge