Jeongran Lee

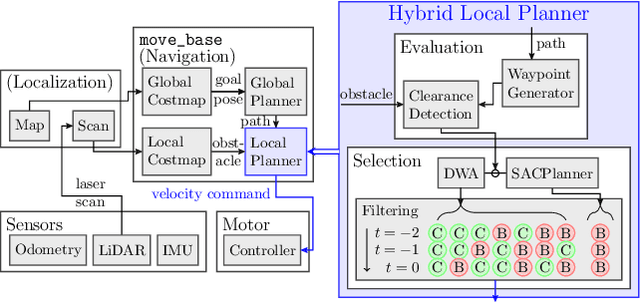

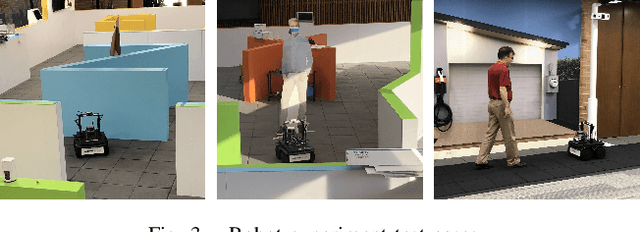

Hybrid Classical/RL Local Planner for Ground Robot Navigation

Oct 04, 2024

Abstract:Local planning is an optimization process within a mobile robot navigation stack that searches for the best velocity vector, given the robot and environment state. Depending on how the optimization criteria and constraints are defined, some planners may be better than others in specific situations. We consider two conceptually different planners. The first planner explores the velocity space in real-time and has superior path-tracking and motion smoothness performance. The second planner was trained using reinforcement learning methods to produce the best velocity based on its training $"$experience$"$. It is better at avoiding dynamic obstacles but at the expense of motion smoothness. We propose a simple yet effective meta-reasoning approach that takes advantage of both approaches by switching between planners based on the surroundings. We demonstrate the superiority of our hybrid planner, both qualitatively and quantitatively, over the individual planners on a live robot in different scenarios, achieving an improvement of 26% in the navigation time.

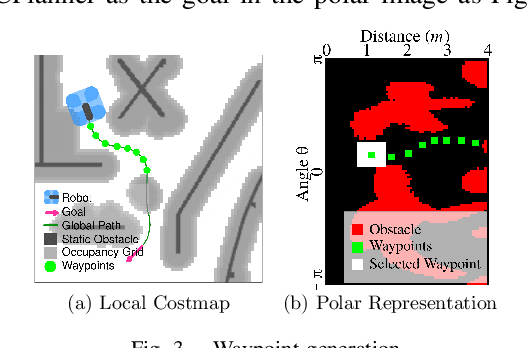

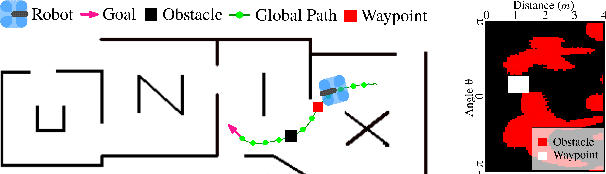

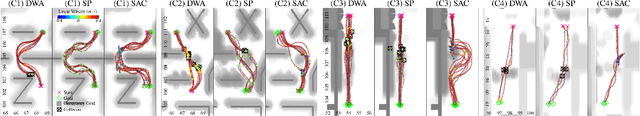

SACPlanner: Real-World Collision Avoidance with a Soft Actor Critic Local Planner and Polar State Representations

Mar 21, 2023

Abstract:We study the training performance of ROS local planners based on Reinforcement Learning (RL), and the trajectories they produce on real-world robots. We show that recent enhancements to the Soft Actor Critic (SAC) algorithm such as RAD and DrQ achieve almost perfect training after only 10000 episodes. We also observe that on real-world robots the resulting SACPlanner is more reactive to obstacles than traditional ROS local planners such as DWA.

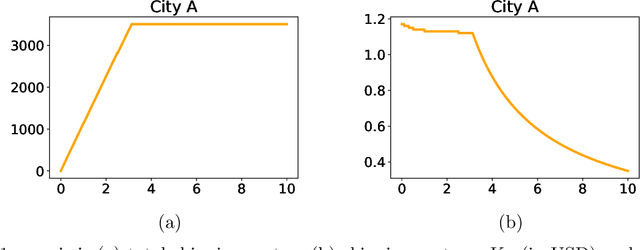

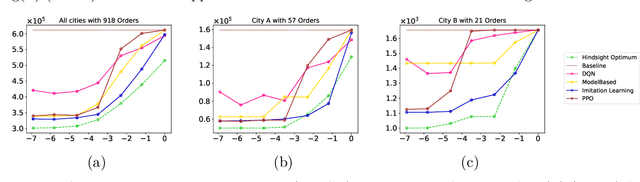

Learning Algorithms for Regenerative Stopping Problems with Applications to Shipping Consolidation in Logistics

May 05, 2021

Abstract:We study regenerative stopping problems in which the system starts anew whenever the controller decides to stop and the long-term average cost is to be minimized. Traditional model-based solutions involve estimating the underlying process from data and computing strategies for the estimated model. In this paper, we compare such solutions to deep reinforcement learning and imitation learning which involve learning a neural network policy from simulations. We evaluate the different approaches on a real-world problem of shipping consolidation in logistics and demonstrate that deep learning can be effectively used to solve such problems.

Augmented Data Science: Towards Industrialization and Democratization of Data Science

Sep 12, 2019

Abstract:Conversion of raw data into insights and knowledge requires substantial amounts of effort from data scientists. Despite breathtaking advances in Machine Learning (ML) and Artificial Intelligence (AI), data scientists still spend the majority of their effort in understanding and then preparing the raw data for ML/AI. The effort is often manual and ad hoc, and requires some level of domain knowledge. The complexity of the effort increases dramatically when data diversity, both in form and context, increases. In this paper, we introduce our solution, Augmented Data Science (ADS), towards addressing this "human bottleneck" in creating value from diverse datasets. ADS is a data-driven approach and relies on statistics and ML to extract insights from any data set in a domain-agnostic way to facilitate the data science process. Key features of ADS are the replacement of rudimentary data exploration and processing steps with automation and the augmentation of data scientist judgment with automatically-generated insights. We present building blocks of our end-to-end solution and provide a case study to exemplify its capabilities.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge