Janis Fluri

Scaling Behavior of Discrete Diffusion Language Models

Dec 11, 2025

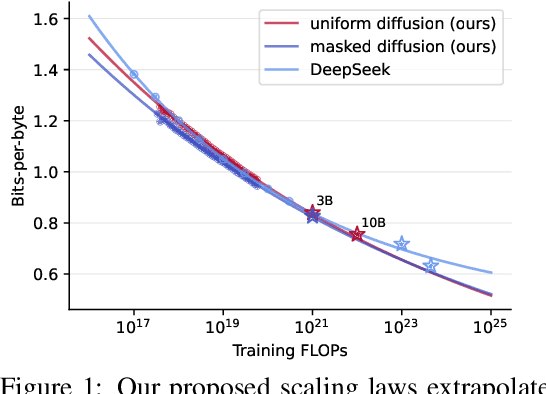

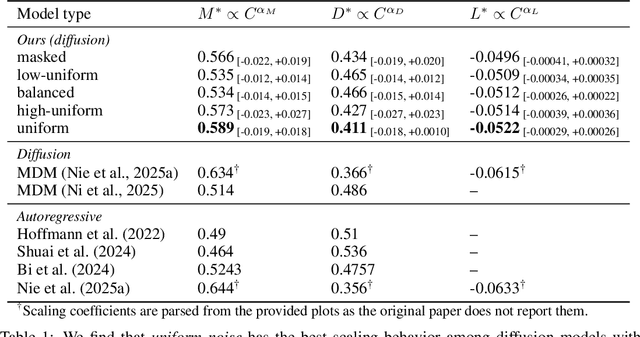

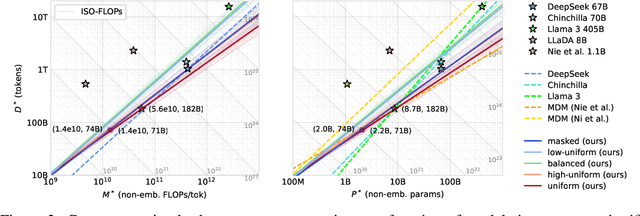

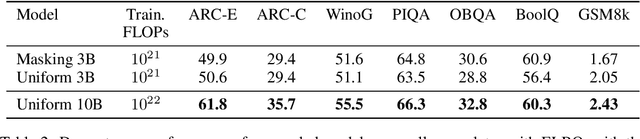

Abstract: Modern LLM pre-training consumes vast amounts of compute and training data, making the scaling behavior, or scaling laws, of different models a key distinguishing factor. Discrete diffusion language models (DLMs) have been proposed as an alternative to autoregressive language models (ALMs). However, their scaling behavior has not yet been fully explored, with prior work suggesting that they require more data and compute to match the performance of ALMs. We study the scaling behavior of DLMs on different noise types by smoothly interpolating between masked and uniform diffusion while paying close attention to crucial hyperparameters such as batch size and learning rate. Our experiments reveal that the scaling behavior of DLMs strongly depends on the noise type and is considerably different from ALMs. While all noise types converge to similar loss values in compute-bound scaling, we find that uniform diffusion requires more parameters and less data for compute-efficient training compared to masked diffusion, making it a promising candidate in data-bound settings. We scale our uniform diffusion model up to 10B parameters trained for $10^{22}$ FLOPs, confirming the predicted scaling behavior and making it the largest publicly known uniform diffusion model to date.

Generalized Interpolating Discrete Diffusion

Mar 06, 2025

Abstract: While state-of-the-art language models achieve impressive results through next-token prediction, they have inherent limitations such as the inability to revise already generated tokens. This has prompted exploration of alternative approaches such as discrete diffusion. However, masked diffusion, which has emerged as a popular choice due to its simplicity and effectiveness, reintroduces this inability to revise words. To overcome this, we generalize masked diffusion and derive the theoretical backbone of a family of general interpolating discrete diffusion (GIDD) processes offering greater flexibility in the design of the noising processes. Leveraging a novel diffusion ELBO, we achieve compute-matched state-of-the-art performance in diffusion language modeling. Exploiting GIDD's flexibility, we explore a hybrid approach combining masking and uniform noise, leading to improved sample quality and unlocking the ability for the model to correct its own mistakes, an area where autoregressive models notoriously have struggled. Our code and models are open-source: https://github.com/dvruette/gidd/

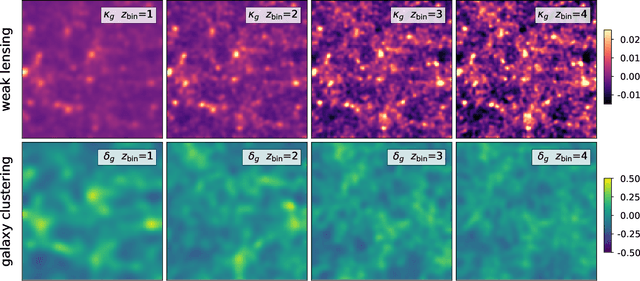

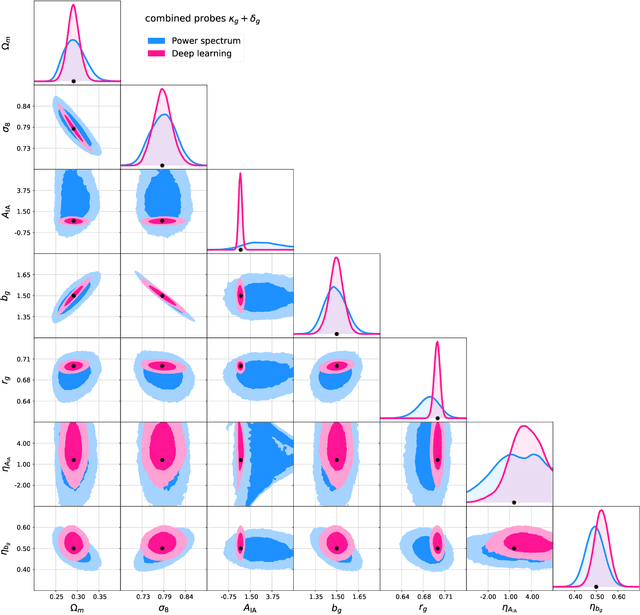

DeepLSS: breaking parameter degeneracies in large scale structure with deep learning analysis of combined probes

Mar 17, 2022

Abstract: In classical cosmological analysis of large scale structure surveys with 2-pt functions, the parameter measurement precision is limited by several key degeneracies within the cosmology and astrophysics sectors. For cosmic shear, the clustering amplitude $\sigma_8$ and matter density $\Omega_m$ roughly follow the $S_8=\sigma_8(\Omega_m/0.3)^{0.5}$ relation. In turn, $S_8$ is highly correlated with the intrinsic galaxy alignment amplitude $A_{\rm{IA}}$. For galaxy clustering, the bias $b_g$ is degenerate with both $\sigma_8$ and $\Omega_m$, as well as the stochasticity $r_g$. Moreover, the redshift evolution of IA and bias can cause further parameter confusion. A tomographic 2-pt probe combination can partially lift these degeneracies. In this work we demonstrate that a deep learning analysis of combined probes of weak gravitational lensing and galaxy clustering, which we call DeepLSS, can effectively break these degeneracies and yield significantly more precise constraints on $\sigma_8$, $\Omega_m$, $A_{\rm{IA}}$, $b_g$, $r_g$, and the IA redshift evolution parameter $\eta_{\rm{IA}}$. The most significant gains are in the IA sector: the precision of $A_{\rm{IA}}$ is increased by approximately 8x and is almost perfectly decorrelated from $S_8$. Galaxy bias $b_g$ is improved by 1.5x, stochasticity $r_g$ by 3x, and the redshift evolution $\eta_{\rm{IA}}$ and $\eta_b$ by 1.6x. Breaking these degeneracies leads to a significant gain in constraining power for $\sigma_8$ and $\Omega_m$, with the figure of merit improved by 15x. We give an intuitive explanation for the origin of this information gain using sensitivity maps. These results indicate that the fully numerical, map-based forward modeling approach to cosmological inference with machine learning may play an important role in upcoming LSS surveys. We discuss perspectives and challenges in its practical deployment for a full survey analysis.
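
As a small illustration of the $S_8$ relation quoted in the abstract above, the following sketch evaluates $S_8=\sigma_8(\Omega_m/0.3)^{0.5}$ and shows why it describes a degeneracy: different $(\sigma_8, \Omega_m)$ pairs can yield the same $S_8$. This is not code from the paper; the function names `s8` and `sigma8_on_s8_curve` and the numerical values are hypothetical and chosen only for illustration.

```python
import math


def s8(sigma_8: float, omega_m: float) -> float:
    """Evaluate S_8 = sigma_8 * (Omega_m / 0.3)^0.5."""
    return sigma_8 * math.sqrt(omega_m / 0.3)


def sigma8_on_s8_curve(s8_target: float, omega_m: float) -> float:
    """Return the sigma_8 that gives s8_target at a given Omega_m.

    This inverts the relation above, tracing out the curve of
    constant S_8 in the (Omega_m, sigma_8) plane.
    """
    return s8_target / math.sqrt(omega_m / 0.3)


if __name__ == "__main__":
    # Illustrative values only, not measurements from any survey.
    target = 0.8
    for omega_m in (0.25, 0.30, 0.35):
        sigma_8 = sigma8_on_s8_curve(target, omega_m)
        print(f"Omega_m={omega_m:.2f}  sigma_8={sigma_8:.4f}  "
              f"S_8={s8(sigma_8, omega_m):.4f}")
```

Every row printed by the loop shares the same $S_8$ even though $\sigma_8$ and $\Omega_m$ differ, which is the sense in which a probe sensitive mainly to $S_8$ cannot separate the two parameters on its own.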

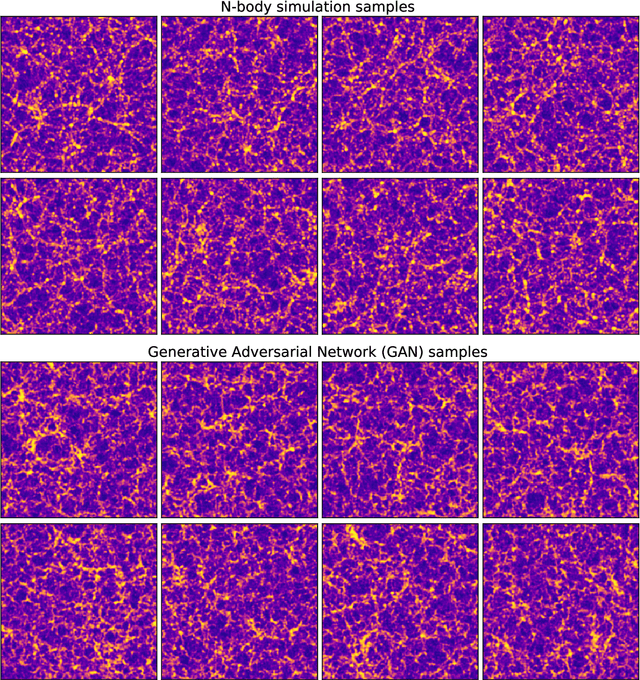

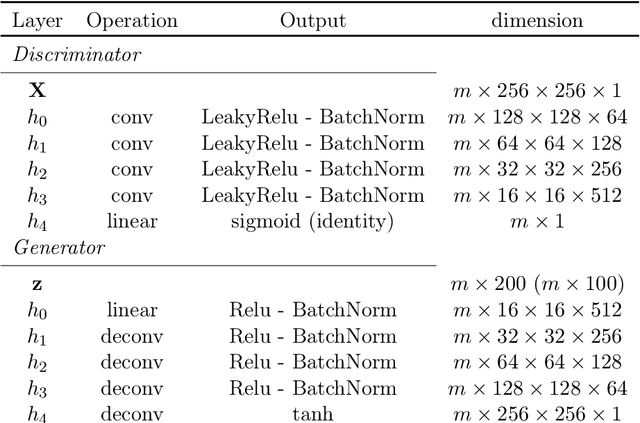

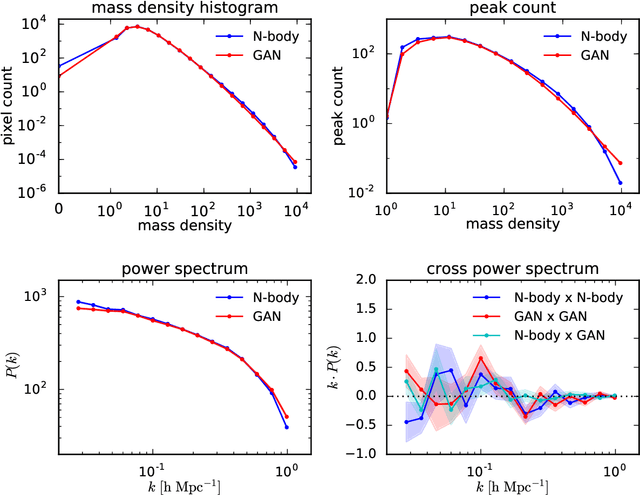

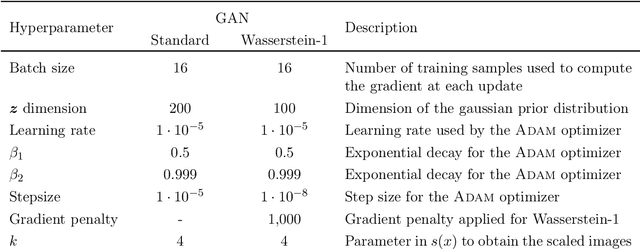

Fast Cosmic Web Simulations with Generative Adversarial Networks

Sep 20, 2018

Abstract: Dark matter in the universe evolves through gravity to form a complex network of halos, filaments, sheets and voids, which is known as the cosmic web. Computational models of the underlying physical processes, such as classical N-body simulations, are extremely resource intensive, as they track the action of gravity in an expanding universe using billions of particles as tracers of the cosmic matter distribution. Therefore, upcoming cosmology experiments will face a computational bottleneck that may limit the exploitation of their full scientific potential. To address this challenge, we demonstrate the application of a machine learning technique called Generative Adversarial Networks (GAN) to learn models that can efficiently generate new, physically realistic realizations of the cosmic web. Our training set is a small, representative sample of 2D image snapshots from N-body simulations of size 500 and 100 Mpc. We show that the GAN-produced results are qualitatively and quantitatively very similar to the originals. Generation of a new cosmic web realization with a GAN takes a fraction of a second, compared to the many hours needed by the N-body technique. We anticipate that GANs will therefore play an important role in providing extremely fast and precise simulations of the cosmic web in the era of large cosmological surveys, such as Euclid and LSST.
