Hyesu Jang

XPRESS: X-Band Radar Place Recognition via Elliptical Scan Shaping

Nov 12, 2025

Abstract:X-band radar serves as the primary sensor on maritime vessels, however, its application in autonomous navigation has been limited due to low sensor resolution and insufficient information content. To enable X-band radar-only autonomous navigation in maritime environments, this paper proposes a place recognition algorithm specifically tailored for X-band radar, incorporating an object density-based rule for efficient candidate selection and intentional degradation of radar detections to achieve robust retrieval performance. The proposed algorithm was evaluated on both public maritime radar datasets and our own collected dataset, and its performance was compared against state-of-the-art radar place recognition methods. An ablation study was conducted to assess the algorithm's performance sensitivity with respect to key parameters.

* 9 pages, 9 figures, Published in IEEE RA-L

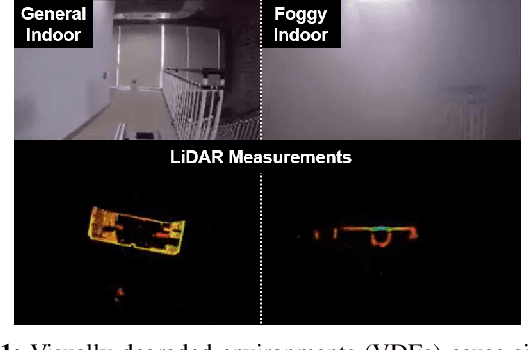

Ground-Optimized 4D Radar-Inertial Odometry via Continuous Velocity Integration using Gaussian Process

Feb 12, 2025

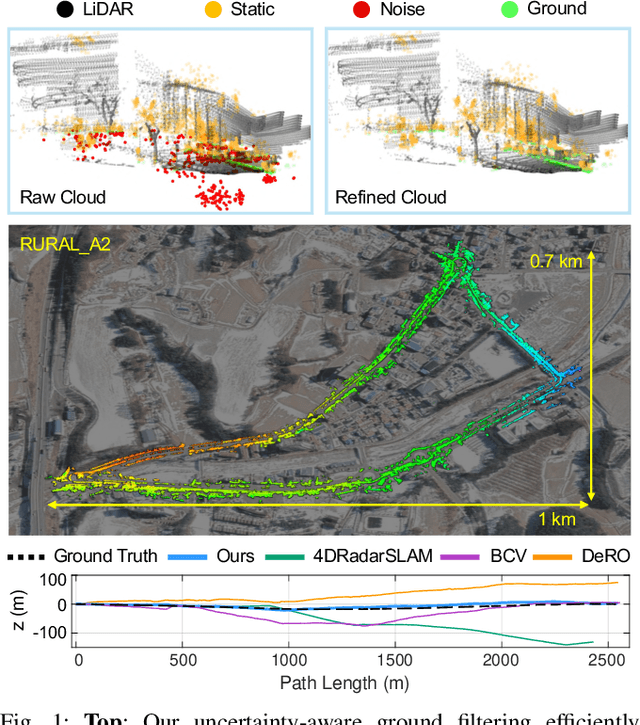

Abstract:Radar ensures robust sensing capabilities in adverse weather conditions, yet challenges remain due to its high inherent noise level. Existing radar odometry has overcome these challenges with strategies such as filtering spurious points, exploiting Doppler velocity, or integrating with inertial measurements. This paper presents two novel improvements beyond the existing radar-inertial odometry: ground-optimized noise filtering and continuous velocity preintegration. Despite the widespread use of ground planes in LiDAR odometry, imprecise ground point distributions of radar measurements cause naive plane fitting to fail. Unlike plane fitting in LiDAR, we introduce a zone-based uncertainty-aware ground modeling specifically designed for radar. Secondly, we note that radar velocity measurements can be better combined with IMU for a more accurate preintegration in radar-inertial odometry. Existing methods often ignore temporal discrepancies between radar and IMU by simplifying the complexities of asynchronous data streams with discretized propagation models. Tackling this issue, we leverage GP and formulate a continuous preintegration method for tightly integrating 3-DOF linear velocity with IMU, facilitating full 6-DOF motion directly from the raw measurements. Our approach demonstrates remarkable performance (less than 1% vertical drift) in public datasets with meticulous conditions, illustrating substantial improvement in elevation accuracy. The code will be released as open source for the community: https://github.com/wooseongY/Go-RIO.

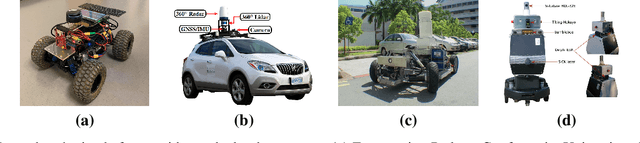

HeRCULES: Heterogeneous Radar Dataset in Complex Urban Environment for Multi-session Radar SLAM

Feb 04, 2025

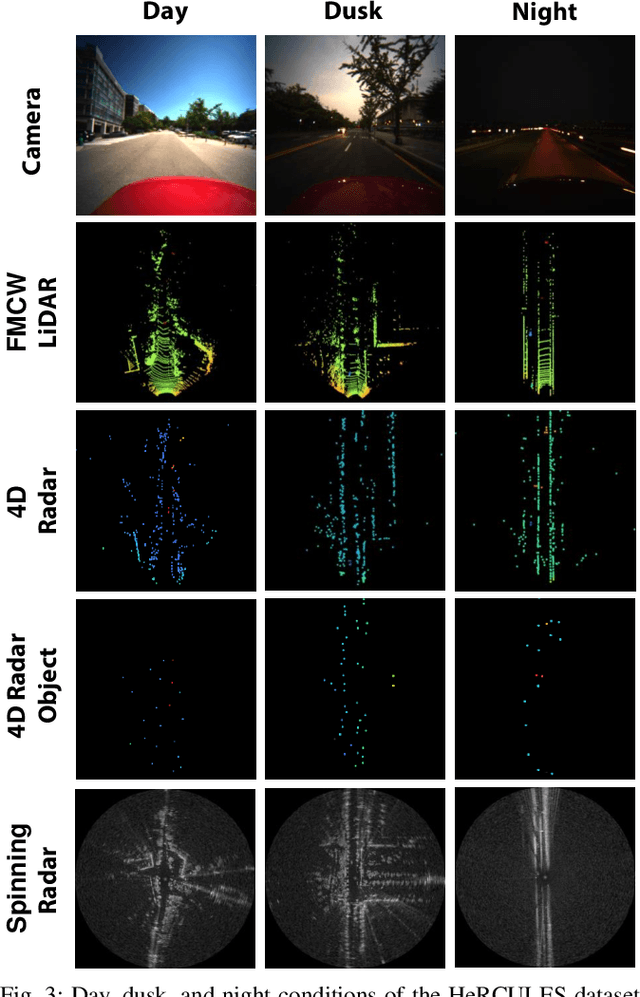

Abstract:Recently, radars have been widely featured in robotics for their robustness in challenging weather conditions. Two commonly used radar types are spinning radars and phased-array radars, each offering distinct sensor characteristics. Existing datasets typically feature only a single type of radar, leading to the development of algorithms limited to that specific kind. In this work, we highlight that combining different radar types offers complementary advantages, which can be leveraged through a heterogeneous radar dataset. Moreover, this new dataset fosters research in multi-session and multi-robot scenarios where robots are equipped with different types of radars. In this context, we introduce the HeRCULES dataset, a comprehensive, multi-modal dataset with heterogeneous radars, FMCW LiDAR, IMU, GPS, and cameras. This is the first dataset to integrate 4D radar and spinning radar alongside FMCW LiDAR, offering unparalleled localization, mapping, and place recognition capabilities. The dataset covers diverse weather and lighting conditions and a range of urban traffic scenarios, enabling a comprehensive analysis across various environments. The sequence paths with multiple revisits and ground truth pose for each sensor enhance its suitability for place recognition research. We expect the HeRCULES dataset to facilitate odometry, mapping, place recognition, and sensor fusion research. The dataset and development tools are available at https://sites.google.com/view/herculesdataset.

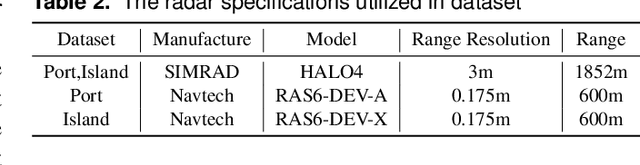

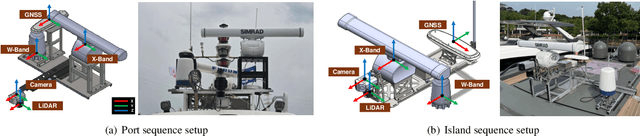

MOANA: Multi-Radar Dataset for Maritime Odometry and Autonomous Navigation Application

Dec 05, 2024

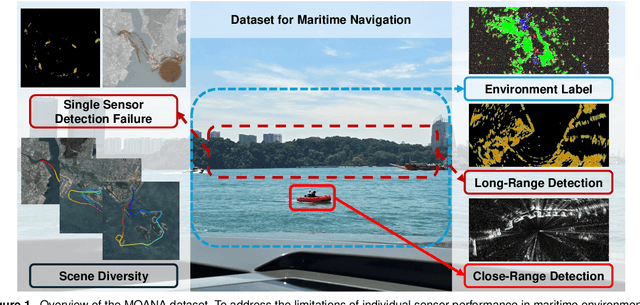

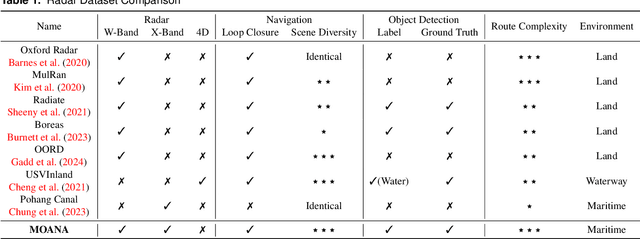

Abstract:Maritime environmental sensing requires overcoming challenges from complex conditions such as harsh weather, platform perturbations, large dynamic objects, and the requirement for long detection ranges. While cameras and LiDAR are commonly used in ground vehicle navigation, their applicability in maritime settings is limited by range constraints and hardware maintenance issues. Radar sensors, however, offer robust long-range detection capabilities and resilience to physical contamination from weather and saline conditions, making it a powerful sensor for maritime navigation. Among various radar types, X-band radar (e.g., marine radar) is widely employed for maritime vessel navigation, providing effective long-range detection essential for situational awareness and collision avoidance. Nevertheless, it exhibits limitations during berthing operations where close-range object detection is critical. To address this shortcoming, we incorporate W-band radar (e.g., Navtech imaging radar), which excels in detecting nearby objects with a higher update rate. We present a comprehensive maritime sensor dataset featuring multi-range detection capabilities. This dataset integrates short-range LiDAR data, medium-range W-band radar data, and long-range X-band radar data into a unified framework. Additionally, it includes object labels for oceanic object detection usage, derived from radar and stereo camera images. The dataset comprises seven sequences collected from diverse regions with varying levels of estimation difficulty, ranging from easy to challenging, and includes common locations suitable for global localization tasks. This dataset serves as a valuable resource for advancing research in place recognition, odometry estimation, SLAM, object detection, and dynamic object elimination within maritime environments. Dataset can be found in following link: https://sites.google.com/view/rpmmoana

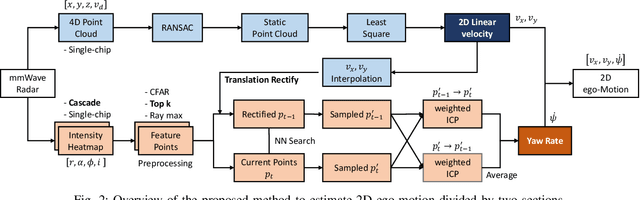

2D Ego-Motion with Yaw Estimation using Only mmWave Radars via Two-Way weighted ICP

Mar 31, 2024

Abstract:The interest in single-chip mmWave Radar is driven by their compact form factor, cost-effectiveness, and robustness under harsh environmental conditions. Despite its promising attributes, the principal limitation of mmWave radar lies in its capacity for autonomous yaw rate estimation. Conventional solutions have often resorted to integrating inertial measurement unit (IMU) or deploying multiple radar units to circumvent this shortcoming. This paper introduces an innovative methodology for two-dimensional ego-motion estimation, focusing on yaw rate deduction, utilizing solely mmWave radar sensors. By applying a weighted Iterated Closest Point (ICP) algorithm to register processed points derived from heatmap data, our method facilitates 2D ego-motion estimation devoid of prior information. Through experimental validation, we verified the effectiveness and promise of our technique for ego-motion estimation using exclusively radar data.

Imaging radar and LiDAR image translation for 3-DOF extrinsic calibration

Mar 27, 2024Abstract:The integration of sensor data is crucial in the field of robotics to take full advantage of the various sensors employed. One critical aspect of this integration is determining the extrinsic calibration parameters, such as the relative transformation, between each sensor. The use of data fusion between complementary sensors, such as radar and LiDAR, can provide significant benefits, particularly in harsh environments where accurate depth data is required. However, noise included in radar sensor data can make the estimation of extrinsic calibration challenging. To address this issue, we present a novel framework for the extrinsic calibration of radar and LiDAR sensors, utilizing CycleGAN as amethod of image-to-image translation. Our proposed method employs translating radar bird-eye-view images into LiDAR-style images to estimate the 3-DOF extrinsic parameters. The use of image registration techniques, as well as deskewing based on sensor odometry and B-spline interpolation, is employed to address the rolling shutter effect commonly present in spinning sensors. Our method demonstrates a notable improvement in extrinsic calibration compared to filter-based methods using the MulRan dataset.

LodeStar: Maritime Radar Descriptor for Semi-Direct Radar Odometry

Mar 05, 2024

Abstract:Maritime radars are prevalently adopted to capture the vessel's omnidirectional data as imagery. Nevertheless, inherent challenges persist with marine radars, including limited frequency, suboptimal resolution, and indeterminate detections. Additionally, the scarcity of discernible landmarks in the vast marine expanses remains a challenge, resulting in consecutive scenes that often lack matching feature points. In this context, we introduce a resilient maritime radar scan representation LodeStar, and an enhanced feature extraction technique tailored for marine radar applications. Moreover, we embark on estimating marine radar odometry utilizing a semi-direct approach. LodeStar-based approach markedly attenuates the errors in odometry estimation, and our assertion is corroborated through meticulous experimental validation.

* IEEE Robotics and Automation Letter

RaPlace: Place Recognition for Imaging Radar using Radon Transform and Mutable Threshold

Jul 10, 2023

Abstract:Due to the robustness in sensing, radar has been highlighted, overcoming harsh weather conditions such as fog and heavy snow. In this paper, we present a novel radar-only place recognition that measures the similarity score by utilizing Radon-transformed sinogram images and cross-correlation in frequency domain. Doing so achieves rigid transform invariance during place recognition, while ignoring the effects of radar multipath and ring noises. In addition, we compute the radar similarity distance using mutable threshold to mitigate variability of the similarity score, and reduce the time complexity of processing a copious radar data with hierarchical retrieval. We demonstrate the matching performance for both intra-session loop-closure detection and global place recognition using a publicly available imaging radar datasets. We verify reliable performance compared to existing stable radar place recognition method. Furthermore, codes for the proposed imaging radar place recognition is released for community.

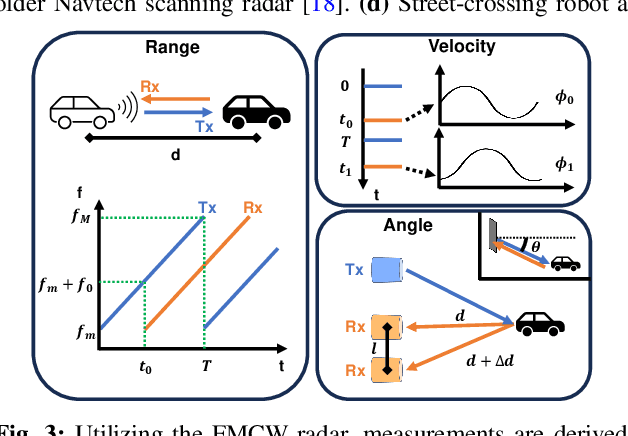

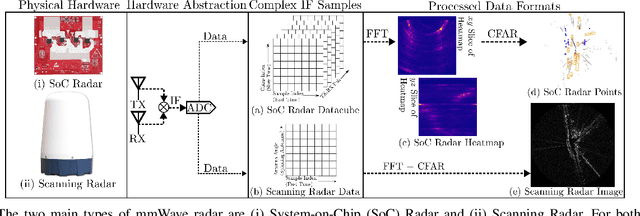

A New Wave in Robotics: Survey on Recent mmWave Radar Applications in Robotics

May 02, 2023

Abstract:We survey the current state of millimeterwave (mmWave) radar applications in robotics with a focus on unique capabilities, and discuss future opportunities based on the state of the art. Frequency Modulated Continuous Wave (FMCW) mmWave radars operating in the 76--81GHz range are an appealing alternative to lidars, cameras and other sensors operating in the near visual spectrum. Radar has been made more widely available in new packaging classes, more convenient for robotics and its longer wavelengths have the ability to bypass visual clutter such as fog, dust, and smoke. We begin by covering radar principles as they relate to robotics. We then review the relevant new research across a broad spectrum of robotics applications beginning with motion estimation, localization, and mapping. We then cover object detection and classification, and then close with an analysis of current datasets and calibration techniques that provide entry points into radar research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge