Hongguang Fu

MultiMath: Bridging Visual and Mathematical Reasoning for Large Language Models

Aug 30, 2024

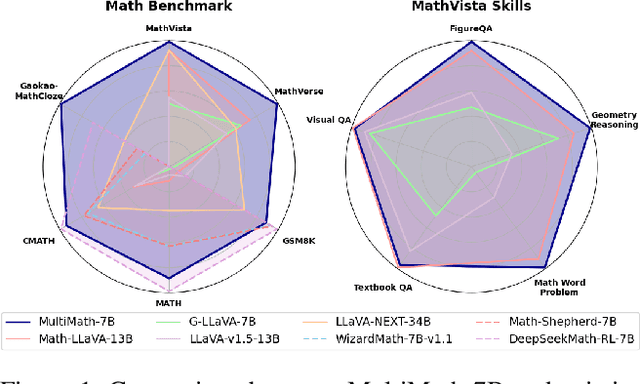

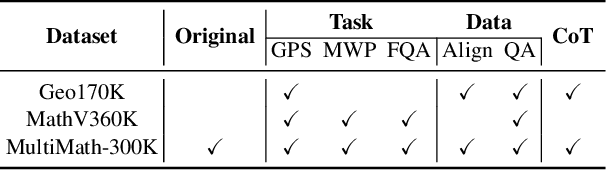

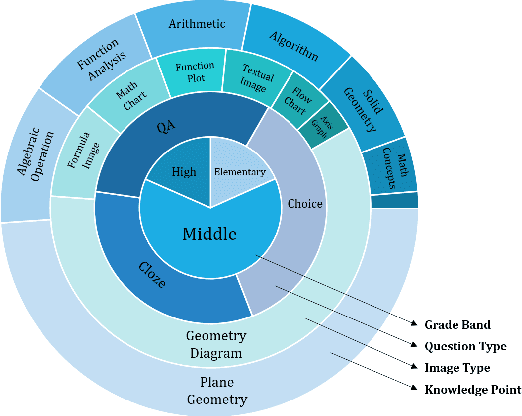

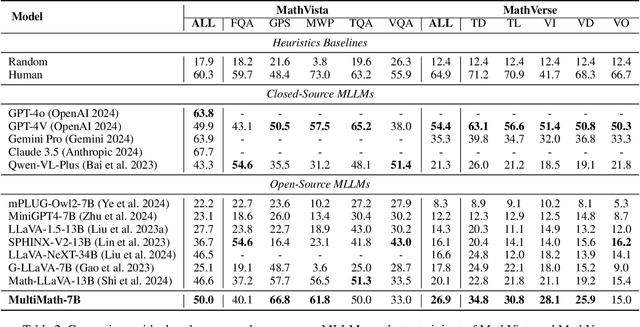

Abstract: The rapid development of large language models (LLMs) has spurred extensive research into their domain-specific capabilities, particularly mathematical reasoning. However, most open-source LLMs focus solely on mathematical reasoning and neglect the integration of visual input, even though many mathematical tasks rely on visual inputs such as geometric diagrams, charts, and function plots. To fill this gap, we introduce MultiMath-7B, a multimodal large language model that bridges math and vision. MultiMath-7B is trained through a four-stage process, focusing on vision-language alignment, visual and math instruction-tuning, and process-supervised reinforcement learning. We also construct a novel, diverse, and comprehensive multimodal mathematical dataset, MultiMath-300K, which spans K-12 levels with image captions and step-wise solutions. MultiMath-7B achieves state-of-the-art (SOTA) performance among open-source models on existing multimodal mathematical benchmarks and also excels on text-only mathematical benchmarks. Our model and dataset are available at https://github.com/pengshuai-rin/MultiMath.

Incorporating Graph Attention Mechanism into Geometric Problem Solving Based on Deep Reinforcement Learning

Mar 14, 2024

Abstract: In the context of online education, designing an automatic solver for geometric problems has been considered a crucial step towards general math Artificial Intelligence (AI), empowered by natural language understanding and traditional logical inference. In most instances, problems are addressed by adding auxiliary components such as lines or points. However, adding auxiliary components automatically is challenging because selecting suitable components is complex, especially when pivotal decisions have to be made. State-of-the-art performance has been achieved by exhausting all possible strategies from the category library to identify the one with the maximum likelihood. However, an extensive strategy search has to be applied to trade accuracy for efficiency. To add auxiliary components automatically and efficiently, we present a deep reinforcement learning framework based on a language model such as BERT. We first apply a graph attention mechanism, called AttnStrategy, to reduce the strategy search space; it focuses only on the conclusion-related components. Meanwhile, a novel algorithm, named Automatically Adding Auxiliary Components using Reinforcement Learning framework (A3C-RL), is proposed by forcing an agent to select top strategies, which incorporates the AttnStrategy and BERT as the memory components. Results from extensive experiments show that the proposed A3C-RL algorithm can substantially enhance the average precision by 32.7% compared to the traditional MCTS. In addition, the A3C-RL algorithm outperforms humans on the geometric questions from the annual University Entrance Mathematical Examination of China.

Unsupervised Sentiment Analysis by Transferring Multi-source Knowledge

May 09, 2021

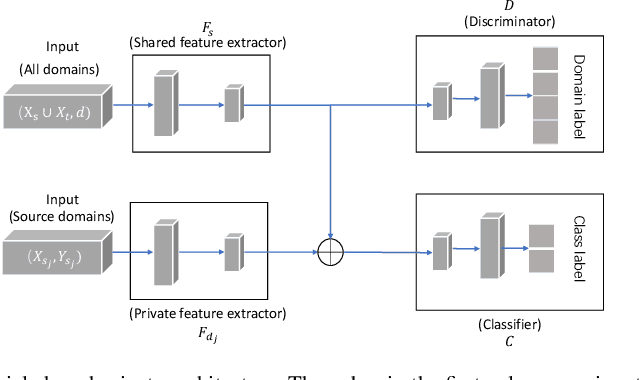

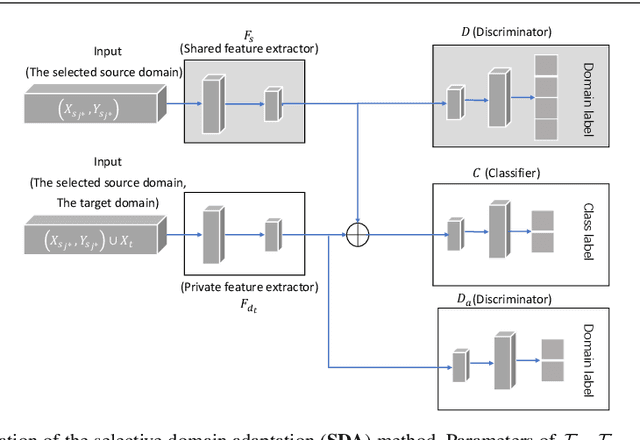

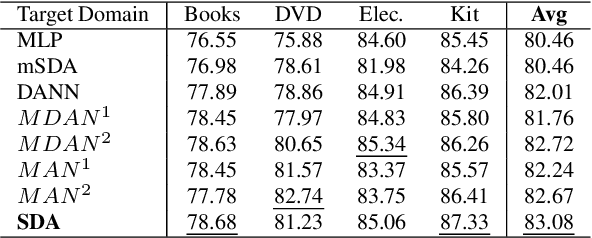

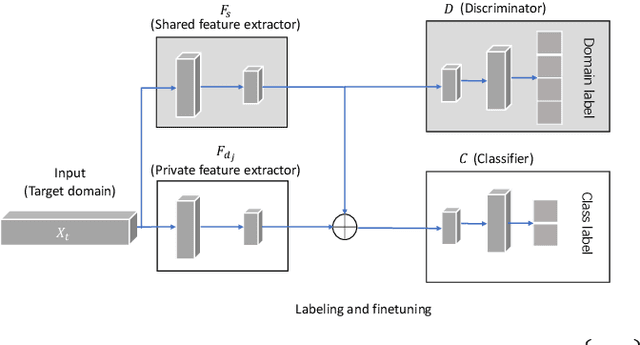

Abstract: Sentiment analysis (SA) is an important research area in cognitive computation; thus, in-depth studies of patterns of sentiment analysis are necessary. At present, rich-resource data-based SA has been well developed, while the more challenging and practical multi-source unsupervised SA (i.e., SA on a target domain by transferring from multiple source domains) is seldom studied. The challenges behind this problem mainly lie in the lack of supervision information, the semantic gaps among domains (i.e., domain shifts), and the loss of knowledge. However, existing methods either lack the capacity to distinguish the semantic gaps among domains or lose private knowledge. To alleviate these problems, we propose a two-stage domain adaptation framework. In the first stage, a multi-task methodology-based shared-private architecture is employed to explicitly model the domain-common features and the domain-specific features for the labeled source domains. In the second stage, two elaborate mechanisms are embedded in the shared-private architecture to transfer knowledge from multiple source domains. The first mechanism is a selective domain adaptation (SDA) method, which transfers knowledge from the closest source domain. The second mechanism is a target-oriented ensemble (TOE) method, in which knowledge is transferred through a well-designed ensemble method. Extensive experimental evaluations verify that the proposed framework outperforms unsupervised state-of-the-art competitors. The experiments also show that transferring from source domains with very different distributions may degrade target-domain performance, so it is crucial to choose the proper source domains to transfer from.

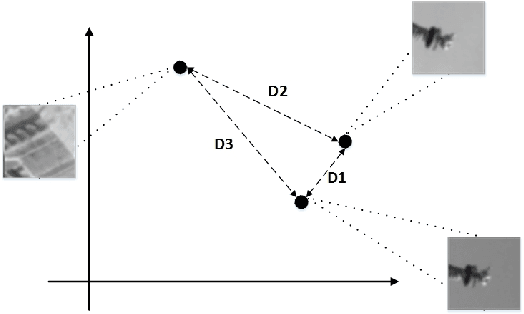

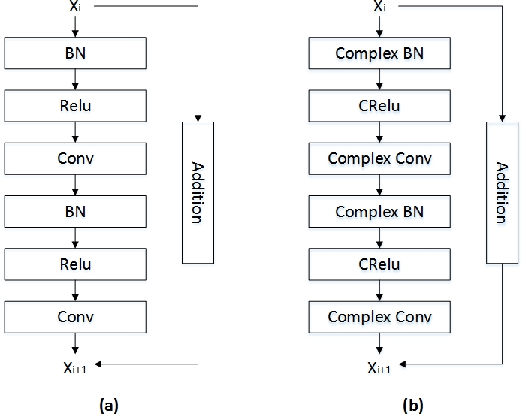

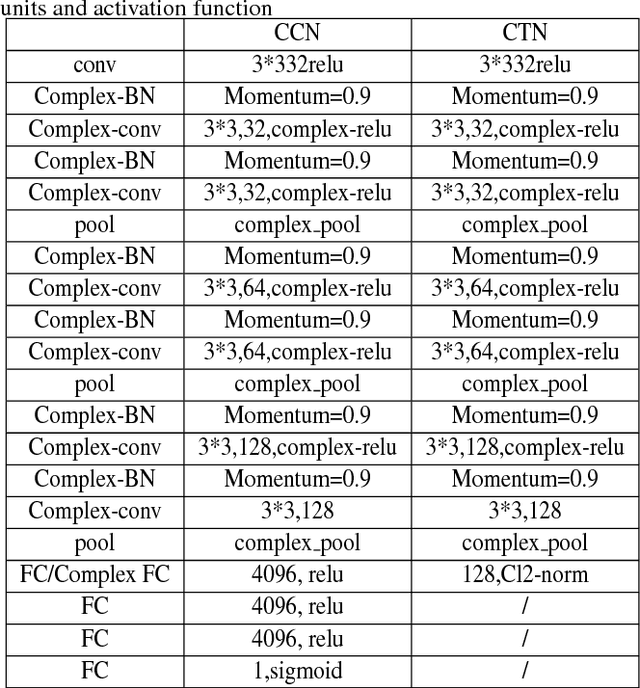

Utilizing Complex-valued Network for Learning to Compare Image Patches

Nov 29, 2018

Abstract: At present, the great achievements of convolutional neural networks (CNNs) in feature and metric learning have attracted many researchers. However, the vast majority of deep network architectures use real-valued representations. Complex-valued networks have seldom been studied, owing to the absence of effective models and of a suitable distance between complex-valued vectors. Motivated by recent work showing that complex vectors have a richer representational capacity, and by reports of efficient complex blocks, we propose a new approach for learning image descriptors with complex numbers to compare image patches. We also propose a new architecture that learns an image similarity function directly with a complex-valued network. We show that our models can significantly outperform the state of the art on benchmark datasets. We make the source code of our models publicly available.
