Holger Schulze

Classification at the Accuracy Limit -- Facing the Problem of Data Ambiguity

Jun 04, 2022

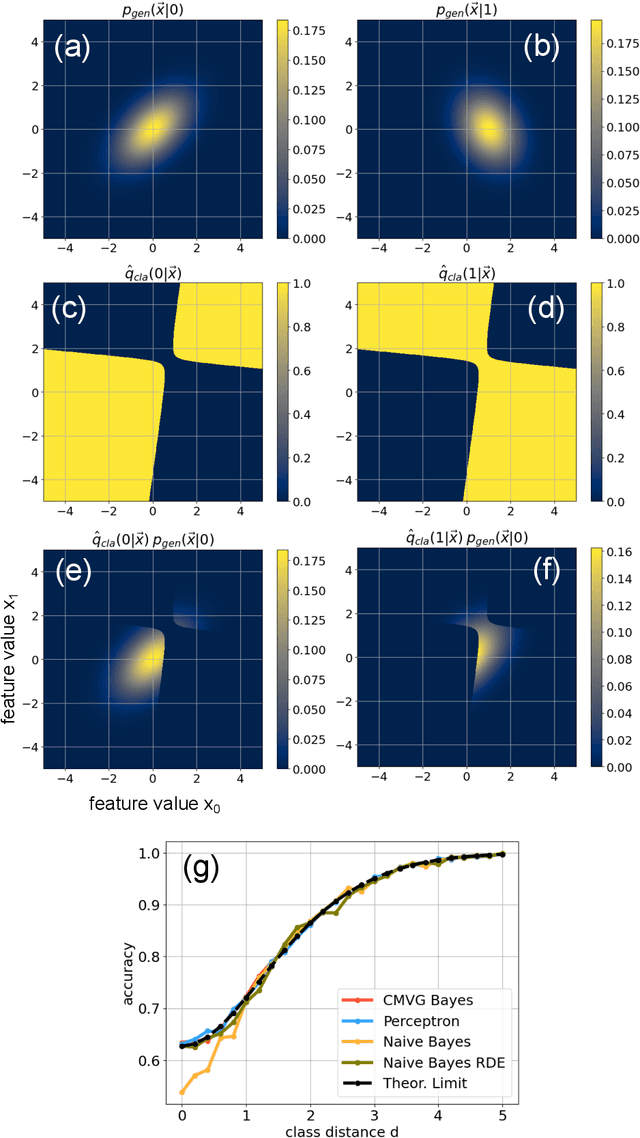

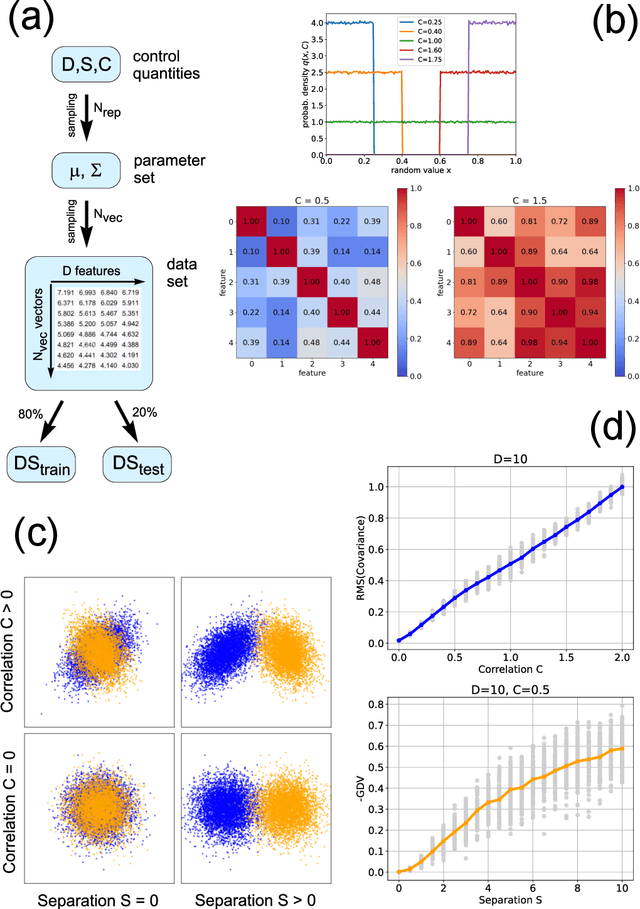

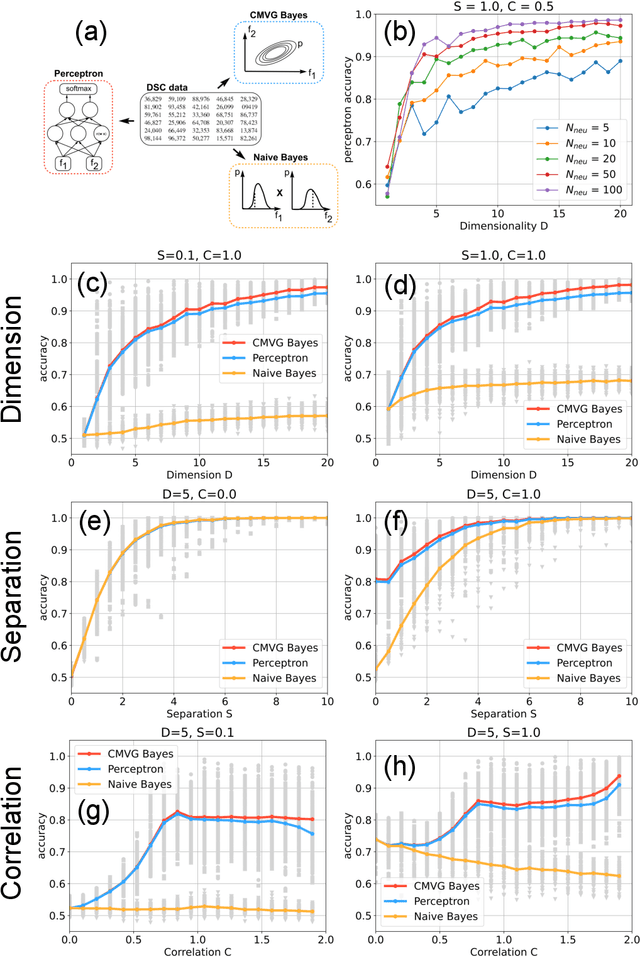

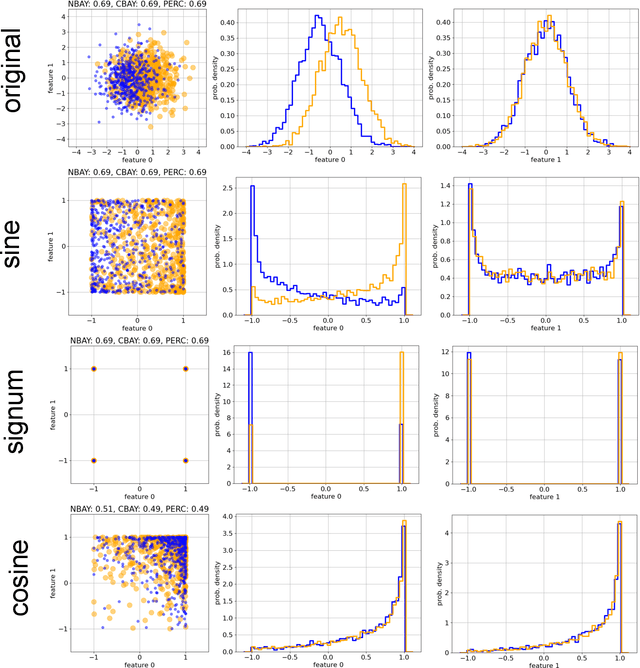

Abstract: Data classification, the process of analyzing data and organizing it into categories, is a fundamental computing problem of natural and artificial information processing systems. Ideally, the performance of classifier models would be evaluated using unambiguous data sets, where the 'correct' assignment of category labels to the input data vectors is unequivocal. In real-world problems, however, a significant fraction of actually occurring data vectors will be located in a boundary zone between or outside of all categories, so that perfect classification cannot even in principle be achieved. We derive the theoretical limit for classification accuracy that arises from the overlap of data categories. By using a surrogate data generation model with adjustable statistical properties, we show that sufficiently powerful classifiers based on completely different principles, such as perceptrons and Bayesian models, all perform at this universal accuracy limit. Remarkably, the accuracy limit is not affected by applying non-linear transformations to the data, even if these transformations are non-reversible and drastically reduce the information content of the input data. We compare emerging data embeddings produced by supervised and unsupervised training, using MNIST and human EEG recordings during sleep. We find that categories are not only well separated in the final layers of classifiers trained with back-propagation, but to a smaller degree also after unsupervised dimensionality reduction. This suggests that human-defined categories, such as hand-written digits or sleep stages, can indeed be considered as 'natural kinds'.
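The idea of an accuracy limit caused by overlapping categories can be illustrated with a toy case. The sketch below is not the surrogate data model from the paper; it assumes two equally likely classes drawn from one-dimensional Gaussians with unit variance, whose means lie `separation` apart. For that assumed setup the best possible accuracy is Φ(separation / 2), where Φ is the standard normal cumulative distribution function. The function names are hypothetical.

```python
import math
import random


def bayes_accuracy_limit(separation: float) -> float:
    """Best achievable accuracy for two equally likely, unit-variance
    1D Gaussian classes whose means are `separation` apart.

    Equals Phi(separation / 2), written here with the error function.
    """
    return 0.5 * (1.0 + math.erf(separation / (2.0 * math.sqrt(2.0))))


def simulate_optimal_classifier(separation: float,
                                n_samples: int = 200_000,
                                seed: int = 0) -> float:
    """Monte Carlo accuracy of the midpoint-threshold rule, which is the
    optimal decision rule for this symmetric toy problem (assumed setup)."""
    rng = random.Random(seed)
    threshold = separation / 2.0
    correct = 0
    for _ in range(n_samples):
        label = rng.randrange(2)                  # class 0 or 1, equal priors
        x = rng.gauss(label * separation, 1.0)    # sample from that class
        predicted = 1 if x > threshold else 0
        correct += int(predicted == label)
    return correct / n_samples


if __name__ == "__main__":
    for d in (0.0, 1.0, 2.0, 4.0):
        print(d, bayes_accuracy_limit(d), simulate_optimal_classifier(d))
```

In this toy setting the simulated accuracy approaches the analytic limit but cannot exceed it beyond sampling noise, no matter which classifier is used, because the ambiguity lies in the data rather than in the model.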

Predictive Coding and Stochastic Resonance: Towards a Unified Theory of Auditory (Phantom) Perception

Apr 07, 2022

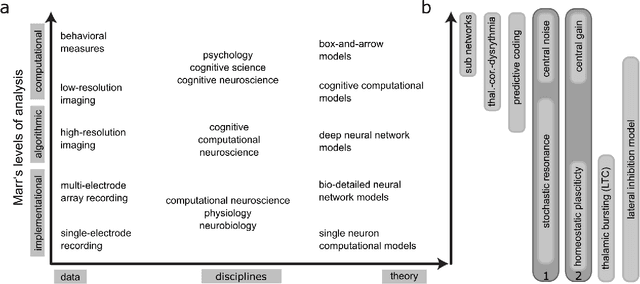

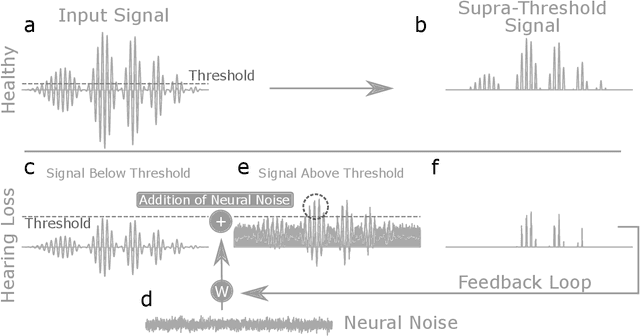

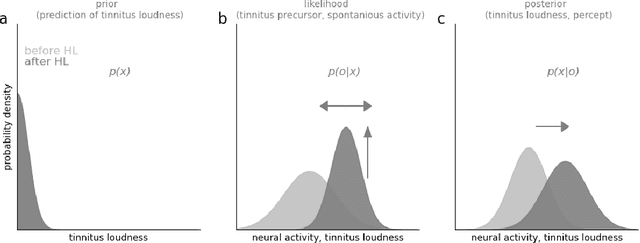

Abstract: Cognitive computational neuroscience (CCN) suggests that to gain a mechanistic understanding of brain function, hypothesis-driven experiments should be accompanied by biologically plausible computational models. This novel research paradigm offers a way from alchemy to chemistry in auditory neuroscience. With a special focus on tinnitus - as the prime example of auditory phantom perception - we review recent work at the intersection of artificial intelligence, psychology, and neuroscience, foregrounding the idea that experiments will yield mechanistic insight only when employed to test formal or computational models. This view challenges the popular notion that tinnitus research is primarily data limited, and that producing large, multi-modal, and complex data sets, analyzed with advanced data analysis algorithms, will lead to fundamental insights into how tinnitus emerges. We conclude that two fundamental processing principles - being ubiquitous in the brain - best fit a vast number of experimental results and therefore provide the most explanatory power: predictive coding as a top-down, and stochastic resonance as a complementary bottom-up mechanism. Furthermore, we argue that even though contemporary artificial intelligence and machine learning approaches largely lack biological plausibility, the models to be constructed will have to draw on concepts from these fields, since they provide a formal account of the requisite computations that underlie brain function. Nevertheless, biological fidelity will have to be addressed, allowing for testing possible treatment strategies in silico, before application in animal or patient studies. This iteration of computational and empirical studies may help to open the "black boxes" of both machine learning and the human brain.

How deep is deep enough? - Optimizing deep neural network architecture

Nov 05, 2018

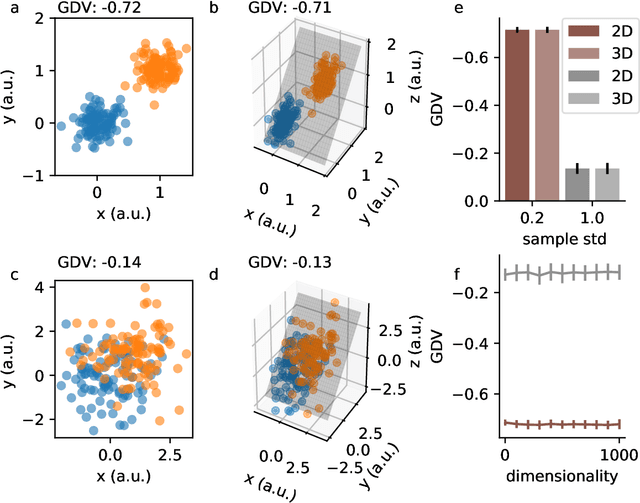

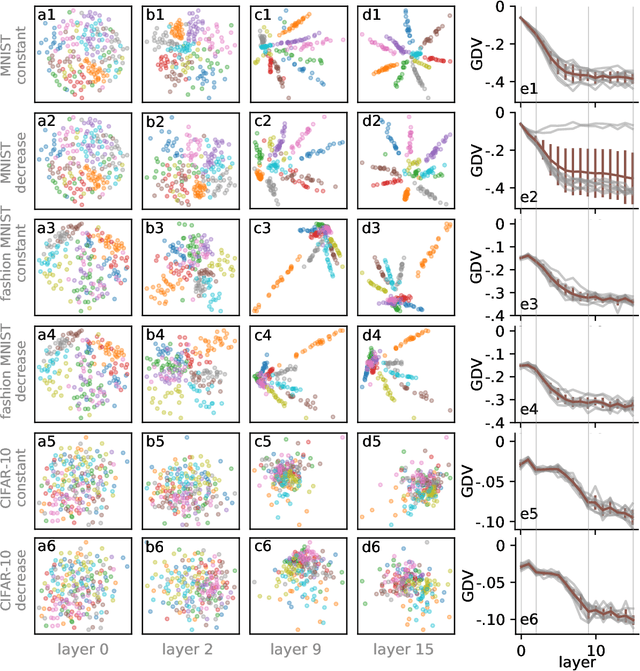

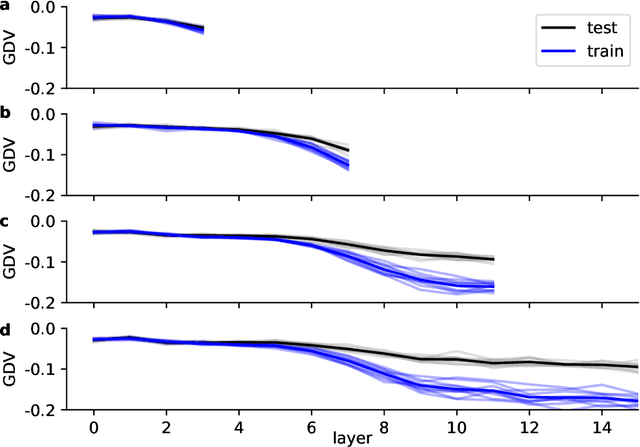

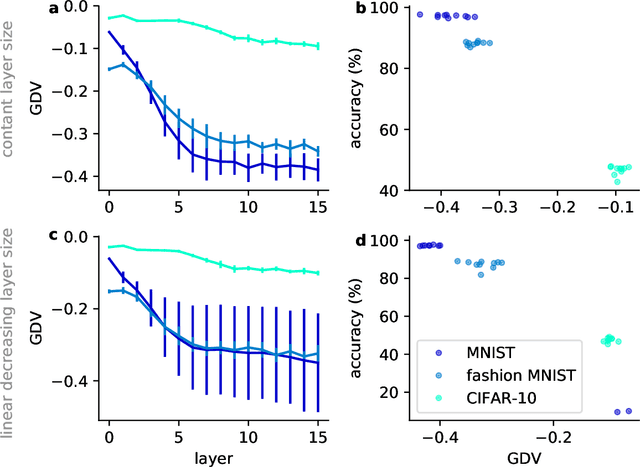

Abstract: Deep neural networks use stacked layers of feature detectors to repeatedly transform the input data, so that structurally different classes of input become well separated in the final layer. While the method has turned out to be extremely powerful in many applications, its success depends critically on the correct choice of hyperparameters, in particular the number of network layers. Here, we introduce a new measure, called the generalized discrimination value (GDV), which quantifies how well different object classes separate in each layer. Due to its definition, the GDV is invariant to translation and scaling of the input data, independent of the number of features, as well as independent of the number and permutation of the neurons within a layer. We compute the GDV in each layer of a Deep Belief Network that was trained unsupervised on the MNIST data set. Strikingly, we find that the GDV first improves with each successive network layer, but then gets worse again beyond layer 30, thus indicating the optimal network depth for this data classification task. Our further investigations suggest that the GDV can serve as a universal tool to determine the optimal number of layers in deep neural networks for any type of input data.
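The abstract does not state the GDV formula, so the following is only a sketch of a class-separation score with the invariances it describes, not the authors' definition. It assumes one plausible construction: z-score each feature (giving translation and scale invariance), take the mean intra-class distance minus the mean inter-class distance, and divide by the square root of the number of features. The name `separation_score`, the 0.5 scaling factor, and the normalization are all assumptions made for illustration. More negative values mean better separated classes.

```python
import math
from itertools import combinations
from statistics import mean, pstdev


def zscore_columns(points, scale=0.5):
    """Z-score every feature, then multiply by `scale` (assumed factor).
    Features with zero spread are left centered but unscaled."""
    columns = list(zip(*points))
    mus = [mean(c) for c in columns]
    sigmas = [pstdev(c) or 1.0 for c in columns]
    return [tuple(scale * (x - m) / s for x, m, s in zip(p, mus, sigmas))
            for p in points]


def mean_intra(points):
    """Mean Euclidean distance between all pairs inside one class."""
    return mean(math.dist(a, b) for a, b in combinations(points, 2))


def mean_inter(a_points, b_points):
    """Mean Euclidean distance between points of two different classes."""
    return mean(math.dist(a, b) for a in a_points for b in b_points)


def separation_score(points, labels):
    """Hypothetical GDV-like score; requires at least two classes with
    at least two points each."""
    scaled = zscore_columns(points)
    dims = len(scaled[0])
    classes = {}
    for point, label in zip(scaled, labels):
        classes.setdefault(label, []).append(point)
    groups = list(classes.values())
    intra = mean(mean_intra(g) for g in groups)
    inter = mean(mean_inter(a, b) for a, b in combinations(groups, 2))
    return (intra - inter) / math.sqrt(dims)


if __name__ == "__main__":
    pts = [(0, 0), (0, 1), (1, 0), (1, 1),
           (10, 10), (10, 11), (11, 10), (11, 11)]
    print(separation_score(pts, [0, 0, 0, 0, 1, 1, 1, 1]))  # clustered labels
    print(separation_score(pts, [0, 1, 0, 1, 0, 1, 0, 1]))  # mixed labels
```

Applied to the activations of each layer in turn, a score of this kind could be plotted against layer index to look for the depth at which separation stops improving, which is the use the abstract describes for the GDV.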
