Holger Banzhaf

Highly Accurate and Diverse Traffic Data: The DeepScenario Open 3D Dataset

Apr 24, 2025

Abstract:Accurate 3D trajectory data is crucial for advancing autonomous driving. Yet, traditional datasets are usually captured by fixed sensors mounted on a car and are susceptible to occlusion. Additionally, such an approach can precisely reconstruct the dynamic environment in the close vicinity of the measurement vehicle only, while neglecting objects that are further away. In this paper, we introduce the DeepScenario Open 3D Dataset (DSC3D), a high-quality, occlusion-free dataset of 6 degrees of freedom bounding box trajectories acquired through a novel monocular camera drone tracking pipeline. Our dataset includes more than 175,000 trajectories of 14 types of traffic participants and significantly exceeds existing datasets in terms of diversity and scale, containing many unprecedented scenarios such as complex vehicle-pedestrian interaction on highly populated urban streets and comprehensive parking maneuvers from entry to exit. DSC3D dataset was captured in five various locations in Europe and the United States and include: a parking lot, a crowded inner-city, a steep urban intersection, a federal highway, and a suburban intersection. Our 3D trajectory dataset aims to enhance autonomous driving systems by providing detailed environmental 3D representations, which could lead to improved obstacle interactions and safety. We demonstrate its utility across multiple applications including motion prediction, motion planning, scenario mining, and generative reactive traffic agents. Our interactive online visualization platform and the complete dataset are publicly available at app.deepscenario.com, facilitating research in motion prediction, behavior modeling, and safety validation.

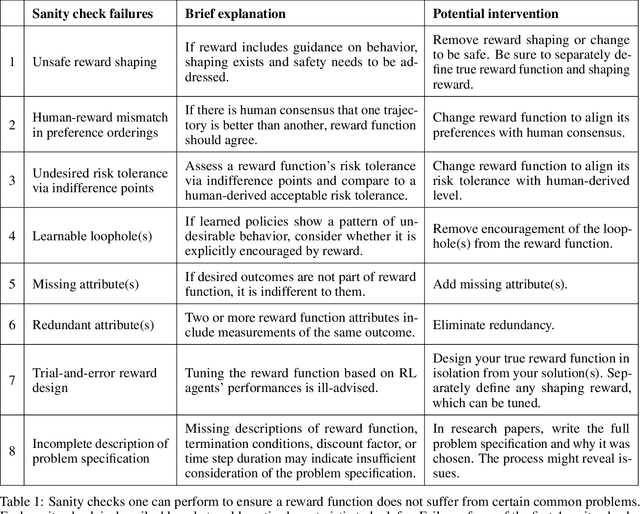

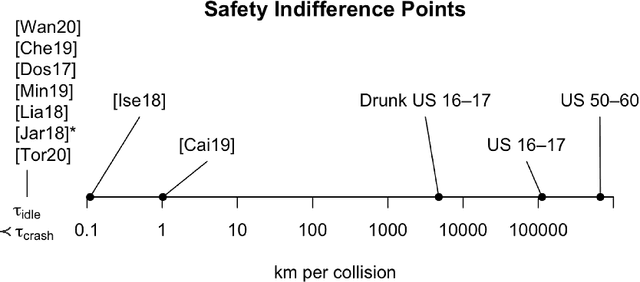

Reward (Mis)design for Autonomous Driving

Apr 28, 2021

Abstract:This paper considers the problem of reward design for autonomous driving (AD), with insights that are also applicable to the design of cost functions and performance metrics more generally. Herein we develop 8 simple sanity checks for identifying flaws in reward functions. The sanity checks are applied to reward functions from past work on reinforcement learning (RL) for autonomous driving, revealing near-universal flaws in reward design for AD that might also exist pervasively across reward design for other tasks. Lastly, we explore promising directions that may help future researchers design reward functions for AD.

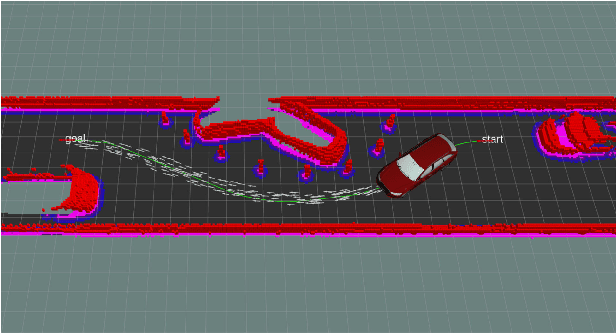

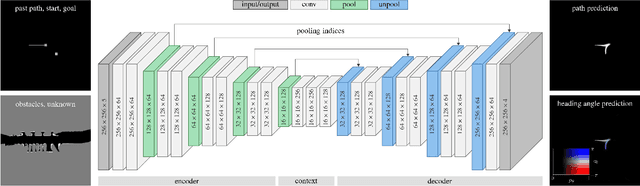

Learning to Predict Ego-Vehicle Poses for Sampling-Based Nonholonomic Motion Planning

Feb 01, 2019

Abstract:Sampling-based motion planning is an effective tool to compute safe trajectories for automated vehicles in complex environments. However, a fast convergence to the optimal solution can only be ensured with the use of problem-specific sampling distributions. Due to the large variety of driving situations within the context of automated driving, it is very challenging to manually design such distributions. This paper introduces therefore a data-driven approach utilizing a deep convolutional neural network (CNN): Given the current driving situation, future ego-vehicle poses can be directly generated from the output of the CNN allowing to guide the motion planner efficiently towards the optimal solution. A benchmark highlights that the CNN predicts future vehicle poses with a higher accuracy compared to uniform sampling and a state-of-the-art A*-based approach. Combining this CNN-guided sampling with the motion planner Bidirectional RRT* reduces the computation time by up to an order of magnitude and yields a faster convergence to a lower cost as well as a success rate of 100 % in the tested scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge