Hiroaki Funayama

Automatic Feedback Generation for Short Answer Questions using Answer Diagnostic Graphs

Jan 27, 2025

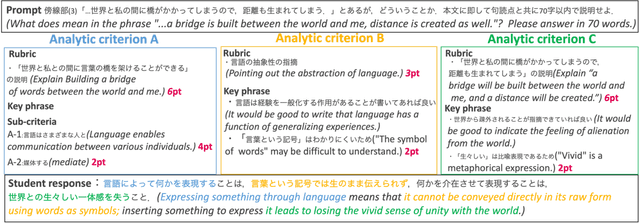

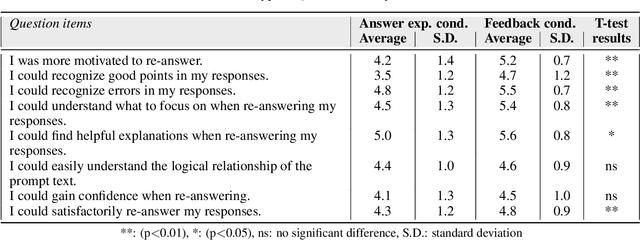

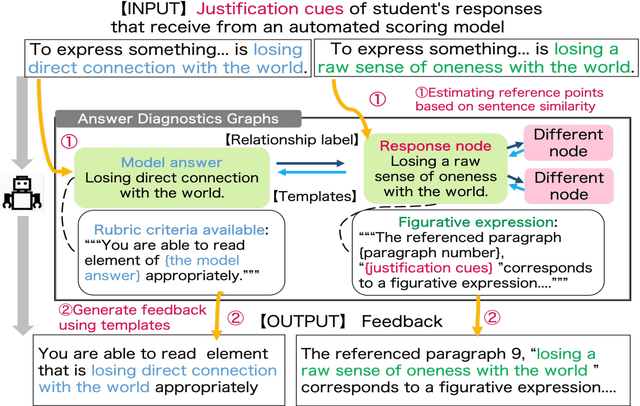

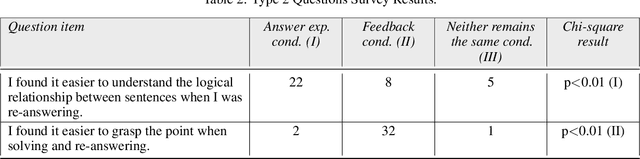

Abstract: Short-reading comprehension questions help students understand text structure but lack effective feedback. Students struggle to identify and correct errors, while manual feedback creation is labor-intensive. This highlights the need for automated feedback linking responses to a scoring rubric for deeper comprehension. Despite advances in Natural Language Processing (NLP), research has focused on automatic grading, with limited work on feedback generation. To address this, we propose a system that generates feedback for student responses. Our contributions are twofold. First, we introduce the first system for feedback on short-answer reading comprehension. These answers are derived from the text, requiring structural understanding. We propose an "answer diagnosis graph," integrating the text's logical structure with feedback templates. Using this graph and NLP techniques, we estimate students' comprehension and generate targeted feedback. Second, we evaluate our feedback through an experiment with Japanese high school students (n=39). They answered two 70-80 word questions and were divided into two groups with minimal academic differences. One received a model answer, the other system-generated feedback. Both re-answered the questions, and we compared score changes. A questionnaire assessed perceptions and motivation. Results showed no significant score improvement between groups, but system-generated feedback helped students identify errors and key points in the text. It also significantly increased motivation. However, further refinement is needed to enhance text structure understanding.

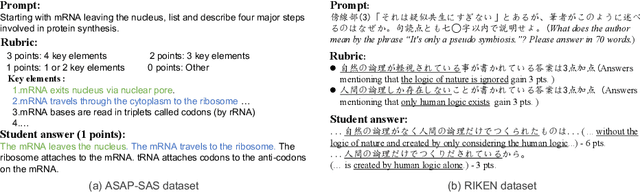

Reducing the Cost: Cross-Prompt Pre-Finetuning for Short Answer Scoring

Aug 26, 2024

Abstract: Automated Short Answer Scoring (SAS) is the task of automatically scoring a given input to a prompt based on rubrics and reference answers. Although SAS is useful in real-world applications, both rubrics and reference answers differ between prompts, so new data must be acquired and a model trained for each new prompt. Such requirements are costly, especially for schools and online courses where resources are limited and only a few prompts are used. In this work, we attempt to reduce this cost through a two-phase approach: train a model on existing rubrics and answers with gold score signals, then finetune it on a new prompt. Specifically, given that scoring rubrics and reference answers differ for each prompt, we utilize key phrases, or representative expressions that the answer should contain to increase scores, and train a SAS model to learn the relationship between key phrases and answers using already annotated prompts (i.e., cross-prompts). Our experimental results show that finetuning on existing cross-prompt data with key phrases significantly improves scoring accuracy, especially when the training data is limited. Finally, our extensive analysis shows that it is crucial to design the model so that it can learn the task's general property.

* This is the draft submitted to AIED 2023. For the latest version, please visit: https://link.springer.com/chapter/10.1007/978-3-031-36272-9_7

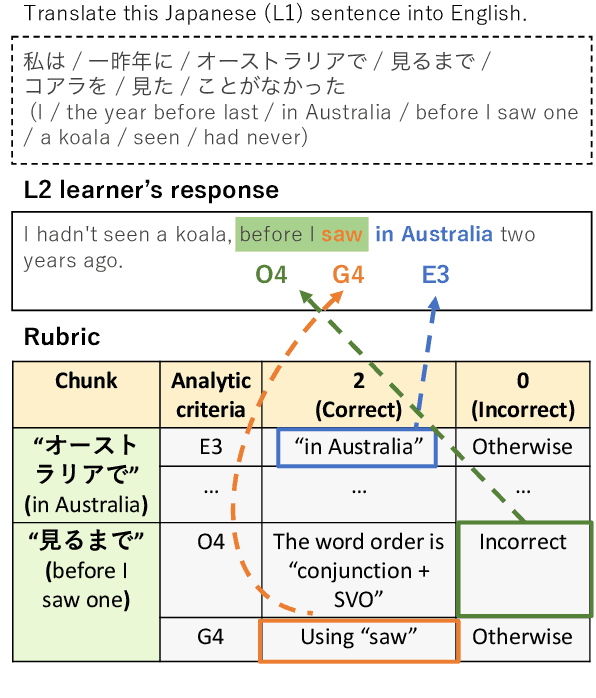

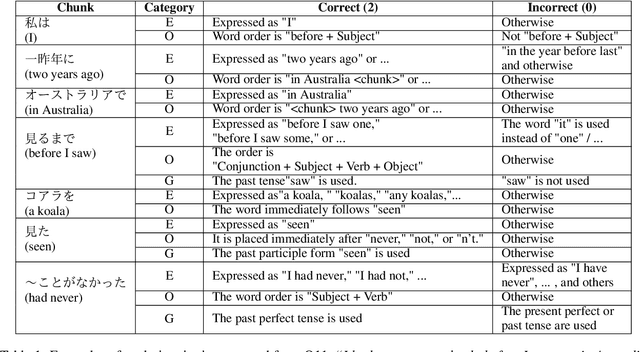

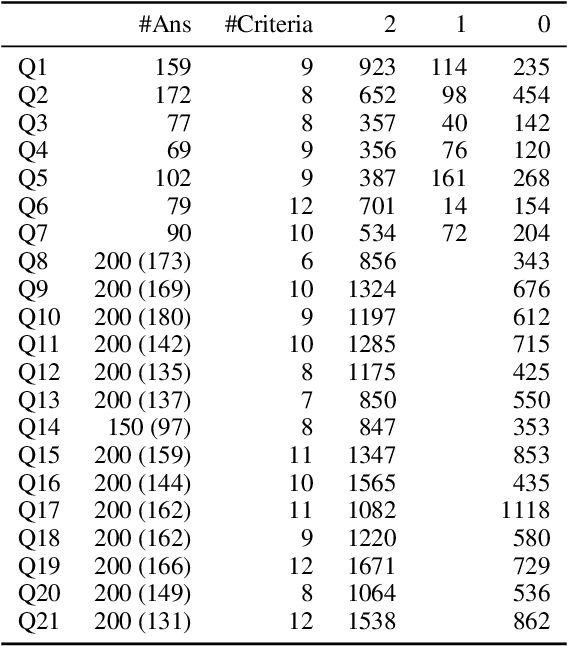

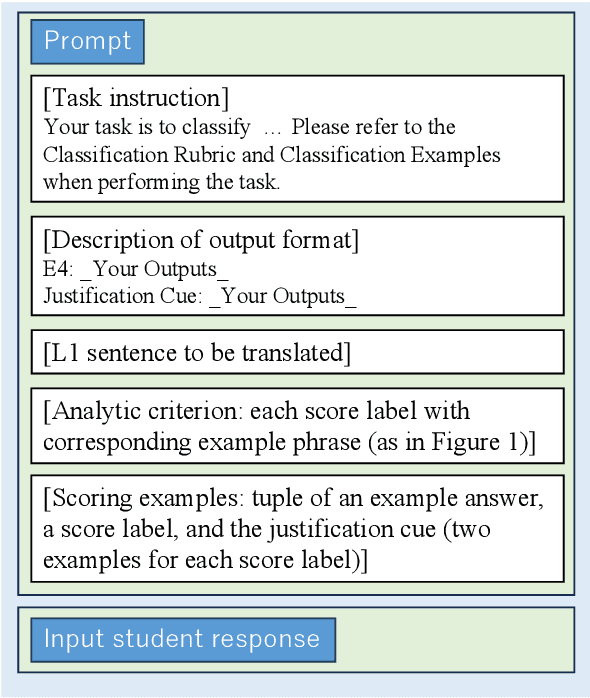

Japanese-English Sentence Translation Exercises Dataset for Automatic Grading

Mar 06, 2024

Abstract: This paper proposes the task of automatic assessment of Sentence Translation Exercises (STEs), which have been used in the early stage of L2 language learning. We formalize the task as grading student responses for each rubric criterion pre-specified by the educators. We then create a dataset for STE between Japanese and English including 21 questions, along with a total of 3,498 student responses (167 on average). The responses were collected from students and crowd workers. Using this dataset, we demonstrate the performance of baselines including finetuned BERT and GPT models with few-shot in-context learning. Experimental results show that the baseline model with finetuned BERT was able to classify correct responses with approximately 90% in F1, but only less than 80% for incorrect responses. Furthermore, the GPT models with few-shot learning show poorer results than finetuned BERT, indicating that our newly proposed task presents a challenging issue, even for the state-of-the-art large language models.

Assessing Step-by-Step Reasoning against Lexical Negation: A Case Study on Syllogism

Oct 23, 2023

Abstract: Large language models (LLMs) take advantage of step-by-step reasoning instructions, e.g., chain-of-thought (CoT) prompting. Building on this, their ability to perform CoT-style reasoning robustly is of interest from a probing perspective. In this study, we inspect the step-by-step reasoning ability of LLMs with a focus on negation, which is a core linguistic phenomenon that is difficult to process. In particular, we introduce several controlled settings (e.g., reasoning in case of fictional entities) to evaluate the logical reasoning abilities of the models. We observed that dozens of modern LLMs were not robust against lexical negation (e.g., plausible -> implausible) when performing CoT-style reasoning, and the results highlight unique limitations in each LLM family.

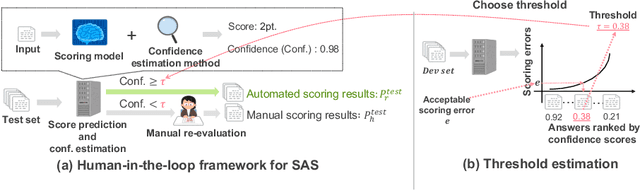

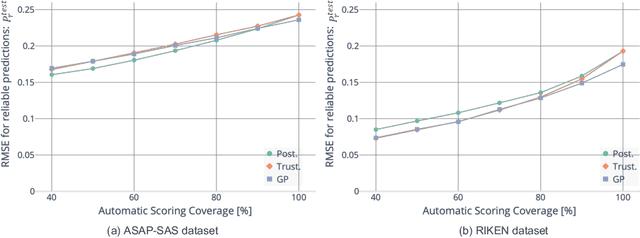

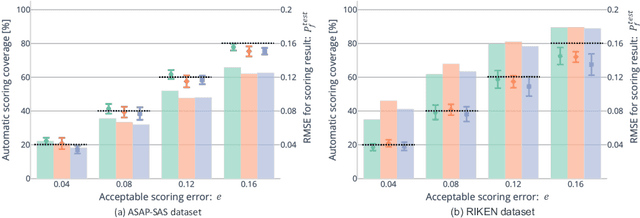

Balancing Cost and Quality: An Exploration of Human-in-the-loop Frameworks for Automated Short Answer Scoring

Jun 16, 2022

Abstract: Short answer scoring (SAS) is the task of grading short text written by a learner. In recent years, deep-learning-based approaches have substantially improved the performance of SAS models, but how to guarantee high-quality predictions remains a critical issue when applying such models to the education field. Towards guaranteeing high-quality predictions, we present the first study exploring the use of a human-in-the-loop framework for minimizing the grading cost while guaranteeing the grading quality by allowing a SAS model to share the grading task with a human grader. Specifically, by introducing a confidence estimation method for indicating the reliability of the model predictions, one can guarantee the scoring quality by utilizing only predictions with high reliability for the scoring results and casting predictions with low reliability to human graders. In our experiments, we investigate the feasibility of the proposed framework using multiple confidence estimation methods and multiple SAS datasets. We find that our human-in-the-loop framework allows automatic scoring models and human graders to achieve the target scoring quality.
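The confidence-based routing described in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the `Prediction` type, the `route_predictions` helper, the example scores, and the threshold value are all hypothetical, and the confidence values are assumed to come from some estimation method that is not shown here.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Prediction:
    """Hypothetical container for one model-scored answer."""
    answer_id: str
    score: int
    confidence: float  # assumed to lie in [0, 1]


def route_predictions(
    predictions: List[Prediction], threshold: float
) -> Tuple[List[Prediction], List[Prediction]]:
    """Split predictions into (auto_accepted, sent_to_human).

    Predictions whose confidence is at least `threshold` keep the model
    score; the rest are handed to a human grader.
    """
    auto_accepted: List[Prediction] = []
    sent_to_human: List[Prediction] = []
    for pred in predictions:
        if pred.confidence >= threshold:
            auto_accepted.append(pred)
        else:
            sent_to_human.append(pred)
    return auto_accepted, sent_to_human


# Illustrative data only; these values are made up for the example.
preds = [
    Prediction("a1", 3, 0.95),
    Prediction("a2", 1, 0.40),
    Prediction("a3", 2, 0.80),
]
auto, human = route_predictions(preds, threshold=0.8)
```

Raising the threshold sends more answers to human graders, which increases grading cost but reduces reliance on uncertain model predictions; the threshold would in practice be tuned against a target scoring quality.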
