Heyi Sun

Simulation of Optical Tactile Sensors Supporting Slip and Rotation using Path Tracing and IMPM

May 05, 2024

Abstract:Optical tactile sensors are extensively utilized in intelligent robot manipulation due to their ability to acquire high-resolution tactile information at a lower cost. However, achieving adequate reality and versatility in simulating optical tactile sensors is challenging. In this paper, we propose a simulation method and validate its effectiveness through experiments. We utilize path tracing for image rendering, achieving higher similarity to real data than the baseline method in simulating pressing scenarios. Additionally, we apply the improved Material Point Method(IMPM) algorithm to simulate the relative rest between the object and the elastomer surface when the object is in motion, enabling more accurate simulation of complex manipulations such as slip and rotation.

PI3D: Efficient Text-to-3D Generation with Pseudo-Image Diffusion

Dec 14, 2023

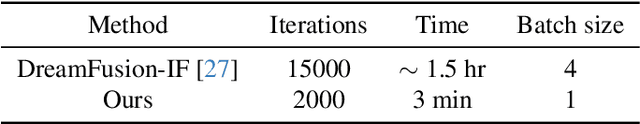

Abstract:In this paper, we introduce PI3D, a novel and efficient framework that utilizes the pre-trained text-to-image diffusion models to generate high-quality 3D shapes in minutes. On the one hand, it fine-tunes a pre-trained 2D diffusion model into a 3D diffusion model, enabling both 3D generative capabilities and generalization derived from the 2D model. On the other, it utilizes score distillation sampling of 2D diffusion models to quickly improve the quality of the sampled 3D shapes. PI3D enables the migration of knowledge from image to triplane generation by treating it as a set of pseudo-images. We adapt the modules in the pre-training model to enable hybrid training using pseudo and real images, which has proved to be a well-established strategy for improving generalizability. The efficiency of PI3D is highlighted by its ability to sample diverse 3D models in seconds and refine them in minutes. The experimental results confirm the advantages of PI3D over existing methods based on either 3D diffusion models or lifting 2D diffusion models in terms of fast generation of 3D consistent and high-quality models. The proposed PI3D stands as a promising advancement in the field of text-to-3D generation, and we hope it will inspire more research into 3D generation leveraging the knowledge in both 2D and 3D data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge