Henry Arguello

Hypercomplex Phase Retrieval

Feb 27, 2026

Abstract:Hypercomplex signal processing (HSP) offers powerful tools for analyzing and processing multidimensional signals by explicitly exploiting inter-dimensional correlations through Clifford algebra. In recent years, hypercomplex formulations of the phase retrieval (PR) problem, wherein a complex-valued signal is recovered from intensity-only measurements, have attracted growing interest. Hypercomplex phase retrieval (HPR) naturally arises in a range of optical imaging and computational sensing applications, where signals are often modeled using quaternion- or octonion-valued representations. Similar to classical PR, HPR problems may involve measurements obtained via complex, hypercomplex, Fourier, or other structured sensing operators. These formulations open new avenues for the development of advanced HSP-based algorithms and theoretical frameworks. This chapter surveys emerging methodologies and applications of HPR, with particular emphasis on optical imaging systems.
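To make the "intensity-only measurements" setting concrete, the sketch below covers the classical complex-valued case only (not the quaternion or octonion formulations the chapter surveys). It shows that a signal and a globally phase-rotated copy produce identical intensities, which is why PR can recover a signal only up to such an ambiguity. The matrix `A`, the signal `x`, and the helper `intensity_measurements` are hypothetical values chosen for illustration.

```python
import cmath
import math

def intensity_measurements(A, x):
    """Return |<a_k, x>|^2 for each row a_k of A (the phase is discarded)."""
    return [abs(sum(a * xi for a, xi in zip(row, x))) ** 2 for row in A]

# Hypothetical 3x2 complex sensing matrix and 2-sample complex signal.
A = [[1 + 0j, 1j], [0.5 - 0.5j, 2 + 0j], [1j, -1 + 1j]]
x = [1 + 2j, -0.5 + 0.3j]

# Rotate the whole signal by a global phase of 40 degrees.
rot = cmath.exp(1j * math.radians(40))
x_rot = [rot * xi for xi in x]

y = intensity_measurements(A, x)
y_rot = intensity_measurements(A, x_rot)

# Largest difference between the two sets of intensities (round-off only).
max_gap = max(abs(a - b) for a, b in zip(y, y_rot))
```

Here `max_gap` stays at round-off level even though `x` and `x_rot` differ, so the two signals cannot be told apart from these measurements.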

GSNR: Graph Smooth Null-Space Representation for Inverse Problems

Feb 23, 2026

Abstract:Inverse problems in imaging are ill-posed, leading to infinitely many solutions consistent with the measurements due to the non-trivial null-space of the sensing matrix. Common image priors promote solutions on the general image manifold, such as sparsity, smoothness, or score function. However, as these priors do not constrain the null-space component, they can bias the reconstruction. Thus, we aim to incorporate meaningful null-space information in the reconstruction framework. Inspired by smooth image representation on graphs, we propose Graph-Smooth Null-Space Representation (GSNR), a mechanism that imposes structure only into the invisible component. Particularly, given a graph Laplacian, we construct a null-restricted Laplacian that encodes similarity between neighboring pixels in the null-space signal, and we design a low-dimensional projection matrix from the $p$-smoothest spectral graph modes (lowest graph frequencies). This approach has strong theoretical and practical implications: i) improved convergence via a null-only graph regularizer, ii) better coverage, how much null-space variance is captured by $p$ modes, and iii) high predictability, how well these modes can be inferred from the measurements. GSNR is incorporated into well-known inverse problem solvers, e.g., PnP, DIP, and diffusion solvers, in four scenarios: image deblurring, compressed sensing, demosaicing, and image super-resolution, providing consistent improvement of up to 4.3 dB over baseline formulations and up to 1 dB compared with end-to-end learned models in terms of PSNR.
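One possible reading of the null-restricted Laplacian and its $p$ smoothest modes is sketched below with NumPy. This is an illustrative construction under stated assumptions, not necessarily the one used in the paper: the sensing matrix `A` is random with full row rank, the graph is a simple path graph standing in for a pixel-similarity graph, and the Laplacian is restricted by expressing it in an orthonormal null-space basis `N`. All names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n, m, p = 8, 3, 2                  # signal size, measurements, kept modes

# Hypothetical sensing matrix (assumed to have full row rank).
A = rng.standard_normal((m, n))

# Orthonormal basis of null(A): the last n - m right singular vectors.
_, _, Vt = np.linalg.svd(A)
N = Vt[m:].T                       # shape (n, n - m)

# Path-graph Laplacian as a stand-in for a pixel-similarity graph.
W = np.diag(np.ones(n - 1), 1) + np.diag(np.ones(n - 1), -1)
L = np.diag(W.sum(axis=1)) - W

# Laplacian restricted to the null space, expressed in the basis N.
L_null = N.T @ L @ N               # shape (n - m, n - m)

# eigh returns eigenvalues in ascending order, so the first p columns
# are the smoothest (lowest graph frequency) null-space modes.
eigvals, eigvecs = np.linalg.eigh(L_null)
S = N @ eigvecs[:, :p]             # (n, p) low-dimensional null-space basis
```

Every column of `S` lies in the null space of `A`, so structure imposed through `S` affects only the component the measurements cannot see.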

NPN: Non-Linear Projections of the Null-Space for Imaging Inverse Problems

Oct 02, 2025

Abstract:Imaging inverse problems aim to recover high-dimensional signals from undersampled, noisy measurements, a fundamentally ill-posed task with infinite solutions in the null-space of the sensing operator. To resolve this ambiguity, prior information is typically incorporated through handcrafted regularizers or learned models that constrain the solution space. However, these priors typically ignore the task-specific structure of that null-space. In this work, we propose Non-Linear Projections of the Null-Space (NPN), a novel class of regularization that, instead of enforcing structural constraints in the image domain, promotes solutions that lie in a low-dimensional projection of the sensing matrix's null-space with a neural network. Our approach has two key advantages: (1) Interpretability: by focusing on the structure of the null-space, we design sensing-matrix-specific priors that capture information orthogonal to the signal components that are fundamentally blind to the sensing process. (2) Flexibility: NPN is adaptable to various inverse problems, compatible with existing reconstruction frameworks, and complementary to conventional image-domain priors. We provide theoretical guarantees on convergence and reconstruction accuracy when used within plug-and-play methods. Empirical results across diverse sensing matrices demonstrate that NPN priors consistently enhance reconstruction fidelity in various imaging inverse problems, such as compressive sensing, deblurring, super-resolution, computed tomography, and magnetic resonance imaging, with plug-and-play methods, unrolling networks, deep image prior, and diffusion models.

DICE: Diffusion Consensus Equilibrium for Sparse-view CT Reconstruction

Sep 18, 2025

Abstract:Sparse-view computed tomography (CT) reconstruction is fundamentally challenging due to undersampling, leading to an ill-posed inverse problem. Traditional iterative methods incorporate handcrafted or learned priors to regularize the solution but struggle to capture the complex structures present in medical images. In contrast, diffusion models (DMs) have recently emerged as powerful generative priors that can accurately model complex image distributions. In this work, we introduce Diffusion Consensus Equilibrium (DICE), a framework that integrates a two-agent consensus equilibrium into the sampling process of a DM. DICE alternates between: (i) a data-consistency agent, implemented through a proximal operator enforcing measurement consistency, and (ii) a prior agent, realized by a DM performing a clean image estimation at each sampling step. By balancing these two complementary agents iteratively, DICE effectively combines strong generative prior capabilities with measurement consistency. Experimental results show that DICE significantly outperforms state-of-the-art baselines in reconstructing high-quality CT images under uniform and non-uniform sparse-view settings of 15, 30, and 60 views (out of a total of 180), demonstrating both its effectiveness and robustness.
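The data-consistency agent is described as a proximal operator. For a linear sensing model with a least-squares fidelity term, that operator has a closed form, sketched below with NumPy. This is an illustration under assumptions, not the DICE implementation: the operator `A` is a small random matrix rather than a CT projector, `v` stands in for the clean-image estimate a diffusion model would supply, and the weight `sigma` is a hypothetical parameter.

```python
import numpy as np

def data_consistency_prox(v, A, y, sigma):
    """Solve argmin_x 0.5*||A x - y||^2 + ||x - v||^2 / (2*sigma**2)."""
    n = A.shape[1]
    lhs = A.T @ A + np.eye(n) / sigma**2   # normal-equation matrix
    rhs = A.T @ y + v / sigma**2
    return np.linalg.solve(lhs, rhs)

rng = np.random.default_rng(0)
A = rng.standard_normal((4, 10))   # hypothetical under-determined operator
x_true = rng.standard_normal(10)
y = A @ x_true                     # noiseless measurements
v = rng.standard_normal(10)        # stand-in for the prior agent's estimate

x_dc = data_consistency_prox(v, A, y, sigma=1.0)
res_before = np.linalg.norm(A @ v - y)
res_after = np.linalg.norm(A @ x_dc - y)
```

The output `x_dc` stays close to `v` while its measurement residual `res_after` is no larger than `res_before`; a larger `sigma` pulls the result further toward the measurements.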

UTOPY: Unrolling Algorithm Learning via Fidelity Homotopy for Inverse Problems

Sep 17, 2025

Abstract:Imaging inverse problems aim to reconstruct an underlying image from undersampled, coded, and noisy observations. Within the wide range of reconstruction frameworks, the unrolling algorithm is one of the most popular due to the synergistic integration of traditional model-based reconstruction methods and modern neural networks, providing an interpretable and highly accurate reconstruction. However, when the sensing operator is highly ill-posed, gradient steps on the data-fidelity term can hinder convergence and degrade reconstruction quality. To address this issue, we propose UTOPY, a homotopy continuation formulation for training the unrolling algorithm. Mainly, this method involves using a well-posed (synthetic) sensing matrix at the beginning of the unrolling network optimization. We define a continuation path strategy to transition smoothly from the synthetic fidelity to the desired ill-posed problem. This strategy enables the network to progressively transition from a simpler, well-posed inverse problem to the more challenging target scenario. We theoretically show that, for projected gradient descent-like unrolling models, the proposed continuation strategy generates a smooth path of unrolling solutions. Experiments on compressive sensing and image deblurring demonstrate that our method consistently surpasses conventional unrolled training, achieving up to 2.5 dB PSNR improvement in reconstruction performance. Source code at

Deep Distillation Gradient Preconditioning for Inverse Problems

Aug 06, 2025

Abstract:Imaging inverse problems are commonly addressed by minimizing measurement consistency and signal prior terms. While huge attention has been paid to developing high-performance priors, even the most advanced signal prior may lose its effectiveness when paired with an ill-conditioned sensing matrix that hinders convergence and degrades reconstruction quality. In optimization theory, preconditioners allow improving the algorithm's convergence by transforming the gradient update. Traditional linear preconditioning techniques enhance convergence, but their performance remains limited due to their dependence on the structure of the sensing matrix. Learning-based linear preconditioners have been proposed, but they are optimized only for data-fidelity optimization, which may lead to solutions in the null-space of the sensing matrix. This paper employs knowledge distillation to design a nonlinear preconditioning operator. In our method, a teacher algorithm using a better-conditioned (synthetic) sensing matrix guides the student algorithm with an ill-conditioned sensing matrix through gradient matching via a preconditioning neural network. We validate our nonlinear preconditioner for plug-and-play FISTA in single-pixel, magnetic resonance, and super-resolution imaging tasks, showing consistent performance improvements and better empirical convergence.

Single Snapshot Distillation for Phase Coded Mask Design in Phase Retrieval

May 23, 2025

Abstract:Phase retrieval (PR) reconstructs phase information from magnitude measurements, known as coded diffraction patterns (CDPs), whose quality depends on the number of snapshots captured using coded phase masks (CPMs). High-quality phase estimation requires multiple snapshots, which is not desired for efficient PR systems. End-to-end frameworks enable joint optimization of the optical system and the recovery neural network. However, their application is constrained by physical implementation limitations. Additionally, the framework is prone to gradient vanishing issues related to its global optimization process. This paper introduces a Knowledge Distillation (KD) optimization approach to address these limitations. KD transfers knowledge from a larger, lower-constrained network (teacher) to a smaller, more efficient, and implementable network (student). In this method, the teacher, a PR system trained with multiple snapshots, distills its knowledge into a single-snapshot PR system, the student. The loss functions compare the CPMs and the feature space of the recovery network. Simulations demonstrate that this approach improves reconstruction performance compared to a PR system trained without the teacher's guidance.

Distilling Knowledge for Designing Computational Imaging Systems

Jan 29, 2025

Abstract:Designing the physical encoder is crucial for accurate image reconstruction in computational imaging (CI) systems. Currently, these systems are designed via end-to-end (E2E) optimization, where the encoder is modeled as a neural network layer and is jointly optimized with the decoder. However, the performance of E2E optimization is significantly reduced by the physical constraints imposed on the encoder. Also, since the E2E learns the parameters of the encoder by backpropagating the reconstruction error, it does not promote optimal intermediate outputs and suffers from gradient vanishing. To address these limitations, we reinterpret the concept of knowledge distillation (KD) for designing a physically constrained CI system by transferring the knowledge of a pretrained, less-constrained CI system. Our approach involves three steps: (1) Given the original CI system (student), a teacher system is created by relaxing the constraints on the student's encoder. (2) The teacher is optimized to solve a less-constrained version of the student's problem. (3) The teacher guides the training of the student through two proposed knowledge transfer functions, targeting both the encoder and the decoder feature space. The proposed method can be employed to any imaging modality since the relaxation scheme and the loss functions can be adapted according to the physical acquisition and the employed decoder. This approach was validated on three representative CI modalities: magnetic resonance, single-pixel, and compressive spectral imaging. Simulations show that a teacher system with an encoder that has a structure similar to that of the student encoder provides effective guidance. Our approach achieves significantly improved reconstruction performance and encoder design, outperforming both E2E optimization and traditional non-data-driven encoder designs.

Learning to reconstruct signals with inexact sensing operator via knowledge distillation

Jan 18, 2025

Abstract:In computational optical imaging and wireless communications, signals are acquired through linear coded and noisy projections, which are recovered through computational algorithms. Deep model-based approaches, i.e., neural networks incorporating the sensing operators, are the state-of-the-art for signal recovery. However, these methods require exact knowledge of the sensing operator, which is often unavailable in practice, leading to performance degradation. Consequently, we propose a new recovery paradigm based on knowledge distillation. A teacher model, trained with full or almost exact knowledge of a synthetic sensing operator, guides a student model with an inexact real sensing operator. The teacher is interpreted as a relaxation of the student since it solves a problem with fewer constraints, which can guide the student to achieve higher performance. We demonstrate the improvement of signal reconstruction in computational optical imaging for single-pixel imaging with miscalibrated coded-aperture systems and multiple-input multiple-output symbol detection with an inexact channel matrix.

Physically Guided Deep Unsupervised Inversion for 1D Magnetotelluric Models

Oct 20, 2024

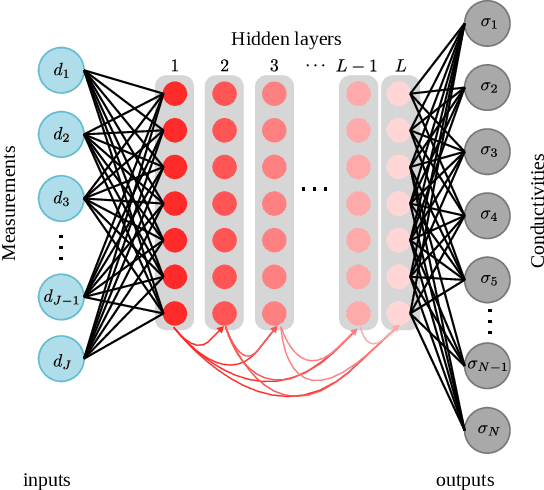

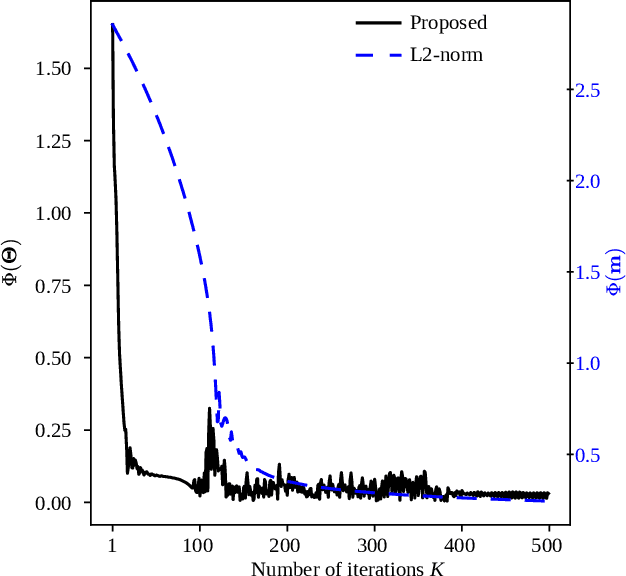

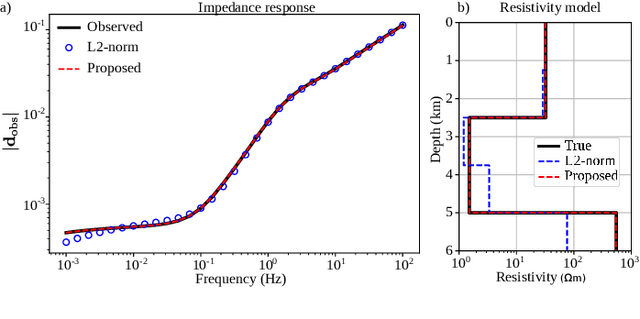

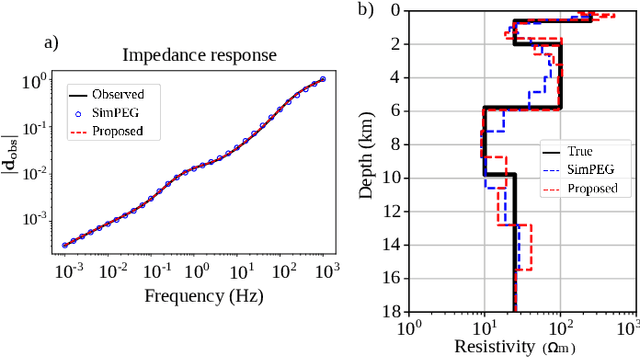

Abstract:The global demand for unconventional energy sources such as geothermal energy and white hydrogen requires new exploration techniques for precise subsurface structure characterization and potential reservoir identification. Magnetotelluric (MT) inversion is crucial for these tasks, providing critical information on the distribution of subsurface electrical resistivity at depths ranging from hundreds to thousands of meters. However, traditional iterative algorithm-based inversion methods require the adjustment of multiple parameters, demanding time-consuming and exhaustive tuning processes to achieve proper cost function minimization. Although recent advances have incorporated deep learning algorithms for MT inversion, these have been primarily based on supervised learning, which needs large labeled datasets for training. This dependence causes generalization issues and yields model characteristics that are restricted to the neural network's features. This work utilizes TensorFlow operations to create a differentiable forward MT operator, leveraging its automatic differentiation capability. Moreover, instead of solving for the subsurface model directly, as classical algorithms do, this paper presents a new deep unsupervised inversion algorithm guided by physics to estimate 1D MT models. Instead of using datasets with the observed data and their respective model as labels during training, our method employs a differentiable modeling operator that physically guides the cost function minimization, making the proposed method solely dependent on observed data. Therefore, the optimization problem consists of updating the network weights to minimize the data misfit. We test the proposed method with field and synthetic data at different acquisition frequencies, demonstrating that the resistivity models are more accurate than other results using state-of-the-art techniques.
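For readers unfamiliar with the 1D MT forward operator, the sketch below implements the standard layered-earth impedance recursion in plain Python. It is not the authors' TensorFlow operator and is not differentiable as written; it only illustrates the physics that such an operator encodes. The function name `apparent_resistivity` and the example layer values are hypothetical.

```python
import cmath
import math

MU0 = 4e-7 * math.pi  # vacuum magnetic permeability (H/m)

def apparent_resistivity(resistivities, thicknesses, frequency):
    """Apparent resistivity (ohm-m) of a 1D layered earth at one frequency.

    resistivities: layer resistivities (ohm-m), top to bottom; the last
        entry is the bottom half-space.
    thicknesses: thicknesses (m) of every layer except the half-space.
    """
    omega = 2 * math.pi * frequency
    # Impedance at the top of the bottom half-space.
    Z = cmath.sqrt(1j * omega * MU0 * resistivities[-1])
    # Propagate the impedance upward through each finite layer.
    for rho, h in zip(reversed(resistivities[:-1]), reversed(thicknesses)):
        k = cmath.sqrt(1j * omega * MU0 / rho)  # complex wavenumber
        w = k * rho                             # intrinsic layer impedance
        r = (w - Z) / (w + Z)                   # reflection coefficient
        e = cmath.exp(-2 * k * h)               # two-way attenuation
        Z = w * (1 - r * e) / (1 + r * e)
    return abs(Z) ** 2 / (omega * MU0)

# A homogeneous earth returns its own resistivity at any frequency.
rho_uniform = apparent_resistivity([100.0, 100.0, 100.0], [500.0, 1000.0], 1.0)

# At high frequency the response is dominated by the shallow layer.
rho_shallow = apparent_resistivity([10.0, 1000.0], [5000.0], 1000.0)
```

In an unsupervised inversion of the kind described, a network would output the layer resistivities and the misfit between this forward response and the observed data would drive the weight updates.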
