Haowei He

Training Report of TeleChat3-MoE

Dec 30, 2025Abstract:TeleChat3-MoE is the latest series of TeleChat large language models, featuring a Mixture-of-Experts (MoE) architecture with parameter counts ranging from 105 billion to over one trillion,trained end-to-end on Ascend NPU cluster. This technical report mainly presents the underlying training infrastructure that enables reliable and efficient scaling to frontier model sizes. We detail systematic methodologies for operator-level and end-to-end numerical accuracy verification, ensuring consistency across hardware platforms and distributed parallelism strategies. Furthermore, we introduce a suite of performance optimizations, including interleaved pipeline scheduling, attention-aware data scheduling for long-sequence training,hierarchical and overlapped communication for expert parallelism, and DVM-based operator fusion. A systematic parallelization framework, leveraging analytical estimation and integer linear programming, is also proposed to optimize multi-dimensional parallelism configurations. Additionally, we present methodological approaches to cluster-level optimizations, addressing host- and device-bound bottlenecks during large-scale training tasks. These infrastructure advancements yield significant throughput improvements and near-linear scaling on clusters comprising thousands of devices, providing a robust foundation for large-scale language model development on hardware ecosystems.

Consistent and Truthful Interpretation with Fourier Analysis

Oct 31, 2022

Abstract:For many interdisciplinary fields, ML interpretations need to be consistent with what-if scenarios related to the current case, i.e., if one factor changes, how does the model react? Although the attribution methods are supported by the elegant axiomatic systems, they mainly focus on individual inputs, and are generally inconsistent. To support what-if scenarios, we introduce a new notion called truthful interpretation, and apply Fourier analysis of Boolean functions to get rigorous guarantees. Experimental results show that for neighborhoods with various radii, our method achieves 2x - 50x lower interpretation error compared with the other methods.

Anomaly Detection with Test Time Augmentation and Consistency Evaluation

Jun 06, 2022

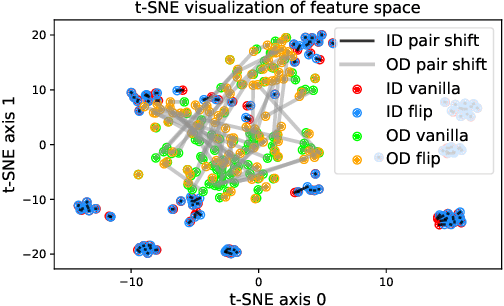

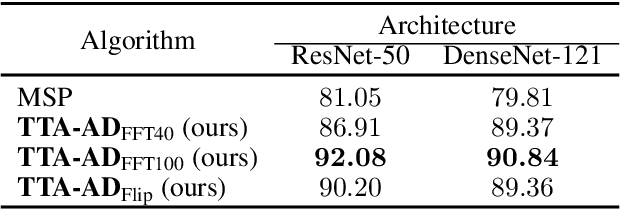

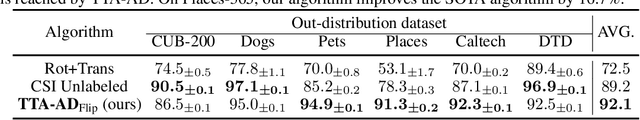

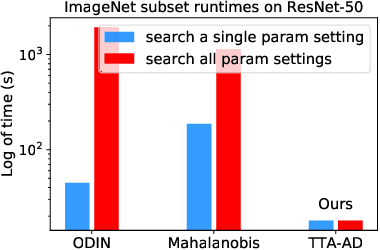

Abstract:Deep neural networks are known to be vulnerable to unseen data: they may wrongly assign high confidence stcores to out-distribuion samples. Recent works try to solve the problem using representation learning methods and specific metrics. In this paper, we propose a simple, yet effective post-hoc anomaly detection algorithm named Test Time Augmentation Anomaly Detection (TTA-AD), inspired by a novel observation. Specifically, we observe that in-distribution data enjoy more consistent predictions for its original and augmented versions on a trained network than out-distribution data, which separates in-distribution and out-distribution samples. Experiments on various high-resolution image benchmark datasets demonstrate that TTA-AD achieves comparable or better detection performance under dataset-vs-dataset anomaly detection settings with a 60%~90\% running time reduction of existing classifier-based algorithms. We provide empirical verification that the key to TTA-AD lies in the remaining classes between augmented features, which has long been partially ignored by previous works. Additionally, we use RUNS as a surrogate to analyze our algorithm theoretically.

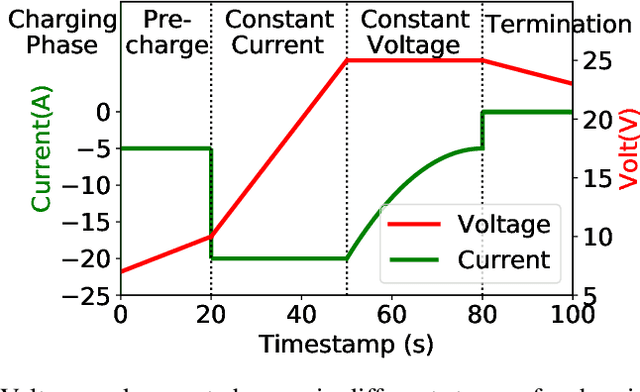

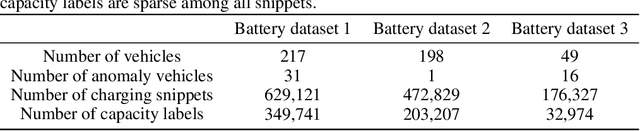

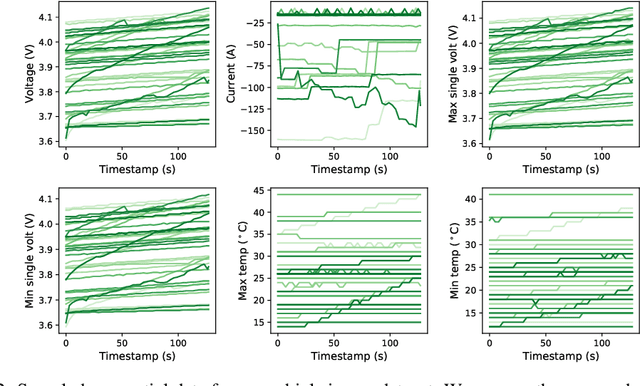

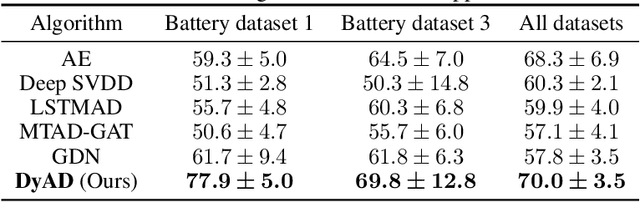

Detecting Electric Vehicle Battery Failure via Dynamic-VAE

Jan 28, 2022

Abstract:In this note, we describe a battery failure detection pipeline backed up by deep learning models. We first introduce a large-scale Electric vehicle (EV) battery dataset including cleaned battery-charging data from hundreds of vehicles. We then formulate battery failure detection as an outlier detection problem, and propose a new algorithm named Dynamic-VAE based on dynamic system and variational autoencoders. We validate the performance of our proposed algorithm against several baselines on our released dataset and demonstrated the effectiveness of Dynamic-VAE.

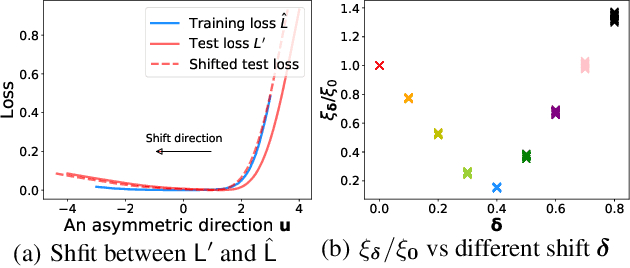

Asymmetric Valleys: Beyond Sharp and Flat Local Minima

Apr 07, 2019

Abstract:Despite the non-convex nature of their loss functions, deep neural networks are known to generalize well when optimized with stochastic gradient descent (SGD). Recent work conjectures that SGD with proper configuration is able to find wide and flat local minima, which have been proposed to be associated with good generalization performance. In this paper, we observe that local minima of modern deep networks are more than being flat or sharp. Specifically, at a local minimum there exist many asymmetric directions such that the loss increases abruptly along one side, and slowly along the opposite side--we formally define such minima as asymmetric valleys. Under mild assumptions, we prove that for asymmetric valleys, a solution biased towards the flat side generalizes better than the exact minimizer. Further, we show that simply averaging the weights along the SGD trajectory gives rise to such biased solutions implicitly. This provides a theoretical explanation for the intriguing phenomenon observed by Izmailov et al. (2018). In addition, we empirically find that batch normalization (BN) appears to be a major cause for asymmetric valleys.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge