Hans-Ulrich Kauczor

Unsupervised Segmentation of Micro-CT Scans of Polyurethane Structures By Combining Hidden-Markov-Random Fields and a U-Net

Nov 14, 2025

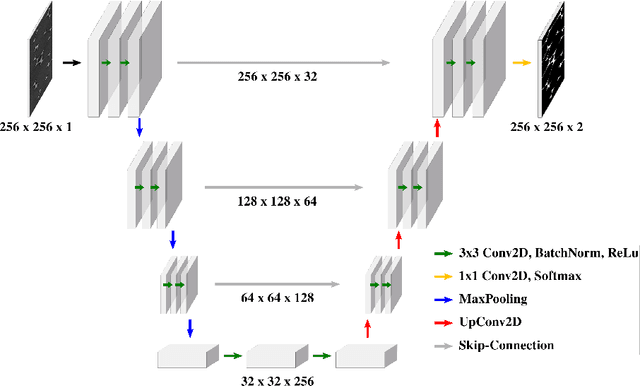

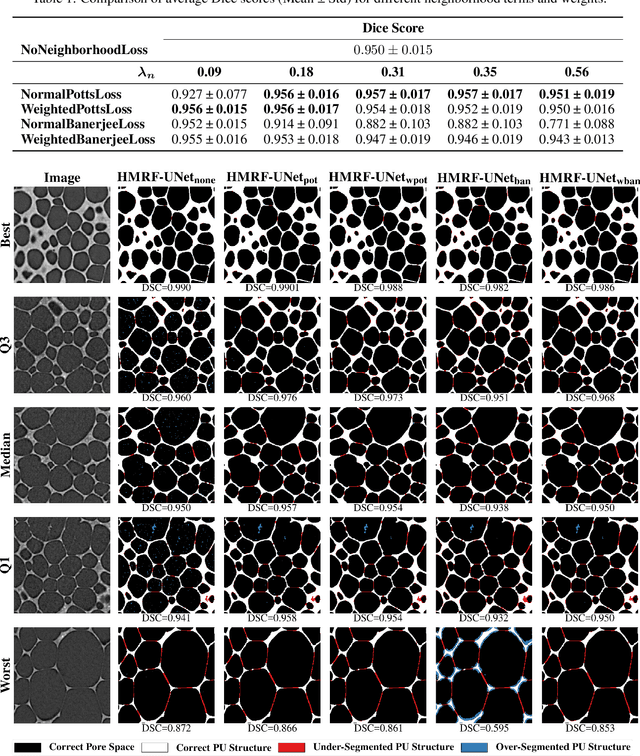

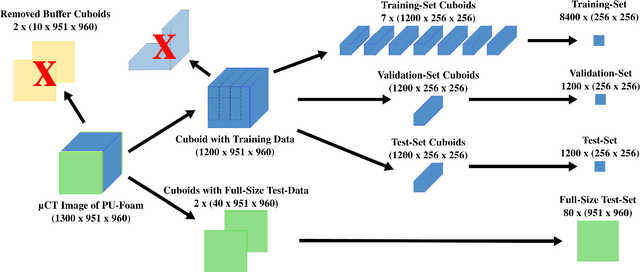

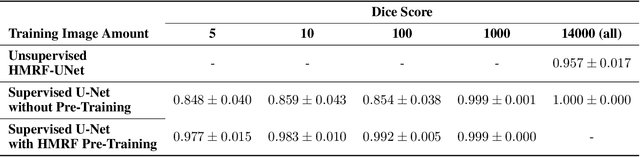

Abstract: Extracting digital material representations from images is a necessary prerequisite for a quantitative analysis of material properties. Different segmentation approaches have been studied extensively for this task, but they often lacked accuracy or speed. With the advent of machine learning, convolutional neural networks (CNNs) have achieved state-of-the-art performance for different segmentation tasks. However, these models are often trained in a supervised manner, which requires large labeled datasets. Unsupervised approaches do not require ground-truth data for learning, but suffer from long segmentation times and often worse segmentation accuracy. Hidden Markov Random Fields (HMRFs) are an unsupervised segmentation approach that incorporates concepts of neighborhood and class distributions. We present a method that integrates HMRF theory and CNN segmentation, leveraging the advantages of both areas: unsupervised learning and fast segmentation times. We investigate the contribution of different neighborhood terms and components for the unsupervised HMRF loss. We demonstrate that the HMRF-UNet enables high segmentation accuracy without ground truth on a micro-computed tomography ($\mu$CT) image dataset of polyurethane (PU) foam structures. Finally, we propose and demonstrate a pre-training strategy that considerably reduces the amount of ground-truth data required when training a segmentation model.
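To make "neighborhood and class distributions" concrete, the following is a minimal sketch of a generic textbook HMRF energy: a Gaussian data term per class plus a Potts neighborhood penalty. The abstract does not specify the HMRF loss used in the paper, so this should not be read as the authors' formulation; the function name `hmrf_energy`, the 4-connected neighborhood, and the weight `beta` are all assumptions made for illustration.

```python
import math

def hmrf_energy(image, labels, means, stds, beta=1.0):
    """Generic HMRF energy of a 2D labeling (illustrative, not the paper's loss).

    image  : list of rows of pixel intensities
    labels : list of rows of integer class indices, same shape as image
    means, stds : per-class Gaussian parameters (assumed class distributions)
    beta   : hypothetical weight of the Potts neighborhood term
    """
    rows, cols = len(image), len(image[0])
    energy = 0.0
    for r in range(rows):
        for c in range(cols):
            k = labels[r][c]
            # Data term: negative log-likelihood of the intensity under
            # the Gaussian of class k (constant terms dropped).
            z = (image[r][c] - means[k]) / stds[k]
            energy += 0.5 * z * z + math.log(stds[k])
            # Neighborhood term (Potts): add beta for each right/down
            # neighbor pair whose labels differ, so each pair counts once.
            if c + 1 < cols and labels[r][c + 1] != k:
                energy += beta
            if r + 1 < rows and labels[r + 1][c] != k:
                energy += beta
    return energy

# Toy 2x2 image with two vertical stripes.
image = [[0.1, 0.9], [0.1, 0.9]]
clean = [[0, 1], [0, 1]]   # labels follow the stripes
noisy = [[0, 1], [1, 1]]   # one mislabeled pixel
e_clean = hmrf_energy(image, clean, means=[0.0, 1.0], stds=[1.0, 1.0])
e_noisy = hmrf_energy(image, noisy, means=[0.0, 1.0], stds=[1.0, 1.0])
```

In this toy case the labeling that follows the stripes has lower energy than the one with a mislabeled pixel, which is the property an unsupervised loss of this kind relies on.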

MedLoRD: A Medical Low-Resource Diffusion Model for High-Resolution 3D CT Image Synthesis

Mar 17, 2025

Abstract: Advancements in AI for medical imaging offer significant potential. However, their applications are constrained by the limited availability of data and the reluctance of medical centers to share it due to patient privacy concerns. Generative models present a promising solution by creating synthetic data as a substitute for real patient data. However, medical images are typically high-dimensional, and current state-of-the-art methods are often impractical for computational resource-constrained healthcare environments. These models rely on data sub-sampling, raising doubts about their feasibility and real-world applicability. Furthermore, many of these models are evaluated on quantitative metrics that alone can be misleading in assessing the image quality and clinical meaningfulness of the generated images. To address this, we introduce MedLoRD, a generative diffusion model designed for computational resource-constrained environments. MedLoRD is capable of generating high-dimensional medical volumes with resolutions up to $512 \times 512 \times 256$, utilizing GPUs with only 24 GB of VRAM, which are commonly found in standard desktop workstations. MedLoRD is evaluated across multiple modalities, including Coronary Computed Tomography Angiography and Lung Computed Tomography datasets. Extensive evaluation through radiological assessment, relative regional volume analysis, adherence to conditional masks, and downstream tasks shows that MedLoRD generates high-fidelity images closely adhering to segmentation mask conditions, surpassing the capabilities of current state-of-the-art generative models for medical image synthesis in computational resource-constrained environments.

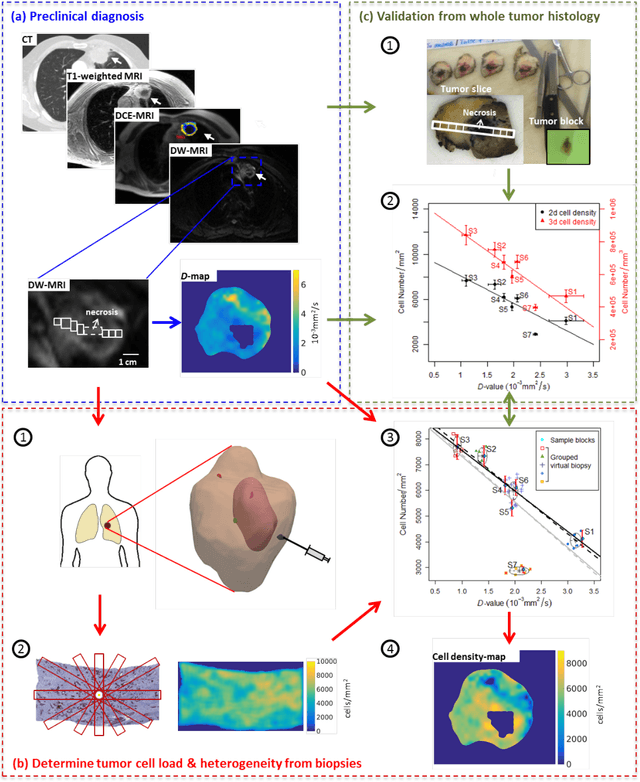

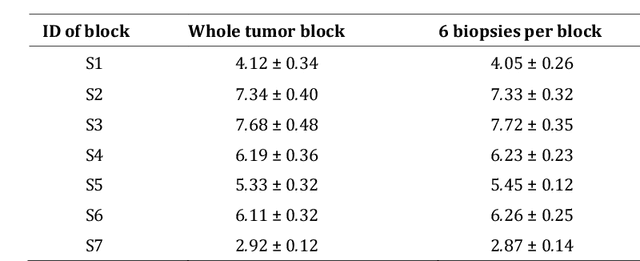

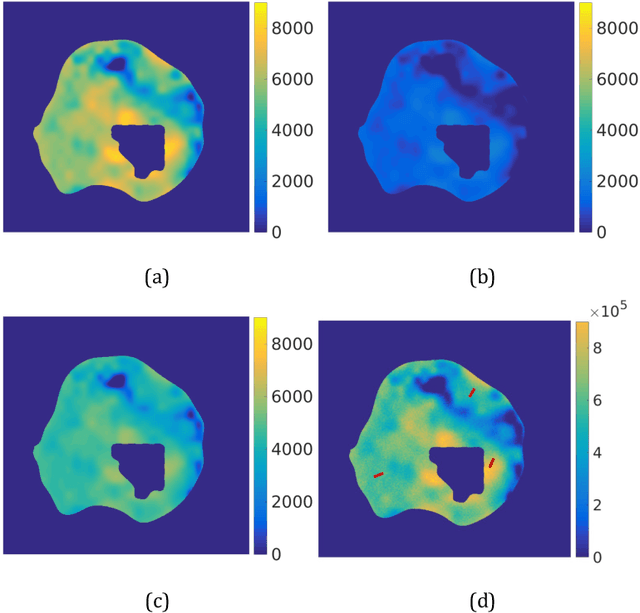

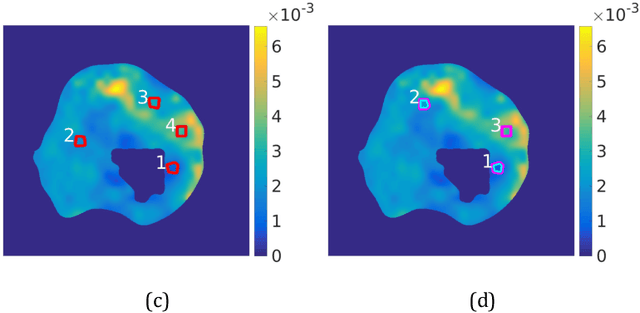

Diffusion-weighted MRI-guided needle biopsies permit quantitative tumor heterogeneity assessment and cell load estimation

Mar 01, 2021

Abstract: Quantitative information on tumor heterogeneity and cell load could assist in designing effective and refined personalized treatment strategies. We recently showed that such information can be inferred from the diffusion parameter D derived from diffusion-weighted MRI (DWI) if a relation between D and cell density can be established. However, such a relation cannot a priori be assumed to be constant for all patients and tumor types. Hence, to assist in clinical decisions in palliative settings, the relation needs to be established without tumor resection. We demonstrate here that biopsies may contain sufficient information for this purpose if the localization of biopsies is chosen as systematically elaborated in this paper. A superpixel-based method for automated optimal localization of biopsies from the DWI D-map is proposed. The performance of the DWI-guided procedure is evaluated by extensive simulations of biopsies. Needle biopsies yield sufficient histological information to establish a quantitative relationship between D-value and cell density, provided they are taken from regions with high, intermediate, and low D-value in DWI. The automated localization of the biopsy regions is demonstrated for an NSCLC patient tumor. In this case, even two or three biopsies give a reasonable estimate. Simulations of needle biopsies under different conditions indicate that DWI guidance greatly improves the estimation results. Tumor cellularity and heterogeneity in solid tumors may be reliably investigated from DWI and a few needle biopsies that are sampled in regions of well-separated D-values, excluding adipose tissue. This procedure could provide a way of embedding assistance in cancer diagnosis and treatment, based on personalized information, in the clinical workflow.

Prediction of low-keV monochromatic images from polyenergetic CT scans for improved automatic detection of pulmonary embolism

Feb 23, 2021

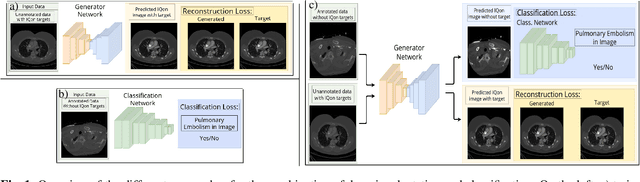

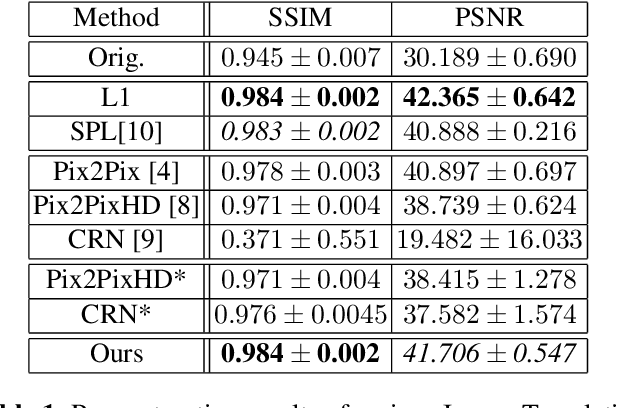

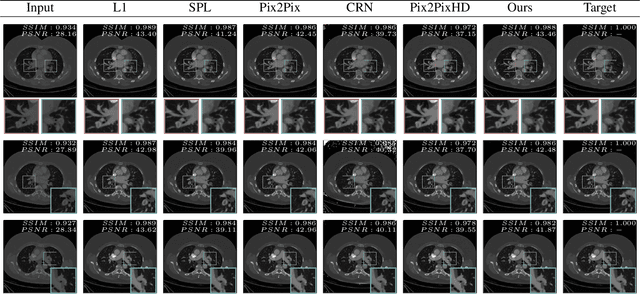

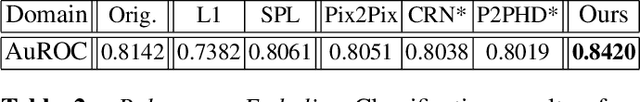

Abstract: Detector-based spectral computed tomography is a recent dual-energy CT (DECT) technology that offers the possibility of obtaining spectral information. From this spectral data, different types of images can be derived, among them virtual monoenergetic (monoE) images. MonoE images potentially exhibit decreased artifacts, improved contrast, and overall lower noise values, making them ideal candidates for better delineation and thus improved diagnostic accuracy of vascular abnormalities. In this paper, we train convolutional neural networks (CNNs) that can emulate the generation of monoE images from conventional single-energy CT acquisitions. For this task, we investigate several commonly used image-translation methods. We demonstrate that these methods, while creating visually similar outputs, lead to poorer performance when used for automatic classification of pulmonary embolism (PE). We expand on these methods through the use of a multi-task optimization approach, under which the networks achieve improved classification as well as generation results, as reflected by PSNR and SSIM scores. Further, evaluating our proposed framework on a subset of the RSNA-PE challenge data set shows that we are able to improve the Area under the Receiver Operating Characteristic curve (AuROC) in comparison to a naive classification approach from 0.8142 to 0.8420.
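For readers unfamiliar with the PSNR score mentioned above, here is a minimal sketch of the standard definition, $\mathrm{PSNR} = 10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$, in plain Python. The function name `psnr` and the default `max_value` of 255 are assumptions for illustration; the abstract does not state how the authors normalize CT intensities, so the appropriate peak value for their data may differ.

```python
import math

def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized 2D images.

    max_value is the assumed peak intensity (hypothetical default of 255).
    """
    squared_diffs = [
        (ref_px - gen_px) ** 2
        for ref_row, gen_row in zip(reference, generated)
        for ref_px, gen_px in zip(ref_row, gen_row)
    ]
    mse = sum(squared_diffs) / len(squared_diffs)
    if mse == 0:
        return math.inf  # identical images have unbounded PSNR
    return 10.0 * math.log10(max_value ** 2 / mse)

# Toy example: one of two pixels is off by 10 intensity levels (MSE = 50).
score = psnr([[0, 255]], [[0, 245]])
```

Higher values indicate that the generated image is closer to the reference; SSIM, the other score named in the abstract, additionally compares local structure and is not covered by this sketch.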
