Haichuan Li

INDOOR-LiDAR: Bridging Simulation and Reality for Robot-Centric 360 degree Indoor LiDAR Perception -- A Robot-Centric Hybrid Dataset

Dec 13, 2025Abstract:We present INDOOR-LIDAR, a comprehensive hybrid dataset of indoor 3D LiDAR point clouds designed to advance research in robot perception. Existing indoor LiDAR datasets often suffer from limited scale, inconsistent annotation formats, and human-induced variability during data collection. INDOOR-LIDAR addresses these limitations by integrating simulated environments with real-world scans acquired using autonomous ground robots, providing consistent coverage and realistic sensor behavior under controlled variations. Each sample consists of dense point cloud data enriched with intensity measurements and KITTI-style annotations. The annotation schema encompasses common indoor object categories within various scenes. The simulated subset enables flexible configuration of layouts, point densities, and occlusions, while the real-world subset captures authentic sensor noise, clutter, and domain-specific artifacts characteristic of real indoor settings. INDOOR-LIDAR supports a wide range of applications including 3D object detection, bird's-eye-view (BEV) perception, SLAM, semantic scene understanding, and domain adaptation between simulated and real indoor domains. By bridging the gap between synthetic and real-world data, INDOOR-LIDAR establishes a scalable, realistic, and reproducible benchmark for advancing robotic perception in complex indoor environments.

IndoorBEV: Joint Detection and Footprint Completion of Objects via Mask-based Prediction in Indoor Scenarios for Bird's-Eye View Perception

Jul 23, 2025Abstract:Detecting diverse objects within complex indoor 3D point clouds presents significant challenges for robotic perception, particularly with varied object shapes, clutter, and the co-existence of static and dynamic elements where traditional bounding box methods falter. To address these limitations, we propose IndoorBEV, a novel mask-based Bird's-Eye View (BEV) method for indoor mobile robots. In a BEV method, a 3D scene is projected into a 2D BEV grid which handles naturally occlusions and provides a consistent top-down view aiding to distinguish static obstacles from dynamic agents. The obtained 2D BEV results is directly usable to downstream robotic tasks like navigation, motion prediction, and planning. Our architecture utilizes an axis compact encoder and a window-based backbone to extract rich spatial features from this BEV map. A query-based decoder head then employs learned object queries to concurrently predict object classes and instance masks in the BEV space. This mask-centric formulation effectively captures the footprint of both static and dynamic objects regardless of their shape, offering a robust alternative to bounding box regression. We demonstrate the effectiveness of IndoorBEV on a custom indoor dataset featuring diverse object classes including static objects and dynamic elements like robots and miscellaneous items, showcasing its potential for robust indoor scene understanding.

GarchingSim: An Autonomous Driving Simulator with Photorealistic Scenes and Minimalist Workflow

Jan 30, 2024

Abstract:Conducting real road testing for autonomous driving algorithms can be expensive and sometimes impractical, particularly for small startups and research institutes. Thus, simulation becomes an important method for evaluating these algorithms. However, the availability of free and open-source simulators is limited, and the installation and configuration process can be daunting for beginners and interdisciplinary researchers. We introduce an autonomous driving simulator with photorealistic scenes, meanwhile keeping a user-friendly workflow. The simulator is able to communicate with external algorithms through ROS2 or Socket.IO, making it compatible with existing software stacks. Furthermore, we implement a highly accurate vehicle dynamics model within the simulator to enhance the realism of the vehicle's physical effects. The simulator is able to serve various functions, including generating synthetic data and driving with machine learning-based algorithms. Moreover, we prioritize simplicity in the deployment process, ensuring that beginners find it approachable and user-friendly.

BCSSN: Bi-direction Compact Spatial Separable Network for Collision Avoidance in Autonomous Driving

Mar 12, 2023

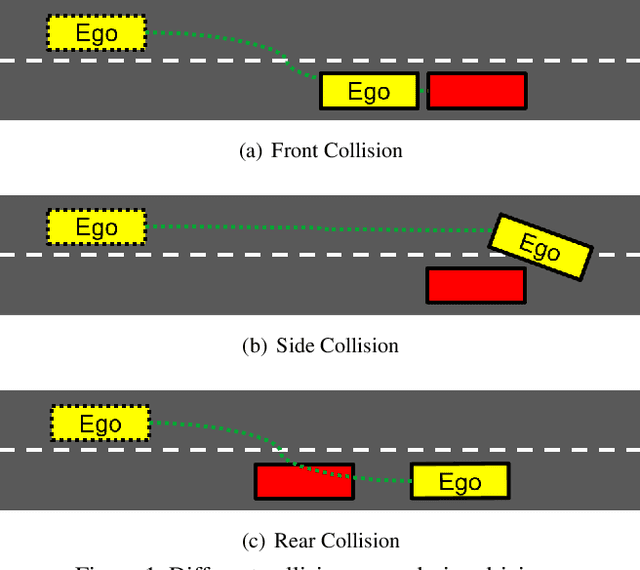

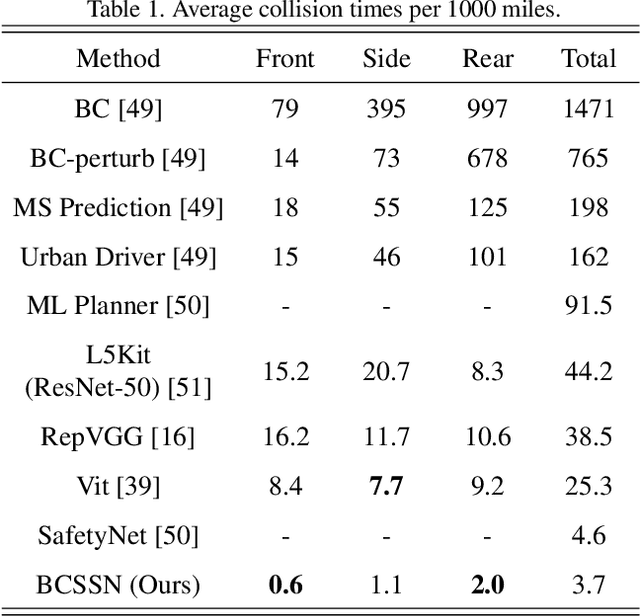

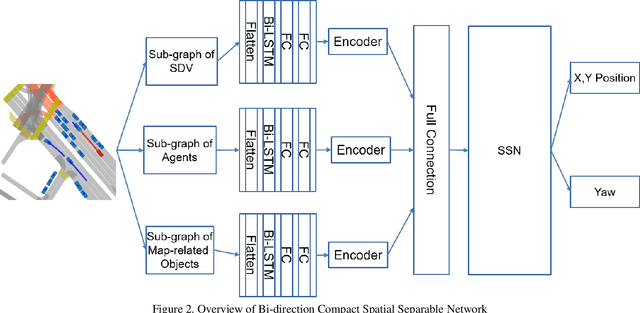

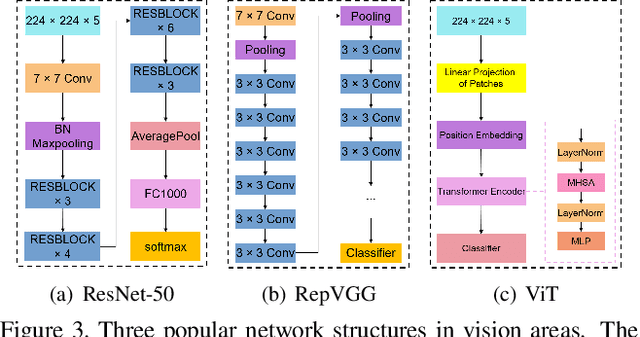

Abstract:Autonomous driving has been an active area of research and development, with various strategies being explored for decision-making in autonomous vehicles. Rule-based systems, decision trees, Markov decision processes, and Bayesian networks have been some of the popular methods used to tackle the complexities of traffic conditions and avoid collisions. However, with the emergence of deep learning, many researchers have turned towards CNN-based methods to improve the performance of collision avoidance. Despite the promising results achieved by some CNN-based methods, the failure to establish correlations between sequential images often leads to more collisions. In this paper, we propose a CNN-based method that overcomes the limitation by establishing feature correlations between regions in sequential images using variants of attention. Our method combines the advantages of CNN in capturing regional features with a bi-directional LSTM to enhance the relationship between different local areas. Additionally, we use an encoder to improve computational efficiency. Our method takes "Bird's Eye View" graphs generated from camera and LiDAR sensors as input, simulates the position (x, y) and head offset angle (Yaw) to generate future trajectories. Experiment results demonstrate that our proposed method outperforms existing vision-based strategies, achieving an average of only 3.7 collisions per 1000 miles of driving distance on the L5kit test set. This significantly improves the success rate of collision avoidance and provides a promising solution for autonomous driving.

Sequential Spatial Network for Collision Avoidance in Autonomous Driving

Mar 12, 2023

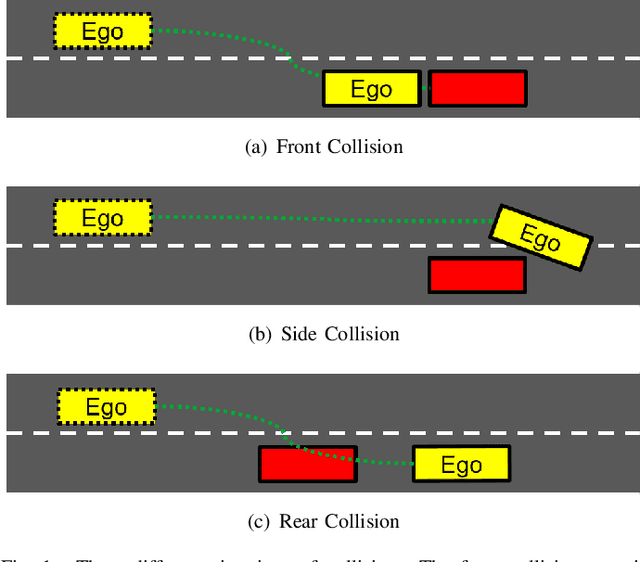

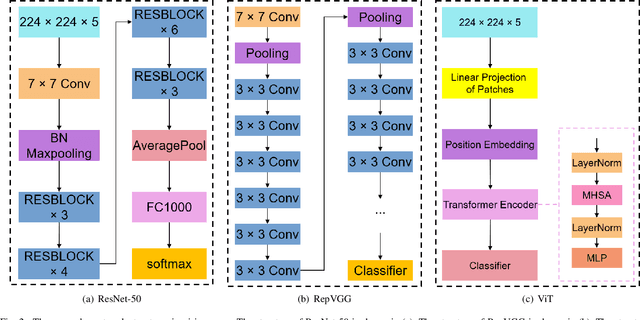

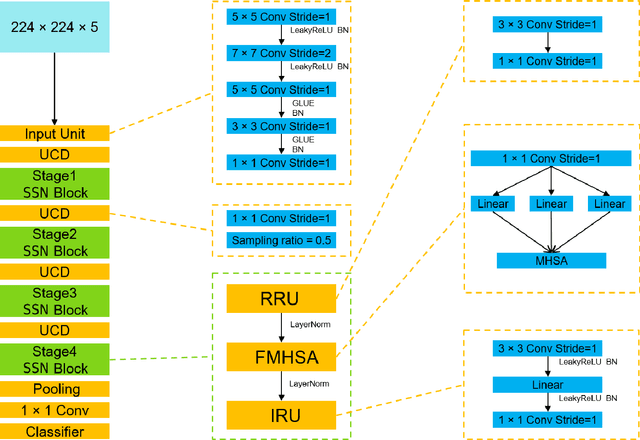

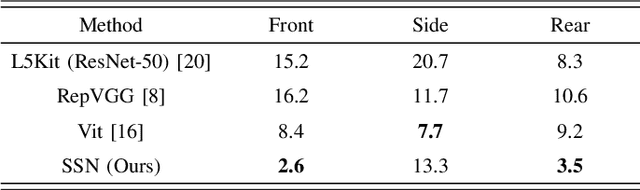

Abstract:Several autonomous driving strategies have been applied to autonomous vehicles, especially in the collision avoidance area. The purpose of collision avoidance is achieved by adjusting the trajectory of autonomous vehicles (AV) to avoid intersection or overlap with the trajectory of surrounding vehicles. A large number of sophisticated vision algorithms have been designed for target inspection, classification, and other tasks, such as ResNet, YOLO, etc., which have achieved excellent performance in vision tasks because of their ability to accurately and quickly capture regional features. However, due to the variability of different tasks, the above models achieve good performance in capturing small regions but are still insufficient in correlating the regional features of the input image with each other. In this paper, we aim to solve this problem and develop an algorithm that takes into account the advantages of CNN in capturing regional features while establishing feature correlation between regions using variants of attention. Finally, our model achieves better performance in the test set of L5Kit compared to the other vision models. The average number of collisions is 19.4 per 10000 frames of driving distance, which greatly improves the success rate of collision avoidance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge