Georg Nührenberg

nn-dependability-kit: Engineering Neural Networks for Safety-Critical Systems

Nov 16, 2018

Abstract:nn-dependability-kit is an open-source toolbox to support safety engineering of neural networks. The key functionality of nn-dependability-kit includes (a) novel dependability metrics for indicating sufficient elimination of uncertainties in the product life cycle, (b) formal reasoning engine for ensuring that the generalization does not lead to undesired behaviors, and (c) runtime monitoring for reasoning whether a decision of a neural network in operation time is supported by prior similarities in the training data.

Runtime Monitoring Neuron Activation Patterns

Sep 19, 2018

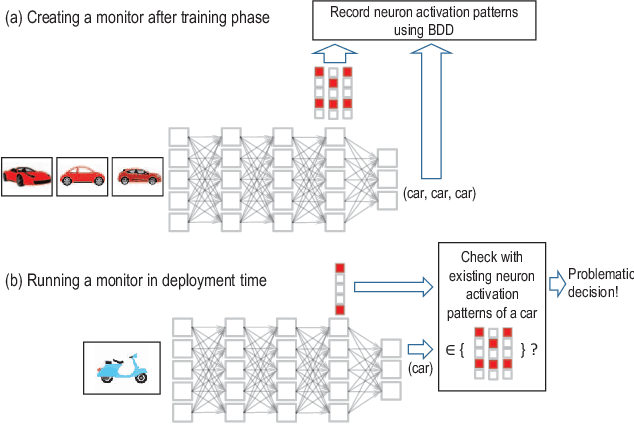

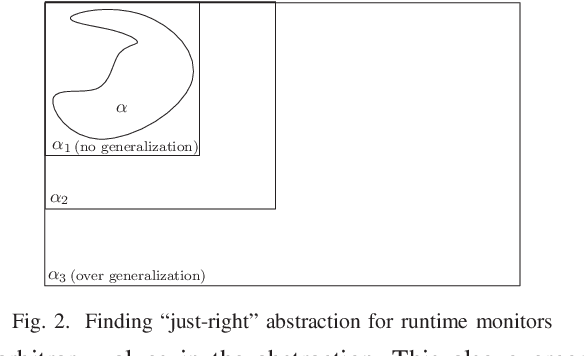

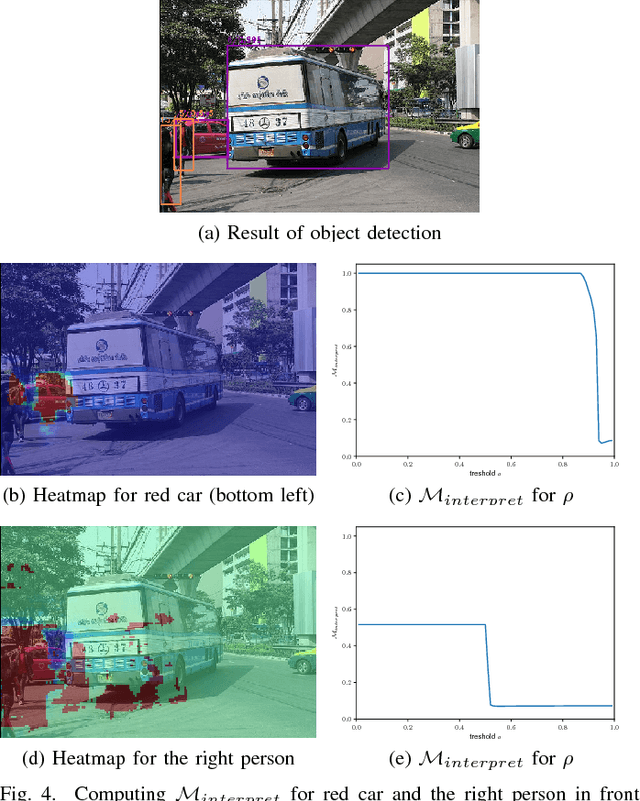

Abstract:For using neural networks in safety critical domains, it is important to know if a decision made by a neural network is supported by prior similarities in training. We propose runtime neuron activation pattern monitoring - after the standard training process, one creates a monitor by feeding the training data to the network again in order to store the neuron activation patterns in abstract form. In operation, a classification decision over an input is further supplemented by examining if a pattern similar (measured by Hamming distance) to the generated pattern is contained in the monitor. If the monitor does not contain any pattern similar to the generated pattern, it raises a warning that the decision is not based on the training data. Our experiments show that, by adjusting the similarity-threshold for activation patterns, the monitors can report a significant portion of misclassfications to be not supported by training with a small false-positive rate, when evaluated on a test set.

Towards Dependability Metrics for Neural Networks

Jun 08, 2018

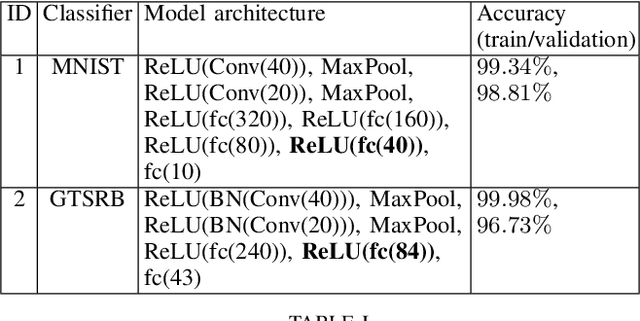

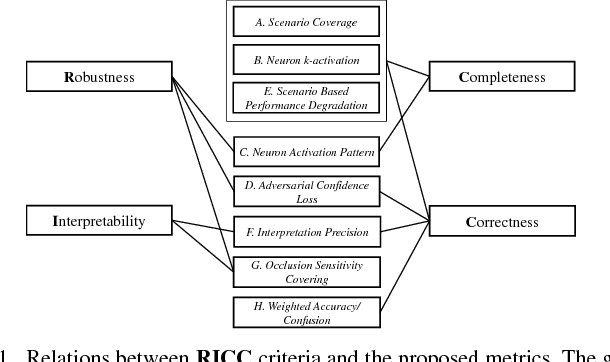

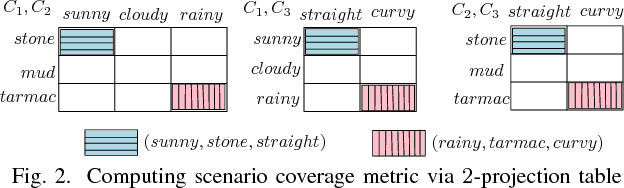

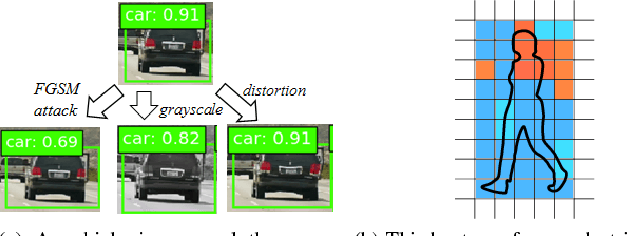

Abstract:Artificial neural networks (NN) are instrumental in realizing highly-automated driving functionality. An overarching challenge is to identify best safety engineering practices for NN and other learning-enabled components. In particular, there is an urgent need for an adequate set of metrics for measuring all-important NN dependability attributes. We address this challenge by proposing a number of NN-specific and efficiently computable metrics for measuring NN dependability attributes including robustness, interpretability, completeness, and correctness.

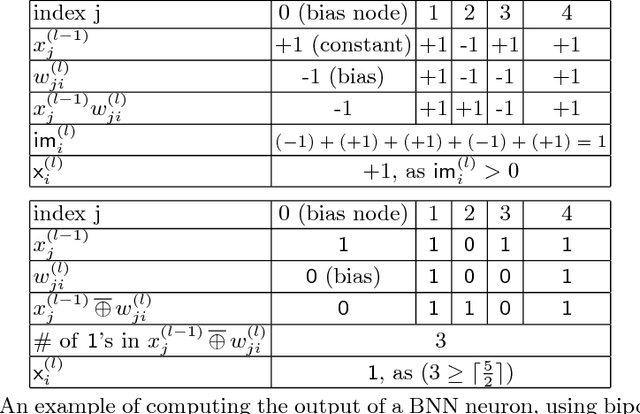

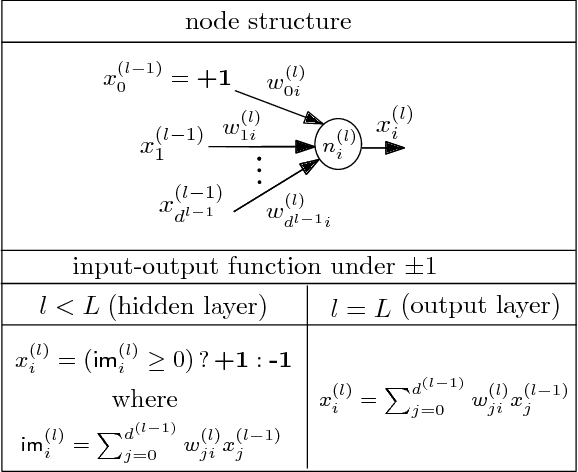

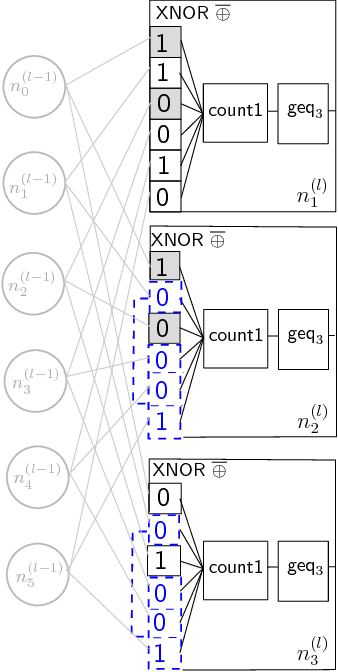

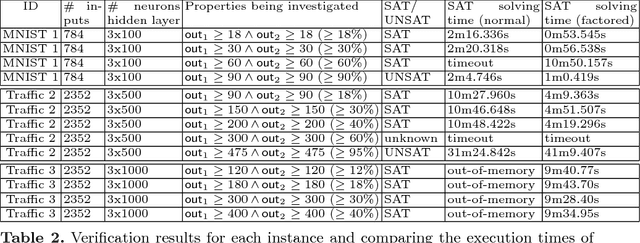

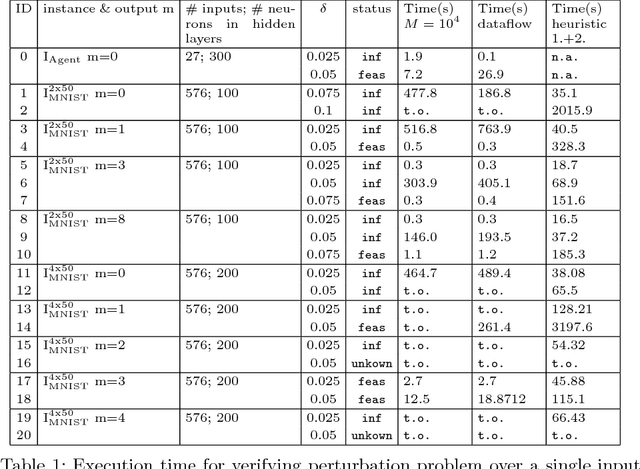

Verification of Binarized Neural Networks via Inter-Neuron Factoring

Jan 19, 2018

Abstract:We study the problem of formal verification of Binarized Neural Networks (BNN), which have recently been proposed as a energy-efficient alternative to traditional learning networks. The verification of BNNs, using the reduction to hardware verification, can be even more scalable by factoring computations among neurons within the same layer. By proving the NP-hardness of finding optimal factoring as well as the hardness of PTAS approximability, we design polynomial-time search heuristics to generate factoring solutions. The overall framework allows applying verification techniques to moderately-sized BNNs for embedded devices with thousands of neurons and inputs.

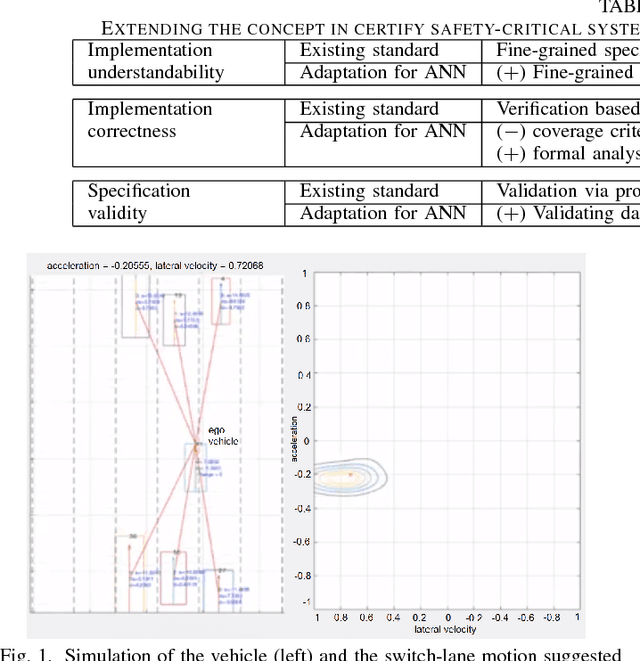

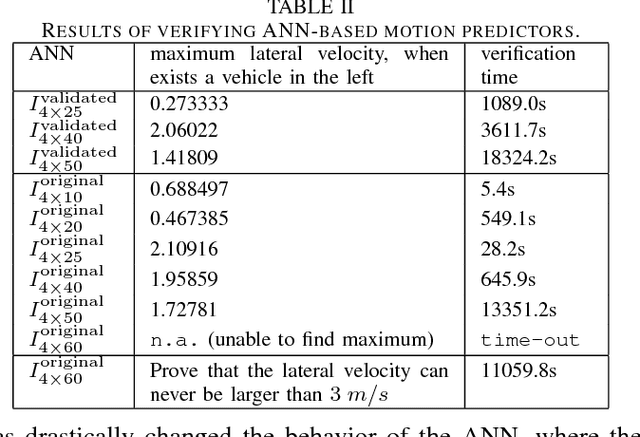

Neural Networks for Safety-Critical Applications - Challenges, Experiments and Perspectives

Sep 04, 2017

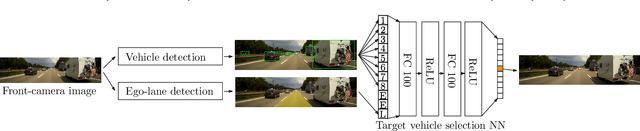

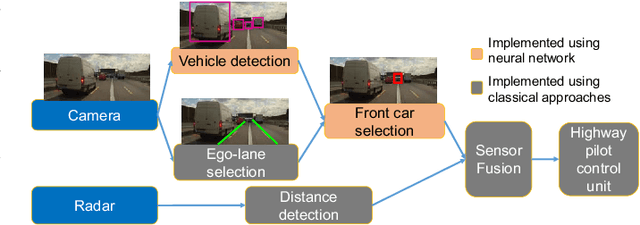

Abstract:We propose a methodology for designing dependable Artificial Neural Networks (ANN) by extending the concepts of understandability, correctness, and validity that are crucial ingredients in existing certification standards. We apply the concept in a concrete case study in designing a high-way ANN-based motion predictor to guarantee safety properties such as impossibility for the ego vehicle to suggest moving to the right lane if there exists another vehicle on its right.

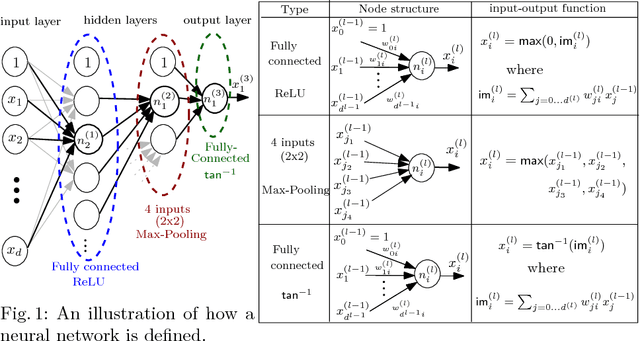

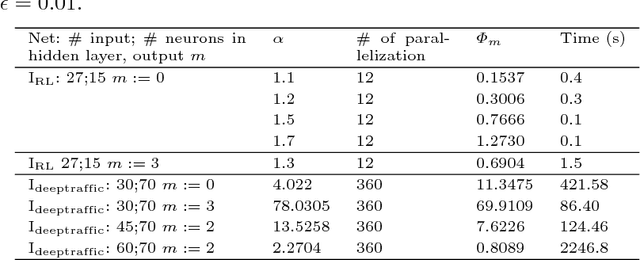

Maximum Resilience of Artificial Neural Networks

Jul 05, 2017

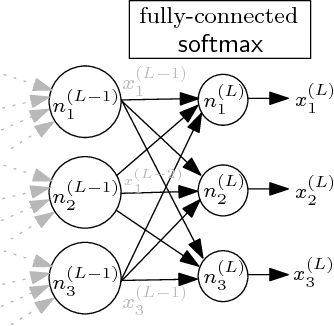

Abstract:The deployment of Artificial Neural Networks (ANNs) in safety-critical applications poses a number of new verification and certification challenges. In particular, for ANN-enabled self-driving vehicles it is important to establish properties about the resilience of ANNs to noisy or even maliciously manipulated sensory input. We are addressing these challenges by defining resilience properties of ANN-based classifiers as the maximal amount of input or sensor perturbation which is still tolerated. This problem of computing maximal perturbation bounds for ANNs is then reduced to solving mixed integer optimization problems (MIP). A number of MIP encoding heuristics are developed for drastically reducing MIP-solver runtimes, and using parallelization of MIP-solvers results in an almost linear speed-up in the number (up to a certain limit) of computing cores in our experiments. We demonstrate the effectiveness and scalability of our approach by means of computing maximal resilience bounds for a number of ANN benchmark sets ranging from typical image recognition scenarios to the autonomous maneuvering of robots.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge