Geonhee Lee

SurgRIPE challenge: Benchmark of Surgical Robot Instrument Pose Estimation

Jan 06, 2025

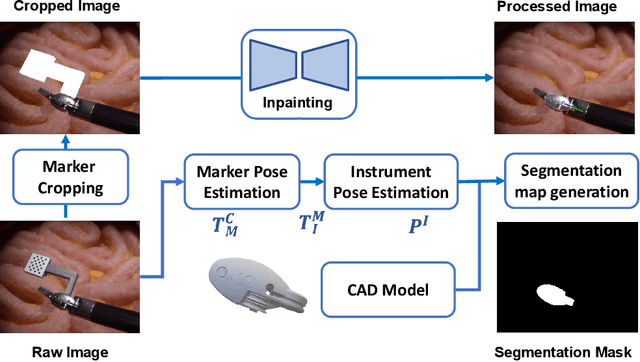

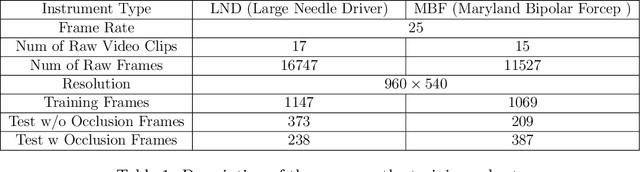

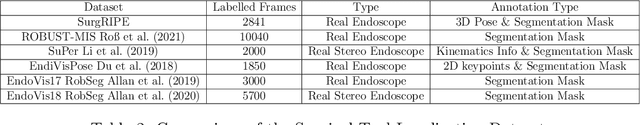

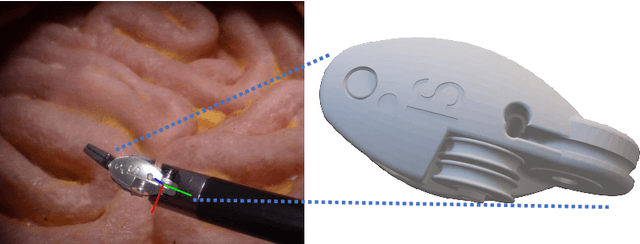

Abstract: Accurate instrument pose estimation is a crucial step towards the future of robotic surgery, enabling applications such as autonomous surgical task execution. Vision-based methods for surgical instrument pose estimation provide a practical approach to tool tracking, but they often require markers to be attached to the instruments. Recently, more research has focused on developing markerless methods based on deep learning. However, acquiring realistic surgical data paired with the ground truth instrument poses required for deep learning training is challenging. To address these issues in surgical instrument pose estimation, we introduce the Surgical Robot Instrument Pose Estimation (SurgRIPE) challenge, hosted at the 26th International Conference on Medical Image Computing and Computer-Assisted Intervention (MICCAI) in 2023. The objectives of this challenge are: (1) to provide the surgical vision community with realistic surgical video data paired with ground truth instrument poses, and (2) to establish a benchmark for evaluating markerless pose estimation methods. The challenge led to the development of several novel algorithms that showed improved accuracy and robustness over existing methods. The performance evaluation study on the SurgRIPE dataset highlights the potential of these algorithms to be integrated into robotic surgery systems, paving the way for more precise and autonomous surgical procedures. The SurgRIPE challenge has established a new benchmark for the field, encouraging further research and development in surgical robot instrument pose estimation.

Normalized Convolutional Neural Network

May 18, 2020

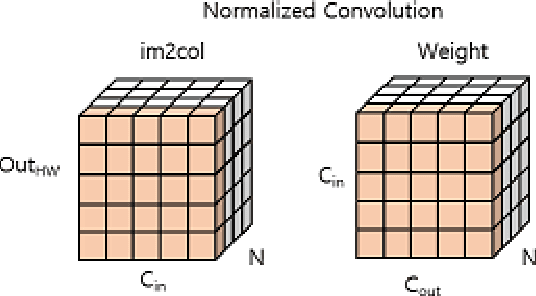

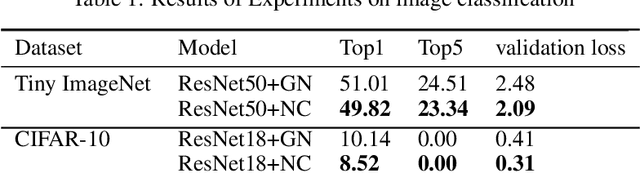

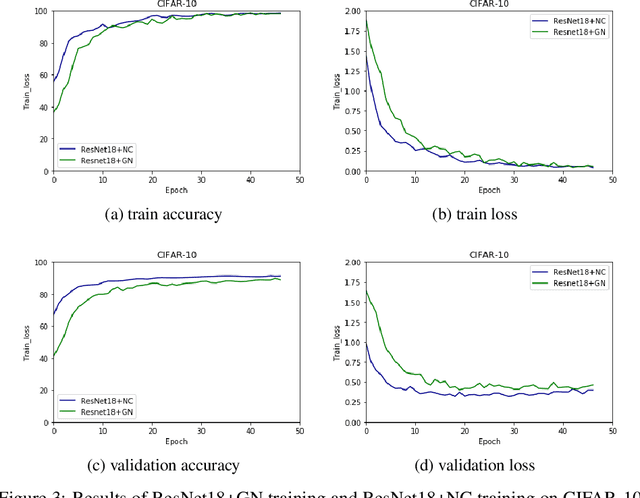

Abstract: In this paper, we propose the Normalized Convolutional Neural Network (NCNN). NCNN is better suited to the convolutional operator than other normalization methods. Its normalization process resembles that of existing normalization methods, but NCNN adapts more closely to the sliced inputs and their corresponding convolutional kernel. Therefore, NCNN can be targeted at micro-batch training. The normalization in NC is performed during the convolution itself. In short, the NC process is not a conventional normalization and cannot be realized with the standard convolution routines that deep learning frameworks optimize; hence we name this method 'Normalized Convolution' (NC). As a result, NC has a universal property: it can be applied to any AI task involving a convolutional layer. Since NC does not require any other normalization layer, NCNN resembles a convolutional version of the Self-Normalizing Network (SNN). In micro-batch training, NCNN outperforms other batch-independent normalization methods. NCNN achieves this by standardizing the rows of the im2col matrix of the inputs, which theoretically smooths the gradient of the loss. The code needs to manipulate standard convolutional neural networks step by step. The code is available at: https://github.com/kimdongsuk1/NormalizedCNN.
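The row-standardization step can be illustrated with a small NumPy sketch. This is a minimal illustration under stated assumptions, not the authors' implementation (the linked repository holds that). We assume an im2col layout with one row per output location, where each row holds the flattened C×kh×kw receptive-field patch, and we standardize every row to zero mean and unit variance before multiplying by the flattened kernel. The names `im2col` and `normalized_conv2d`, the `eps` constant, and the absence of bias or learnable affine parameters are all hypothetical choices made for this example.

```python
import numpy as np


def im2col(x, kh, kw, stride=1):
    """Unfold x of shape (C, H, W) into a matrix with one row per output
    location; each row is a flattened C*kh*kw receptive-field patch.
    (Assumed layout: rows = patches.)"""
    c, h, w = x.shape
    out_h = (h - kh) // stride + 1
    out_w = (w - kw) // stride + 1
    rows = np.empty((out_h * out_w, c * kh * kw), dtype=x.dtype)
    for i in range(out_h):
        for j in range(out_w):
            patch = x[:, i * stride:i * stride + kh, j * stride:j * stride + kw]
            rows[i * out_w + j] = patch.reshape(-1)
    return rows, out_h, out_w


def normalized_conv2d(x, weight, stride=1, eps=1e-5):
    """Hypothetical sketch, not the authors' code: standardize each im2col
    row (one patch of a single sample) to zero mean and unit variance, then
    apply the kernel as a matrix product. No batch statistics are used."""
    out_c, c, kh, kw = weight.shape
    if x.shape[0] != c:
        raise ValueError("input channels do not match kernel channels")
    cols, out_h, out_w = im2col(x, kh, kw, stride)
    # Per-row (per-patch) statistics.
    mean = cols.mean(axis=1, keepdims=True)
    var = cols.var(axis=1, keepdims=True)
    cols_hat = (cols - mean) / np.sqrt(var + eps)
    # Kernel flattened in the same (C, kh, kw) order as each patch row.
    out = cols_hat @ weight.reshape(out_c, -1).T  # shape (out_h*out_w, out_c)
    return out.T.reshape(out_c, out_h, out_w)
```

Because the statistics are computed per patch of a single sample, nothing in this sketch depends on the batch size, which fits the batch-independent, micro-batch setting the abstract targets. For example, calling `normalized_conv2d(x, w)` on an input of shape (3, 8, 8) with a kernel of shape (4, 3, 3, 3) returns an array of shape (4, 6, 6).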
