Ganesh Venkataraman

Not Your Grandfathers Test Set: Reducing Labeling Effort for Testing

Jul 10, 2020

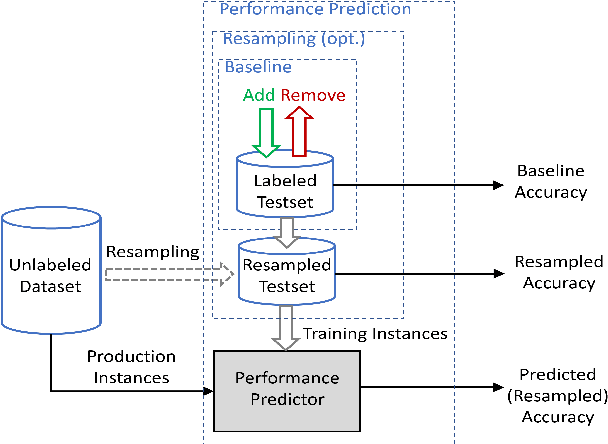

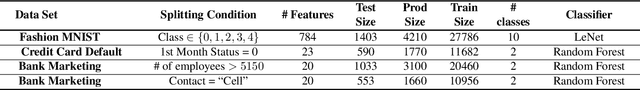

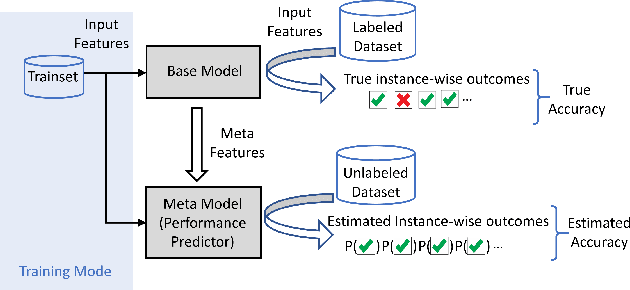

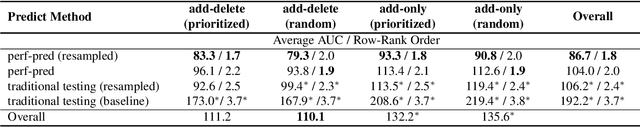

Abstract: Building and maintaining high-quality test sets remains a laborious and expensive task. As a result, test sets in the real world are often not properly kept up to date and drift from the production traffic they are supposed to represent. The frequency and severity of this drift raise serious concerns over the value of manually labeled test sets in the QA process. This paper proposes a simple but effective technique that drastically reduces the effort needed to construct and maintain a high-quality test set (reducing labeling effort by 80-100% across a range of practical scenarios). This result encourages a fundamental rethinking of the testing process by both practitioners, who can use these techniques immediately to improve their testing, and researchers, who can help address many of the open questions raised by this new approach.

Exploring the Hyperparameter Landscape of Adversarial Robustness

May 09, 2019

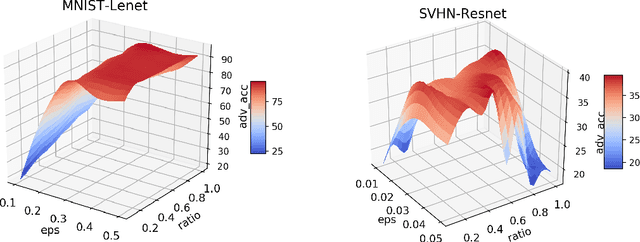

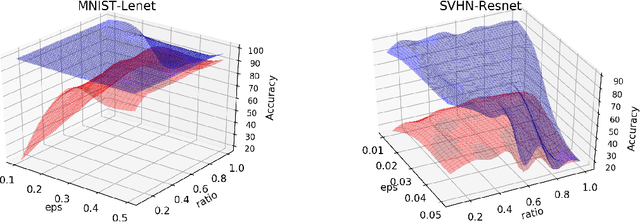

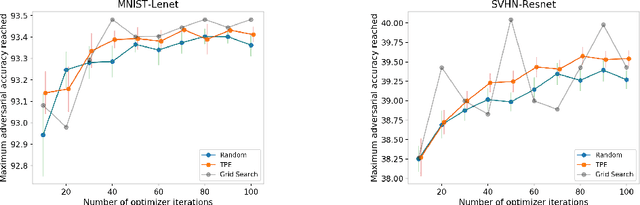

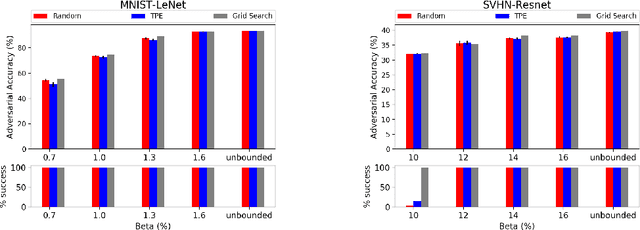

Abstract: Adversarial training shows promise as an approach for training models that are robust to adversarial perturbations. In this paper, we explore some of the practical challenges of adversarial training. We present a sensitivity analysis illustrating that the effectiveness of adversarial training hinges on the settings of a few salient hyperparameters. We show that the robustness surface that emerges across these salient parameters can be surprisingly complex and that therefore no effective one-size-fits-all parameter settings exist. We then demonstrate that we can use the same salient hyperparameters as tuning knobs to navigate the tension that can arise between robustness and accuracy. Based on these findings, we present a practical approach that leverages hyperparameter optimization techniques for tuning adversarial training to maximize robustness while keeping the loss in accuracy within a defined budget.
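
The final selection step described in this abstract (maximize robustness subject to an accuracy budget) can be sketched as a constrained choice over completed tuning trials. This is a minimal illustration, not the paper's method: the `Trial` type, the function name `pick_most_robust`, the hyperparameter names `epsilon` and `adv_ratio`, and all numbers are hypothetical placeholders, and the search procedure that would generate the trials is left out.

```python
from typing import NamedTuple, Optional, Sequence


class Trial(NamedTuple):
    """One finished tuning run (hypothetical record layout)."""
    params: dict        # hyperparameter settings used for this run
    accuracy: float     # accuracy on clean inputs
    robustness: float   # accuracy on adversarially perturbed inputs


def pick_most_robust(trials: Sequence[Trial],
                     baseline_accuracy: float,
                     budget: float) -> Optional[Trial]:
    """Return the most robust trial whose accuracy loss stays within budget.

    A trial is feasible when baseline_accuracy - accuracy <= budget.
    Returns None when no trial is feasible.
    """
    feasible = [t for t in trials
                if baseline_accuracy - t.accuracy <= budget]
    if not feasible:
        return None
    return max(feasible, key=lambda t: t.robustness)


# Made-up numbers purely for illustration; not results from the paper.
trials = [
    Trial({"epsilon": 0.00, "adv_ratio": 0.0}, accuracy=0.95, robustness=0.10),
    Trial({"epsilon": 0.05, "adv_ratio": 0.5}, accuracy=0.94, robustness=0.35),
    Trial({"epsilon": 0.10, "adv_ratio": 0.5}, accuracy=0.90, robustness=0.55),
    Trial({"epsilon": 0.20, "adv_ratio": 1.0}, accuracy=0.85, robustness=0.70),
]

best = pick_most_robust(trials, baseline_accuracy=0.95, budget=0.03)
```

With a budget of 0.03, only the first two trials are feasible, so the second one is selected even though later trials are more robust. In practice the trial list would come from a hyperparameter optimizer rather than a fixed table.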

NeuNetS: An Automated Synthesis Engine for Neural Network Design

Jan 17, 2019

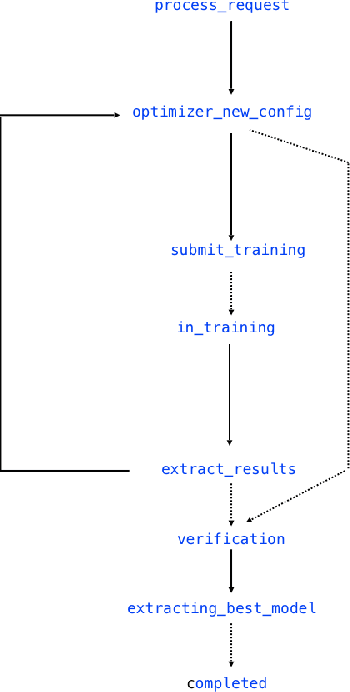

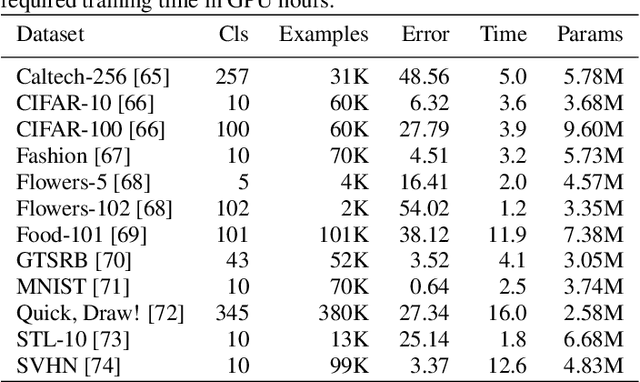

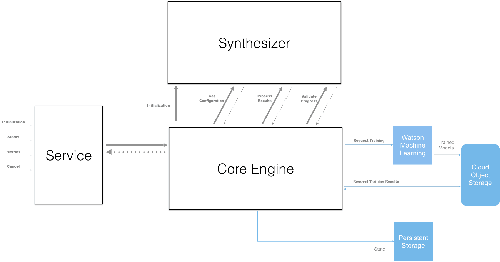

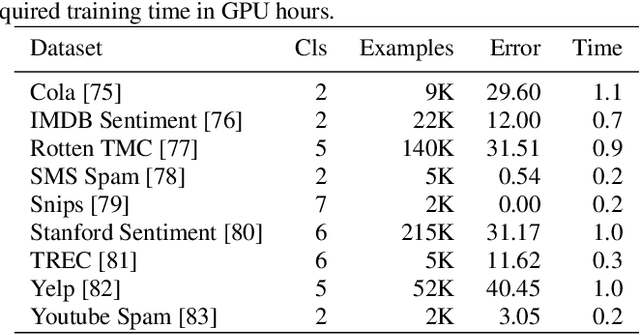

Abstract: Application of neural networks to a vast variety of practical applications is transforming the way AI is applied in practice. Pre-trained neural network models available through APIs, and the ability to custom-train pre-built neural network architectures with customer data, have made the consumption of AI by developers much simpler and resulted in broad adoption of these complex AI models. While prebuilt network models exist for certain scenarios, meeting the constraints that are unique to each application requires AI teams to develop custom neural network architectures that balance accuracy against memory footprint within the tight constraints of their use cases. However, only a small proportion of data science teams have the skills and experience needed to create a neural network from scratch, and the demand far exceeds the supply. In this paper, we present NeuNetS: an automated Neural Network Synthesis engine for custom neural network design that is available as part of IBM's AI OpenScale product. NeuNetS is available for both Text and Image domains and can build neural networks for specific tasks in a fraction of the time it takes today with human effort, and with accuracy similar to that of human-designed AI models.

Personalized Expertise Search at LinkedIn

Feb 15, 2016

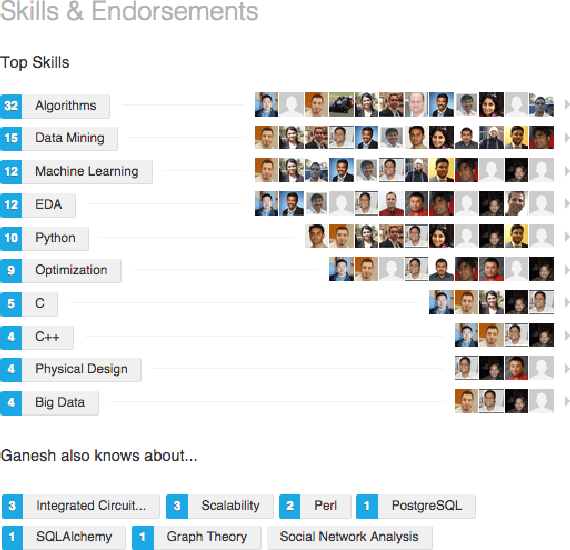

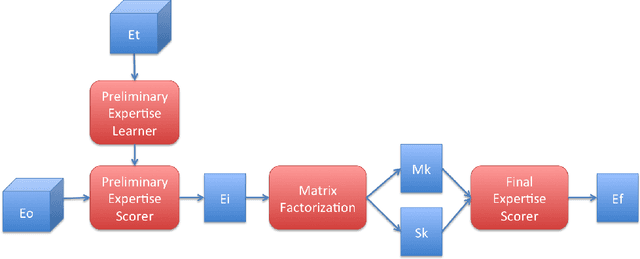

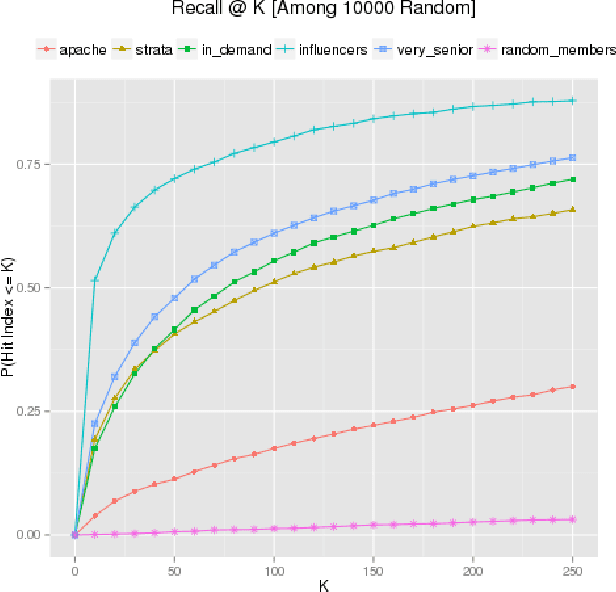

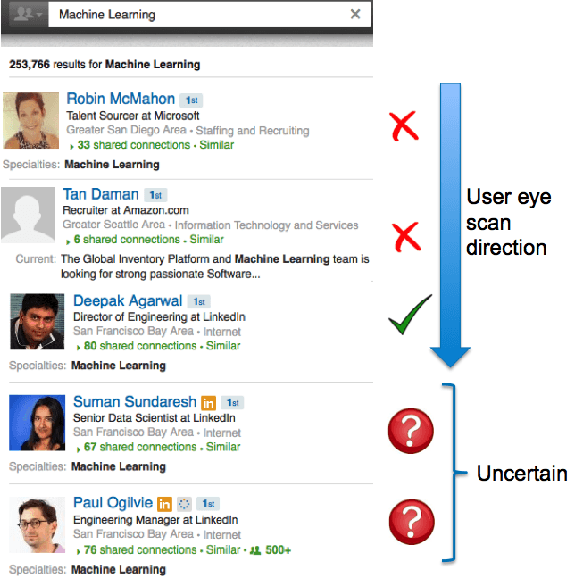

Abstract: LinkedIn is the largest professional network with more than 350 million members. As the member base increases, searching for experts becomes more and more challenging. In this paper, we propose an approach to address the problem of personalized expertise search on LinkedIn, particularly for exploratory search queries containing *skills*. In the offline phase, we introduce a collaborative filtering approach based on matrix factorization. Our approach estimates expertise scores both for the skills that members list on their profiles and for the skills they are likely to have but do not explicitly list. In the online phase (at query time), we use expertise scores on these skills as a feature in combination with other features to rank the results. To learn the personalized ranking function, we propose a heuristic to extract training data from search logs while handling position and sample selection biases. We tested our models on two products: LinkedIn homepage and LinkedIn recruiter. A/B tests showed significant improvements in click-through rates (31% in CTR@1 for recruiter, 18% for homepage) as well as in downstream messages sent from search (37% for recruiter, 20% for homepage). At the time of writing, these models serve nearly all live traffic for skills search on LinkedIn homepage as well as LinkedIn recruiter.
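
The offline phase mentioned in this abstract, scoring unlisted skills through matrix factorization, can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the paper's model: the squared-error objective, plain SGD, the function names `factorize` and `score`, the hyperparameter values, and the tiny member-skill data set are all hypothetical choices made for illustration.

```python
import random


def factorize(observed, n_members, n_skills, rank=2,
              epochs=3000, lr=0.05, reg=0.01, seed=0):
    """Fit member and skill factors to (member, skill, score) triples.

    Minimizes regularized squared error with stochastic gradient descent.
    Returns (P, Q): one rank-length vector per member and per skill.
    """
    rng = random.Random(seed)
    P = [[rng.uniform(-0.1, 0.1) for _ in range(rank)]
         for _ in range(n_members)]
    Q = [[rng.uniform(-0.1, 0.1) for _ in range(rank)]
         for _ in range(n_skills)]
    for _ in range(epochs):
        for m, s, y in observed:
            err = y - sum(P[m][k] * Q[s][k] for k in range(rank))
            for k in range(rank):
                p, q = P[m][k], Q[s][k]
                P[m][k] += lr * (err * q - reg * p)
                Q[s][k] += lr * (err * p - reg * q)
    return P, Q


def score(P, Q, member, skill):
    """Estimated expertise score: dot product of the two factor vectors."""
    return sum(a * b for a, b in zip(P[member], Q[skill]))


# Toy data (invented): 1.0 = skill listed, 0.0 = assumed not held.
# Skills: 0 and 1 tend to co-occur; skill 2 belongs to a different group.
observed = [
    (0, 0, 1.0), (0, 1, 1.0), (0, 2, 0.0),
    (1, 0, 1.0), (1, 2, 0.0),            # member 1 does not list skill 1
    (2, 0, 0.0), (2, 2, 1.0),
    (3, 1, 0.0), (3, 2, 1.0),
]
P, Q = factorize(observed, n_members=4, n_skills=3)
inferred = score(P, Q, member=1, skill=1)  # score for an unlisted skill
```

Member 1 lists skill 0 but not skill 1; because member 0 lists both, the learned factors assign member 1 a high score for skill 1 as well. In the approach the abstract describes, such scores would then serve as one ranking feature among several at query time.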
