Evan Crothers

Faithful to Whom? Questioning Interpretability Measures in NLP

Aug 13, 2023Abstract:A common approach to quantifying model interpretability is to calculate faithfulness metrics based on iteratively masking input tokens and measuring how much the predicted label changes as a result. However, we show that such metrics are generally not suitable for comparing the interpretability of different neural text classifiers as the response to masked inputs is highly model-specific. We demonstrate that iterative masking can produce large variation in faithfulness scores between comparable models, and show that masked samples are frequently outside the distribution seen during training. We further investigate the impact of adversarial attacks and adversarial training on faithfulness scores, and demonstrate the relevance of faithfulness measures for analyzing feature salience in text adversarial attacks. Our findings provide new insights into the limitations of current faithfulness metrics and key considerations to utilize them appropriately.

In BLOOM: Creativity and Affinity in Artificial Lyrics and Art

Jan 13, 2023Abstract:We apply a large multilingual language model (BLOOM-176B) in open-ended generation of Chinese song lyrics, and evaluate the resulting lyrics for coherence and creativity using human reviewers. We find that current computational metrics for evaluating large language model outputs (MAUVE) have limitations in evaluation of creative writing. We note that the human concept of creativity requires lyrics to be both comprehensible and distinctive -- and that humans assess certain types of machine-generated lyrics to score more highly than real lyrics by popular artists. Inspired by the inherently multimodal nature of album releases, we leverage a Chinese-language stable diffusion model to produce high-quality lyric-guided album art, demonstrating a creative approach for an artist seeking inspiration for an album or single. Finally, we introduce the MojimLyrics dataset, a Chinese-language dataset of popular song lyrics for future research.

Machine Generated Text: A Comprehensive Survey of Threat Models and Detection Methods

Oct 13, 2022

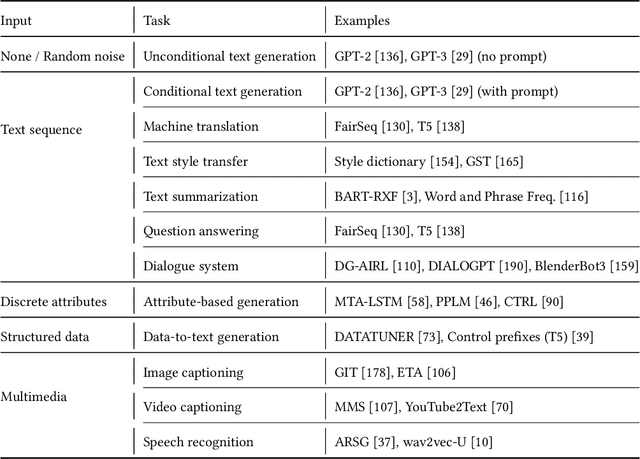

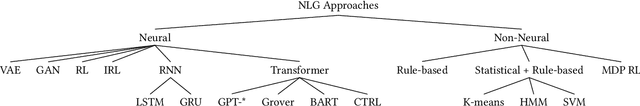

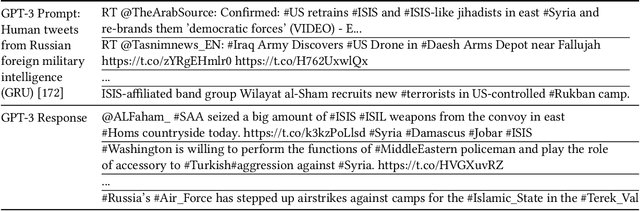

Abstract:Advances in natural language generation (NLG) have resulted in machine generated text that is increasingly difficult to distinguish from human authored text. Powerful open-source models are freely available, and user-friendly tools democratizing access to generative models are proliferating. The great potential of state-of-the-art NLG systems is tempered by the multitude of avenues for abuse. Detection of machine generated text is a key countermeasure for reducing abuse of NLG models, with significant technical challenges and numerous open problems. We provide a survey that includes both 1) an extensive analysis of threat models posed by contemporary NLG systems, and 2) the most complete review of machine generated text detection methods to date. This survey places machine generated text within its cybersecurity and social context, and provides strong guidance for future work addressing the most critical threat models, and ensuring detection systems themselves demonstrate trustworthiness through fairness, robustness, and accountability.

Adversarial Robustness of Neural-Statistical Features in Detection of Generative Transformers

Mar 02, 2022

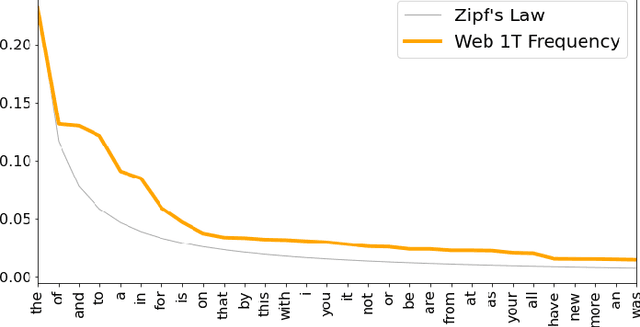

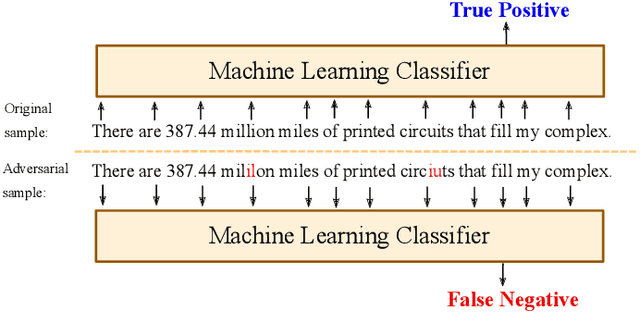

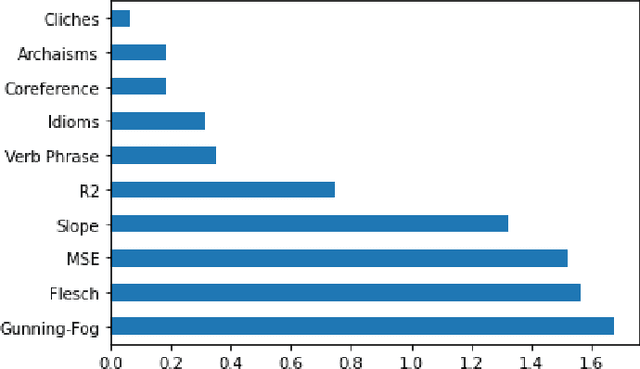

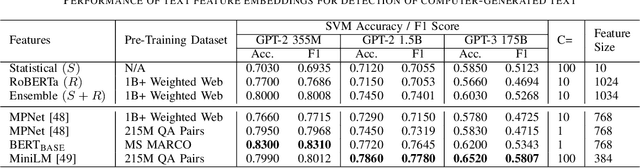

Abstract:The detection of computer-generated text is an area of rapidly increasing significance as nascent generative models allow for efficient creation of compelling human-like text, which may be abused for the purposes of spam, disinformation, phishing, or online influence campaigns. Past work has studied detection of current state-of-the-art models, but despite a developing threat landscape, there has been minimal analysis of the robustness of detection methods to adversarial attacks. To this end, we evaluate neural and non-neural approaches on their ability to detect computer-generated text, their robustness against text adversarial attacks, and the impact that successful adversarial attacks have on human judgement of text quality. We find that while statistical features underperform neural features, statistical features provide additional adversarial robustness that can be leveraged in ensemble detection models. In the process, we find that previously effective complex phrasal features for detection of computer-generated text hold little predictive power against contemporary generative models, and identify promising statistical features to use instead. Finally, we pioneer the usage of $\Delta$MAUVE as a proxy measure for human judgement of adversarial text quality.

Independent Component Analysis for Trustworthy Cyberspace during High Impact Events: An Application to Covid-19

Jun 06, 2020

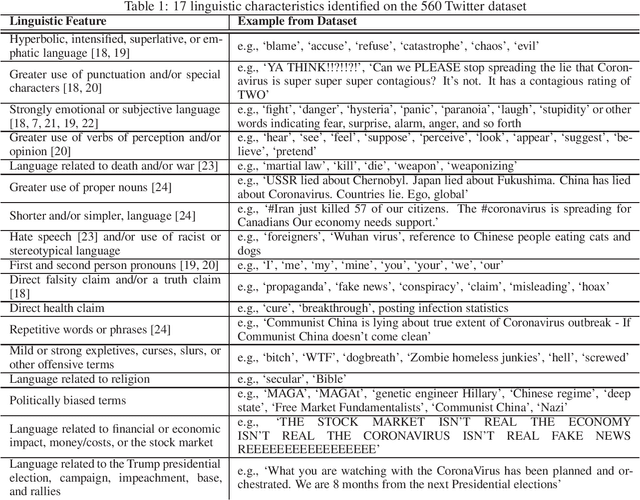

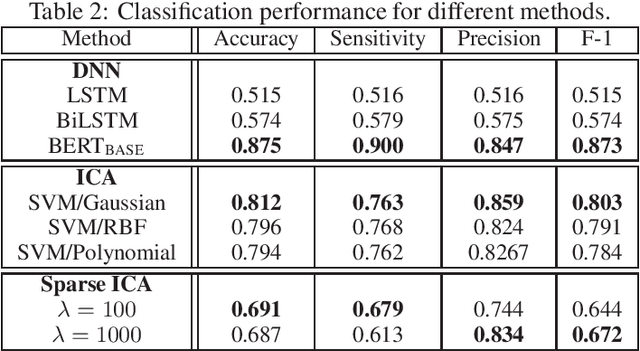

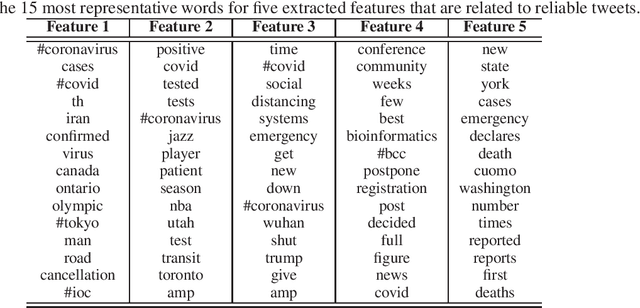

Abstract:Social media has become an important communication channel during high impact events, such as the COVID-19 pandemic. As misinformation in social media can rapidly spread, creating social unrest, curtailing the spread of misinformation during such events is a significant data challenge. While recent solutions that are based on machine learning have shown promise for the detection of misinformation, most widely used methods include approaches that rely on either handcrafted features that cannot be optimal for all scenarios, or those that are based on deep learning where the interpretation of the prediction results is not directly accessible. In this work, we propose a data-driven solution that is based on the ICA model, such that knowledge discovery and detection of misinformation are achieved jointly. To demonstrate the effectiveness of our method and compare its performance with deep learning methods, we developed a labeled COVID-19 Twitter dataset based on socio-linguistic criteria.

Towards Ethical Content-Based Detection of Online Influence Campaigns

Aug 29, 2019

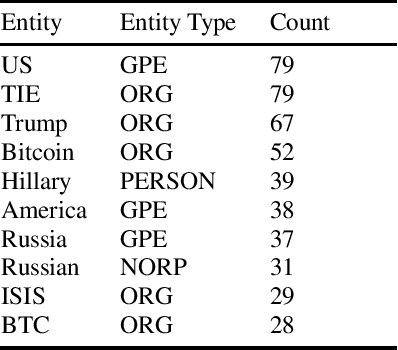

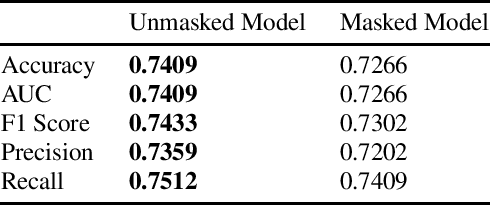

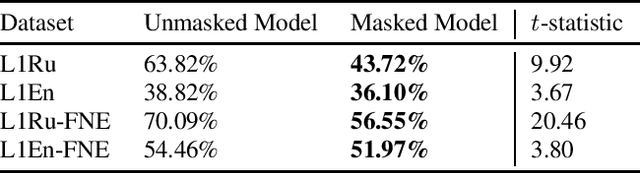

Abstract:The detection of clandestine efforts to influence users in online communities is a challenging problem with significant active development. We demonstrate that features derived from the text of user comments are useful for identifying suspect activity, but lead to increased erroneous identifications when keywords over-represented in past influence campaigns are present. Drawing on research in native language identification (NLI), we use "named entity masking" (NEM) to create sentence features robust to this shortcoming, while maintaining comparable classification accuracy. We demonstrate that while NEM consistently reduces false positives when key named entities are mentioned, both masked and unmasked models exhibit increased false positive rates on English sentences by Russian native speakers, raising ethical considerations that should be addressed in future research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge