Eoin Kenny

Advancing Post Hoc Case Based Explanation with Feature Highlighting

Nov 06, 2023

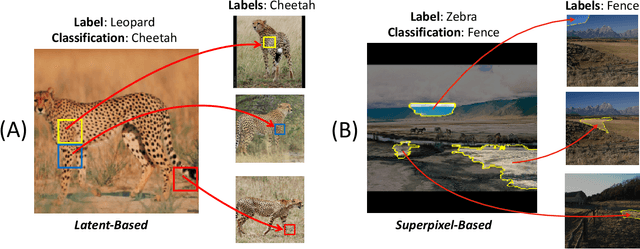

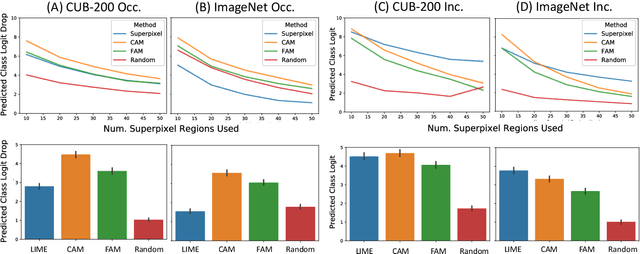

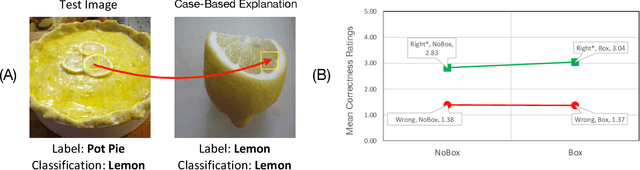

Abstract:Explainable AI (XAI) has been proposed as a valuable tool to assist in downstream tasks involving human and AI collaboration. Perhaps the most psychologically valid XAI techniques are case based approaches which display 'whole' exemplars to explain the predictions of black box AI systems. However, for such post hoc XAI methods dealing with images, there has been no attempt to improve their scope by using multiple clear feature 'parts' of the images to explain the predictions while linking back to relevant cases in the training data, thus allowing for more comprehensive explanations that are faithful to the underlying model. Here, we address this gap by proposing two general algorithms (latent and super pixel based) which can isolate multiple clear feature parts in a test image, and then connect them to the explanatory cases found in the training data, before testing their effectiveness in a carefully designed user study. Results demonstrate that the proposed approach appropriately calibrates a users feelings of 'correctness' for ambiguous classifications in real world data on the ImageNet dataset, an effect which does not happen when just showing the explanation without feature highlighting.

Human-Guided Complexity-Controlled Abstractions

Oct 27, 2023

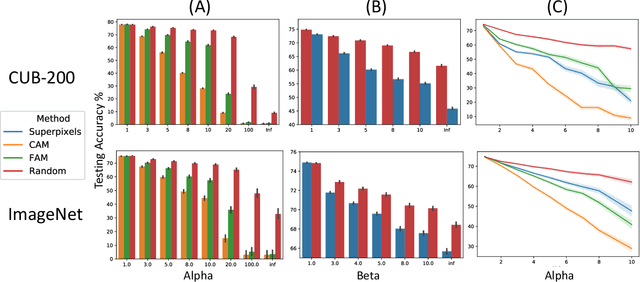

Abstract:Neural networks often learn task-specific latent representations that fail to generalize to novel settings or tasks. Conversely, humans learn discrete representations (i.e., concepts or words) at a variety of abstraction levels (e.g., "bird" vs. "sparrow") and deploy the appropriate abstraction based on task. Inspired by this, we train neural models to generate a spectrum of discrete representations, and control the complexity of the representations (roughly, how many bits are allocated for encoding inputs) by tuning the entropy of the distribution over representations. In finetuning experiments, using only a small number of labeled examples for a new task, we show that (1) tuning the representation to a task-appropriate complexity level supports the highest finetuning performance, and (2) in a human-participant study, users were able to identify the appropriate complexity level for a downstream task using visualizations of discrete representations. Our results indicate a promising direction for rapid model finetuning by leveraging human insight.

Handling Climate Change Using Counterfactuals: Using Counterfactuals in Data Augmentation to Predict Crop Growth in an Uncertain Climate Future

Apr 08, 2021

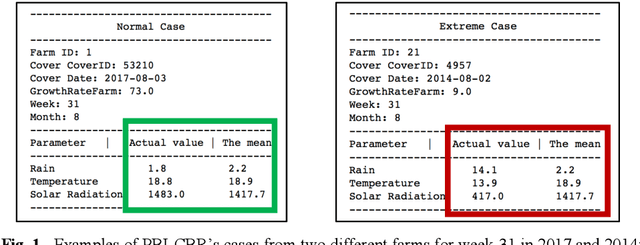

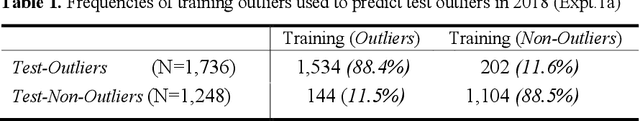

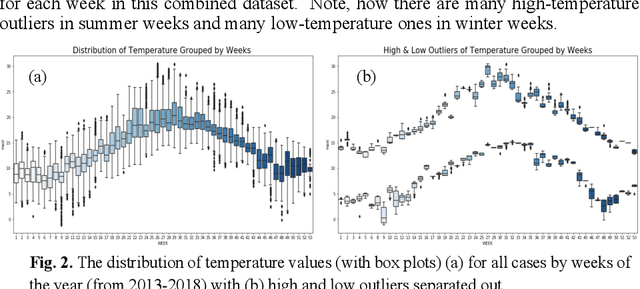

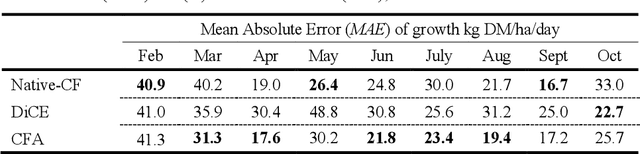

Abstract:Climate change poses a major challenge to humanity, especially in its impact on agriculture, a challenge that a responsible AI should meet. In this paper, we examine a CBR system (PBI-CBR) designed to aid sustainable dairy farming by supporting grassland management, through accurate crop growth prediction. As climate changes, PBI-CBRs historical cases become less useful in predicting future grass growth. Hence, we extend PBI-CBR using data augmentation, to specifically handle disruptive climate events, using a counterfactual method (from XAI). Study 1 shows that historical, extreme climate-events (climate outlier cases) tend to be used by PBI-CBR to predict grass growth during climate disrupted periods. Study 2 shows that synthetic outliers, generated as counterfactuals on a outlier-boundary, improve the predictive accuracy of PBICBR, during the drought of 2018. This study also shows that an instance-based counterfactual method does better than a benchmark, constraint-guided method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge