D. Hyunchul Shim

Words to Wheels: Vision-Based Autonomous Driving Understanding Human Language Instructions Using Foundation Models

Oct 14, 2024

Abstract:This paper introduces an innovative application of foundation models, enabling Unmanned Ground Vehicles (UGVs) equipped with an RGB-D camera to navigate to designated destinations based on human language instructions. Unlike learning-based methods, this approach does not require prior training but instead leverages existing foundation models, thus facilitating generalization to novel environments. Upon receiving human language instructions, these are transformed into a 'cognitive route description' using a large language model (LLM)-a detailed navigation route expressed in human language. The vehicle then decomposes this description into landmarks and navigation maneuvers. The vehicle also determines elevation costs and identifies navigability levels of different regions through a terrain segmentation model, GANav, trained on open datasets. Semantic elevation costs, which take both elevation and navigability levels into account, are estimated and provided to the Model Predictive Path Integral (MPPI) planner, responsible for local path planning. Concurrently, the vehicle searches for target landmarks using foundation models, including YOLO-World and EfficientViT-SAM. Ultimately, the vehicle executes the navigation commands to reach the designated destination, the final landmark. Our experiments demonstrate that this application successfully guides UGVs to their destinations following human language instructions in novel environments, such as unfamiliar terrain or urban settings.

Topological Exploration using Segmented Map with Keyframe Contribution in Subterranean Environments

Sep 15, 2023

Abstract:Existing exploration algorithms mainly generate frontiers using random sampling or motion primitive methods within a specific sensor range or search space. However, frontiers generated within constrained spaces lead to back-and-forth maneuvers in large-scale environments, thereby diminishing exploration efficiency. To address this issue, we propose a method that utilizes a 3D dense map to generate Segmented Exploration Regions (SERs) and generate frontiers from a global-scale perspective. In particular, this paper presents a novel topological map generation approach that fully utilizes Line-of-Sight (LOS) features of LiDAR sensor points to enhance exploration efficiency inside large-scale subterranean environments. Our topological map contains the contributions of keyframes that generate each SER, enabling rapid exploration through a switch between local path planning and global path planning to each frontier. The proposed method achieved higher explored volume generation than the state-of-the-art algorithm in a large-scale simulation environment and demonstrated a 62% improvement in explored volume increment performance. For validation, we conducted field tests using UAVs in real subterranean environments, demonstrating the efficiency and speed of our method.

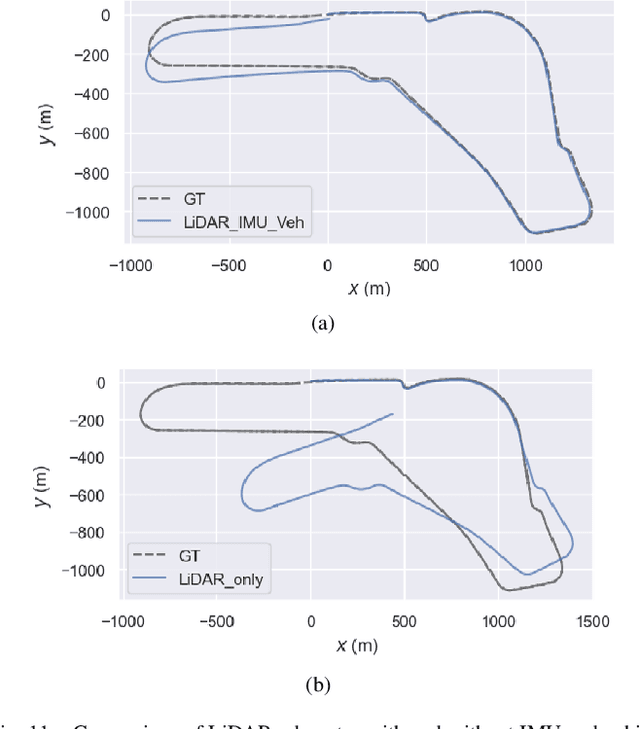

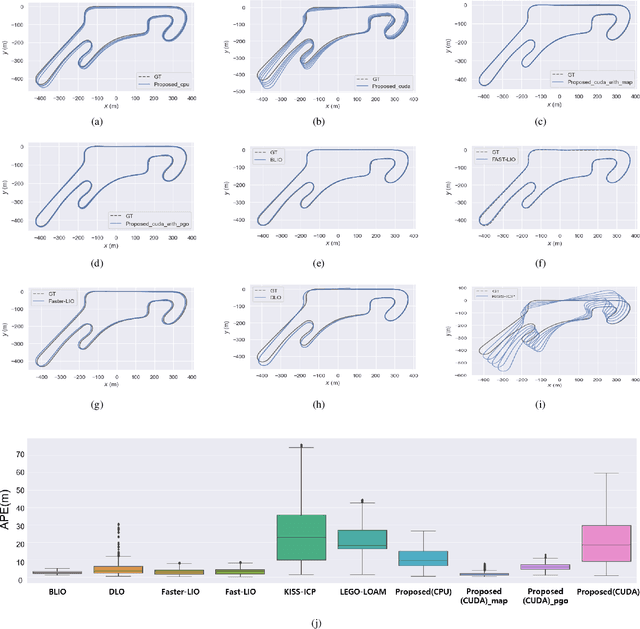

Enhancing State Estimator for Autonomous Race Car : Leveraging Multi-modal System and Managing Computing Resources

Aug 14, 2023

Abstract:This paper introduces an innovative approach to enhance the state estimator for high-speed autonomous race cars, addressing challenges related to unreliable measurements, localization failures, and computing resource management. The proposed robust localization system utilizes a Bayesian-based probabilistic approach to evaluate multimodal measurements, ensuring the use of credible data for accurate and reliable localization, even in harsh racing conditions. To tackle potential localization failures during intense racing, we present a resilient navigation system. This system enables the race car to continue track-following by leveraging direct perception information in planning and execution, ensuring continuous performance despite localization disruptions. Efficient computing resource management is critical to avoid overload and system failure. We optimize computing resources using an efficient LiDAR-based state estimation method. Leveraging CUDA programming and GPU acceleration, we perform nearest points search and covariance computation efficiently, overcoming CPU bottlenecks. Real-world and simulation tests validate the system's performance and resilience. The proposed approach successfully recovers from failures, effectively preventing accidents and ensuring race car safety.

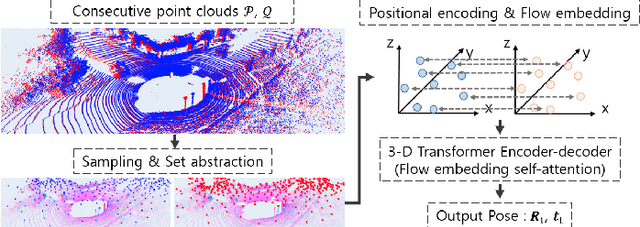

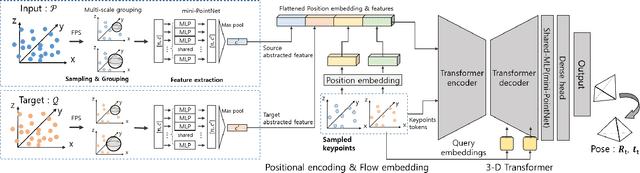

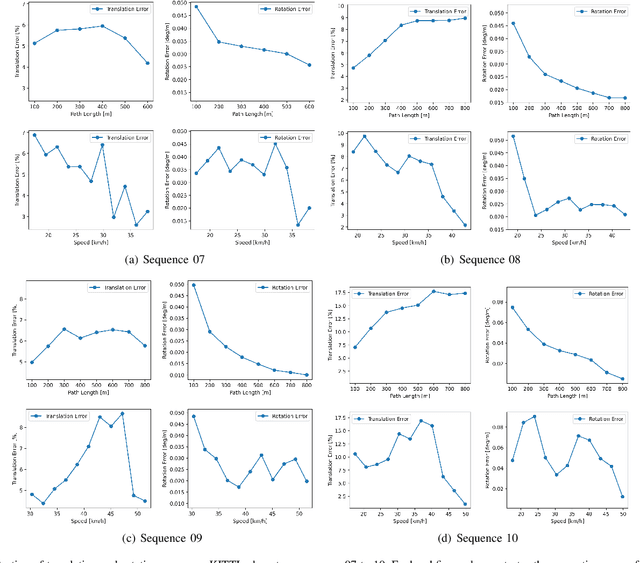

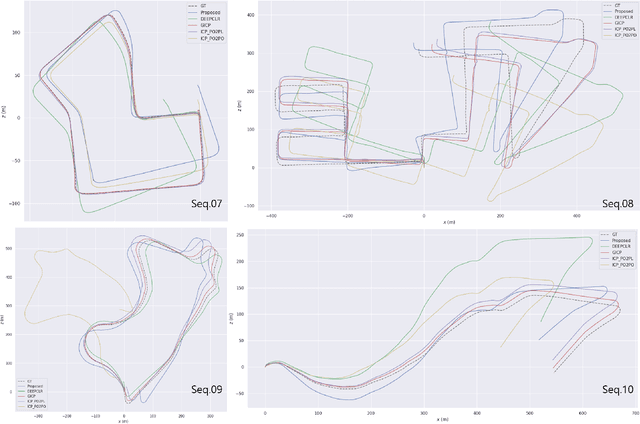

ELiOT : End-to-end Lidar Odometry using Transformer Framework

Jul 31, 2023

Abstract:In recent years, deep-learning-based point cloud registration methods have shown significant promise. Furthermore, learning-based 3D detectors have demonstrated their effectiveness in encoding semantic information from LiDAR data. In this paper, we introduce ELiOT, an end-to-end LiDAR odometry framework built on a transformer architecture. Our proposed Self-attention flow embedding network implicitly represents the motion of sequential LiDAR scenes, bypassing the need for 3D-2D projections traditionally used in such tasks. The network pipeline, composed of a 3D transformer encoder-decoder, has shown effectiveness in predicting poses on urban datasets. In terms of translational and rotational errors, our proposed method yields encouraging results, with 7.59% and 2.67% respectively on the KITTI odometry dataset. This is achieved with an end-to-end approach that foregoes the need for conventional geometric concepts.

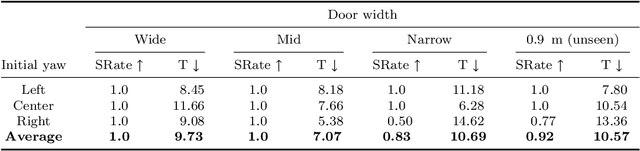

A Versatile Door Opening System with Mobile Manipulator through Adaptive Position-Force Control and Reinforcement Learning

Jul 10, 2023

Abstract:The ability of robots to navigate through doors is crucial for their effective operation in indoor environments. Consequently, extensive research has been conducted to develop robots capable of opening specific doors. However, the diverse combinations of door handles and opening directions necessitate a more versatile door opening system for robots to successfully operate in real-world environments. In this paper, we propose a mobile manipulator system that can autonomously open various doors without prior knowledge. By using convolutional neural networks, point cloud extraction techniques, and external force measurements during exploratory motion, we obtained information regarding handle types, poses, and door characteristics. Through two different approaches, adaptive position-force control and deep reinforcement learning, we successfully opened doors without precise trajectory or excessive external force. The adaptive position-force control method involves moving the end-effector in the direction of the door opening while responding compliantly to external forces, ensuring safety and manipulator workspace. Meanwhile, the deep reinforcement learning policy minimizes applied forces and eliminates unnecessary movements, enabling stable operation across doors with different poses and widths. The RL-based approach outperforms the adaptive position-force control method in terms of compensating for external forces, ensuring smooth motion, and achieving efficient speed. It reduces the maximum force required by 3.27 times and improves motion smoothness by 1.82 times. However, the non-learning-based adaptive position-force control method demonstrates more versatility in opening a wider range of doors, encompassing revolute doors with four distinct opening directions and varying widths.

Design, Field Evaluation, and Traffic Analysis of a Competitive Autonomous Driving Model in a Congested Environment

Nov 07, 2022

Abstract:Recently, numerous studies have investigated cooperative traffic systems using the communication among vehicle-to-everything (V2X). Unfortunately, when multiple autonomous vehicles are deployed while exposed to communication failure, there might be a conflict of ideal conditions between various autonomous vehicles leading to adversarial situation on the roads. In South Korea, virtual and real-world urban autonomous multi-vehicle races were held in March and November of 2021, respectively. During the competition, multiple vehicles were involved simultaneously, which required maneuvers such as overtaking low-speed vehicles, negotiating intersections, and obeying traffic laws. In this study, we introduce a fully autonomous driving software stack to deploy a competitive driving model, which enabled us to win the urban autonomous multi-vehicle races. We evaluate module-based systems such as navigation, perception, and planning in real and virtual environments. Additionally, an analysis of traffic is performed after collecting multiple vehicle position data over communication to gain additional insight into a multi-agent autonomous driving scenario. Finally, we propose a method for analyzing traffic in order to compare the spatial distribution of multiple autonomous vehicles. We study the similarity distribution between each team's driving log data to determine the impact of competitive autonomous driving on the traffic environment.

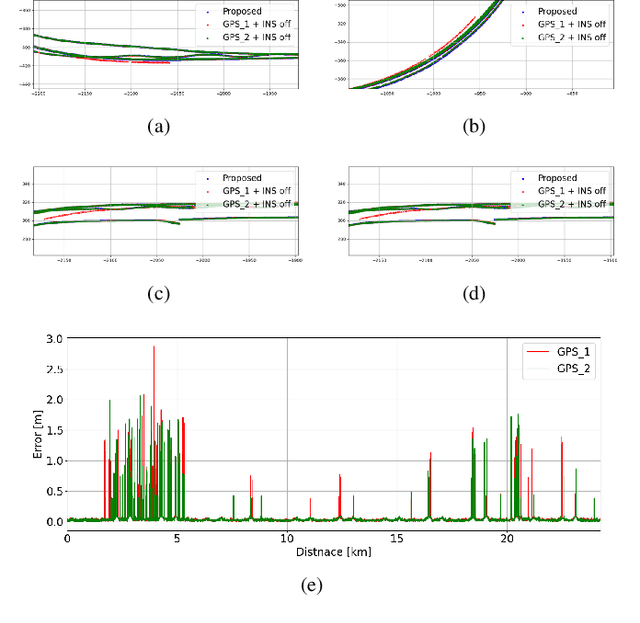

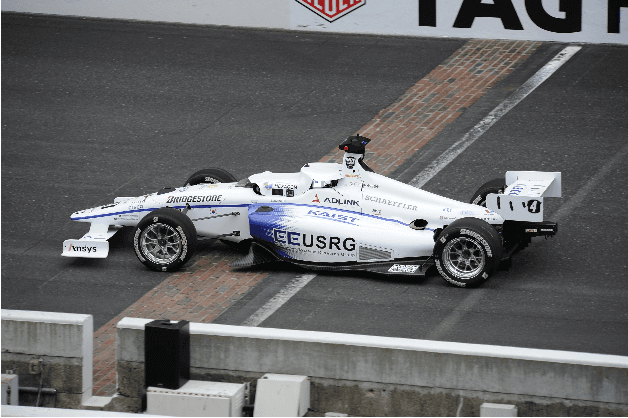

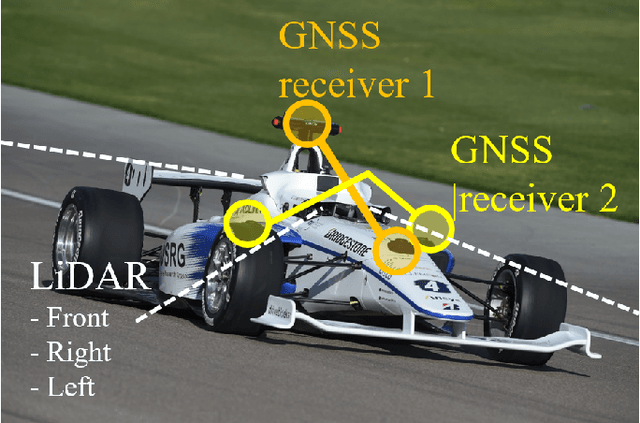

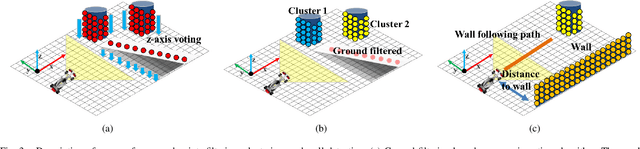

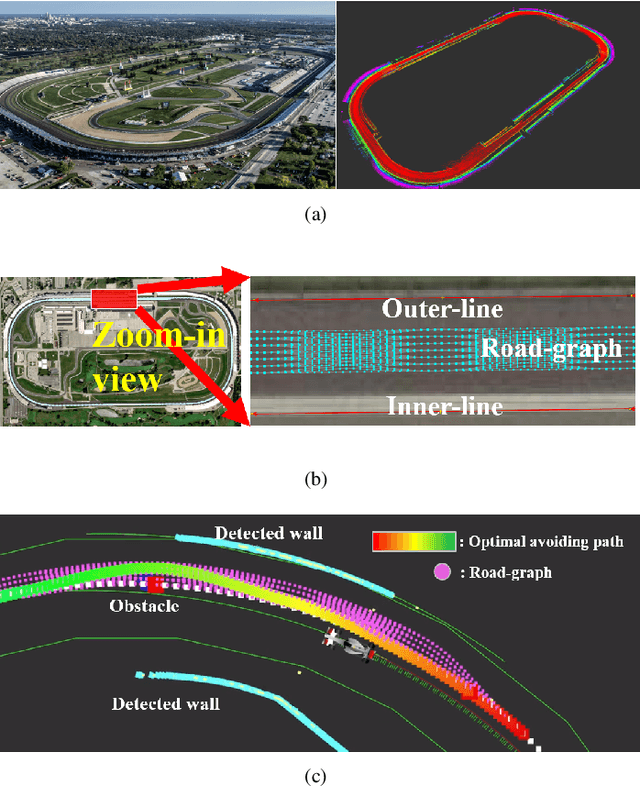

Resilient Navigation and Path Planning System for High-speed Autonomous Race Car

Jul 25, 2022

Abstract:This paper describes resilient navigation and planning algorithm for high-speed autonomous race, Indy Autonomous Challenge (IAC). The IAC is a competition with full-scale autonomous race car that can drive up to 290 km/h(180mph). Due to its high-speed and heavy vibration of the race car, GPS/INS system is prone to be degraded. These degraded GPS measurements can cause a critical localization error leading to a serious crashing accidents. To this end, we propose a robust navigation system to implement multi-sensor fusion Kalman filter. In this study, we present how to identify the degradation of measurement based on probabilistic approaches. Based on this approach, we could compute optimal measurement values for Kalman filter correction step. At the same time, we present the other resilient navigation system so that race car can follow the race track in fatal localization failure situations. In addition, this paper also covers the optimal path planning algorithm for obstacle avoidance. To take account for original optimal racing line, obstacles, vehicle dynamics, we propose a road-graph-based path planning algorithm to guarantee our race car drives in-bounded conditions. In the experiments, we will evaluate our designed localization system can handle the degraded data, and sometimes prevent serious crashing accidents while high-speed driving. In addition, we will describe how we successfully completed the obstacle avoidance challenge.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge