Congduan Li

Truncated Non-Uniform Quantization for Distributed SGD

Feb 02, 2024

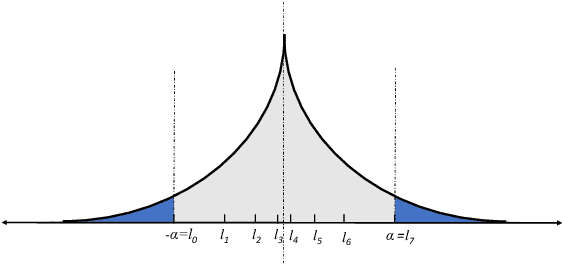

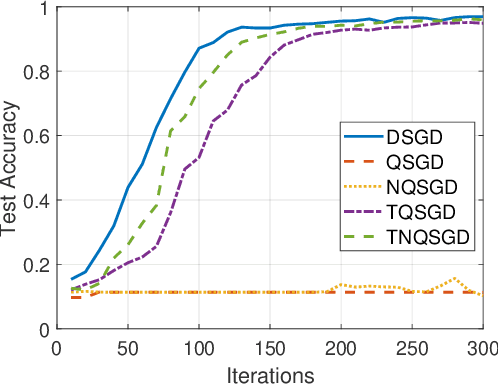

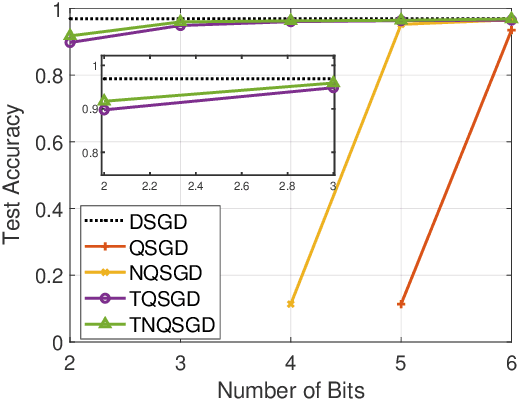

Abstract: To address the communication bottleneck in distributed learning, our work introduces a novel two-stage quantization strategy designed to enhance the communication efficiency of distributed Stochastic Gradient Descent (SGD). The proposed method first applies truncation to mitigate the impact of long-tail noise, then applies non-uniform quantization to the post-truncation gradients based on their statistical characteristics. We provide a comprehensive convergence analysis of the quantized distributed SGD, establishing theoretical guarantees for its performance. Furthermore, by minimizing the convergence error, we derive optimal closed-form solutions for the truncation threshold and non-uniform quantization levels under given communication constraints. Both theoretical insights and extensive experimental evaluations demonstrate that our proposed algorithm outperforms existing quantization schemes, striking a superior balance between communication efficiency and convergence performance.
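The two stages described in the abstract (truncate, then quantize non-uniformly) can be illustrated with a minimal sketch. It is not the paper's algorithm: the threshold `alpha`, the level set `levels`, and the use of unbiased stochastic rounding between neighbouring levels are all assumptions chosen for illustration, whereas the paper derives its threshold and levels in closed form.

```python
import random


def truncate(grad, alpha):
    """Clip every gradient component to the interval [-alpha, alpha]."""
    return [max(-alpha, min(alpha, g)) for g in grad]


def stochastic_quantize(value, levels, rng):
    """Round `value` to one of its two neighbouring levels.

    The upper level is chosen with probability proportional to how close
    `value` is to it, so the result is unbiased in expectation.
    `levels` must be sorted ascending and contain distinct values.
    """
    for lo, hi in zip(levels, levels[1:]):
        if lo <= value <= hi:
            p_hi = (value - lo) / (hi - lo)
            return hi if rng.random() < p_hi else lo
    raise ValueError("value lies outside the quantization range")


def truncated_quantize(grad, alpha, levels, rng):
    """Stage 1: truncate. Stage 2: quantize onto the non-uniform levels."""
    return [stochastic_quantize(g, levels, rng) for g in truncate(grad, alpha)]


rng = random.Random(0)
alpha = 1.0  # hypothetical truncation threshold
# Hypothetical non-uniform levels, denser near zero where most gradient mass lies.
levels = [-1.0, -0.4, -0.1, 0.0, 0.1, 0.4, 1.0]
grad = [0.03, -0.25, 2.7, -5.0, 0.6]  # the two outliers mimic long-tail noise

quantized = truncated_quantize(grad, alpha, levels, rng)
print(quantized)
```

In this sketch the outliers `2.7` and `-5.0` always map to the extreme levels `1.0` and `-1.0`, while the remaining components land on one of their two neighbouring levels at random. Each component can then be sent as a level index rather than a full-precision float.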

Towards Communication Efficient and Fair Federated Personalized Sequential Recommendation

Aug 24, 2022

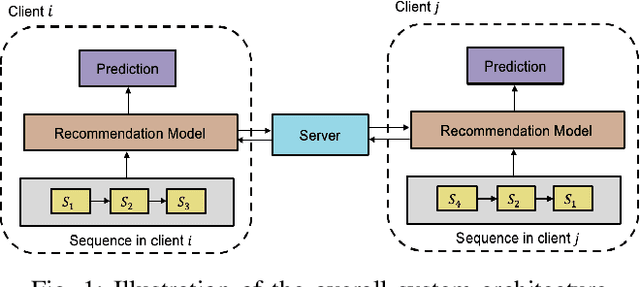

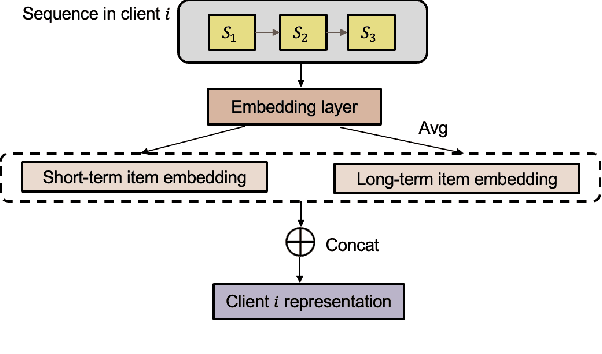

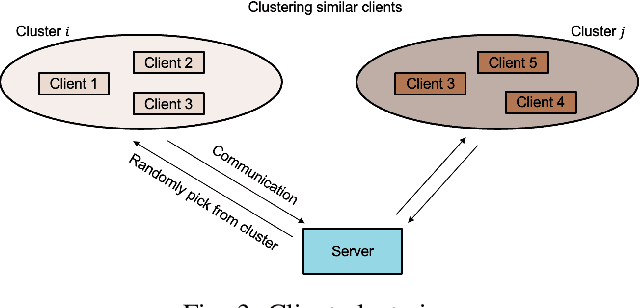

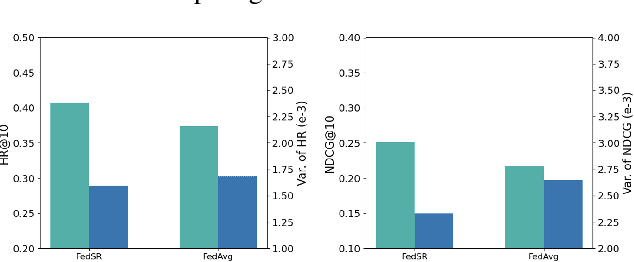

Abstract: Federated recommendation leverages federated learning (FL) techniques to make privacy-preserving recommendations. Despite recent successes in federated recommender systems, several vital challenges remain to be addressed: (i) The majority of federated recommendation models only consider model performance and privacy-preserving ability, while ignoring the optimization of the communication process; (ii) Most federated recommenders are designed for heterogeneous systems, causing unfairness problems during the federation process; (iii) Personalization techniques have been less explored in many federated recommender systems. In this paper, we propose a Communication efficient and Fair Federated personalized Sequential Recommendation algorithm (CF-FedSR) to tackle these challenges. CF-FedSR introduces a communication-efficient scheme that employs adaptive client selection and clustering-based sampling to accelerate the training process. To address the unfairness problems, we propose a fairness-aware model aggregation algorithm that adaptively captures the data and performance imbalance among different clients. The personalization module assists clients in making personalized recommendations and boosts recommendation performance via local fine-tuning and model adaptation. Extensive experimental results show the effectiveness and efficiency of our proposed method.

The Tradeoff Between Privacy and Accuracy in Anomaly Detection Using Federated XGBoost

Jul 16, 2019

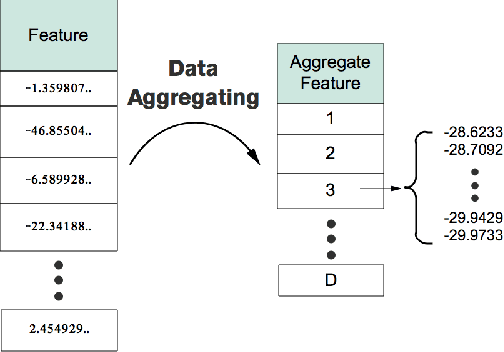

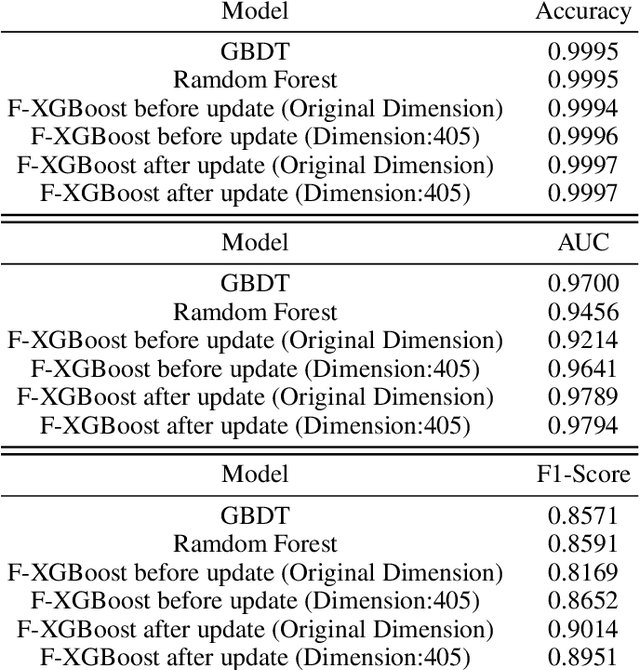

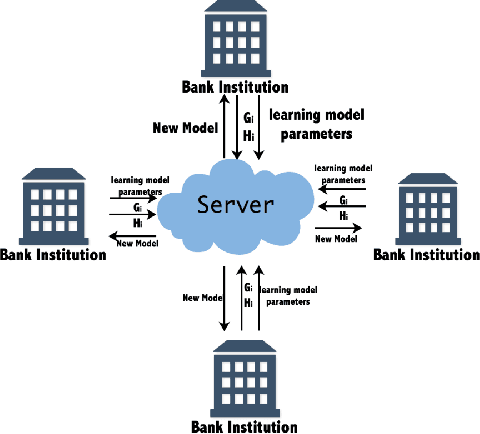

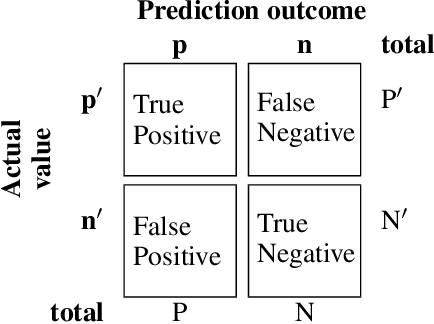

Abstract: Privacy has raised considerable concerns recently, especially with the advent of the information explosion and the numerous data mining techniques used to explore the information inside large volumes of data. In this context, a new distributed learning paradigm termed federated learning has recently become prominent as a way to tackle the privacy issues in distributed learning: only learning models are transmitted from the distributed nodes to servers, without revealing users' own data, thereby protecting the privacy of users. In this paper, we propose a horizontal federated XGBoost algorithm to solve the federated anomaly detection problem, where anomaly detection aims to identify abnormalities in extremely unbalanced datasets and can be considered a special classification problem. Our proposed federated XGBoost algorithm incorporates data aggregation and sparse federated update processes to balance the tradeoff between privacy and learning performance. In particular, we introduce the virtual data sample, formed by aggregating a group of users' data together at a single distributed node. We compute parameters based on these virtual data samples in the local nodes and aggregate the learning model in the central server. In the learning model update process, we focus more on the previously misclassified data in the virtual samples and hence generate sparse learning model parameters. By carefully controlling the size of these groups of samples, we can achieve a tradeoff between privacy and learning performance. Our experimental results show the effectiveness of our proposed scheme in comparison with existing state-of-the-art methods.
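The virtual data sample idea can be sketched as follows. The abstract does not say how a group of users' data is aggregated, so this example assumes a simple feature-wise mean over consecutive groups; the function name `make_virtual_samples` and the parameter `group_size` are hypothetical.

```python
def make_virtual_samples(rows, group_size):
    """Average consecutive groups of feature rows into virtual samples.

    Assumption: aggregation is a feature-wise mean. Larger groups hide
    individual records better but discard more detail, which is the
    privacy/performance tradeoff the abstract describes.
    """
    if group_size < 1:
        raise ValueError("group_size must be at least 1")
    virtual = []
    for start in range(0, len(rows), group_size):
        group = rows[start:start + group_size]
        n_features = len(group[0])
        virtual.append(
            [sum(row[j] for row in group) / len(group) for j in range(n_features)]
        )
    return virtual


# Five users with two features each, held at a single node.
rows = [[1.0, 10.0], [3.0, 30.0], [5.0, 50.0], [7.0, 70.0], [9.0, 90.0]]
virtual = make_virtual_samples(rows, group_size=2)
print(virtual)  # [[2.0, 20.0], [6.0, 60.0], [9.0, 90.0]]
```

With `group_size=1` the virtual samples equal the raw records (no added privacy), while a `group_size` equal to the number of records collapses the node's data to a single averaged sample.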
