Chunyao Lu

Foundation Models in Medical Imaging -- A Review and Outlook

Jun 10, 2025Abstract:Foundation models (FMs) are changing the way medical images are analyzed by learning from large collections of unlabeled data. Instead of relying on manually annotated examples, FMs are pre-trained to learn general-purpose visual features that can later be adapted to specific clinical tasks with little additional supervision. In this review, we examine how FMs are being developed and applied in pathology, radiology, and ophthalmology, drawing on evidence from over 150 studies. We explain the core components of FM pipelines, including model architectures, self-supervised learning methods, and strategies for downstream adaptation. We also review how FMs are being used in each imaging domain and compare design choices across applications. Finally, we discuss key challenges and open questions to guide future research.

Ordinal Learning: Longitudinal Attention Alignment Model for Predicting Time to Future Breast Cancer Events from Mammograms

Sep 10, 2024Abstract:Precision breast cancer (BC) risk assessment is crucial for developing individualized screening and prevention. Despite the promising potential of recent mammogram (MG) based deep learning models in predicting BC risk, they mostly overlook the 'time-to-future-event' ordering among patients and exhibit limited explorations into how they track history changes in breast tissue, thereby limiting their clinical application. In this work, we propose a novel method, named OA-BreaCR, to precisely model the ordinal relationship of the time to and between BC events while incorporating longitudinal breast tissue changes in a more explainable manner. We validate our method on public EMBED and inhouse datasets, comparing with existing BC risk prediction and time prediction methods. Our ordinal learning method OA-BreaCR outperforms existing methods in both BC risk and time-to-future-event prediction tasks. Additionally, ordinal heatmap visualizations show the model's attention over time. Our findings underscore the importance of interpretable and precise risk assessment for enhancing BC screening and prevention efforts. The code will be accessible to the public.

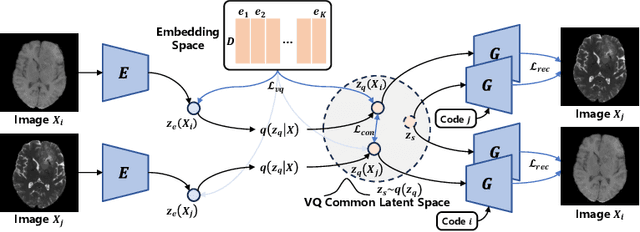

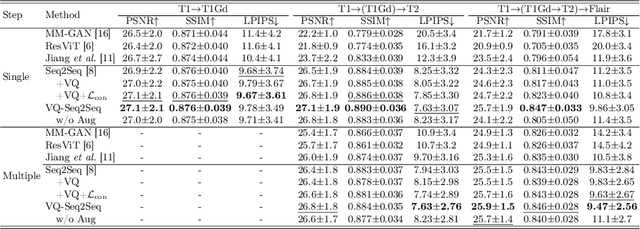

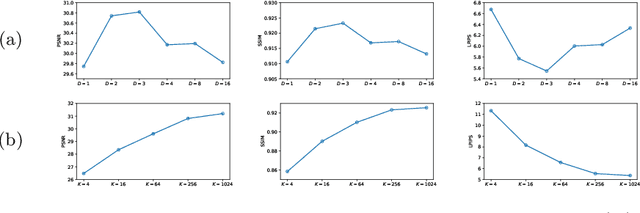

Non-Adversarial Learning: Vector-Quantized Common Latent Space for Multi-Sequence MRI

Jul 03, 2024

Abstract:Adversarial learning helps generative models translate MRI from source to target sequence when lacking paired samples. However, implementing MRI synthesis with adversarial learning in clinical settings is challenging due to training instability and mode collapse. To address this issue, we leverage intermediate sequences to estimate the common latent space among multi-sequence MRI, enabling the reconstruction of distinct sequences from the common latent space. We propose a generative model that compresses discrete representations of each sequence to estimate the Gaussian distribution of vector-quantized common (VQC) latent space between multiple sequences. Moreover, we improve the latent space consistency with contrastive learning and increase model stability by domain augmentation. Experiments using BraTS2021 dataset show that our non-adversarial model outperforms other GAN-based methods, and VQC latent space aids our model to achieve (1) anti-interference ability, which can eliminate the effects of noise, bias fields, and artifacts, and (2) solid semantic representation ability, with the potential of one-shot segmentation. Our code is publicly available.

DisAsymNet: Disentanglement of Asymmetrical Abnormality on Bilateral Mammograms using Self-adversarial Learning

Jul 06, 2023

Abstract:Asymmetry is a crucial characteristic of bilateral mammograms (Bi-MG) when abnormalities are developing. It is widely utilized by radiologists for diagnosis. The question of 'what the symmetrical Bi-MG would look like when the asymmetrical abnormalities have been removed ?' has not yet received strong attention in the development of algorithms on mammograms. Addressing this question could provide valuable insights into mammographic anatomy and aid in diagnostic interpretation. Hence, we propose a novel framework, DisAsymNet, which utilizes asymmetrical abnormality transformer guided self-adversarial learning for disentangling abnormalities and symmetric Bi-MG. At the same time, our proposed method is partially guided by randomly synthesized abnormalities. We conduct experiments on three public and one in-house dataset, and demonstrate that our method outperforms existing methods in abnormality classification, segmentation, and localization tasks. Additionally, reconstructed normal mammograms can provide insights toward better interpretable visual cues for clinical diagnosis. The code will be accessible to the public.

An Explainable Deep Framework: Towards Task-Specific Fusion for Multi-to-One MRI Synthesis

Jul 03, 2023Abstract:Multi-sequence MRI is valuable in clinical settings for reliable diagnosis and treatment prognosis, but some sequences may be unusable or missing for various reasons. To address this issue, MRI synthesis is a potential solution. Recent deep learning-based methods have achieved good performance in combining multiple available sequences for missing sequence synthesis. Despite their success, these methods lack the ability to quantify the contributions of different input sequences and estimate the quality of generated images, making it hard to be practical. Hence, we propose an explainable task-specific synthesis network, which adapts weights automatically for specific sequence generation tasks and provides interpretability and reliability from two sides: (1) visualize the contribution of each input sequence in the fusion stage by a trainable task-specific weighted average module; (2) highlight the area the network tried to refine during synthesizing by a task-specific attention module. We conduct experiments on the BraTS2021 dataset of 1251 subjects, and results on arbitrary sequence synthesis indicate that the proposed method achieves better performance than the state-of-the-art methods. Our code is available at \url{https://github.com/fiy2W/mri_seq2seq}.

Synthesis of Contrast-Enhanced Breast MRI Using Multi-b-Value DWI-based Hierarchical Fusion Network with Attention Mechanism

Jul 03, 2023

Abstract:Magnetic resonance imaging (MRI) is the most sensitive technique for breast cancer detection among current clinical imaging modalities. Contrast-enhanced MRI (CE-MRI) provides superior differentiation between tumors and invaded healthy tissue, and has become an indispensable technique in the detection and evaluation of cancer. However, the use of gadolinium-based contrast agents (GBCA) to obtain CE-MRI may be associated with nephrogenic systemic fibrosis and may lead to bioaccumulation in the brain, posing a potential risk to human health. Moreover, and likely more important, the use of gadolinium-based contrast agents requires the cannulation of a vein, and the injection of the contrast media which is cumbersome and places a burden on the patient. To reduce the use of contrast agents, diffusion-weighted imaging (DWI) is emerging as a key imaging technique, although currently usually complementing breast CE-MRI. In this study, we develop a multi-sequence fusion network to synthesize CE-MRI based on T1-weighted MRI and DWIs. DWIs with different b-values are fused to efficiently utilize the difference features of DWIs. Rather than proposing a pure data-driven approach, we invent a multi-sequence attention module to obtain refined feature maps, and leverage hierarchical representation information fused at different scales while utilizing the contributions from different sequences from a model-driven approach by introducing the weighted difference module. The results show that the multi-b-value DWI-based fusion model can potentially be used to synthesize CE-MRI, thus theoretically reducing or avoiding the use of GBCA, thereby minimizing the burden to patients. Our code is available at \url{https://github.com/Netherlands-Cancer-Institute/CE-MRI}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge