Christos Mousas

Game-Based and Gamified Robotics Education: A Comparative Systematic Review and Design Guidelines

Jan 29, 2026

Abstract: Robotics education fosters computational thinking, creativity, and problem-solving, but remains challenging due to its technical complexity. Game-based learning (GBL) and gamification offer engagement benefits, yet their comparative impact remains unclear. We present the first PRISMA-aligned systematic review and comparative synthesis of GBL and gamification in robotics education, analyzing 95 studies drawn from 12,485 records across four databases (2014-2025). We coded each study's approach, learning context, skill level, modality, pedagogy, and outcomes (κ = .918). Three patterns emerged: (1) approach-context-pedagogy coupling (GBL was more prevalent in informal settings, while gamification dominated formal classrooms [p < .001] and favored project-based learning [p = .009]); (2) an emphasis on introductory programming and modular kits, with limited adoption of advanced software (~17%), advanced hardware (~5%), or immersive technologies (~22%); and (3) short study horizons and a reliance on self-report. We propose eight research directions and a design space outlining best practices and pitfalls, offering actionable guidance for robotics education.

Co-design of Embodied Neural Intelligence via Constrained Evolution

May 21, 2022

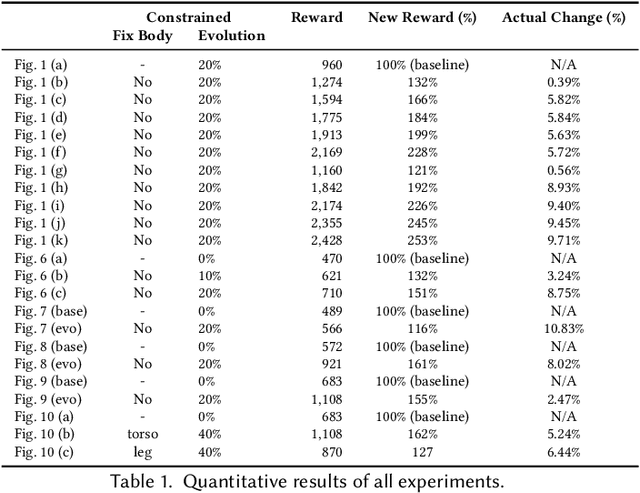

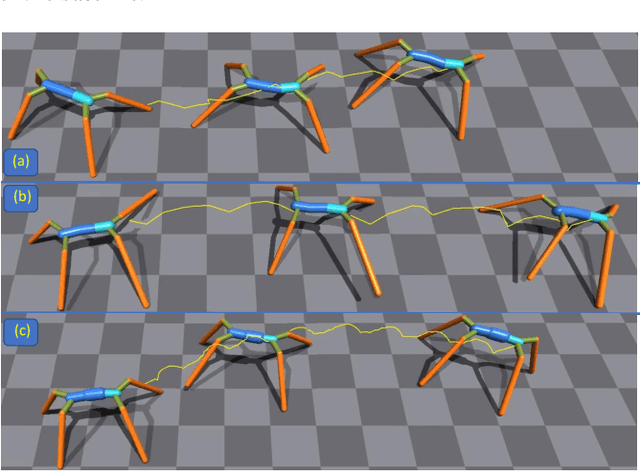

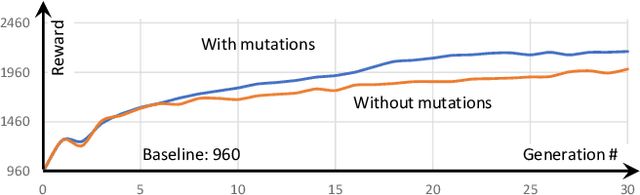

Abstract: We introduce a novel method for co-designing the shape attributes and locomotion of autonomous moving agents by combining deep reinforcement learning and evolution with user control. Our main inspiration comes from evolution, which has produced wide variability and adaptation in nature and has the potential to significantly improve design and behavior simultaneously. Our method takes an input agent with optional simple constraints, such as leg parts that must not evolve or allowed ranges of change. It uses physics-based simulation to determine the agent's locomotion and finds a behavior policy for the input design, which later serves as a baseline for comparison. The agent is then randomly modified within the allowed ranges, creating a new generation of several hundred agents. This generation is trained by transferring the previous policy, which significantly speeds up training. The best-performing agents are selected, and a new generation is formed through their crossover and mutation. Subsequent generations are trained in the same way until satisfactory results are reached. We show a wide variety of evolved agents, and our results show that even when changes are limited to 10%, the overall performance of the evolved agents improves by 50%. When larger changes to the initial design are allowed, performance in our experiments improves further, by 150%. Unlike related work, our co-design method runs on a single GPU and produces satisfactory results by training thousands of agents within one hour.
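The generational loop described above (random modification within allowed ranges, selection of the best agents, then crossover and mutation) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the step that trains each agent's policy in physics simulation, including policy transfer, is replaced by a hypothetical `toy_fitness` function, and `ParamSpec`, `mutate`, `crossover`, `evolve`, the Gaussian mutation scale, the elite size, and uniform crossover are all illustrative choices.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class ParamSpec:
    """Allowed range for one shape parameter (hypothetical encoding)."""
    low: float
    high: float
    frozen: bool = False  # user constraint: this part must not evolve


def mutate(design, specs, rate, rng):
    """Perturb each non-frozen parameter with probability `rate`, clamped to its range."""
    child = dict(design)
    for name, spec in specs.items():
        if spec.frozen or rng.random() >= rate:
            continue
        span = spec.high - spec.low
        value = child[name] + rng.gauss(0.0, 0.25 * span)
        child[name] = min(spec.high, max(spec.low, value))
    return child


def crossover(a, b, specs, rng):
    """Uniform crossover: each parameter is copied from one parent chosen at random."""
    return {name: (a if rng.random() < 0.5 else b)[name] for name in specs}


def evolve(initial, specs, fitness, pop_size=200, n_elite=20, generations=10, seed=0):
    """Constrained evolutionary loop; `fitness` stands in for training a policy in simulation."""
    rng = random.Random(seed)
    best, best_score = initial, fitness(initial)  # the input design is the baseline
    # First generation: random modifications of the input design within the allowed ranges.
    population = [mutate(initial, specs, 1.0, rng) for _ in range(pop_size)]
    for _ in range(generations):
        elite = sorted(population, key=fitness, reverse=True)[:n_elite]
        top_score = fitness(elite[0])
        if top_score > best_score:
            best, best_score = elite[0], top_score
        # Next generation: crossover of selected agents, followed by mutation.
        population = [
            mutate(crossover(rng.choice(elite), rng.choice(elite), specs, rng), specs, 0.3, rng)
            for _ in range(pop_size)
        ]
    return best, best_score


# Usage: a two-segment leg whose lengths may change by at most +/-10%, with a frozen foot.
specs = {
    "thigh_length": ParamSpec(0.36, 0.44),
    "shin_length": ParamSpec(0.36, 0.44),
    "foot_length": ParamSpec(0.20, 0.20, frozen=True),
}
initial = {"thigh_length": 0.40, "shin_length": 0.40, "foot_length": 0.20}


def toy_fitness(design):
    # Hypothetical stand-in for the return of a trained locomotion policy.
    return design["thigh_length"] + design["shin_length"]


best, score = evolve(initial, specs, toy_fitness)
print(best, score)
```

Clamping inside `mutate` is what keeps every offspring within the user's allowed ranges, and frozen parts are skipped entirely, so crossover between any two agents can never violate a constraint.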

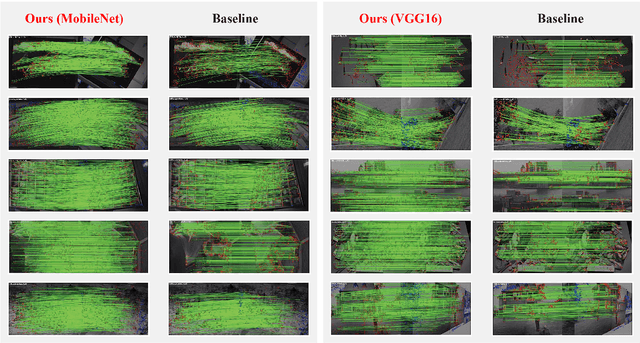

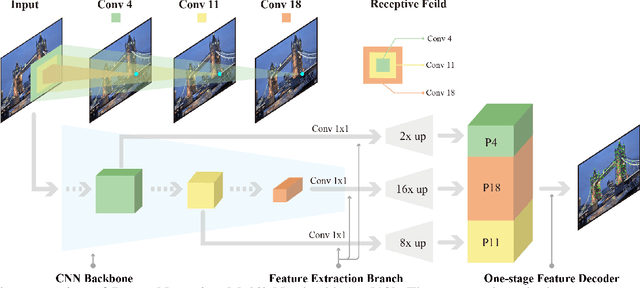

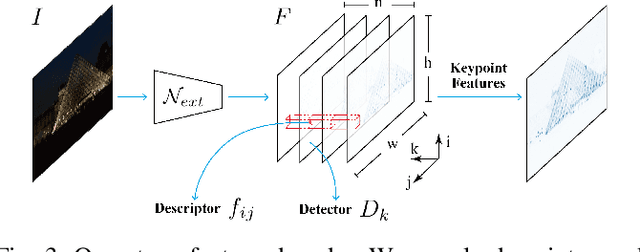

DenserNet: Weakly Supervised Visual Localization Using Multi-scale Feature Aggregation

Dec 31, 2020

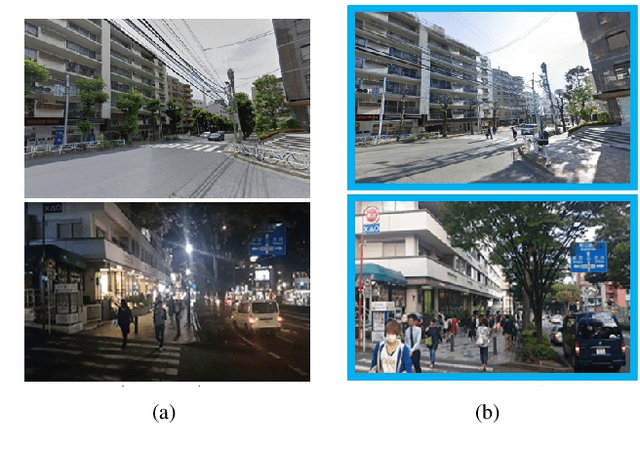

Abstract: In this work, we introduce a Denser Feature Network (DenserNet) for visual localization. Our work makes three principal contributions. First, we develop a convolutional neural network (CNN) architecture that aggregates feature maps at different semantic levels to form image representations. Using denser feature maps, our method can produce more keypoint features and increase image retrieval accuracy. Second, our model is trained end-to-end without pixel-level annotation, using only positive and negative GPS-tagged image pairs. We use a weakly supervised triplet ranking loss to learn discriminative features and encourage keypoint feature repeatability for image representation. Finally, our method is computationally efficient because our architecture shares features and parameters during computation. Our method performs accurate large-scale localization under challenging conditions while remaining within computational constraints. Extensive experimental results indicate that our method sets a new state of the art on four challenging large-scale localization benchmarks and three image retrieval benchmarks.
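The weakly supervised triplet ranking loss can be illustrated with a short sketch. We assume the formulation popularized by NetVLAD (Arandjelović et al.): GPS-nearby images are only potential positives, so the closest one to the query is taken as the positive, and a hinge is applied against every GPS-distant negative. DenserNet's exact loss may differ, and the keypoint-repeatability objective mentioned above is not modeled. The names `sq_dist` and `weak_triplet_loss` and the default margin are illustrative choices.

```python
from typing import Sequence

Vector = Sequence[float]


def sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two image descriptors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def weak_triplet_loss(
    query: Vector,
    positives: Sequence[Vector],
    negatives: Sequence[Vector],
    margin: float = 0.1,
) -> float:
    """Weakly supervised triplet ranking loss (NetVLAD-style formulation, assumed here)."""
    # GPS proximity does not guarantee visual overlap, so trust only the best-matching positive.
    d_pos = min(sq_dist(query, p) for p in positives)
    # Penalize each negative that is not at least `margin` farther away than that positive.
    return sum(max(0.0, d_pos + margin - sq_dist(query, n)) for n in negatives)


# Usage with toy 2-D descriptors.
query = [1.0, 0.0]
positives = [[0.0, 1.0], [0.9, 0.1]]   # GPS-nearby; only the second actually matches
negatives = [[0.8, 0.2], [-1.0, 0.0]]  # GPS-distant; the first is a hard negative
loss = weak_triplet_loss(query, positives, negatives)
print(loss)
```

Taking the minimum over potential positives is what makes the supervision weak: the network is never told which nearby image truly matches, only that at least one should rank above every distant image.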
