Christopher Diehl

LoRD: Adapting Differentiable Driving Policies to Distribution Shifts

Oct 15, 2024

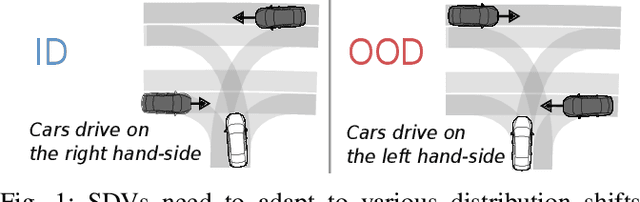

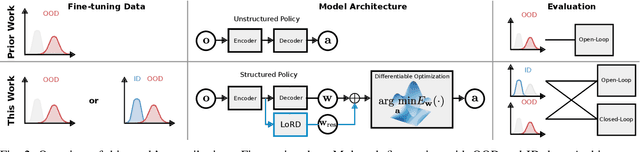

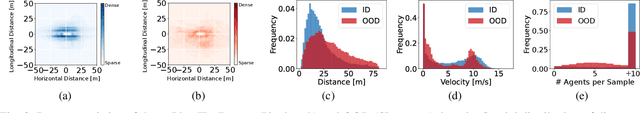

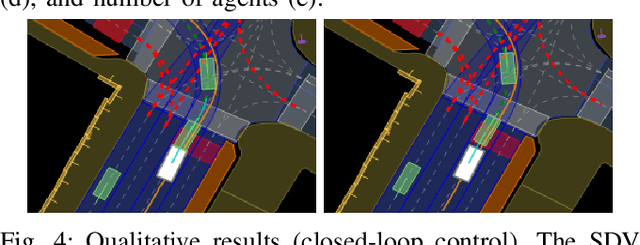

Abstract: Distribution shifts between operational domains can severely affect the performance of learned models in self-driving vehicles (SDVs). While this is a well-established problem, prior work has mostly explored naive solutions such as fine-tuning, focusing on the motion prediction task. In this work, we explore novel adaptation strategies for differentiable autonomy stacks consisting of prediction, planning, and control, perform evaluation in closed-loop, and investigate the often-overlooked issue of catastrophic forgetting. Specifically, we introduce two simple yet effective techniques: a low-rank residual decoder (LoRD) and multi-task fine-tuning. Through experiments across three models conducted on two real-world autonomous driving datasets (nuPlan, exiD), we demonstrate the effectiveness of our methods and highlight a significant performance gap between open-loop and closed-loop evaluation in prior approaches. Our approach improves forgetting by up to 23.33% and the closed-loop OOD driving score by 8.83% in comparison to standard fine-tuning.

Energy-based Potential Games for Joint Motion Forecasting and Control

Dec 04, 2023

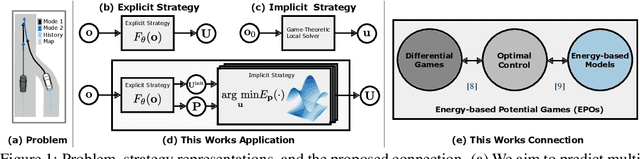

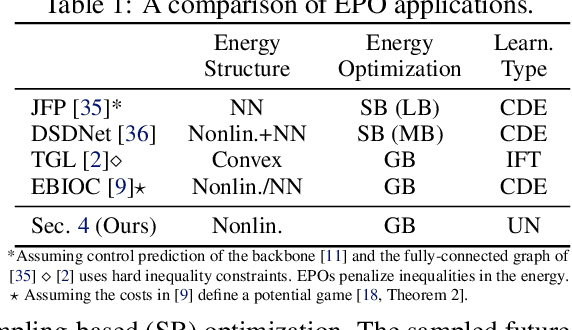

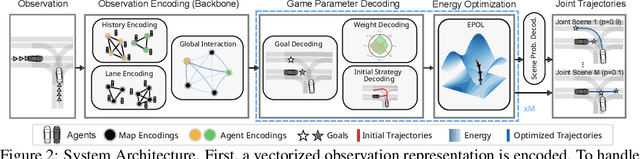

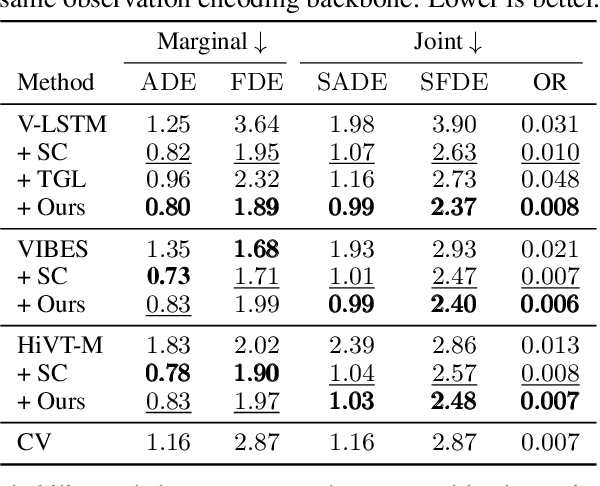

Abstract: This work uses game theory as a mathematical framework to address interaction modeling in multi-agent motion forecasting and control. Despite its interpretability, applying game theory to real-world robotics, like automated driving, faces challenges such as unknown game parameters. To tackle these, we establish a connection between differential games, optimal control, and energy-based models, demonstrating how existing approaches can be unified under our proposed Energy-based Potential Game formulation. Building upon this, we introduce a new end-to-end learning application that combines neural networks for game-parameter inference with a differentiable game-theoretic optimization layer, acting as an inductive bias. The analysis provides empirical evidence that the game-theoretic layer adds interpretability and improves the predictive performance of various neural network backbones using two simulations and two real-world driving datasets.

On a Connection between Differential Games, Optimal Control, and Energy-based Models for Multi-Agent Interactions

Aug 31, 2023

Abstract: Game theory offers an interpretable mathematical framework for modeling multi-agent interactions. However, its applicability in real-world robotics applications is hindered by several challenges, such as unknown agents' preferences and goals. To address these challenges, we show a connection between differential games, optimal control, and energy-based models and demonstrate how existing approaches can be unified under our proposed Energy-based Potential Game formulation. Building upon this formulation, this work introduces a new end-to-end learning application that combines neural networks for game-parameter inference with a differentiable game-theoretic optimization layer, acting as an inductive bias. The experiments using simulated mobile robot pedestrian interactions and real-world automated driving data provide empirical evidence that the game-theoretic layer improves the predictive performance of various neural network backbones.

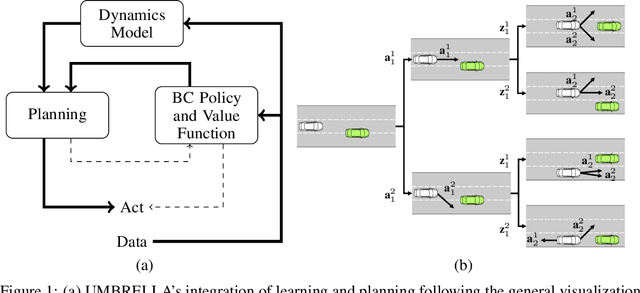

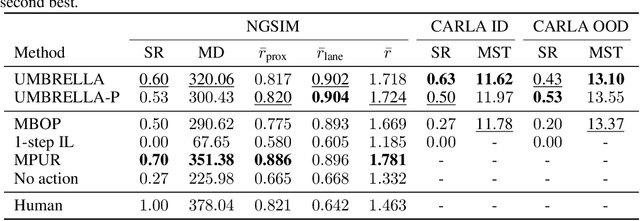

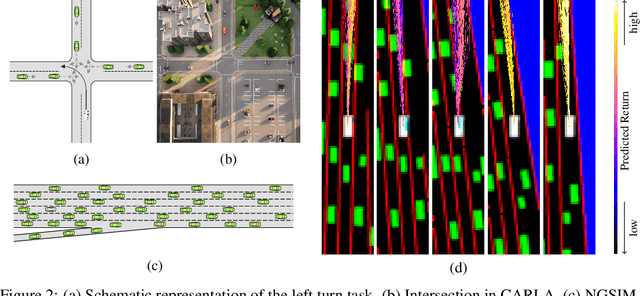

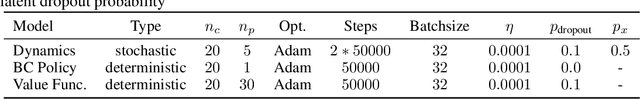

UMBRELLA: Uncertainty-Aware Model-Based Offline Reinforcement Learning Leveraging Planning

Nov 23, 2021

Abstract: Offline reinforcement learning (RL) provides a framework for learning decision-making from offline data and therefore constitutes a promising approach for real-world applications such as automated driving. Self-driving vehicles (SDVs) learn a policy, which potentially even outperforms the behavior in the sub-optimal data set. Especially in safety-critical applications such as automated driving, explainability and transferability are key to success. This motivates the use of model-based offline RL approaches, which leverage planning. However, current state-of-the-art methods often neglect the influence of aleatoric uncertainty arising from the stochastic behavior of multi-agent systems. This work proposes a novel approach for Uncertainty-aware Model-Based Offline REinforcement Learning Leveraging plAnning (UMBRELLA), which solves the prediction, planning, and control problem of the SDV jointly in an interpretable learning-based fashion. A trained action-conditioned stochastic dynamics model captures distinctively different future evolutions of the traffic scene. The analysis provides empirical evidence for the effectiveness of our approach in challenging automated driving simulations and on a real-world public dataset.

Radar-based Dynamic Occupancy Grid Mapping and Object Detection

Aug 09, 2020

Abstract: Environment modeling utilizing sensor data fusion and object tracking is crucial for safe automated driving. In recent years, the classical occupancy grid map approach, which assumes a static environment, has been extended to dynamic occupancy grid maps, which maintain the possibility of a low-level data fusion while also estimating the position and velocity distribution of the dynamic local environment. This paper presents the further development of a previous approach. To the best of the author's knowledge, there is no publication about dynamic occupancy grid mapping with subsequent analysis based only on radar data. Therefore, in this work, the data of multiple radar sensors are fused, and a grid-based object tracking and mapping method is applied. Subsequently, the clustering of dynamic areas provides high-level object information. A lidar-based method is also developed for comparison. The approach is evaluated qualitatively and quantitatively with real-world data from a moving vehicle in urban environments. The evaluation illustrates the advantages of the radar-based dynamic occupancy grid map, considering different comparison metrics.
