Chris McIntosh

Multi-Task Learning for Integrated Automated Contouring and Voxel-Based Dose Prediction in Radiotherapy

Nov 27, 2024

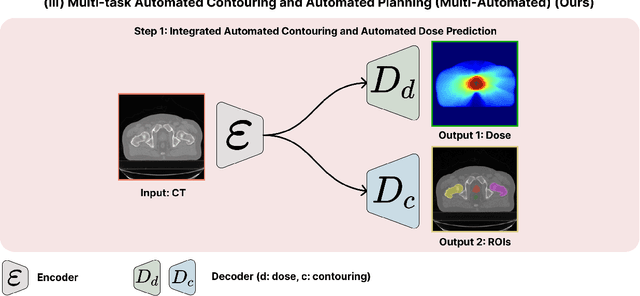

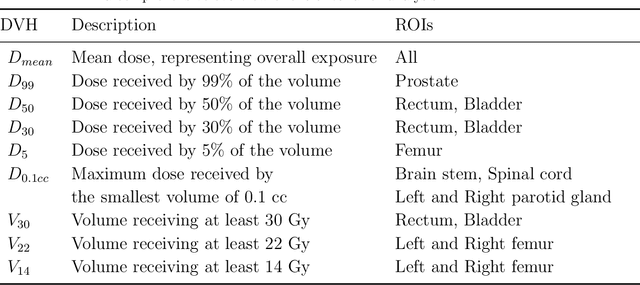

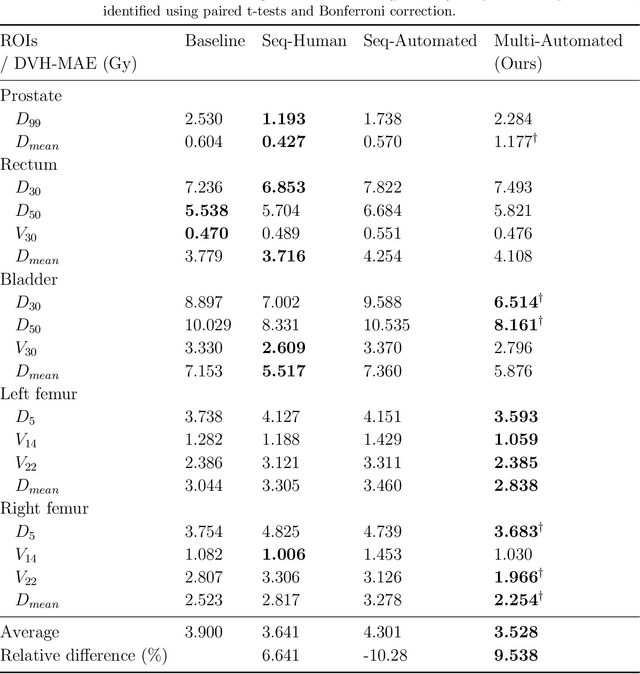

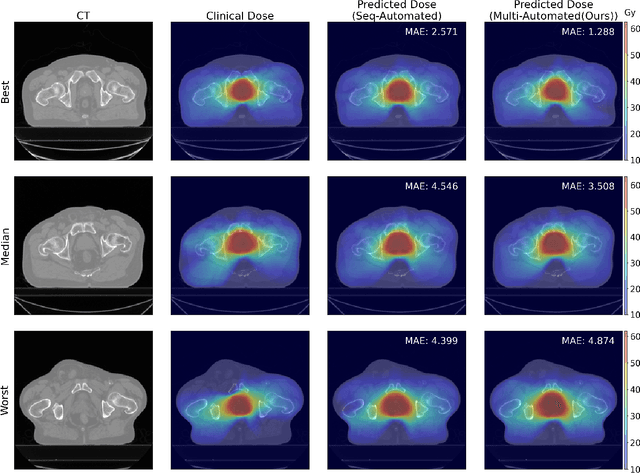

Abstract:Deep learning-based automated contouring and treatment planning has been proven to improve the efficiency and accuracy of radiotherapy. However, the conventional radiotherapy treatment planning process treats automated contouring and treatment planning as separate tasks. Moreover, in deep learning (DL), the contouring and dose prediction tasks for automated treatment planning are done independently. In this study, we applied the multi-task learning (MTL) approach to seamlessly integrate automated contouring and voxel-based dose prediction tasks, as MTL can leverage common information between the two tasks and increase the efficiency of the automated tasks. We developed our MTL framework using two datasets: an in-house prostate cancer dataset and the publicly available head and neck cancer dataset, OpenKBP. Compared to the sequential DL contouring and treatment planning tasks, our proposed method using MTL improved the mean absolute difference of dose volume histogram metrics of prostate and head and neck sites by 19.82% and 16.33%, respectively. Our MTL model for automated contouring and dose prediction tasks demonstrated enhanced dose prediction performance while maintaining or sometimes even improving the contouring accuracy. Compared to the baseline automated contouring model with Dice score coefficients of 0.818 for prostate and 0.674 for head and neck datasets, our MTL approach achieved average scores of 0.824 and 0.716 for these datasets, respectively. Our study highlights the potential of the proposed automated contouring and planning using MTL to support the development of efficient and accurate automated treatment planning for radiotherapy.
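The contouring accuracy quoted in this abstract is a Dice score coefficient, which measures the overlap between a predicted contour and a reference contour. The snippet below is a minimal sketch of the standard definition on flattened binary masks. The function name, the toy masks, and the empty-mask convention are illustrative assumptions, not taken from the paper.

```python
def dice_coefficient(pred, truth):
    """Dice = 2|A ∩ B| / (|A| + |B|) for two flat binary masks of equal length."""
    if len(pred) != len(truth):
        raise ValueError("masks must have the same number of voxels")
    # Voxels labelled foreground in both masks.
    intersection = sum(1 for p, t in zip(pred, truth) if p and t)
    # Total foreground voxels across the two masks.
    total = sum(1 for p in pred if p) + sum(1 for t in truth if t)
    # Assumed convention: two empty masks count as perfect agreement.
    return 1.0 if total == 0 else 2.0 * intersection / total


# Toy example (hypothetical data): 3 predicted voxels, 3 reference voxels, 2 shared.
pred_mask = [0, 1, 1, 1, 0, 0]
true_mask = [0, 1, 1, 0, 1, 0]
print(dice_coefficient(pred_mask, true_mask))
```

A score of 1.0 means the two contours coincide exactly and 0.0 means they do not overlap at all.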

MEDBind: Unifying Language and Multimodal Medical Data Embeddings

Mar 20, 2024

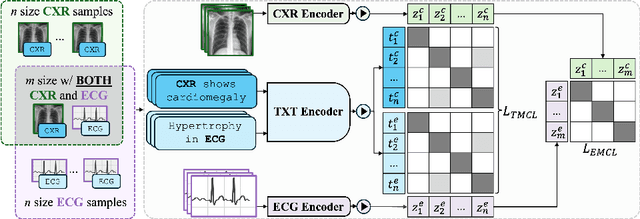

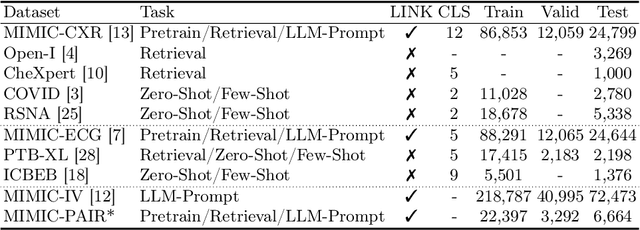

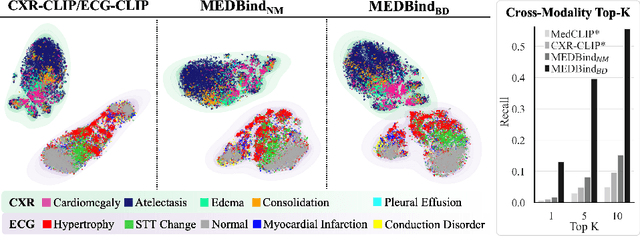

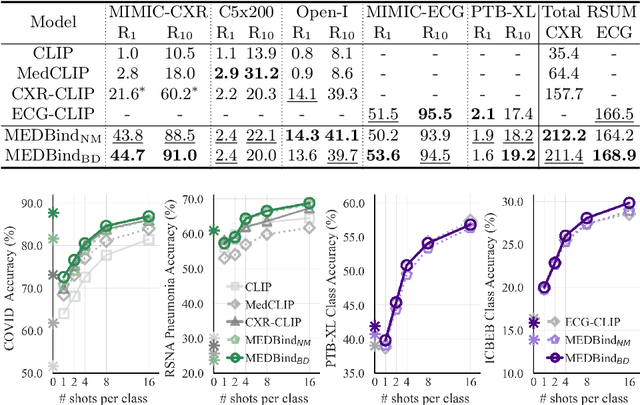

Abstract:Medical vision-language pretraining models (VLPM) have achieved remarkable progress in fusing chest X-rays (CXR) with clinical texts, introducing image-text data binding approaches that enable zero-shot learning and downstream clinical tasks. However, the current landscape lacks the holistic integration of additional medical modalities, such as electrocardiograms (ECG). We present MEDBind (Medical Electronic patient recorD), which learns joint embeddings across CXR, ECG, and medical text. Using text data as the central anchor, MEDBind features tri-modality binding, delivering competitive performance in top-K retrieval, zero-shot, and few-shot benchmarks against established VLPM, and the ability for CXR-to-ECG zero-shot classification and retrieval. This seamless integration is achieved through a combination of contrastive loss on modality-text pairs with our proposed contrastive loss function, Edge-Modality Contrastive Loss, fostering a cohesive embedding space for CXR, ECG, and text. Finally, we demonstrate that MEDBind can improve downstream tasks by directly integrating CXR and ECG embeddings into a large-language model for multimodal prompt tuning.
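The "contrastive loss on modality-text pairs" mentioned in this abstract can be illustrated with the widely used symmetric InfoNCE (CLIP-style) loss. The snippet below is a minimal sketch of that generic loss only. It is not the Edge-Modality Contrastive Loss proposed in the paper, and the function names, temperature value, and toy embeddings are assumptions made for illustration.

```python
import math


def normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def info_nce(anchors, targets, temperature=0.07):
    """Mean cross-entropy of matching anchors[i] to targets[i] among all targets."""
    a = [normalize(v) for v in anchors]
    t = [normalize(v) for v in targets]
    loss = 0.0
    for i, ai in enumerate(a):
        logits = [sum(x * y for x, y in zip(ai, tj)) / temperature for tj in t]
        # Numerically stable log-sum-exp over the row of logits.
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
        loss += log_sum_exp - logits[i]
    return loss / len(a)


def symmetric_contrastive_loss(modality_emb, text_emb, temperature=0.07):
    """Average of the modality-to-text and text-to-modality InfoNCE terms."""
    return 0.5 * (info_nce(modality_emb, text_emb, temperature)
                  + info_nce(text_emb, modality_emb, temperature))


# Hypothetical 2-D embeddings: each image row is paired with the text row at the same index.
image_emb = [[1.0, 0.0], [0.0, 1.0]]
text_emb = [[1.0, 0.1], [0.1, 1.0]]
aligned = symmetric_contrastive_loss(image_emb, text_emb)
shuffled = symmetric_contrastive_loss(image_emb, text_emb[::-1])
print(aligned < shuffled)
```

With text as the shared anchor, a loss of this kind would be applied once to CXR-text pairs and once to ECG-text pairs, which pulls all three modalities toward a common embedding space.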

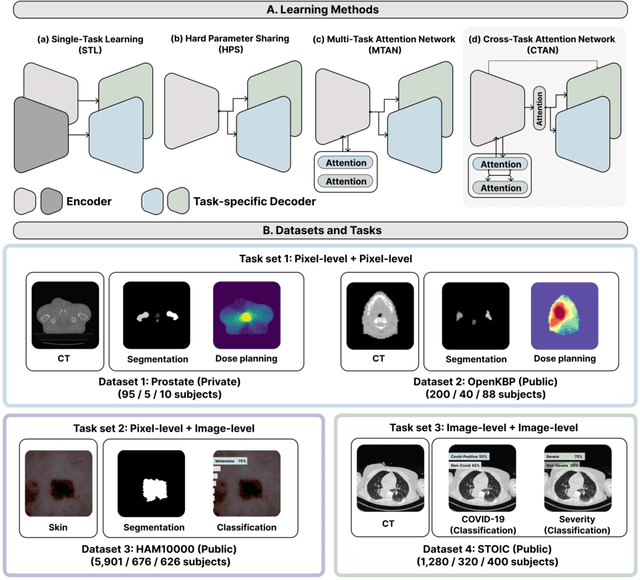

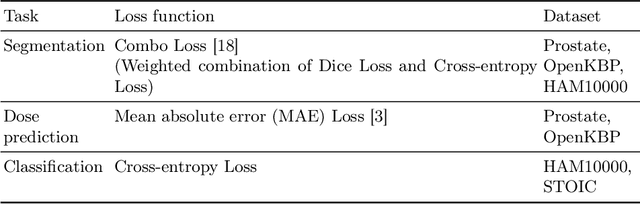

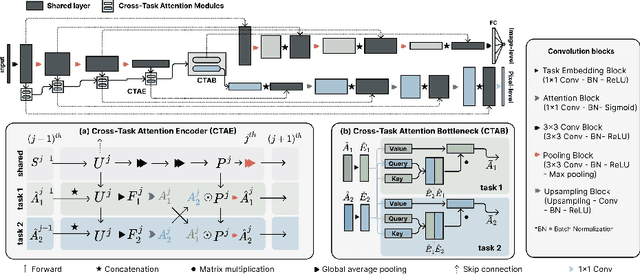

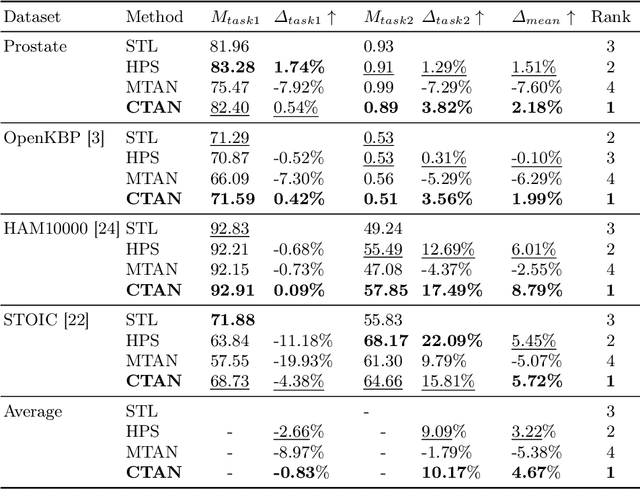

Cross-Task Attention Network: Improving Multi-Task Learning for Medical Imaging Applications

Sep 07, 2023

Abstract:Multi-task learning (MTL) is a powerful approach in deep learning that leverages the information from multiple tasks during training to improve model performance. In medical imaging, MTL has shown great potential to solve various tasks. However, existing MTL architectures in medical imaging are limited in sharing information across tasks, reducing the potential performance improvements of MTL. In this study, we introduce a novel attention-based MTL framework to better leverage inter-task interactions for various tasks from pixel-level to image-level predictions. Specifically, we propose a Cross-Task Attention Network (CTAN) which utilizes cross-task attention mechanisms to incorporate information by interacting across tasks. We validated CTAN on four medical imaging datasets that span different domains and tasks including: radiation treatment planning prediction using planning CT images of two different target cancers (Prostate, OpenKBP); pigmented skin lesion segmentation and diagnosis using dermatoscopic images (HAM10000); and COVID-19 diagnosis and severity prediction using chest CT scans (STOIC). Our study demonstrates the effectiveness of CTAN in improving the accuracy of medical imaging tasks. Compared to standard single-task learning (STL), CTAN demonstrated a 4.67% improvement in performance and outperformed both widely used MTL baselines: hard parameter sharing (HPS) with an average performance improvement of 3.22%; and multi-task attention network (MTAN) with a relative decrease of 5.38%. These findings highlight the significance of our proposed MTL framework in solving medical imaging tasks and its potential to improve their accuracy across domains.

A Comprehensive Study of Radiomics-based Machine Learning for Fibrosis Detection

Nov 25, 2022

Abstract:Objectives: Early detection of liver fibrosis can help cure the disease or prevent disease progression. We perform a comprehensive study of machine learning-based fibrosis detection in CT images using radiomic features to develop a non-invasive approach to fibrosis detection. Methods: Two sets of radiomic features were extracted from spherical ROIs in CT images of 182 patients who underwent simultaneous liver biopsy and CT examinations, one set corresponding to biopsy locations and another distant from biopsy locations. Combinations of contrast, normalization, machine learning model, feature selection method, bin width, and kernel radius were investigated, each of which was trained and evaluated 100 times with randomized development and test cohorts. The best settings were evaluated based on their mean test AUC and the best features were determined based on their frequency among the best settings. Results: Logistic regression models with NC images normalized using Gamma correction with $\gamma = 1.5$ performed best for fibrosis detection. Boruta was the best radiomic feature selection method. Training a model using these optimal settings and features consisting of first order energy, first order kurtosis, and first order skewness resulted in a model that achieved mean test AUCs of 0.7549 and 0.7166 on biopsy-based and non-biopsy ROIs, respectively, outperforming a baseline and the best models found during the initial study. Conclusions: Logistic regression models trained on radiomic features from NC images normalized using Gamma correction with $\gamma = 1.5$ that underwent Boruta feature selection are effective for liver fibrosis detection. Energy, kurtosis, and skewness are particularly effective features for fibrosis detection.
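The best-performing setup in this abstract combines Gamma correction ($\gamma = 1.5$) with three first-order features: energy, skewness, and kurtosis. The snippet below is a minimal sketch of how those quantities could be computed from the intensities inside an ROI. Several details are assumptions rather than facts from the paper: min-max scaling before applying the exponent, using $\gamma$ directly as the exponent (some conventions use $1/\gamma$), and the moment-based feature definitions (non-excess kurtosis, population moments).

```python
def gamma_correct(values, gamma=1.5):
    """Min-max scale intensities to [0, 1], then raise them to the power gamma (assumed convention)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant ROI: no contrast to rescale
    return [((v - lo) / (hi - lo)) ** gamma for v in values]


def first_order_features(values):
    """Energy, skewness, and kurtosis from population central moments."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return {
        "energy": sum(v * v for v in values),            # sum of squared intensities
        "skewness": m3 / m2 ** 1.5 if m2 > 0 else 0.0,   # asymmetry of the histogram
        "kurtosis": m4 / m2 ** 2 if m2 > 0 else 0.0,     # peakedness (3.0 for a Gaussian)
    }


# Hypothetical ROI intensities, for illustration only.
roi = [40.0, 55.0, 60.0, 62.0, 90.0]
features = first_order_features(gamma_correct(roi, gamma=1.5))
print(features)
```

The resulting three-element feature vector is the kind of input a logistic regression classifier would consume in a pipeline like the one described.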

Domain Adaptation of Automated Treatment Planning from Computed Tomography to Magnetic Resonance

Mar 07, 2022

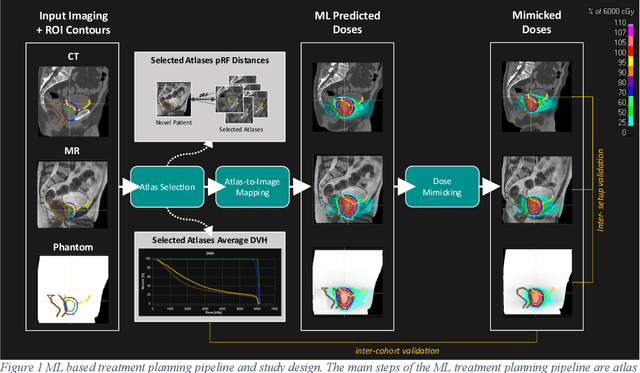

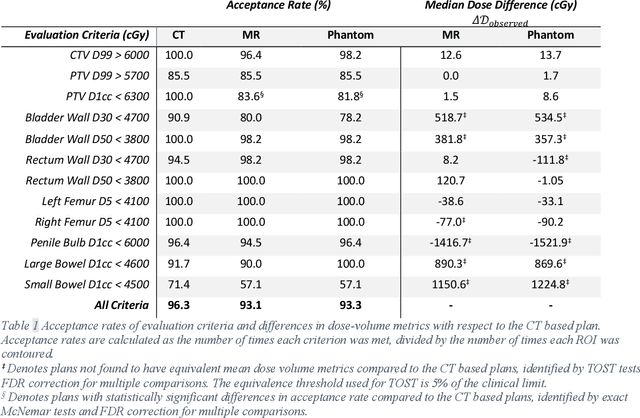

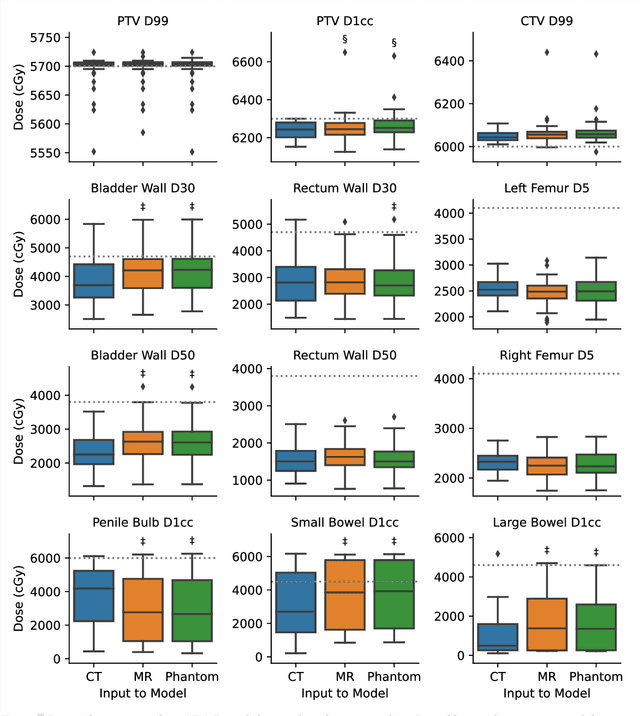

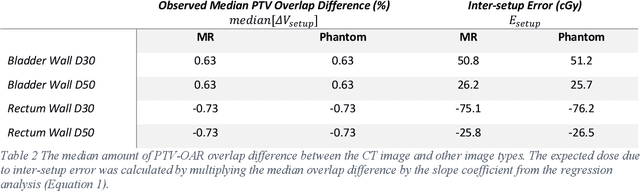

Abstract:Objective: Machine learning (ML) based radiation treatment (RT) planning addresses the iterative and time-consuming nature of conventional inverse planning. Given the rising importance of Magnetic resonance (MR) only treatment planning workflows, we sought to determine if an ML based treatment planning model, trained on computed tomography (CT) imaging, could be applied to MR through domain adaptation. Methods: In this study, MR and CT imaging was collected from 55 prostate cancer patients treated on an MR linear accelerator. ML based plans were generated for each patient on both CT and MR imaging using a commercially available model in RayStation 8B. The dose distributions and acceptance rates of MR and CT based plans were compared using institutional dose-volume evaluation criteria. The dosimetric differences between MR and CT plans were further decomposed into setup, cohort, and imaging domain components. Results: MR plans were highly acceptable, meeting 93.1% of all evaluation criteria compared to 96.3% of CT plans, with dose equivalence for all evaluation criteria except for the bladder wall, penile bulb, small and large bowel, and one rectum wall criteria (p<0.05). Changing the input imaging modality (domain component) only accounted for about half of the dosimetric differences observed between MR and CT plans. Anatomical differences between the ML training set and the MR linac cohort (cohort component) were also a significant contributor. Significance: We were able to create highly acceptable MR based treatment plans using a CT-trained ML model for treatment planning, although clinically significant dose deviations from the CT based plans were observed.

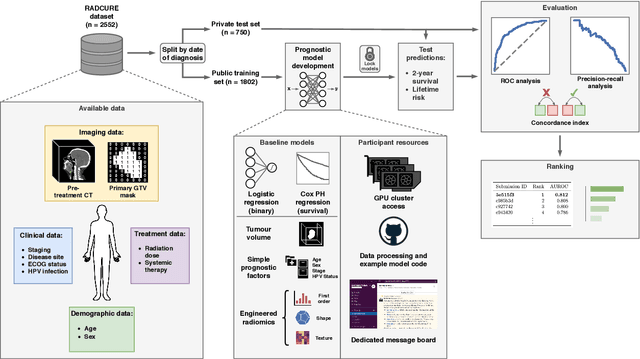

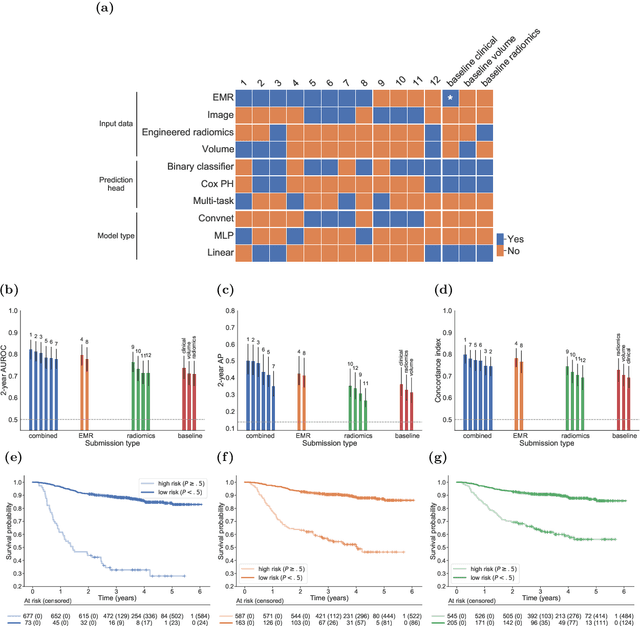

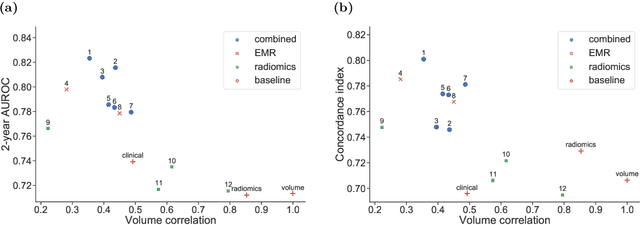

A Machine Learning Challenge for Prognostic Modelling in Head and Neck Cancer Using Multi-modal Data

Jan 28, 2021

Abstract:Accurate prognosis for an individual patient is a key component of precision oncology. Recent advances in machine learning have enabled the development of models using a wider range of data, including imaging. Radiomics aims to extract quantitative predictive and prognostic biomarkers from routine medical imaging, but evidence for computed tomography radiomics for prognosis remains inconclusive. We have conducted an institutional machine learning challenge to develop an accurate model for overall survival prediction in head and neck cancer using clinical data extracted from electronic medical records and pre-treatment radiological images, as well as to evaluate the true added benefit of radiomics for head and neck cancer prognosis. Using a large, retrospective dataset of 2,552 patients and a rigorous evaluation framework, we compared 12 different submissions using imaging and clinical data, separately or in combination. The winning approach used non-linear, multitask learning on clinical data and tumour volume, achieving high prognostic accuracy for 2-year and lifetime survival prediction and outperforming models relying on clinical data only, engineered radiomics and deep learning. Combining all submissions in an ensemble model resulted in improved accuracy, with the highest gain from an image-based deep learning model. Our results show the potential of machine learning and simple, informative prognostic factors in combination with large datasets as a tool to guide personalized cancer care.
