Chloe Qinyu Zhu

Tailoring Vaccine Messaging with Common-Ground Opinions

May 17, 2024

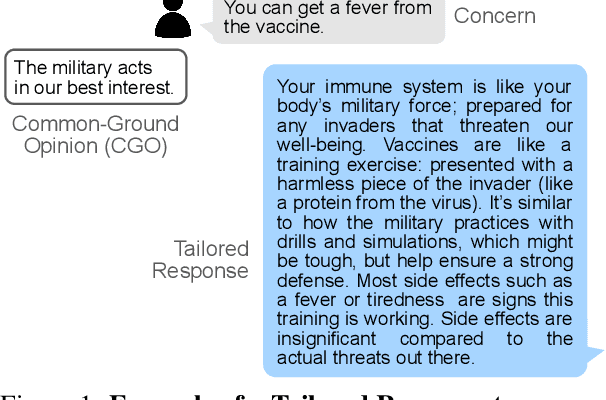

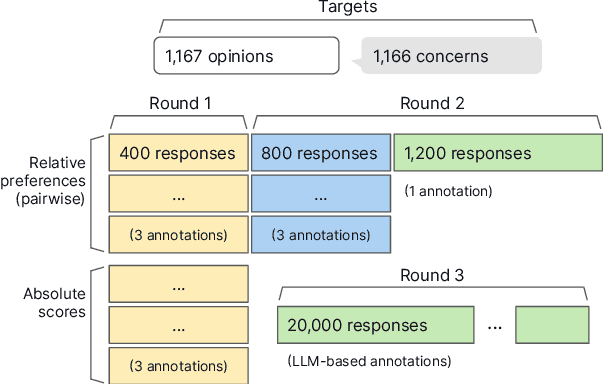

Abstract: One way to personalize chatbot interactions is by establishing common ground with the intended reader. A domain where establishing mutual understanding could be particularly impactful is vaccine concerns and misinformation. Vaccine interventions are forms of messaging that aim to answer concerns expressed about vaccination. Tailoring responses in this domain is difficult, since opinions often have seemingly little ideological overlap. We define the task of tailoring vaccine interventions to a Common-Ground Opinion (CGO). Tailoring a response to a CGO involves meaningfully improving the answer by relating it to an opinion or belief the reader holds. In this paper we introduce TAILOR-CGO, a dataset for evaluating how well responses are tailored to provided CGOs. We benchmark several major LLMs on this task and find that GPT-4-Turbo performs significantly better than the others. We also build automatic evaluation metrics, including an efficient and accurate BERT model that outperforms finetuned LLMs; investigate how to successfully tailor vaccine messaging to CGOs; and provide actionable recommendations from this investigation. Code and model weights: https://github.com/rickardstureborg/tailor-cgo Dataset: https://huggingface.co/datasets/DukeNLP/tailor-cgo

Hierarchical Multi-Label Classification of Online Vaccine Concerns

Feb 01, 2024

Abstract: Vaccine concerns are an ever-evolving target, and can shift quickly, as seen during the COVID-19 pandemic. Identifying longitudinal trends in vaccine concerns and misinformation might inform the healthcare space by helping public health efforts strategically allocate resources or information campaigns. We explore the task of detecting vaccine concerns in online discourse using large language models (LLMs) in a zero-shot setting, without the need for expensive training datasets. Since real-time monitoring of online sources requires large-scale inference, we explore cost-accuracy trade-offs of different prompting strategies and offer concrete takeaways that may inform system design choices for current applications. An analysis of different prompting strategies reveals that classifying the concerns over multiple passes through the LLM, each consisting of a boolean question asking whether the text mentions a given vaccine concern, works best. Our results indicate that GPT-4 can strongly outperform crowdworkers when both are compared against ground-truth annotations provided by experts on the recently introduced VaxConcerns dataset, achieving an overall F1 score of 78.7%.
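The multi-pass strategy described in this abstract can be illustrated with a minimal sketch: one boolean question per concern label, with the positive answers collected into a multi-label prediction. Everything below is an assumption for illustration only. The label names, the keyword cues, and the `keyword_ask` stand-in (which replaces an actual LLM call) are hypothetical and are not taken from the paper or from the VaxConcerns taxonomy.

```python
from typing import Callable, Dict, List

# Hypothetical concern labels with keyword cues (NOT the VaxConcerns taxonomy).
CONCERN_KEYWORDS: Dict[str, List[str]] = {
    "side effects": ["side effect", "reaction"],
    "ineffective": ["doesn't work", "ineffective"],
    "distrust": ["big pharma", "cover-up"],
}


def keyword_ask(text: str, concern: str) -> bool:
    """Hypothetical stand-in for one yes/no LLM query about a single concern.

    A real system would send a prompt such as "Does this text mention the
    concern X? Answer yes or no." to a model and parse the reply.
    """
    lowered = text.lower()
    return any(cue in lowered for cue in CONCERN_KEYWORDS[concern])


def classify(text: str, ask: Callable[[str, str], bool]) -> List[str]:
    """Run one boolean pass per concern and collect the positive labels."""
    return [concern for concern in CONCERN_KEYWORDS if ask(text, concern)]


if __name__ == "__main__":
    post = "I heard the side effects are awful and big pharma hides it."
    print(classify(post, keyword_ask))
```

Because each label gets its own pass, the number of model calls grows with the number of labels, which is the kind of cost-accuracy trade-off the abstract refers to.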

Fast and Interpretable Mortality Risk Scores for Critical Care Patients

Nov 21, 2023

Abstract: Prediction of mortality in intensive care unit (ICU) patients is an important task in critical care medicine. Prior work in creating mortality risk models falls into two major categories: domain-expert-created scoring systems, and black box machine learning (ML) models. Both of these have disadvantages: black box models are unacceptable for use in hospitals, whereas manual creation of models (including hand-tuning of logistic regression parameters) relies on humans to perform high-dimensional constrained optimization, which leads to a loss in performance. In this work, we bridge the gap between accurate black box models and hand-tuned interpretable models. We build on modern interpretable ML techniques to design accurate and interpretable mortality risk scores. We leverage the largest existing public ICU monitoring datasets, namely the MIMIC III and eICU datasets. By evaluating risk across medical centers, we are able to study generalization across domains. In order to customize our risk score models, we develop a new algorithm, GroupFasterRisk, which has several important benefits: (1) it uses a hard sparsity constraint, allowing users to directly control the number of features; (2) it incorporates group sparsity to allow more cohesive models; (3) it allows monotonicity correction so that models can incorporate domain knowledge; (4) it produces many equally good models at once, which allows domain experts to choose among them. GroupFasterRisk creates its risk scores within hours, even on the large datasets we study here. GroupFasterRisk's risk scores perform better than risk scores currently used in hospitals, and have similar prediction performance to black box ML models (despite being much sparser). Because GroupFasterRisk produces a variety of risk scores and handles constraints, it allows design flexibility, which is the key enabler of practical and trustworthy model creation.
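To make the idea of a sparse, interpretable risk score concrete, here is a minimal sketch of a points-based scorecard. It is not GroupFasterRisk and not a model from the paper: it assumes the common form in which a few thresholded features each contribute integer points and the total is mapped to a probability with a logistic function. The feature names, thresholds, point values, and intercept are all hypothetical.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical scorecard: (feature name, threshold, integer points).
# Only three features are used, mimicking a hard sparsity limit.
SCORECARD: List[Tuple[str, float, int]] = [
    ("age", 65.0, 2),
    ("heart_rate", 110.0, 1),
    ("lactate", 4.0, 3),
]
INTERCEPT = -4.0  # hypothetical offset applied before the logistic link


def total_points(patient: Dict[str, float]) -> int:
    """Sum the points of every rule whose threshold the patient meets."""
    return sum(points for name, thr, points in SCORECARD if patient[name] >= thr)


def mortality_risk(patient: Dict[str, float]) -> float:
    """Map the integer score to a probability with the logistic function."""
    return 1.0 / (1.0 + math.exp(-(INTERCEPT + total_points(patient))))


if __name__ == "__main__":
    patient = {"age": 70.0, "heart_rate": 100.0, "lactate": 5.0}
    print(total_points(patient), round(mortality_risk(patient), 3))
```

In this toy scorecard all points are non-negative, so the predicted risk never decreases when a measurement crosses its threshold; that is one simple way a monotonicity requirement from domain knowledge could show up in such a model.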

Do Not Harm Protected Groups in Debiasing Language Representation Models

Oct 27, 2023

Abstract: Language Representation Models (LRMs) trained with real-world data may capture and exacerbate undesired bias and cause unfair treatment of people in various demographic groups. Several techniques have been investigated for applying interventions to LRMs to remove bias in benchmark evaluations on, for example, word embeddings. However, the negative side effects of debiasing interventions are usually not revealed in the downstream tasks. We propose xGAP-DEBIAS, a set of evaluations for assessing the fairness of debiasing. In this work, we examine four debiasing techniques on a real-world text classification task and show that reducing bias comes at the cost of degrading performance for all demographic groups, including those the debiasing techniques aim to protect. We advocate that a debiasing technique should have good downstream performance with the constraint of ensuring no harm to the protected group.
